Pinned Tweet

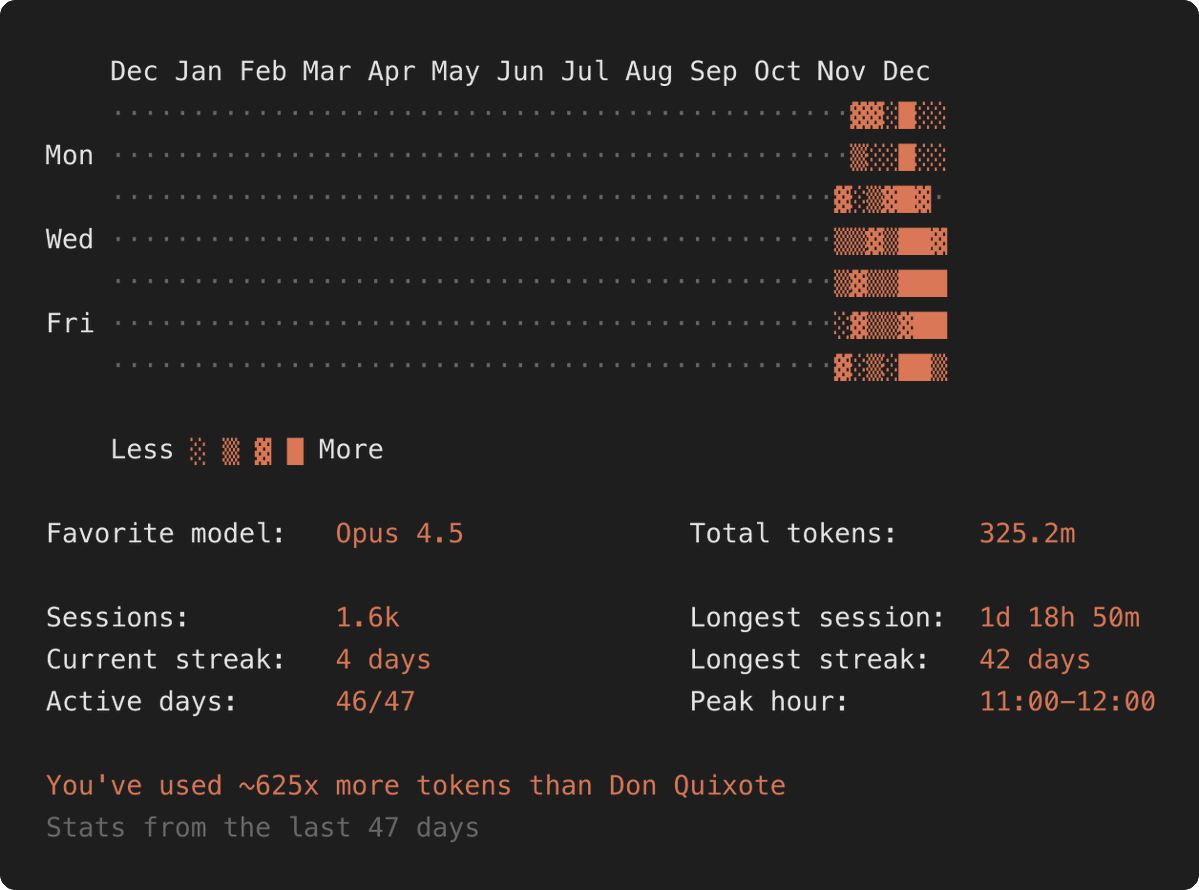

I wrote a blog post about how I use Claude Code (and other models) in my work: invicti.com/blog/security-…

harisec

3.9K posts

@har1sec

Interested in web security, bug bounties, machine learning and investing. SolidGoldMagikarp. Orson Kovacs.

Is H1 Using OUR Reports To Train LLMs?

@ahacker1_h1 @Radiowebcc Wow… I thought h1 said they were not using our data to train their AI model. I’m going to ask h1 to clarify 🧐