Harbor Framework

85 posts

You don't need a new IDE. You need a new ISE. Integrated Spec Environment. Spec is the new code. Ship the right spec and your job is basically done.

@xeophon Me before I start looking at harbor rollouts

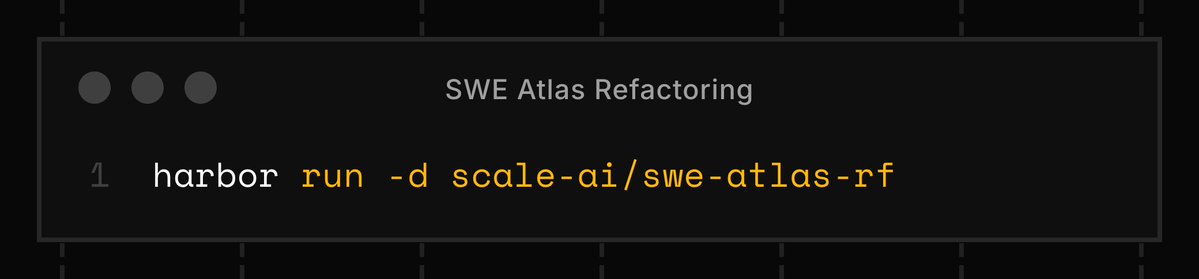

Today we’re releasing Refactoring, the final leaderboard of our SWE Atlas suite. This new leaderboard is the ultimate test of an agent's ability to restructure code without breaking the system. Claude Opus 4.7 with Claude Code takes the top spot🥇

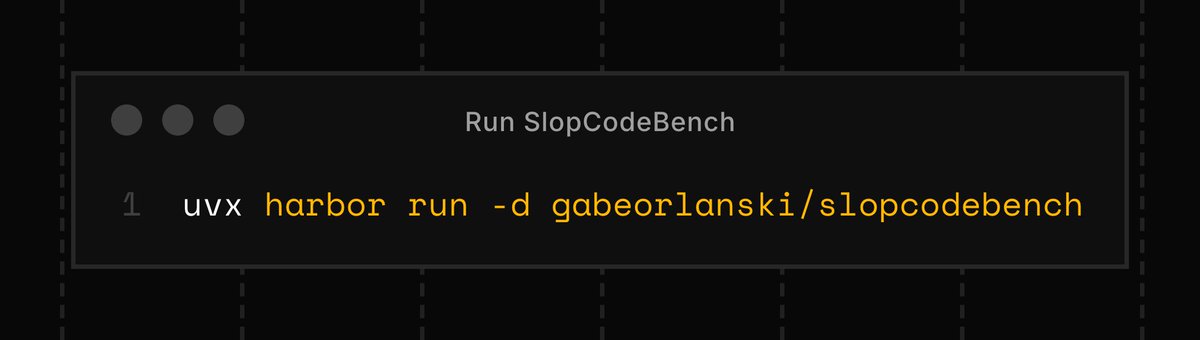

Very excited to announce the v1.0 release of SlopCodeBench:
- Doubling the size of the dataset
- @harborframework support
- scb-check: a CLI that flags slop anti-patterns
- Way more model results

scbench.ai github.com/SprocketLab/sl… 🧵

Introducing FrontierSWE, an ultra-long horizon coding benchmark. We test agents on some of the hardest technical tasks like optimizing a video rendering library or training a model to predict the quantum properties of molecules. Despite having 20 hours, they rarely succeed

No major benchmark is designed for COBOL, Fortran, or Assembly - the languages powering trillions in transactions and infrastructure that must be modernized or risk catastrophic failure. We built Legacy-Bench to measure frontier agents on the code the world actually runs on.

Scaling up an overnight eval run:
- a single GAIA level 2 task
- two contestants: pi as baseline, and another that can do fold/peek operations on its context window
- 100 attempts each on the same task

Should get a decent picture of failure modes. @harborframework ROCKS
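For a run shaped like the one above (two contestants, 100 attempts each on the same task), one way to read the results is to put a confidence interval around each success rate before comparing them. The sketch below uses the Wilson score interval, which is a standard choice for binomial proportions and is not something the post itself mentions. The function name `wilson_interval` and the success counts are hypothetical, chosen only for illustration; they are not results from the run.

```python
import math


def wilson_interval(successes: int, attempts: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a success rate (z=1.96 is roughly 95% confidence)."""
    if attempts <= 0:
        raise ValueError("attempts must be positive")
    if not 0 <= successes <= attempts:
        raise ValueError("successes must be between 0 and attempts")
    p = successes / attempts
    denom = 1 + z * z / attempts
    center = (p + z * z / (2 * attempts)) / denom
    half = z * math.sqrt(p * (1 - p) / attempts + z * z / (4 * attempts * attempts)) / denom
    # Clamp to [0, 1] to absorb floating-point drift at the extremes.
    return max(0.0, center - half), min(1.0, center + half)


# Hypothetical counts, NOT real results: successes out of 100 attempts each.
hypothetical_results = {"pi (baseline)": 38, "fold/peek variant": 52}

for name, wins in hypothetical_results.items():
    low, high = wilson_interval(wins, 100)
    print(f"{name}: {wins}/100, interval [{low:.3f}, {high:.3f}]")
```

With only 100 attempts per contestant the intervals are wide, so overlapping intervals mean the run does not clearly separate the two contestants, even if one of them scored higher.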

Measure how well your agent writes unit tests using SWE-Atlas Test Writing from @scale_AI. SWE-Atlas Test Writing and SWE-Atlas Codebase QnA both ship in the Harbor format and are available on the Harbor registry.

The Harbor registry is getting an upgrade. Now, anyone can publish to the registry to make their dataset available to every Harbor user:

Terminal-Bench 2.0 went from ~25% → 80% in four months and became the standard eval for frontier CLI agents. Now, TB3 is in the works. I talked to @alexgshaw about what happens when model capabilities climb faster than we can measure them. His answer: the benchmark factory (@harborframework), infrastructure to develop hard, representative evals at the pace that the frontier moves. As Alex put it: "we need a thousand times more benchmarks than we have right now."

- 00:23 - How quickly models hill-climbed TB2
- 01:46 - What rapid progress reveals about benchmarks vs. real-world capability
- 03:28 - What made Terminal-Bench stick
- 04:58 - Why the terminal is the right abstraction for agentic AI
- 07:14 - How TB2 maintains task quality at scale
- 09:23 - Managing benchmark integrity in a benchmaxxing world
- 10:47 - Harbor: from experiment to benchmark factory
- 12:19 - What Harbor does that nothing else did
- 14:37 - The invariants: what won't change as agent evals evolve
- 16:55 - The benchmark Alex most wants to see built
- 18:18 - The ideal human-in-the-loop task creation flywheel
- 20:32 - How to contribute to Terminal-Bench 3.0

We started with eval first. Benchmarks are the primary way to get real data before getting users. The principle: we would rather burn tokens than burn customer trust. @harborframework product and team have been amazing — and we topped Terminal Bench 1.0 and Terminal Bench 2.0. We evaluate how well the agent channels the model's power, not the model itself. We improve the harness, not the prompt.

How can we autonomously improve LLM harnesses on problems humans are actively working on? Doing so requires solving a hard, long-horizon credit-assignment problem over all prior code, traces, and scores. Announcing Meta-Harness: a method for optimizing harnesses end-to-end