@henryvzero

bending reality | human in residence | past life @ycombinator @airbnb @harvard

Harvey and Legora are essentially sales organisations that resell tokens. They have hired legions of ex-Big Law juniors and mid-levels as salespeople (“GTM”), along with some ex-partners to wine and dine their former colleagues. They slap on a UI that makes them look different from ChatGPT, but the product differentiation and vertical-specific features are few and far between. You could just as well use both for any white-collar job. Their web apps are basically: 1. A chatbot interface. 2. A projects function where you can upload your files. 3. A tabular review function where you can bulk review documents in a table. 4. Workflows, which are just custom prompts you write for the chatbot or tabular review. I was able to build everything, plus some additional functionality they do not have, like version control, in mikeoss.com in two weeks. I call this the “token reseller theory”. They are like car dealers or real estate agents, but for tokens. The model providers get them to do the selling to crack open the reticent legal market. What happens to H/L now that the model providers want the market for themselves? Does not bode well for them.

McDonald’s is reportedly planning a Subscription Fry service, offering unlimited medium fries for $20 per month.

Introducing Pre-IPO Perpetuals (IPOP). The weeks before an IPO are some of the most consequential in a company’s price history, and historically, the least observable. Private market quotes are stale and gated, and public markets haven’t started trading yet. Peak interest coincides with an absence of prices. We’re introducing IPOP markets on XYZ to change that.

Come join our mission to tilt the world in favor of homeowners. We are growing our operations team in Toronto. opendoor.com/careers/open-p…

Exclusive: The White House opposes a plan from Anthropic to expand access to its powerful artificial-intelligence model Mythos on.wsj.com/4cHiUY5

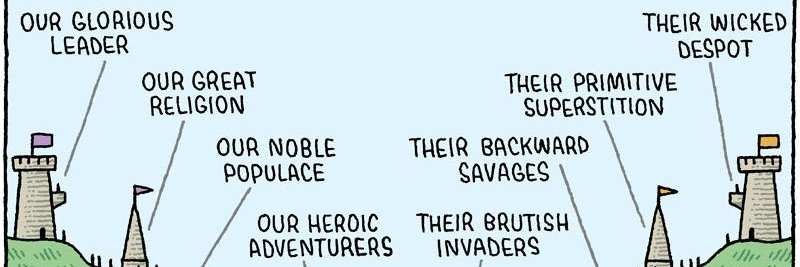

@real_jerseylee @mattyglesias “The labs are running around telling people their product is dangerous and should be taxed and regulated as a ploy to pump up their valuations” is such a perfect demonstration of the thesis of this paper journals.sagepub.com/doi/10.1177/01…

JUST IN: Allbirds $BIRD stock rises over 420% after announcing shift from shoes to AI.

Unpopular opinion: If you think tokens burned is a productivity metric, no one should take you seriously. Imagine you are a top 0.0001% writer and they are only counting the tokens you produce.

Six months ago, there was a lot of focus on the idea that there would be a massive glut of unused computing power, which would cause a recession as AI use plateaued. The "compute bubble" belief was absolutely everywhere. The degree to which this was wrong deserves some notice.