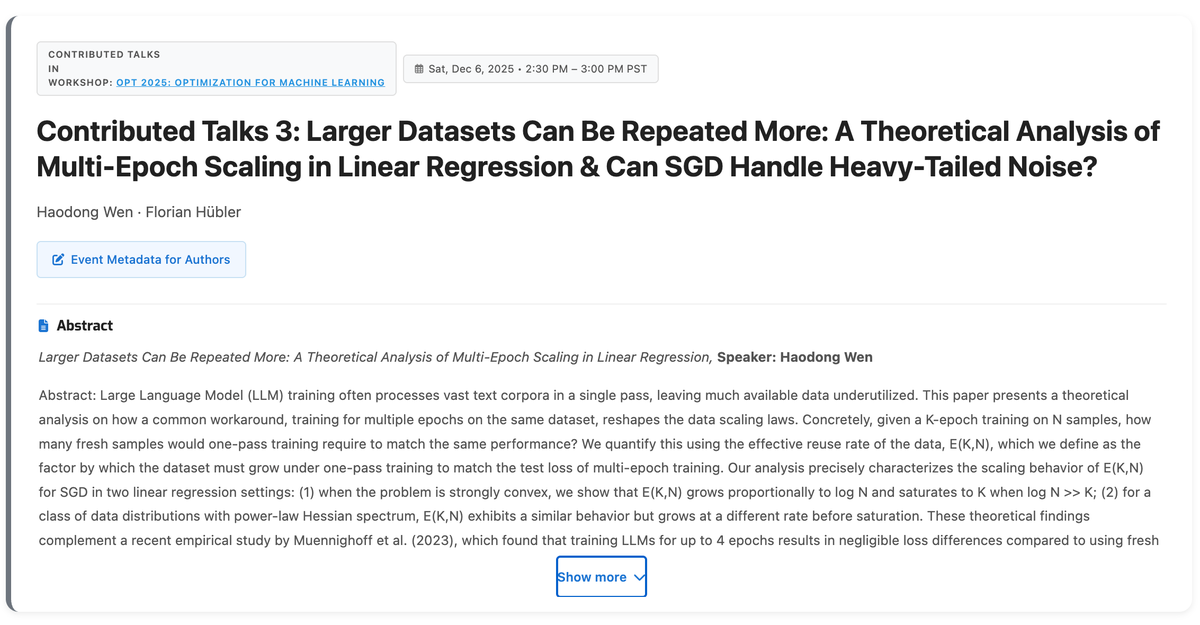

Haodong Wen


✈️ Heading to ICLR 🇧🇷 Apr 22–27. Come to our oral on Fri, Apr 24 (10:30 AM–12:00 PM, Room 202 A/B) or find me at our poster (3:15 PM–5:45 PM, P3-#521). We study why LR decay can hurt curriculum-based LLM pretraining — and how to fix it. Happy to chat!

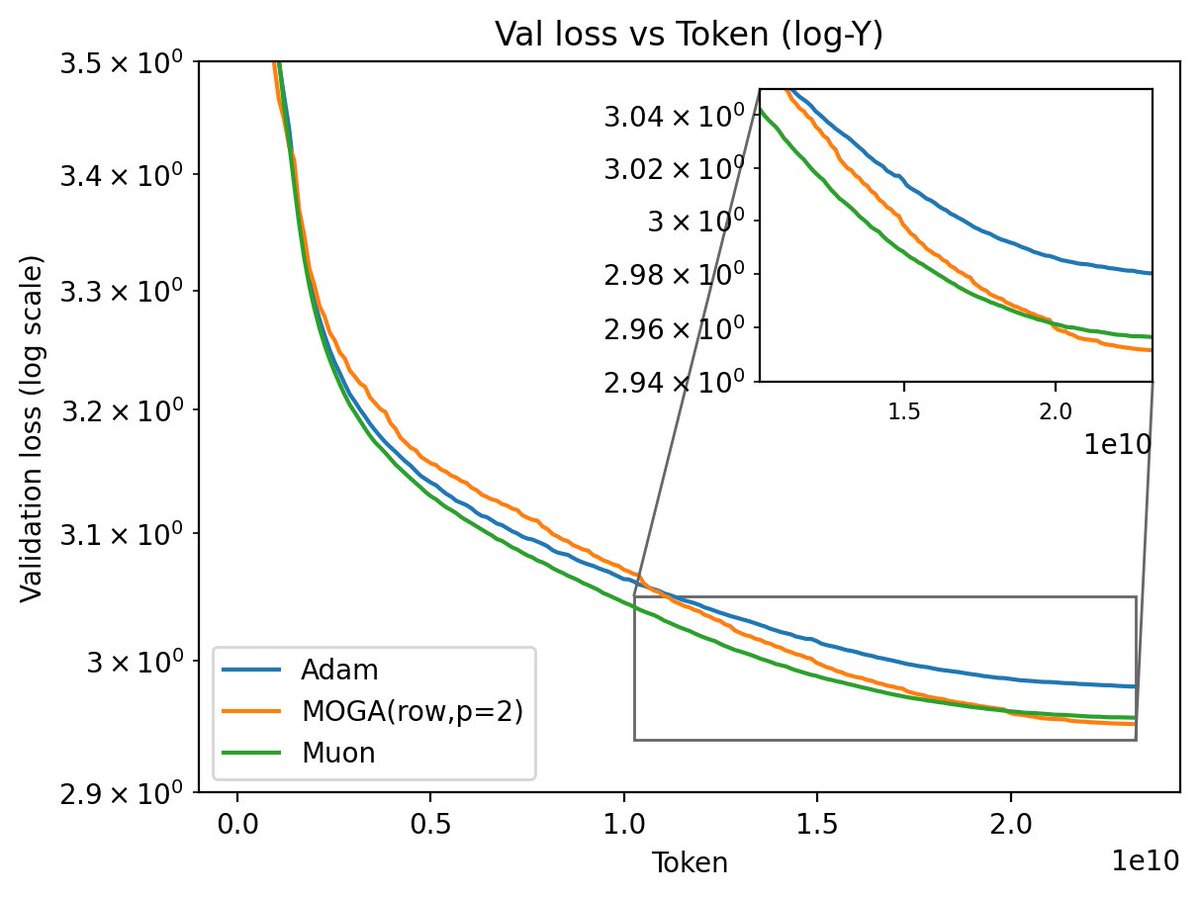

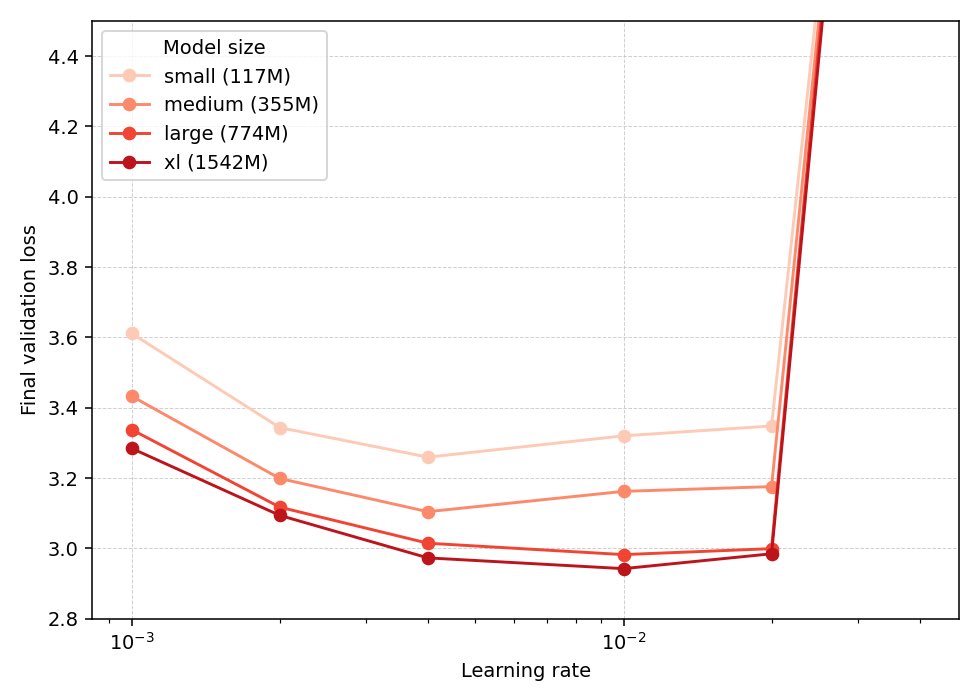

(1/n) Introducing Hyperball — an optimizer wrapper that keeps weight & update norm constant and lets you control the effective (angular) step size directly. Result: sustained speedups across scales + strong hyperparameter transfer.
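For readers who want a concrete picture, here is a minimal sketch of what a norm-preserving step could look like. It is an illustration under assumptions, not the Hyperball algorithm itself: the function name `hyperball_step`, the choice to rescale the update to the weight norm, and the final projection back onto the sphere are all hypothetical choices made for this example.

```python
import math


def norm(v):
    """Euclidean norm of a list of floats."""
    return math.sqrt(sum(x * x for x in v))


def hyperball_step(w, update, lr):
    """One hypothetical norm-preserving step (illustrative only).

    Assumptions, not taken from the post:
      * the update is rescaled to have the same norm as the weights,
      * the weights are projected back to their original norm afterwards.
    `update` stands in for whatever direction a base optimizer produces.
    """
    radius = norm(w)
    u_norm = norm(update)
    if radius == 0.0 or u_norm == 0.0:
        return list(w)  # nothing sensible to do; leave weights unchanged

    # Rescale the update so its norm matches the weight norm.
    scaled = [radius * u / u_norm for u in update]

    # Take the step, then project back onto the sphere of the same radius.
    moved = [wi - lr * ui for wi, ui in zip(w, scaled)]
    m_norm = norm(moved)
    if m_norm == 0.0:
        return list(w)
    return [radius * m / m_norm for m in moved]


if __name__ == "__main__":
    w = [3.0, 4.0]
    update = [4.0, -3.0]  # orthogonal to w in this toy example
    w_new = hyperball_step(w, update, lr=0.1)
    cos = sum(a * b for a, b in zip(w, w_new)) / (norm(w) * norm(w_new))
    print("norm before:", norm(w), "norm after:", norm(w_new))
    print("angle moved (rad):", math.acos(min(1.0, cos)))
```

In this sketch, when the update is orthogonal to the weights, the angle moved is `atan(lr)` regardless of the raw update magnitude, which is one way `lr` can act as a direct angular step size.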

Adam prefers a different minimizer than SGD (exemplified below), but how? 🤔 Our NeurIPS 2025 paper: based on our Slow SDE approximation of Adam, we show that under label noise Adam implicitly minimizes tr(Diag(H)^½), whereas prior work showed that SGD minimizes tr(H). 🧵1/n

🚀 Our NeurIPS 2025 paper: An SDE-based mathematical characterization of how adaptive gradient methods (e.g., Adam, Shampoo) implicitly reduce the sharpness of the local loss landscape. Under label noise, it is known that SGD implicitly minimizes tr(H). We show that Adam implicitly minimizes tr(Diag(H)^½), a distinctive form of sharpness! In sparse linear regression with diagonal nets, this difference in implicit bias enables Adam to recover the sparse ground truth with far fewer samples than SGD. 👥 Work from our group at Tsinghua, with undergrad intern Xinghan Li @XinghanLi66 and first-year PhD student Haodong Wen @herrywen1.
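To make the two sharpness measures concrete, here is a small sketch that evaluates both on toy Hessians. The matrices and the function names `trace_sharpness` and `diag_sqrt_sharpness` are made up for illustration; the only thing taken from the post is the pair of formulas, tr(H) and tr(Diag(H)^½), the latter being the sum of the square roots of the diagonal entries of H.

```python
import math


def trace_sharpness(H):
    """tr(H): sum of the diagonal entries of the Hessian."""
    return sum(H[i][i] for i in range(len(H)))


def diag_sqrt_sharpness(H):
    """tr(Diag(H)^(1/2)): sum of square roots of the diagonal entries.

    Assumes the diagonal is non-negative, as it is for a positive
    semidefinite Hessian at a minimizer. Off-diagonal entries are ignored.
    """
    return sum(math.sqrt(H[i][i]) for i in range(len(H)))


if __name__ == "__main__":
    # Two toy Hessians with the same trace (hypothetical values).
    H_concentrated = [[4.0, 0.0],
                      [0.0, 0.0]]  # curvature in a single coordinate
    H_spread = [[2.0, 1.0],
                [1.0, 2.0]]        # curvature spread over both coordinates

    for name, H in [("concentrated", H_concentrated), ("spread", H_spread)]:
        print(name,
              "tr(H) =", trace_sharpness(H),
              "tr(Diag(H)^1/2) =", diag_sqrt_sharpness(H))
```

Both toy matrices have the same tr(H), so that measure cannot tell them apart, while tr(Diag(H)^½) is smaller for the one whose curvature is concentrated in a single coordinate. This only shows that the two measures can rank minimizers differently; it says nothing on its own about the training dynamics analyzed in the paper.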