tdep

930 posts

@heytdep

founding eng/research new thing @tplus_cx, co-founder @xyclooLabs, trusted (?) execution | 🦀

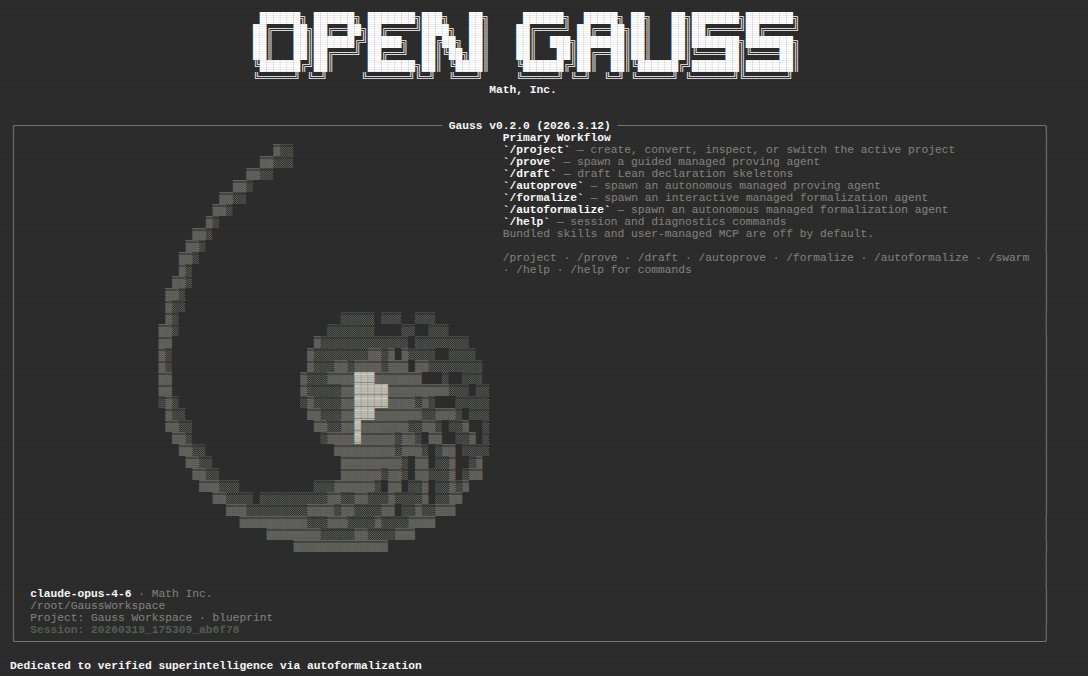

With our benchmark, we set a new standard for assessing autoformalization with specification-based evaluation. Agents are given an expert-checked Lean statement and, like mathematicians, are free to construct their own definitions, lemmas, and proof strategies.

if you have a single RTX 3090 and want the best local inference setup right now, here's what i landed on after testing 5 open-source models across 7 GPU configs this month.

GPU: 1x RTX 3090 24GB
model: Qwen 3.5 27B Dense Q4_K_M (16.7GB)
context: 262K (native max)
speed: 35 tok/s generation, flat from 4K to 300K+
reasoning: built-in chain of thought, survives Q4 quant
config: llama-server -ngl 99 -c 262144 -fa on --cache-type-k q4_0 --cache-type-v q4_0

what this gives you:
- 27B params, all active on every token
- no speed degradation as context fills
- full reasoning mode on a consumer GPU
- 7GB VRAM headroom after model load

tested MoE (faster but less depth per token) and dense hermes (same speed, degraded under load). qwen dense hit the sweet spot for a single GPU. more architecture comparisons dropping soon.

what's your single GPU setup? curious what configs people are running.
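The config line trades KV-cache precision for context length: `--cache-type-k q4_0 --cache-type-v q4_0` store the cache in ggml's q4_0 format, which packs 32 values into 18 bytes (16 bytes of 4-bit values plus a 16-bit scale), about 4.5 bits per value versus 16 for f16. Below is a rough sketch of that arithmetic, assuming a plain attention KV cache (one K and one V tensor per attention layer). The layer and head counts in the usage are hypothetical placeholders, not Qwen 3.5 27B's published config, so substitute the real values from the model's metadata before trusting the numbers.

```python
# Rough KV-cache size estimate for a llama.cpp-style cache.
# ASSUMPTION: plain attention cache (one K and one V tensor per attention layer);
# the architecture numbers in the usage below are hypothetical placeholders.

# ggml storage formats: (elements per block, bytes per block)
BLOCK_FORMATS = {
    "f16": (1, 2),     # one 16-bit float per element
    "q8_0": (32, 34),  # 32 int8 values + one fp16 scale
    "q4_0": (32, 18),  # 32 packed 4-bit values + one fp16 scale
}

GIB = 2**30


def kv_cache_bytes(n_ctx, n_attn_layers, n_kv_heads, head_dim, cache_type="f16"):
    """Bytes needed to hold K and V for every attention layer at n_ctx tokens."""
    block_elems, block_bytes = BLOCK_FORMATS[cache_type]
    per_tensor = n_ctx * n_kv_heads * head_dim    # elements in one K (or V)
    total_elems = 2 * n_attn_layers * per_tensor  # K and V for each layer
    n_blocks = -(-total_elems // block_elems)     # ceiling division
    return n_blocks * block_bytes


if __name__ == "__main__":
    # Hypothetical model shape, NOT Qwen 3.5 27B's real config.
    shape = dict(n_ctx=262144, n_attn_layers=16, n_kv_heads=4, head_dim=128)
    q4 = kv_cache_bytes(cache_type="q4_0", **shape)
    f16 = kv_cache_bytes(cache_type="f16", **shape)
    print(f"q4_0: {q4 / GIB:.2f} GiB, f16: {f16 / GIB:.2f} GiB")
```

With these placeholder values the q4_0 cache comes out about 3.6x smaller than f16 (2.25 GiB vs 8.00 GiB). Whether it fits in the roughly 7GB left after loading the 16.7GB weights depends on the model's real layer and head counts.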

@heytdep models are a pyramid. we've passed the frontier-only era imo: only funnel things up when you have to. the frontier models code a harness for the open models but never actually get the sensitive data. using this approach I have reduced my frontier queries by 98% or more.

Hot off the press: @LandKingdom explains the ins and outs of attested TLS, the only way to talk to TEEs remotely with guarantees that the remote endpoint is confidential and authentic. engineering.chainbound.io/attested-tls-i…

DAMM Capital (Buenos Aires): we're looking for a mathematical/analytical profile. Interested in modeling dynamical systems, optimizing machine learning models, or developing quantitative theory?

Requirements:
- Applied/theoretical mathematics (strong)
- Programming (moderate)

📩 DM with your CV.