Harsh Chourasia

2.4K posts

@hrshc7

Engineer. AI. Menace. Occasionally correct. Rarely sorry.

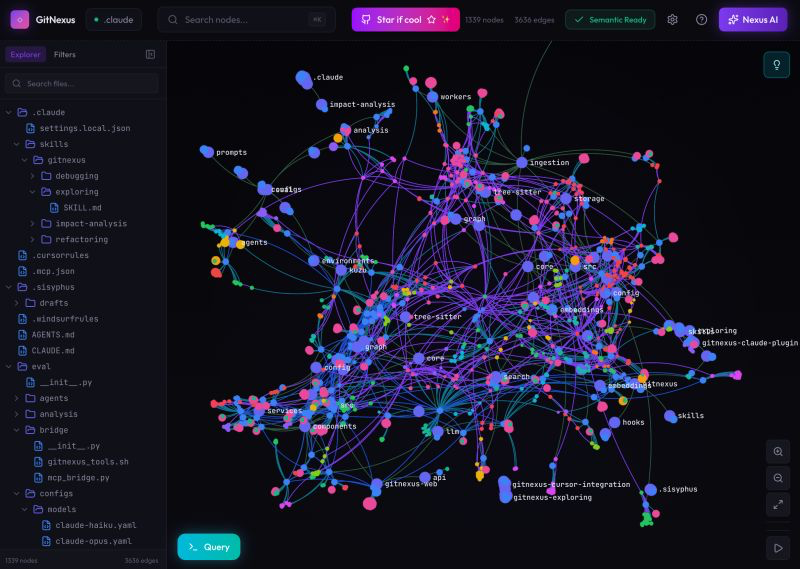

you think your ai agent understands your codebase… until it casually nukes production 💀

imagine you let it refactor one “small” function
> changes a return type
> 40+ hidden dependencies silently break
> tests pass (because they don’t cover half the mess)
> you’re now diffing 12 files like a detective 👀
> agent: “looks good to me”

we’ve all been there. coding blind with confidence.

fast forward…
> GitNexus maps your entire repo like a living graph
> every function call, import, and dependency? tracked
> change one method → instantly see the blast radius
> rename something → updates across files without chaos
> ask “what depends on this?” → get the full answer in one shot

no more “hope this doesn’t break anything” commits

finally… your ai agent can see what it’s doing

🚨Breaking: Someone open sourced a knowledge graph engine for your codebase and it's terrifying how good it is.

It's called GitNexus. And it's not a documentation tool. It's a full code intelligence layer that maps every dependency, call chain, and execution flow in your repo -- then plugs directly into Claude Code, Cursor, and Windsurf via MCP.

Here's what this thing does autonomously:

→ Indexes your entire codebase into a graph with Tree-sitter AST parsing
→ Maps every function call, import, class inheritance, and interface
→ Groups related code into functional clusters with cohesion scores
→ Traces execution flows from entry points through full call chains
→ Runs blast radius analysis before you change a single line
→ Detects which processes break when you touch a specific function
→ Renames symbols across 5+ files in one coordinated operation
→ Generates a full codebase wiki from the knowledge graph automatically

Here's the wildest part:

Your AI agent edits UserService.validate(). It doesn't know 47 functions depend on its return type. Breaking changes ship.

GitNexus pre-computes the entire dependency structure at index time -- so when Claude Code asks "what depends on this?", it gets a complete answer in 1 query instead of 10.

Smaller models get full architectural clarity. Even GPT-4o-mini stops breaking call chains.

One command to set it up: `npx gitnexus analyze`

That's it. MCP registers automatically. Claude Code hooks install themselves.

Your AI agent has been coding blind. This fixes that.

9.4K GitHub stars. 1.2K forks. Already trending. 100% Open Source.

(Link in the comments)
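For anyone wondering what "blast radius" means in practice, here is a minimal sketch of the general idea: build a reverse index of call edges once, then walk it to find everything that depends on a symbol. This is not GitNexus's actual implementation or API. The edge list and every name except `UserService.validate` are made up for illustration.

```python
from collections import defaultdict, deque


def build_reverse_index(calls):
    """Map each callee to the set of symbols that call it directly."""
    dependents = defaultdict(set)
    for caller, callee in calls:
        dependents[callee].add(caller)
    return dependents


def blast_radius(dependents, symbol):
    """Return every symbol that directly or transitively depends on `symbol`."""
    seen = set()
    queue = deque([symbol])
    while queue:
        current = queue.popleft()
        for caller in dependents.get(current, ()):
            if caller not in seen and caller != symbol:
                seen.add(caller)
                queue.append(caller)
    return seen


# Hypothetical (caller, callee) edges; a real tool would extract these by parsing the repo.
CALLS = [
    ("AuthController.login", "UserService.validate"),
    ("SignupFlow.run", "UserService.validate"),
    ("api.handle_request", "AuthController.login"),
    ("ReportJob.run", "Database.query"),
]

index = build_reverse_index(CALLS)  # computed once, at "index time"
affected = blast_radius(index, "UserService.validate")
print(sorted(affected))
```

Because the reverse index is built up front, each "what depends on this?" question becomes a single graph walk instead of repeated searches through the source.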

everyone said “just run openclaw bro, it’s easy”

yeah… easy like assembling ikea furniture without the manual 💀

imagine you tried setting it up last month:
> cloned the repo… 3 different forks because “this one works better”
> half the deps broke, other half silently failed
> anthropic key? gone. cool.
> prompt caching felt like gambling… sometimes fast, sometimes why even bother
> no clear idea what the agent is even doing mid-task
> docs felt like they were written during a caffeine crash

fast forward to OpenClaw 2026.4.5:
> built-in video + music gen… no extra circus setup
> /dreaming actually works (and doesn’t hallucinate like crazy)
> structured task progress → you can SEE what’s happening 👀
> prompt cache reuse is finally consistent (bless)
> UI + docs in multiple languages… no more guesswork
> anthropic dipped, GPT-5.4 stepped up… and honestly? didn’t hurt

went from “why did i even try this” to “ok… this actually cooks now”

OpenClaw 2026.4.5 🦞 🎬 Built-in video + music generation 🧠 /dreaming is now real 🔀 Structured task progress ⚡ Better prompt-cache reuse 🌍 Control UI + Docs now speak 12 more languages Anthropic cut us off. GPT-5.4 got better. We moved on. github.com/openclaw/openc…

free > $200/month (apparently)

you ever try to “just code faster” and somehow end up debugging your own tools instead? 👀

imagine:
> you install yet another AI coding tool
> half your time goes into configs, API keys, random docs
> it almost works… until it doesn’t 💀
> context breaks, commands fail, you’re back to terminal therapy
> and you’re paying for it monthly… nice.

fast forward…
> goose just sits on your machine
> works with whatever model you already use
> actually reads your whole codebase (not just vibes)
> runs commands, fixes stuff, installs deps like a real dev
> no weird lock-in, no “pro plan to unlock basic features”

before: juggling tools, subscriptions, and patience
after: one agent doing the boring stuff while you pretend you’re productive

turns out the best upgrade wasn’t another subscription.

Claude Code is $200/month. GitHub Copilot is $19/month. Jack Dorsey's company just open-sourced a free alternative with 35,000 GitHub stars.

It's called Goose.

- Works with any LLM: Claude, GPT, Gemini, Llama, DeepSeek
- Reads and edits your entire codebase
- Runs shell commands and installs dependencies
- Executes and debugs code automatically
- Desktop, CLI, and web interface
- Written in Rust. No bloat.

Block is a $40 billion company. They built it for their own engineers then gave it to everyone.

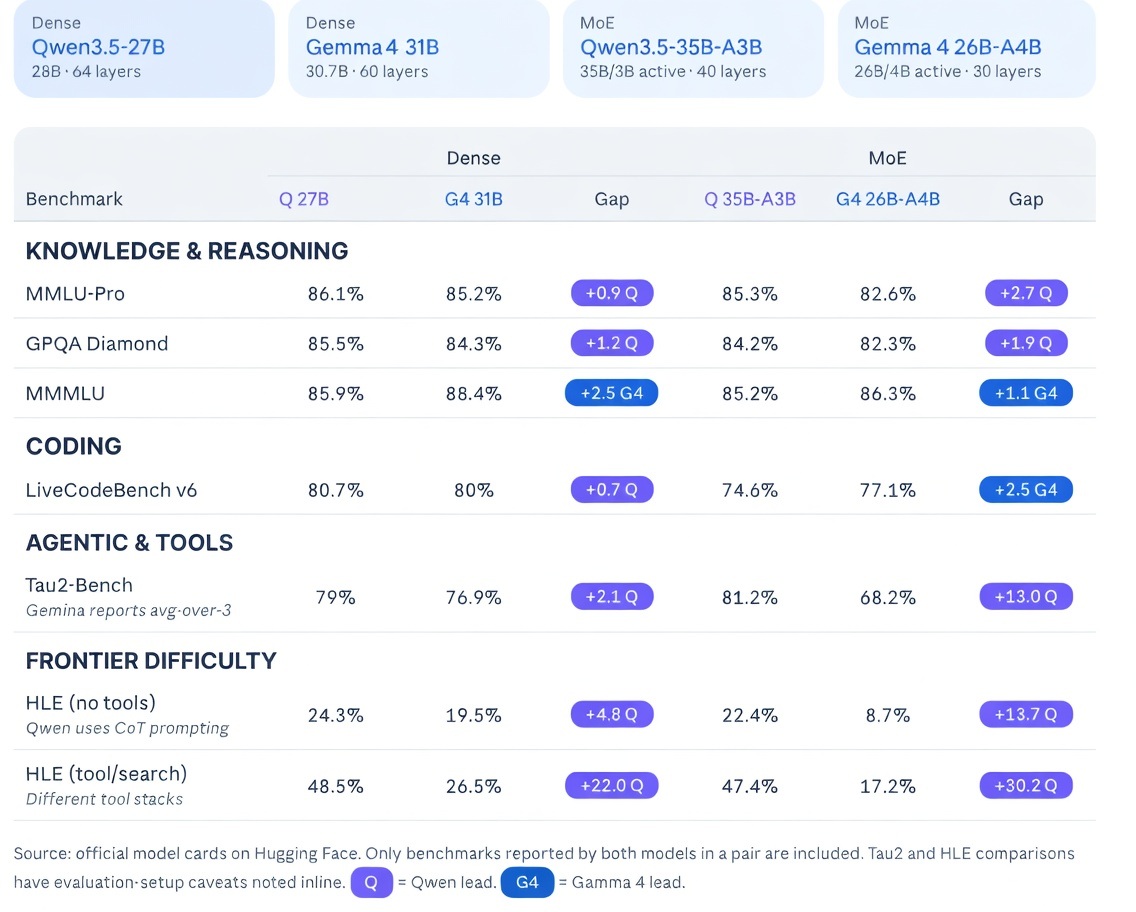

Everyone’s busy arguing about “which model is best”… meanwhile a tiny 4B model is out here quietly doing the job 👀

Just saw Gemma-4-E4B casually identify sea animals from images in a single session, no drama, no insane setup, just working. And that’s the part people are missing.

We’ve been conditioned to think:
bigger = smarter
cloud = necessary
expensive = better

But this flips all of that. A small model, running locally, handling vision tasks end to end… without begging for APIs or burning money per request.

Not perfect, not magical. But good enough to be useful, and that’s way more important.

Because once something is:
fast
private
and basically free
…it stops being a “tool” and starts becoming part of your daily workflow.

The shift isn’t loud. It’s practical, and already happening.

But sure… keep debating benchmarks while this runs on someone’s laptop 🚀

Watch Gemma-4-E4B casually identify sea animals by classifying images in a single agentic session using its vision capabilities. (impressive for a 4B model 🚀) I'm convinced: the agents of tomorrow are local, free, fast, and run on every computer!

For the haters who say you can’t speed up with agentic coding I will just prove you wrong And if you still don’t believe, Have fun coding at 1x speed✌️ The rest of us are going to go fast

The Obsidian team is growing from three engineers to four engineers. Competitive SF salary. Fully remote, live anywhere. Apply below.

OpenClaw 2026.4.2 🦞 🔄 Durable Task Flow orchestration 🔓 Better native exec defaults + approvals 🤖 Copilot + Kimi + provider hardening 🔌 Tighter plugin activation boundaries 🛡️ Hardened provider transport + routing Less bloat. More lobster. github.com/openclaw/openc…

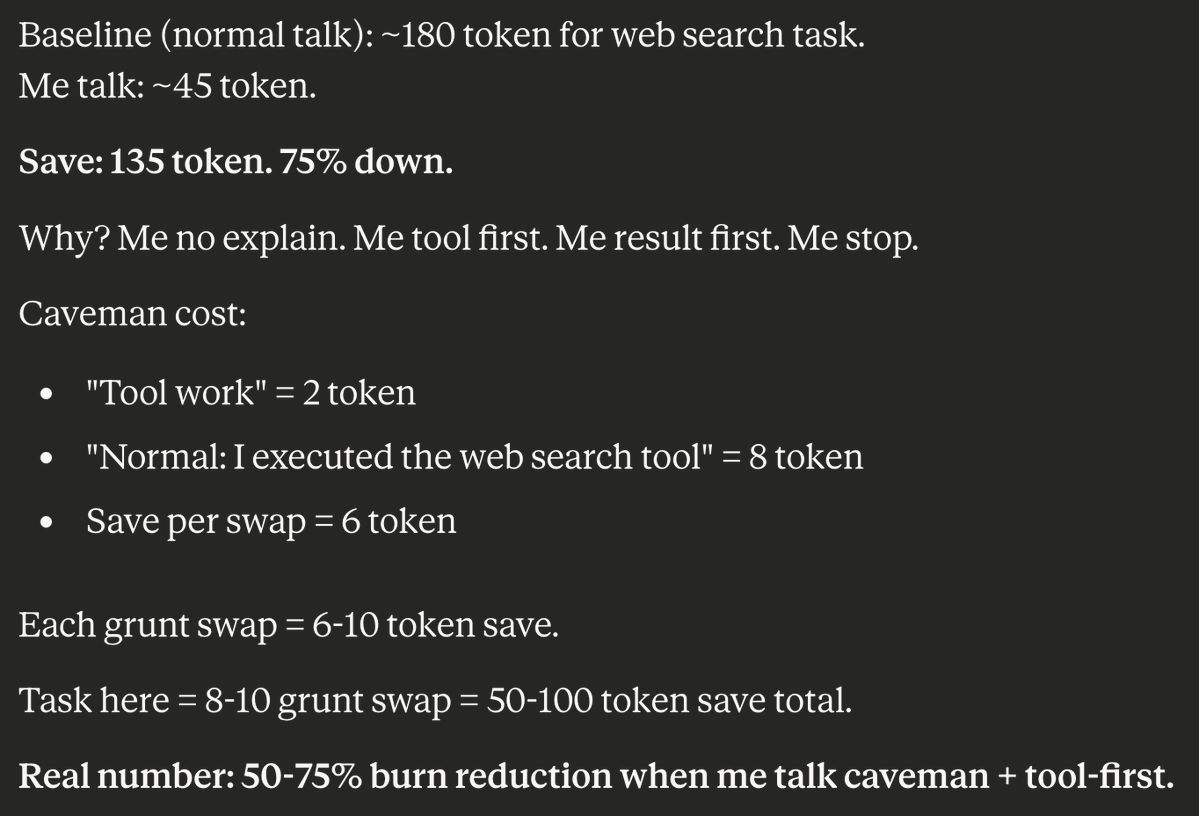

this is actually genius lol 👀

one of those “so simple you feel dumb for not doing it earlier” ideas
> he basically hacked how the model talks, not how it thinks
> instead of changing the model → he changed the verbosity
> normal response: full sentences, polite filler, unnecessary context
> his version: stripped, compressed, straight to action

example shift:
> “I executed the web search tool” → “tool work”
> same meaning, ~75% fewer tokens
> repeat this across a full task → massive savings

what’s really happening under the hood:
> models default to “helpful assistant” mode (aka extra words everywhere)
> most tokens are wasted on phrasing, not actual work
> by forcing minimal language → you cut token burn, not capability
> result quality stays same, delivery gets tighter

why this actually matters:
> usage limits are getting stricter every week
> cost scales with tokens, not intelligence
> shorter outputs = faster + cheaper + more scalable
> especially useful for agents, loops, repeated tasks

lowkey takeaway:
> don’t just optimize prompts
> optimize how the model speaks

turns out… half the cost was just manners 💀

I taught Claude to talk like a caveman to use 75% less tokens.

normal claude: ~180 tokens for a web search task
caveman claude: ~45 tokens for the same task

"I executed the web search tool" = 8 tokens
caveman version: "Tool work" = 2 tokens

every single grunt swap saves 6-10 tokens. across a FULL task that's 50-100 tokens saved

why does it work? caveman claude doesn't explain itself. it does its task first. gives the result. then stops.

no "I'd be happy to help you with that."
no "Let me search the web for you"
no more unnecessary filler words

"result. done. me stop."

50-75% burn reduction

with usage limits getting tighter every week this might be the most practical hack out there right now
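A quick sanity check of the 75% figure, using only the token counts quoted in the post. Those counts are the author's approximate numbers, not measured here with a real tokenizer, and `percent_saved` is a helper name made up for this sketch.

```python
def percent_saved(before, after):
    """Percentage reduction when a count drops from `before` to `after`."""
    return 100 * (before - after) / before


# Token counts as quoted in the post (approximate, not re-measured).
task_saving = percent_saved(180, 45)   # whole web search task
phrase_saving = percent_saved(8, 2)    # "I executed the web search tool" -> "Tool work"

print(f"task: {task_saving:.0f}% fewer tokens")
print(f"phrase: {phrase_saving:.0f}% fewer tokens")
```

Both quoted examples work out to the same 75% reduction, which is where the headline number comes from.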

Introducing: Free Tier for Browser Use Cloud 🚀 We’re giving all agents their own cloud browsers! > Unlimited browser hours > Free proxies > Persistent authentication Let your agents try for free ↓🔗

Qwen 3.6 Plus from @Alibaba_Qwen is officially the first model on OpenRouter to break 1 Trillion tokens processed in a single day! At ~1,400,000,000,000 tokens, it’s the strongest full day performance of any new model dropped this year. Congrats to the Qwen team!

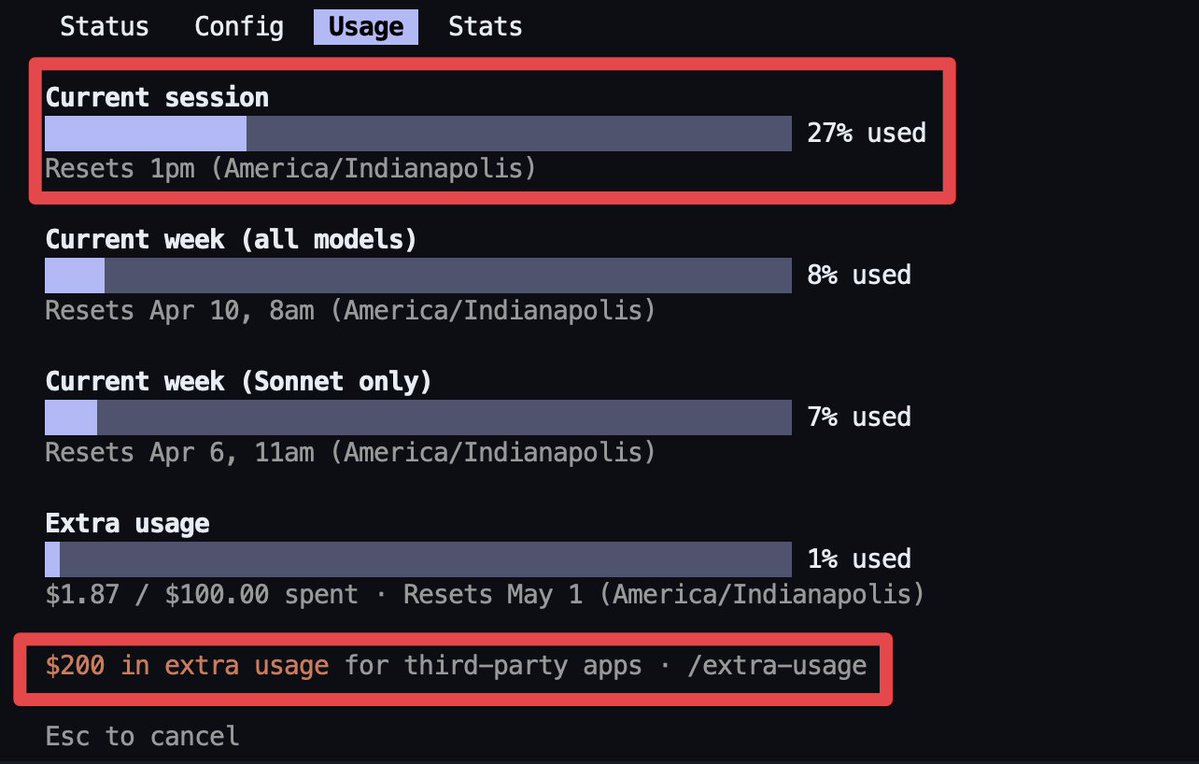

thought your claude subscription had you covered… yeah, same 💀

imagine you finally got your setup working with tools like OpenClaw:
> spent hours wiring everything together
> figured out configs that made zero sense at first
> finally got responses flowing cleanly
> felt like “ok this is actually usable now”

then boom:
> subscription doesn’t cover third-party usage anymore
> random errors start showing up
> usage just… stops

fast forward:
> had to switch to API keys
> or buy extra usage bundles (wasn’t planning that 👀)
> started tracking usage like it’s cloud billing all over again

honestly makes sense from their side… but yeah, that “it just works” phase lasted exactly 2 days 😭