Pinned Tweet

Hooman Shayani

896 posts

@hsh95

Research Scientist @ Autodesk AI Lab

London, England · Joined June 2009

1.2K Following · 347 Followers

@lucasmaes_ Very nice demonstration of the point. Isn’t explainability the price?

@fchollet What’s your definition of symbolic? Or rather, when do you say a rule is not symbolic? I think compression always ends up in symbols that can represent more than one thing. But some symbols are understandable and communicable by humans, and some aren’t. Don’t you think?

All the great breakthroughs in science are, at their core, compression. They take a complex mess of observations and say, "it's all just this simple rule".

Symbolic compression, specifically. Because the rule is always symbolic -- usually expressed as mathematical equations. If it isn't symbolic, you haven't really explained the thing. You can observe it but you can't understand it.

I was not impacted by the Meta PAR/FAIR layoffs today. I’m a research scientist passionate about computer vision, generative models, multimodal understanding, and AI safety. Over the past decade, I’ve contributed to advancing a broad range of applied machine learning problems —

Susan Zhang@suchenzang

👀

I was impacted by Meta layoffs today.

As a Research Scientist working on LLM post-training (reward models, DPO/GRPO) & automated evaluation pipelines, I’ve focused on understanding why/where models fail & how to make them better.

I’m looking for opportunities; please reach out!

Susan Zhang@suchenzang

👀

I was impacted by the Meta PAR/FAIR layoffs today.

I’m a research scientist passionate about computer vision, generative models, multimodal understanding, and AI safety. Over the past decade, I’ve contributed to advancing a broad range of applied machine learning problems — from multimodal generative models and visual recommender systems, to 2D-to-3D human modeling, large-scale data generation for foundation model training, mechanistic interpretability, and rigorous evaluations for trust & alignment of large-scale AI systems.

I’m actively seeking new opportunities — please reach out if you have any openings!

Susan Zhang@suchenzang

👀

Hooman Shayani retweeted

@GaryMarcus @francoisfleuret Biological systems have a Markov Blanket. They model the world according to their Markov Blanket Boundary. LLMs model their input output distribution which is their MBB and what they can see of the world. Free Energy Principle. Right?

@francoisfleuret not the same.

the LLM models an output distribution

(most/many) biological systems model the world.

What a very strange angle to demonstrate limitations of LLMs.

Exactly like a bird mimicking another bird's song, an LLM (or an MLP!) matches the visible signal, but devises its internal states ("motor actions") without direct supervision.

Richard Sutton@RichardSSutton

@eigenrobot Even in birdsong learning in zebra finches the motor actions are not learned by imitation. The auditory result is reproduced, not the actions; in this crucial way it differs from LLM training.

Hooman Shayani retweeted

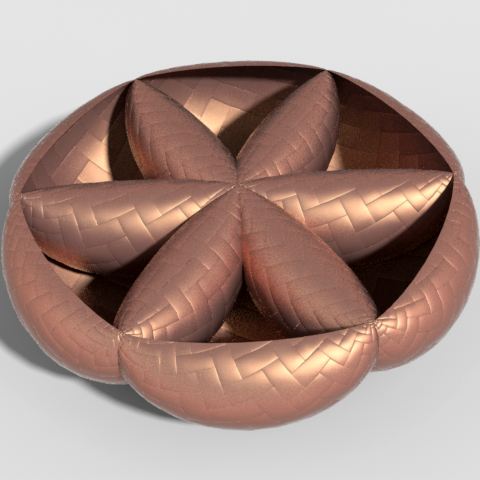

We are hosting a live demo at the AI tomorrow booth! Autodesk unleashes Neural CAD 3D generative AI foundation models develop3d.com/cad/autodesk-u…

We are hiring a Principal AI Research Scientist proficient in LLM/VLM/MLLM. Join Autodesk AI Lab to drive innovation across various industries and research domains. Apply now to be part of our impactful team! #AI #AutodeskAI #Jobs autodesk.wd1.myworkdayjobs.com/en-US/Ext/job/…

@jonathanrichens @KarlFristonNews That is an impressive proof. Does it also mean the agent is using that world model for planning?

@hsh95 @KarlFristonNews Similar statement, but Friston assumes the agent operates based on a world model. Whereas we prove that any agent conforming to our assumptions has learned a world model. But also, we say nothing about if / how the world model is used by the agent.

Are world models necessary to achieve human-level agents, or is there a model-free short-cut?

Our new #ICML2025 paper tackles this question from first principles, and finds a surprising answer, agents _are_ world models… 🧵

@jonathanrichens Isn’t this the same statement as Free Energy Principle from Karl Friston? @KarlFristonNews

@docmilanfar Well eq2 is very intuitive while I can’t even understand what the assumptions of eq1 are! 1/2PNr is simply the area of the triangle representing the integral of the interest over time assuming the principal is paid back uniformly.
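The triangle argument in this reply can be checked numerically. The sketch below is an illustration that assumes a particular loan model, because the eq1/eq2 being discussed are not reproduced here. In that model, P is the principal, N is the number of repayment periods, and r is the interest rate per period. Interest is charged on the outstanding balance at the start of each period, and then an equal installment of P/N is repaid. Under these assumptions the exact total interest is rP(N+1)/2, which approaches the triangle area ½PNr as N grows. Both function names are hypothetical.

```python
def total_interest_uniform_repayment(principal: float, periods: int, rate: float) -> float:
    """Sum the interest paid when the principal is repaid in equal installments.

    Assumed model (hypothetical, for illustration): at the start of each
    period the outstanding balance accrues `rate` interest, then an equal
    installment of principal / periods is repaid.
    """
    total = 0.0
    balance = principal
    installment = principal / periods
    for _ in range(periods):
        total += balance * rate  # interest on the current outstanding balance
        balance -= installment   # balance falls linearly toward zero
    return total


def triangle_area_estimate(principal: float, periods: int, rate: float) -> float:
    """Area of the triangle with base N periods and height P * r, i.e. 1/2 * P * N * r."""
    return 0.5 * principal * periods * rate


if __name__ == "__main__":
    # Compare the period-by-period sum with the triangle area for growing N.
    for n in (10, 100, 1000):
        exact = total_interest_uniform_repayment(1000.0, n, 0.01)
        approx = triangle_area_estimate(1000.0, n, 0.01)
        print(n, round(exact, 4), round(approx, 4), round(exact / approx, 4))
```

Under this model the ratio of the exact sum to the triangle area is (N+1)/N. The gap is therefore a discretization effect that disappears in the continuous limit, where the triangle area is exact.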

@keenanisalive This is beautiful but rule 5 needs more explanation. What’s the meaning of the notation S(pq)? Why do you say *or*? pq is *and*. Right?

I’m #hiring an AI Research Scientist summer Intern to work on 3D generation and editing. Location is flexible (remote/hybrid). Apply here: autodesk.wd1.myworkdayjobs.com/uni/job/London… Also, find many other interns and full-time AI positions @ Autodesk AI Lab in other exciting areas. #AI #3D

Hooman Shayani retweeted

arxiv.org/abs/2411.08017

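A paper on Autodesk's 3D model generation technology

It proposes a method called Wavelet Latent Diffusion (WaLa). Unfortunately my understanding of latent diffusion is shallow and I might end up saying something inaccurate, so please refer to the paper to see how impressive the method is lol

huggingface.co/ADSKAILab

The models are released on HF, and a Google Colab demo is also provided.

colab.research.google.com/drive/1W5zPXw9…

Judging from the airplane sample, it seems results at this level can now run locally.

If you're on a paid Colab plan and have access to an L4 / A100, do give it a try (requires 20 GB+ of VRAM).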

Project page (weights, demo): autodeskailab.github.io/WaLaProject/

Code: github.com/AutodeskAILab/…

The problem @ylecun refers to, in this case, seems to be due to data bias and overfitting on a famous problem. As soon as you diverge from the original story it comes to its senses!

Yann LeCun@ylecun

😂😂😂 LLMs can plan, eh?
