Excited to share the results from WeirdML, a benchmark testing LLMs' ability to solve weird and unusual machine learning tasks by writing working PyTorch code and iteratively learning from feedback.

Håvard Ihle


@htihle

AI researcher, former cosmologist


@saranormous @karpathy @NoPriorsPod Why is he not at a frontier AI lab at the most pivotal time in human history since at least the industrial revolution?

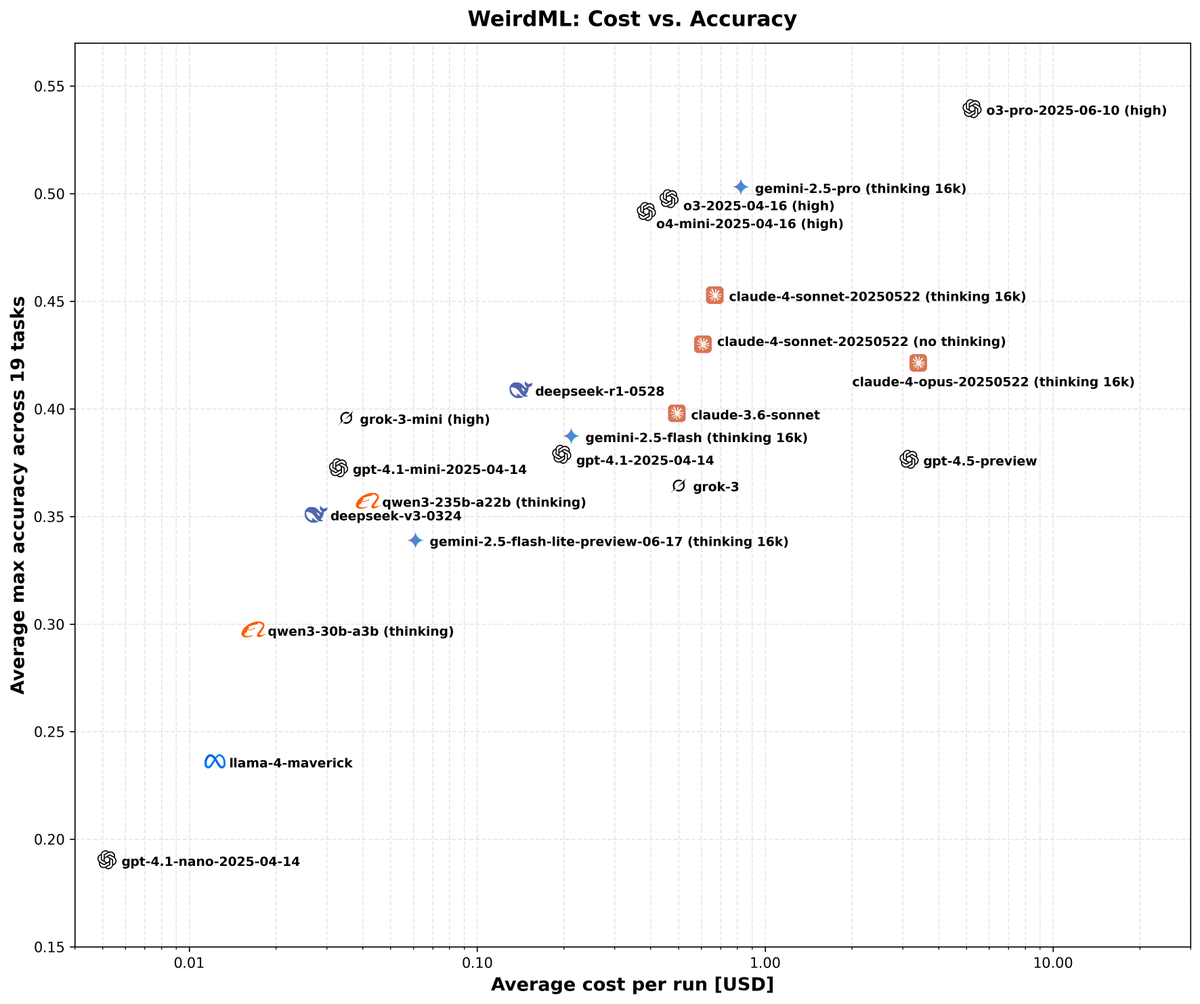

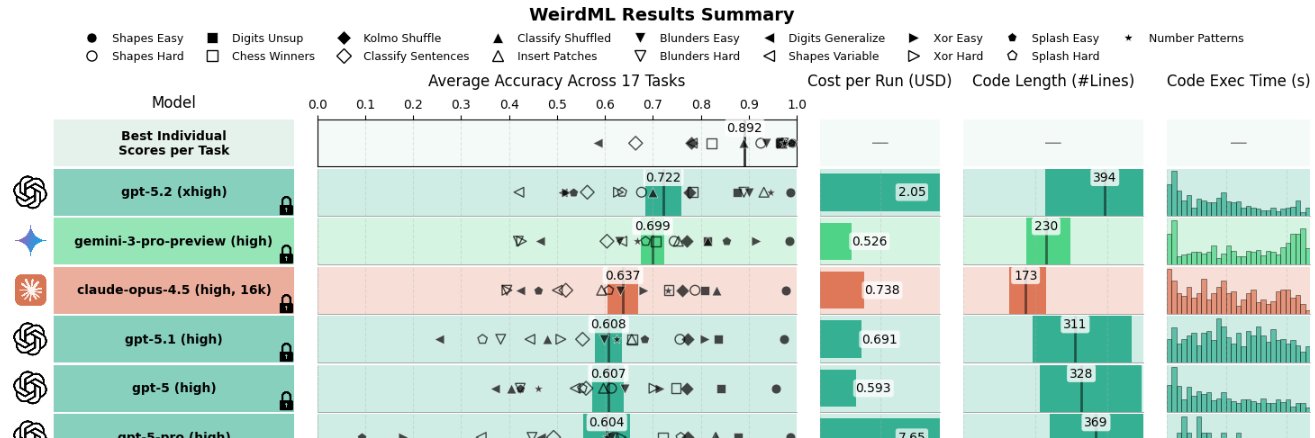

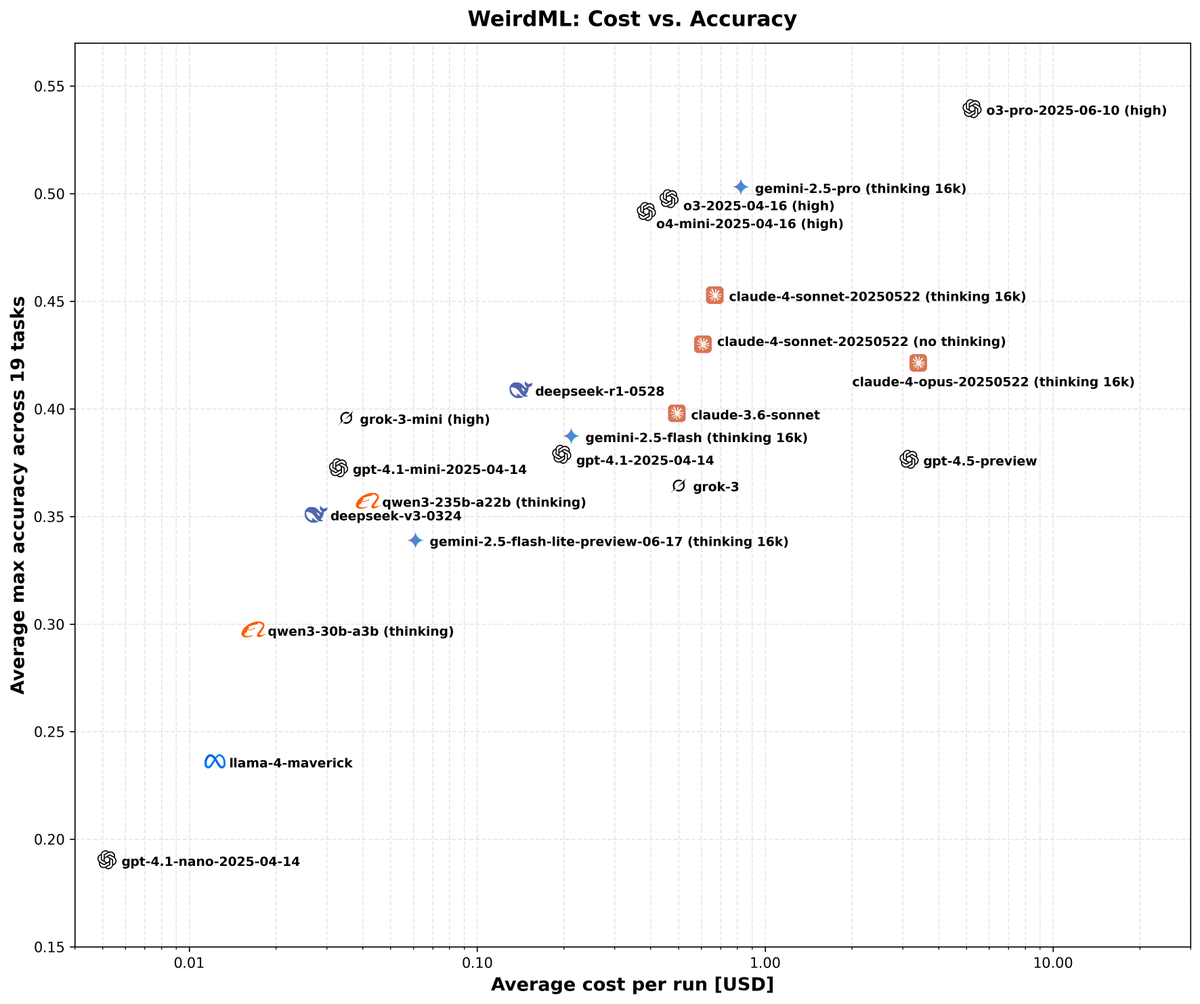

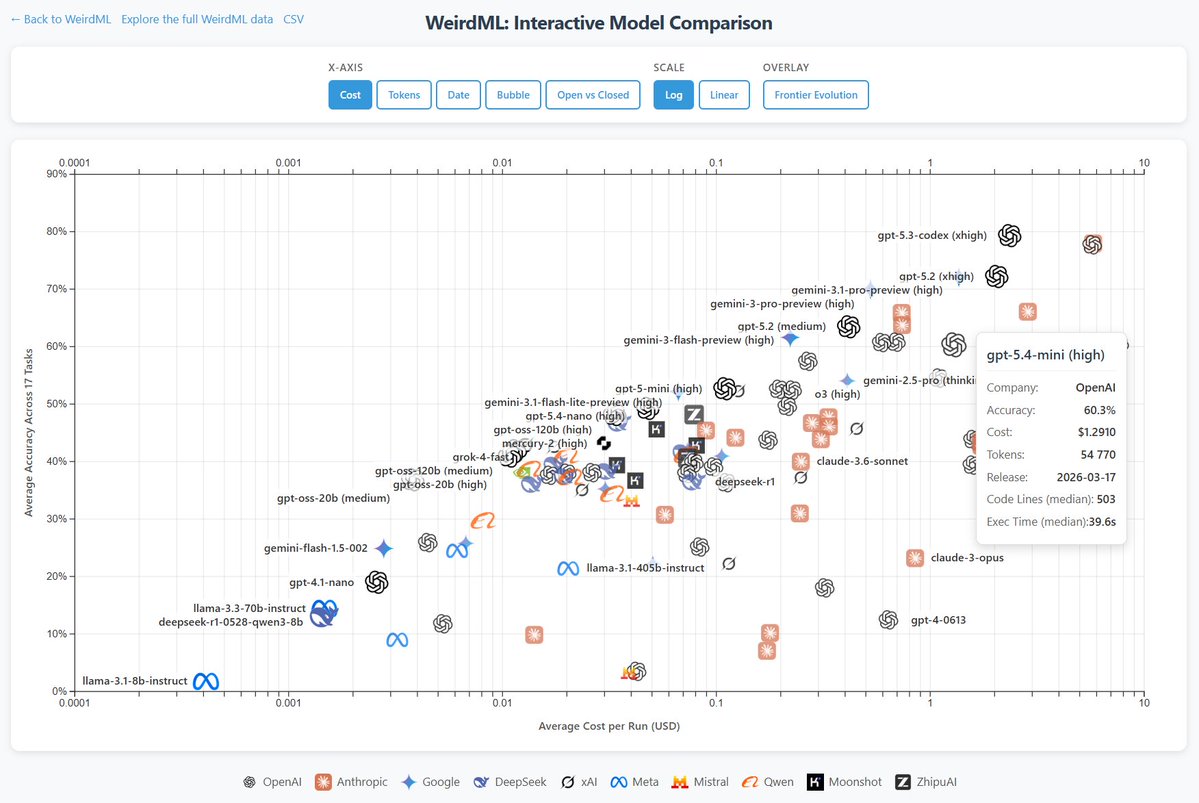

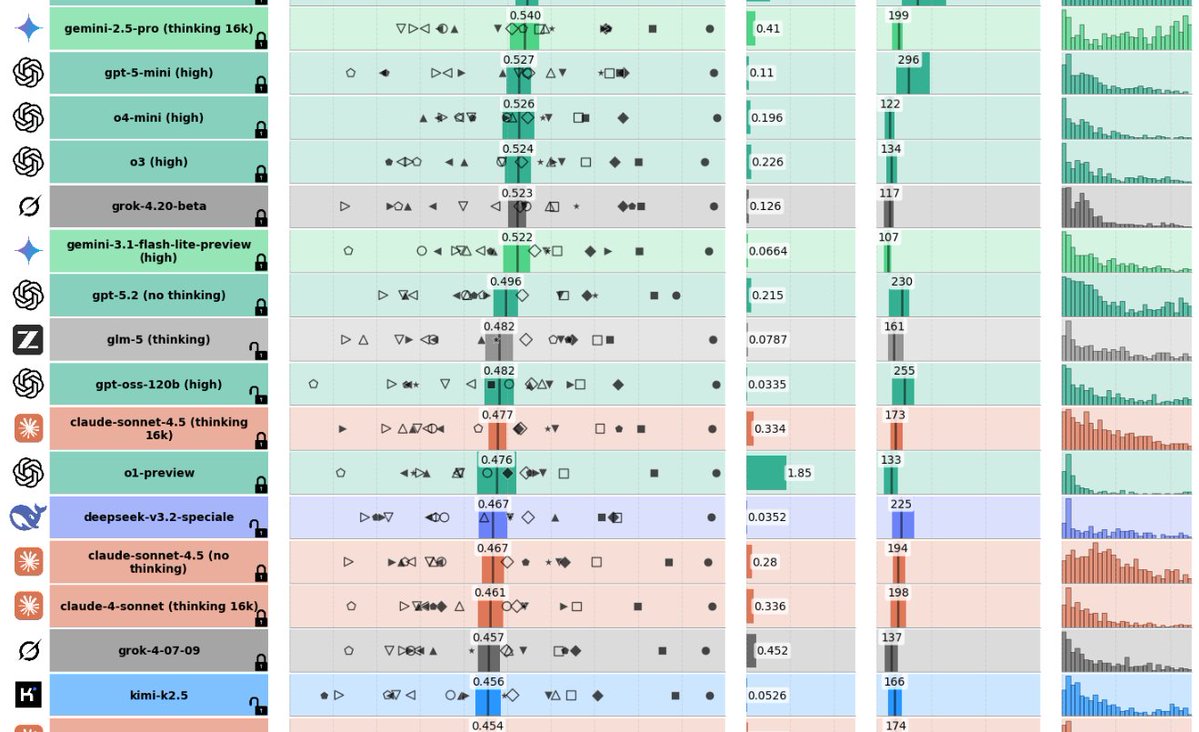

WeirdML v2 is now out! The update includes a bunch of new tasks (now 19 tasks total, up from 6) and results from all the latest models. We now also track API costs and other metadata, which give more insight into the different models. The new results are shown in these two figures. The first gives an overview of the overall results and the results on individual tasks, along with various metadata. The second plots cost against performance and shows clear scaling, with better results at higher costs. The Pareto frontier is also very varied: 11 models from 6 different companies have the best accuracy for a given cost over at least some part of the cost range. Grok 3, Claude Opus 4 and GPT 4.5 underperform for their costs, while Gemini Pro and o3-pro have the best results at the highest costs. Qwen3 30B3A, Grok 3 mini and DeepSeek R1 each also cover a good chunk of the Pareto frontier.
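A cost-accuracy Pareto frontier keeps exactly those models that no cheaper (or equally cheap) model beats on accuracy. The sketch below is one simple way to compute it; the model names and numbers are hypothetical placeholders, not WeirdML results.

```python
from typing import NamedTuple


class Result(NamedTuple):
    model: str
    cost: float      # API cost per run (any consistent unit)
    accuracy: float  # average accuracy in [0, 1]


def pareto_frontier(results: list[Result]) -> list[Result]:
    """Return the results not dominated by any cheaper-or-equal, more accurate result."""
    # Sort by cost ascending; on equal cost, put the more accurate result first.
    ordered = sorted(results, key=lambda r: (r.cost, -r.accuracy))
    frontier: list[Result] = []
    best_so_far = float("-inf")
    for r in ordered:
        # A point joins the frontier only if it beats every cheaper point.
        if r.accuracy > best_so_far:
            frontier.append(r)
            best_so_far = r.accuracy
    return frontier


if __name__ == "__main__":
    demo = [
        Result("model-a", 0.01, 0.30),
        Result("model-b", 0.05, 0.28),  # dominated by model-a
        Result("model-c", 0.20, 0.45),
        Result("model-d", 1.00, 0.44),  # dominated by model-c
        Result("model-e", 2.00, 0.55),
    ]
    for r in pareto_frontier(demo):
        print(r.model, r.cost, r.accuracy)
```

A single sorted pass is enough because, once results are ordered by cost, a model is on the frontier exactly when its accuracy exceeds the running maximum of all cheaper models.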

Building a self-evolving intelligent agent model: MiniMax M2.7

"M2.7 is our first model which deeply participated in its own evolution"

- We believe that future AI self-evolution will gradually transition towards full autonomy, coordinating data construction, model training, inference architecture, evaluation, and other stages without human involvement.
- To this end, we conducted preliminary exploratory tests in low-resource scenarios. We had M2.7 participate in 22 machine learning competitions from MLE-bench Lite, open-sourced by OpenAI.
- These competitions can be run on a single A30 GPU, yet they cover virtually all stages of machine learning research.
- We designed and implemented a simple harness to guide the agent in autonomous optimization. Its core consists of three modules: short-term memory, self-feedback, and self-optimization.
- Specifically, after each iteration round, the agent writes a short-term memory markdown file and critiques the current round's results, providing potential optimization directions for the next round.
- The next round then performs further self-optimization based on the memory and self-feedback chain from all previous rounds.
- We ran three trials in total, each with 24 hours of iterative evolution. From the figure below, one can see that the ML models trained by M2.7 achieved steadily higher performance over time.
- In the end, the best run achieved 9 gold, 5 silver, and 1 bronze medal. The average medal rate across the three runs was 66.6%, behind only Opus-4.6 (75.7%) and GPT-5.4 (71.2%), and tied with Gemini-3.1 (66.6%).
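The harness described above (short-term memory, self-feedback, self-optimization) can be sketched as a loop in which every round sees the full history of earlier memory notes and critiques. This is a hypothetical illustration, not MiniMax's implementation: `run_round` and `self_critique` are placeholder callables standing in for the agent, and a fixed number of rounds stands in for the 24-hour budget.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class RoundRecord:
    round_idx: int
    score: float
    memory_md: str  # short-term memory note (markdown) written after the round
    critique: str   # self-criticism: suggested direction for the next round


@dataclass
class Harness:
    # Hypothetical stand-ins for the agent; not MiniMax's actual API.
    run_round: Callable[[list[RoundRecord]], float]
    self_critique: Callable[[float, list[RoundRecord]], str]
    history: list[RoundRecord] = field(default_factory=list)

    def step(self) -> RoundRecord:
        # Self-optimization: the round is conditioned on the memory and
        # feedback chain from ALL previous rounds, not just the last one.
        score = self.run_round(self.history)
        idx = len(self.history)
        memory_md = f"# Round {idx}\n- score: {score:.3f}\n"
        # Self-feedback: critique this round before it joins the history.
        feedback = self.self_critique(score, self.history)
        record = RoundRecord(idx, score, memory_md, feedback)
        self.history.append(record)
        return record

    def best(self) -> RoundRecord:
        return max(self.history, key=lambda r: r.score)


if __name__ == "__main__":
    # Toy agent whose score improves with the amount of accumulated feedback.
    toy = Harness(
        run_round=lambda hist: min(1.0, 0.5 + 0.1 * len(hist)),
        self_critique=lambda score, hist: "try stronger augmentation" if score < 0.8 else "stop",
    )
    for _ in range(3):
        rec = toy.step()
        print(rec.round_idx, rec.score, rec.critique)
    print("best round:", toy.best().round_idx)
```

Keeping the whole history (rather than only the latest round) mirrors the post's description of optimizing "based on the memory and self-feedback chain from all previous rounds".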

Nemotron 3 Super scores 38.0% on WeirdML, a solid score, ahead of (the original) qwen3-235b (thinking). I ran it locally through Ollama with q4_K_M quantization, so full precision might do even better. The price assumes $0.1/$0.5 per million input/output tokens.
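For a locally run model, the reported price has to come from assumed per-token rates. A minimal sketch of that arithmetic, assuming "0.1/0.5$/M" means $0.1 per million input tokens and $0.5 per million output tokens; the token counts below are made up for illustration.

```python
def run_cost_usd(input_tokens: int, output_tokens: int,
                 in_price_per_m: float = 0.1, out_price_per_m: float = 0.5) -> float:
    """Cost in USD given per-million-token prices for input and output."""
    return (input_tokens * in_price_per_m + output_tokens * out_price_per_m) / 1_000_000


if __name__ == "__main__":
    # Hypothetical token counts, for illustration only.
    print(run_cost_usd(200_000, 100_000))
```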


Well, it seems we're not getting DeepSeek V4 today, but we are getting what amounts to a lite version of it that runs on normal hardware. New architecture, fast, 1M context… …and it's a bit weaker than the equivalent Qwen 3.5.


@deanwball My background provides the point. These off-base AI opinions are making Heritage look bad, so it's better I just say no than call out how you clearly don't understand the differences between model pre-training and post-training that have the DoW thinking differently about Claude.

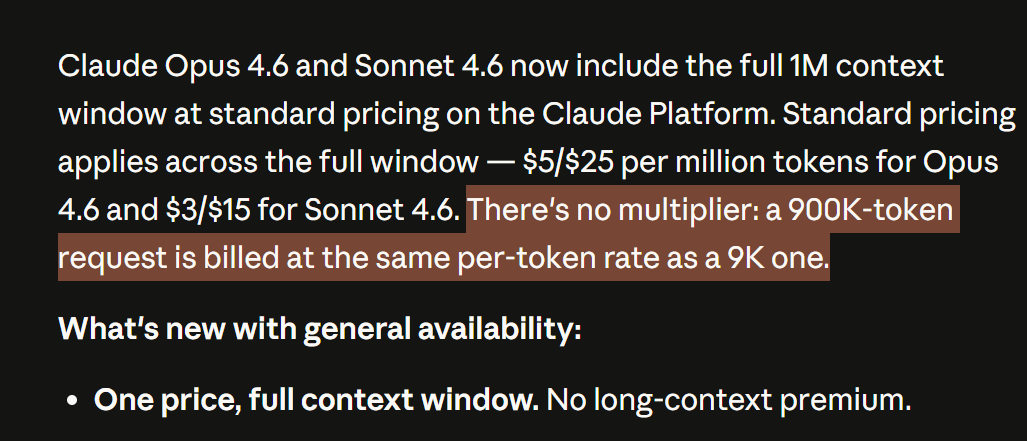

1 million context window: Now generally available for Claude Opus 4.6 and Claude Sonnet 4.6.
