Dave Kwon

12 posts

@iamdavekwon

Dev @ https://t.co/3Zizl82Jrv, building Wizard, the AI harness for our entire team 🇰🇷

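These two books get to the bottom of the harness engineering in Claude Code and Codex. Their source-code analysis and comparison of the two are incisive, and even a humanities major can read them with relish: "Harness Engineering: A Claude Code Design Guide": this is neither a compilation of source-code annotations nor a product feature tour. It focuses on how Claude Code reins an unstable model into an engineering order that can run sustainably, so that the control plane, main loop, tool permissions, context governance, recovery paths, multi-agent verification, and team institutions grow into one complete skeleton. "The Harness Design Philosophy of Claude Code and Codex: Converging Paths, or Separate Branches": when comparing two AI coding harnesses, the easiest mistake is to treat a feature comparison table as intellectual history. The left column says "has skills" and the right column also says "has skills"; the left says "has a sandbox" and the right also says "has a sandbox"; the left says "can spawn subagents" and the right also says "can spawn subagents". The advantage of writing it this way is that it saves effort; the drawback is that it says almost nothing. Identical nouns in the tooling do not mean identical system skeletons, just as two cities both having built bridges does not show that they were designed around the same river. GitHub repository: github.com/wquguru/harnes… Read online: harness-books.agentway.dev/index.html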

Starting tomorrow at 12pm PT, Claude subscriptions will no longer cover usage on third-party tools like OpenClaw. You can still use these tools with your Claude login via extra usage bundles (now available at a discount), or with a Claude API key.

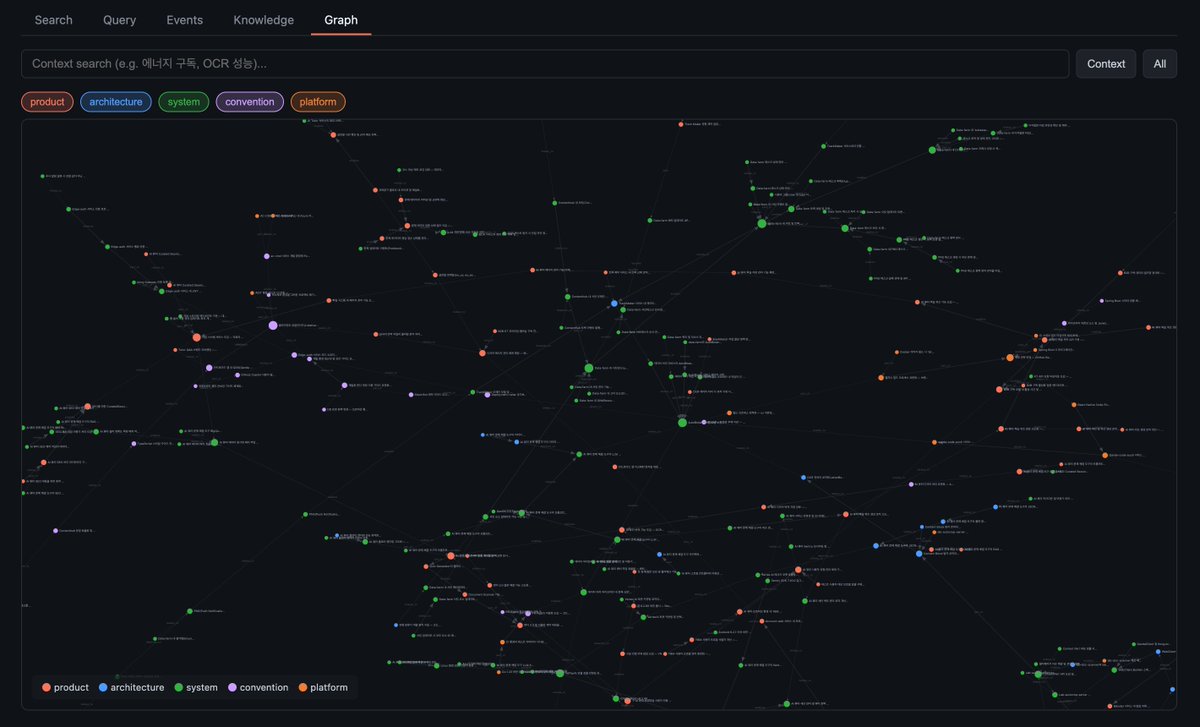

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest: I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images locally so that my LLM can easily reference them.

IDE: I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A: Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output: Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting: I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools: I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.

Further explorations: As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.
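For readers wondering what a "small and naive search engine over the wiki" might look like, here is a minimal sketch. It is not the author's tool: the function names (`tokenize`, `build_index`, `search`), the TF-IDF-style scoring, and the layout of the wiki directory are all assumptions made for illustration. It only relies on the detail from the post that the wiki is a directory tree of .md files.

```python
import math
import re
from collections import Counter
from pathlib import Path

# Hypothetical tokenizer: lowercase runs of ASCII letters and digits.
TOKEN_RE = re.compile(r"[a-z0-9]+")


def tokenize(text: str) -> list[str]:
    """Split text into lowercase alphanumeric tokens."""
    return TOKEN_RE.findall(text.lower())


def build_index(wiki_dir: str) -> dict[str, Counter]:
    """Map each .md file (path relative to wiki_dir) to its term counts."""
    root = Path(wiki_dir)
    return {
        str(path.relative_to(root)): Counter(
            tokenize(path.read_text(encoding="utf-8"))
        )
        for path in sorted(root.rglob("*.md"))
    }


def search(
    index: dict[str, Counter], query: str, top_k: int = 5
) -> list[tuple[str, float]]:
    """Rank files by a simple TF-IDF score summed over the query terms."""
    n_docs = len(index)
    scores: dict[str, float] = {}
    for term in set(tokenize(query)):
        doc_freq = sum(1 for counts in index.values() if term in counts)
        if doc_freq == 0:
            continue  # term appears nowhere in the wiki
        # Smoothed inverse document frequency (an assumed, common variant).
        idf = math.log((1 + n_docs) / (1 + doc_freq)) + 1.0
        for name, counts in index.items():
            if term in counts:
                tf = counts[term] / sum(counts.values())
                scores[name] = scores.get(name, 0.0) + tf * idf
    # Highest score first; ties broken by file name for stable output.
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:top_k]
```

Calling `search(build_index("wiki"), "context governance")` would return up to five `(relative_path, score)` pairs, where `wiki` is a placeholder directory name. Wrapping the two functions in a small `argparse` entry point is one way to expose them as the kind of CLI tool an LLM agent can call, and at the ~100 article scale described in the post, rebuilding the index on every query is likely cheap enough that no persistent index is needed.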