Anshuman Suri

845 posts

@iamgroot42

Research @datologyai | Previously Postdoc @KhouryCollege, Ph.D. @UVA | Interested in data quality x security & privacy.

New Datology Research: We expose "The Finetuner's Fallacy".

The standard approach to domain adaptation (pretrain on web data, finetune on your data) is leaving performance on the table. Mixing just 1-5% domain data into pretraining, then finetuning, produces a strictly better model:

◾ 1.75x fewer tokens to reach the same domain loss
◾ 1B SPT model outperforms a 3B finetuned-only model
◾ +6pts MATH accuracy at 200B pretraining tokens
◾ Less forgetting of general knowledge

Tested across chemistry, symbolic music, and formal math proofs. SPT wins on every metric.

Led by @_christinabaek and @pratyushmaini, with the full Datology team.

Zendaya in the new poster for ‘DUNE: PART THREE’ In theaters December 18.

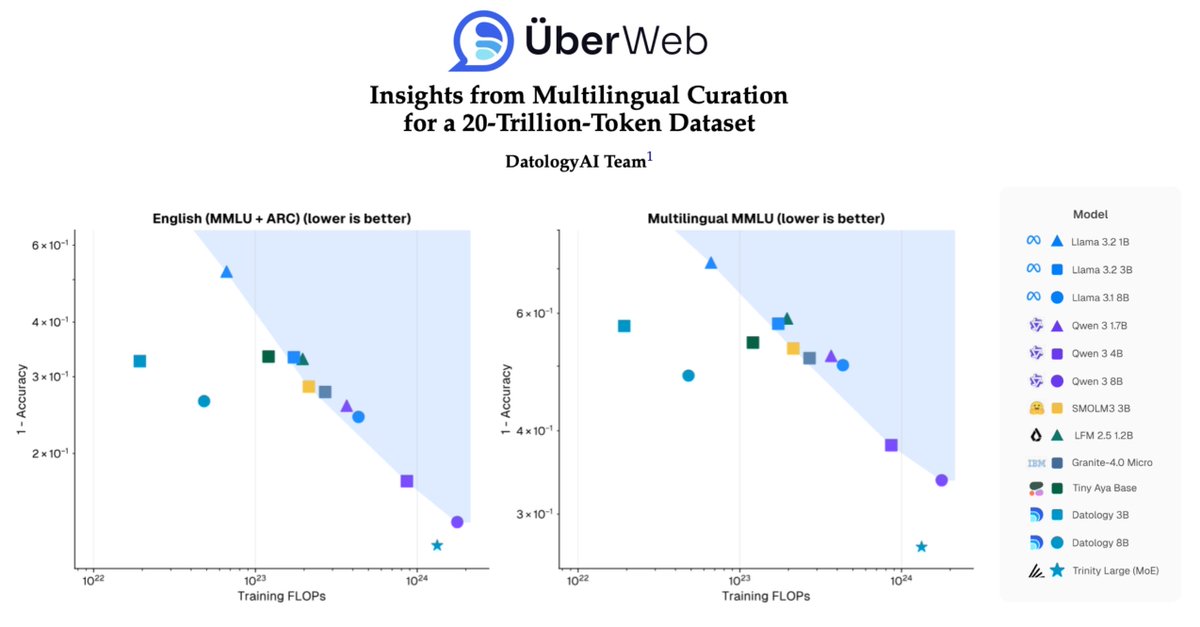

1/ People often think better multilingual models must come at the cost of English performance. Not true. The constraint isn’t capacity, it’s data quality, and we can fix it. Today @datologyAI shares ÜberWeb: a year of multilingual curation lessons, scaled to 20T+ tokens.

this week I have observed first hand the elite meme game possessed by @iamgroot42 .. truly a generational talent
