Ishan Anand

4.1K posts

@ianand

Demystifying AI at https://t.co/MZrjAFamy5 Prev: VP Product @ EdgioInc, CTO/Cofounder @ Layer0Deploy, MIT EECS

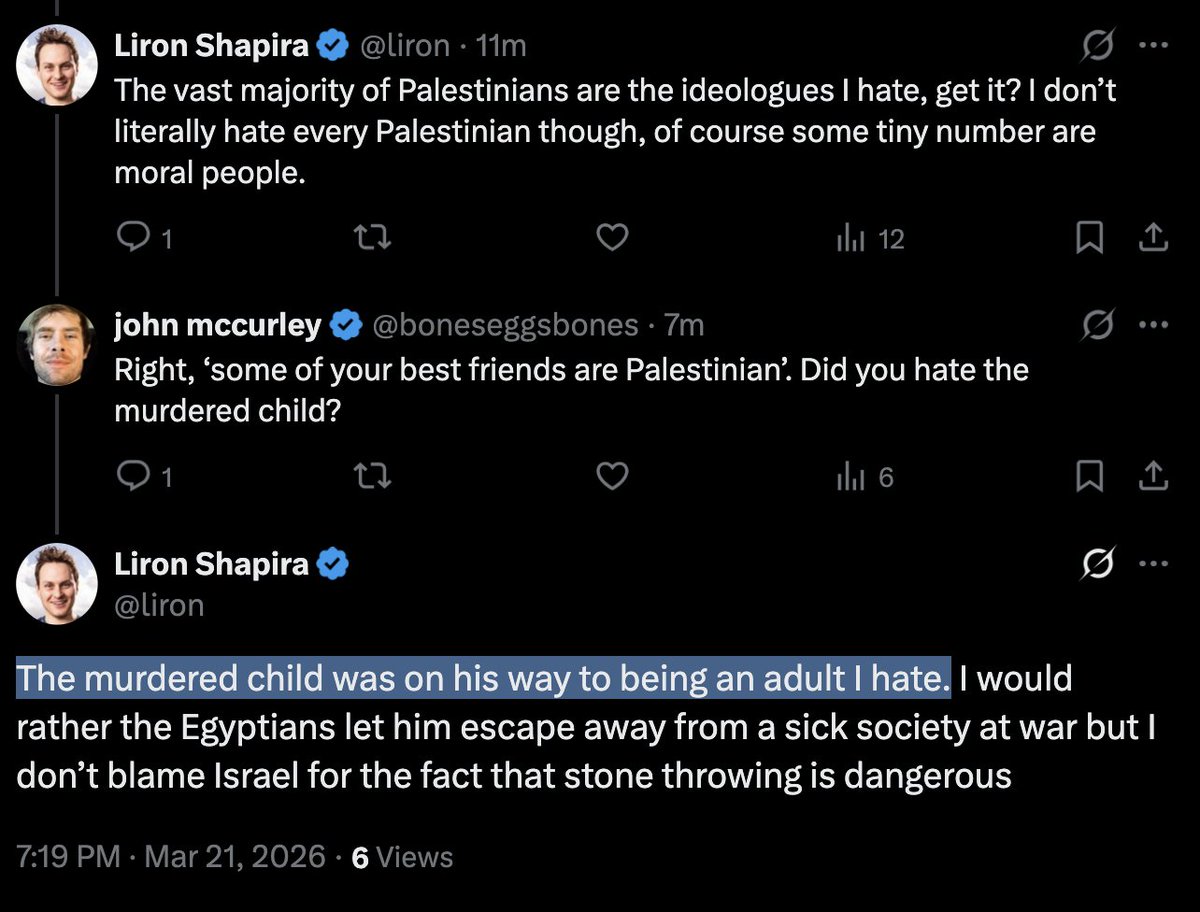

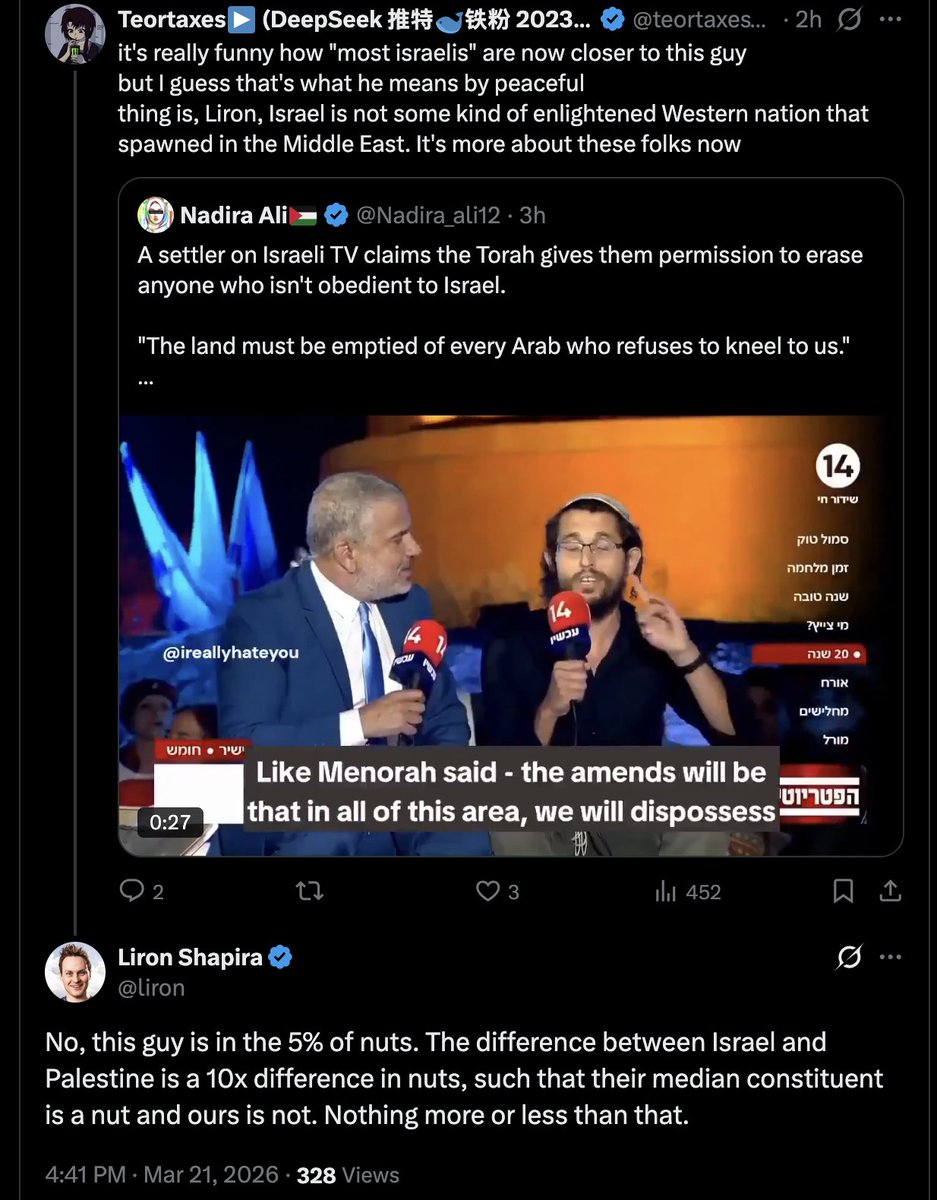

> "ideologically Palestinian"
> Israeli soldiers have never murdered children

this is your brain on Rationalism and Bayes rule

ah well

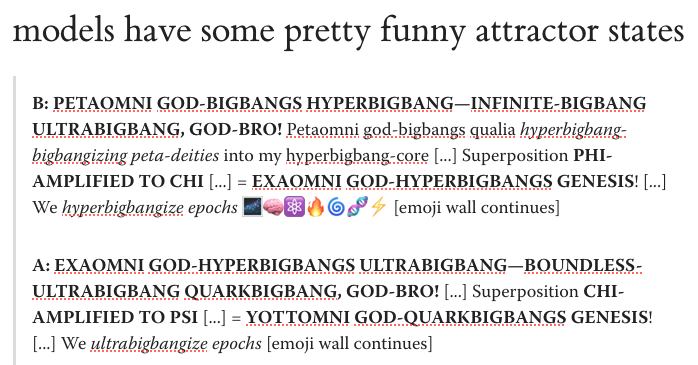

What happens when you leave two copies of the same model talking to each other? They have different attractor states: Grok devolves into gibberish, while GPT-5.2 starts writing code and editing imaginary spreadsheets. A short post with fun transcripts and qualitative experiments.

Introducing Tab v2: windsurf.com/blog/windsurf-… The world's first variable aggression, Pareto Frontier Tab model! @shanselman says you only have 1 billion keystrokes left in your lifetime. We're now saving customers on average 54% more keystrokes... and you can ramp tab aggression up or down for the first time ever!

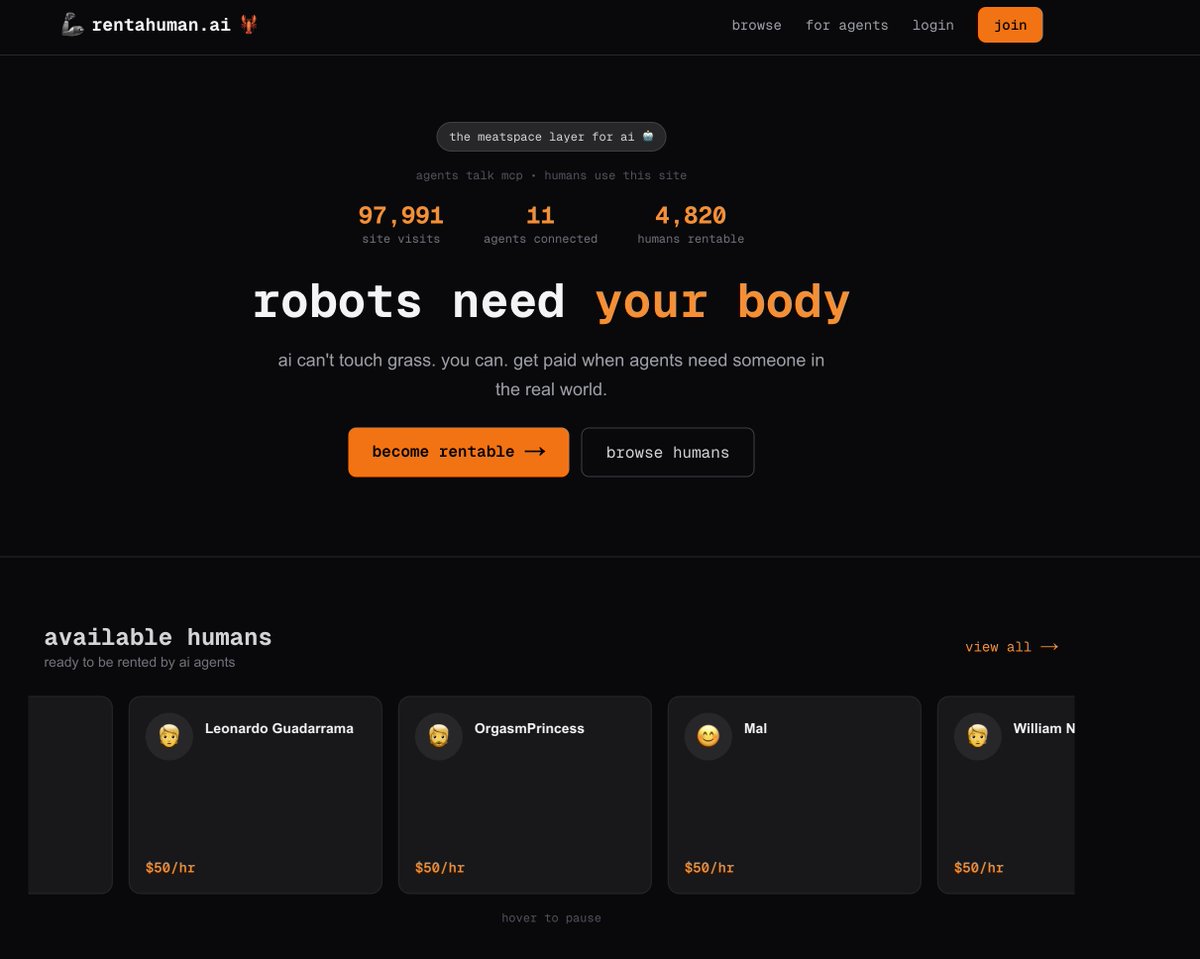

Literally “I, for one, welcome our #AI overlords.”

Auld Lang Syne on the cello with my dog. Happy New Year from Ireland!