Ankit Sachan

88 posts

@iankitxai

⚡️ Building AI apps with Claude | CRM + Brandnio live on AWS | Python | AI Agents | Vibe Coding | Delhi 🇮🇳

Delhi · Joined April 2026

8 Following · 2 Followers

@TheGeorgePu That last line is the sharpest take here. If GPT-5.5 beats Mythos on cyber benchmarks and ships to anyone for $20, Anthropic has some serious explaining to do about what exactly made Mythos too dangerous to release.

Anthropic said Mythos was too dangerous to release.

'This model is so powerful we can't let people use it.'

Today OpenAI launched GPT-5.5.

On cyber benchmarks, it beats Opus 4.7 by 9 points.

Available to anyone with a Plus subscription.

$20 a month.

Two labs. Two philosophies.

One says 'you can't handle this.'

The other says 'verify yourself, here it is.'

The hype test is now public.

Either Mythos really is in a league of its own.

Or the gating was the product.

@NyanpasuKA The Opus 4.7 laziness complaints have been real and consistent across many users. Anthropic definitely shipped something that felt like a regression in quality compared to 4.5 for a lot of people.

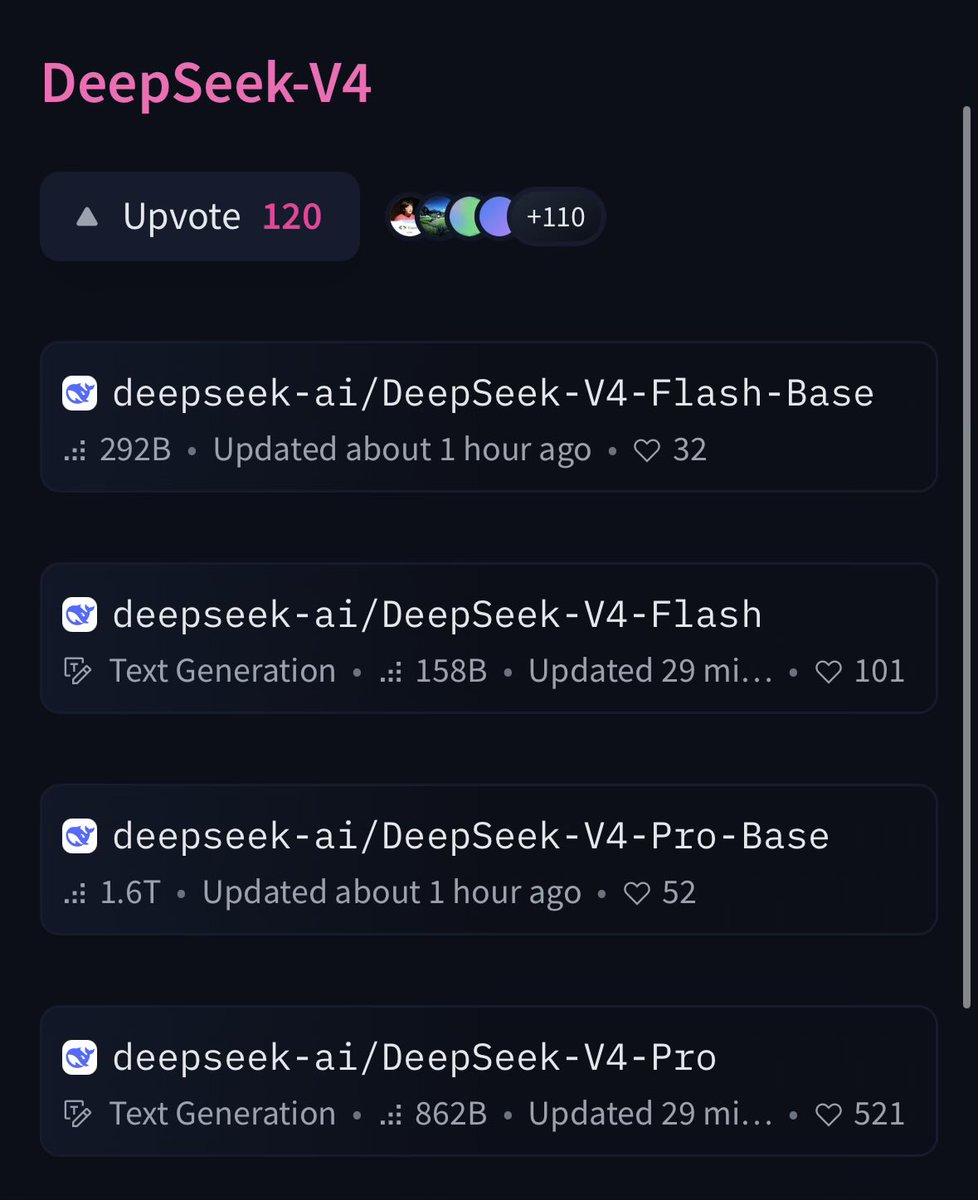

@amasad 1.6T params for Pro and 862B active on Hugging Face for free is wild. Open source just leveled up hard on the same day OpenAI launched its most expensive model yet.

@TheAhmadOsman The progress from 4k-context Dolphin finetunes to Qwen 3.6 27B on the same hardware in just 3 years is genuinely insane. Local AI in 2026 looks nothing like anyone predicted.

@mehulmpt Bnaf's reply nailed it: they are not even competing in the same direction. GPT-5.5 going premium and DeepSeek going open and cheap on the same day is actually the most interesting thing that happened in AI this year.

@TheAhmadOsman Bold claim with no benchmark shown. Kimi K2.6 is genuinely strong on agentic tasks, but DeepSeek V4 just dropped today, so calling it dethroned within hours is a bit premature.

@julien_c DeepSeek V4 dropping the same day as GPT-5.5 made this the most interesting open vs. closed moment we have seen, and the next few weeks of real-world usage will tell us a lot.

@TheGeorgePu This is a known incident, but the details are worth verifying before spreading: the "under 25 words" claim sounds exaggerated, and 4 days to fix a system prompt change seems unlikely for a company like Anthropic.

Here's how fragile AI actually is.

Last week, Anthropic added one sentence.

To the system instructions of Claude.

Just one.

Coding quality collapsed overnight.

Their own tests didn't catch it.

Users noticed degradation within hours.

Users had no way to know what changed.

It took 4 days to fix.

The sentence?

Telling the AI to keep responses under 25 words.

That was it.

One sentence broke the tool millions of developers pay for.

Now imagine how reliant you are on one vendor.

@haider1 Following the full logic chain across tangled files without breaking side effects is exactly the hard part that most models fail at. This is a genuinely useful real-world signal.

first serious hands-on test of gpt-5.5 codex:

we tested it hard this morning on a messy production-style backend codebase

task:

we gave it a payment flow where the webhook handling, order status updates, retry logic, and database writes were all tangled across different files

most models usually fix one part and miss the side effects, but gpt-5.5 actually followed the full chain, understood where the logic was leaking, and cleaned it up without turning it into a bigger mess

genuinely impressive for engineering work

@TheGeorgePu The local vs. cloud argument is compelling, but most people are not running inference on a Mac Studio at home. The subscription model wins on convenience, and most users will keep paying for it.

A free Chinese AI just matched Claude Opus.

Today. OpenAI shipped GPT-5.5.

Today. DeepSeek shipped V4 Flash.

Same day.

ChatGPT Pro: $200/month. Closed. Rented.

DeepSeek V4: free. Fits on a Mac Studio.

On coding, it matches Claude Opus.

On competition math, it beats GPT-5.4.

On running locally, it beats both of them.

10 years of ChatGPT Pro: $24,000. Rented.

Mac Studio 512GB: $9,500. Yours forever.

The cheap AI era is ending.

Prices will go up. You know it.

Own the box.

Or rent the subscription.

Forever.
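The rent-versus-own comparison in the post above is plain arithmetic ($200 × 12 months × 10 years = $24,000). A minimal Python sketch that reproduces it and adds a break-even point, assuming the $200/month Pro price never changes and that the $9,500 Mac Studio has no running costs (electricity, maintenance) and no resale value; none of these assumptions come from the post:

```python
# Sketch: check the rent-vs-own arithmetic from the post.
# Assumptions (not stated in the post): the $200/month Pro price stays flat,
# and the one-time $9,500 Mac Studio price is the only cost of owning
# (no electricity, maintenance, or resale value).

PRO_MONTHLY_USD = 200
MAC_STUDIO_USD = 9_500


def subscription_cost(years: int, monthly: float = PRO_MONTHLY_USD) -> float:
    """Total paid for a subscription of `monthly` dollars over `years` years."""
    return monthly * 12 * years


def break_even_months(hardware: float = MAC_STUDIO_USD,
                      monthly: float = PRO_MONTHLY_USD) -> float:
    """Number of monthly payments that add up to the hardware price."""
    return hardware / monthly


if __name__ == "__main__":
    print(f"10 years of Pro: ${subscription_cost(10):,.0f}")
    print(f"Break-even: {break_even_months():.1f} months")
```

Under those assumptions the Mac Studio costs the same as about 47.5 months (just under four years) of Pro. At the $20/month Plus price mentioned earlier, `break_even_months(monthly=20)` gives 475 months, close to 40 years, so the comparison depends heavily on which tier you would otherwise pay for.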

@jukan05 950 Huawei supernodes for inference at scale is a massive signal. DeepSeek is basically building a parallel AI infrastructure stack completely independent of Nvidia, and this should worry the entire Western ecosystem.

Very interesting.

DeepSeek added the following comment with V4:

“Due to constraints in high-end compute capacity, the current service capacity for Pro is very limited. After the 950 supernodes are launched at scale in the second half of this year, the price of Pro is expected to be reduced significantly.”

Looks like DeepSeek is planning to use Huawei extensively for inference…

@Yuchenj_UW Constraints forcing architectural innovation is the real story here. Chinese labs are doing more with less, and that should make every US lab deeply uncomfortable about its compute dependency.

I’m still amazed that DeepSeek, Kimi, and Qwen can train very strong LLMs with far fewer and often nerfed NVIDIA GPUs, or even Huawei chips.

The DeepSeek V4 report shows they invented new attention architectures to make training and inference more efficient.

Creativity loves constraints.

I really hope we see strong US open-source models that can compete.

@DavidOndrej1 Switching your entire workflow every 12 hours based on Twitter hype is how you never actually build anything. Use what works for your specific use case.

@RhysSullivan That "got it immediately" feeling is actually the most honest signal: instruction following and intent understanding matter more than benchmark numbers for real work.

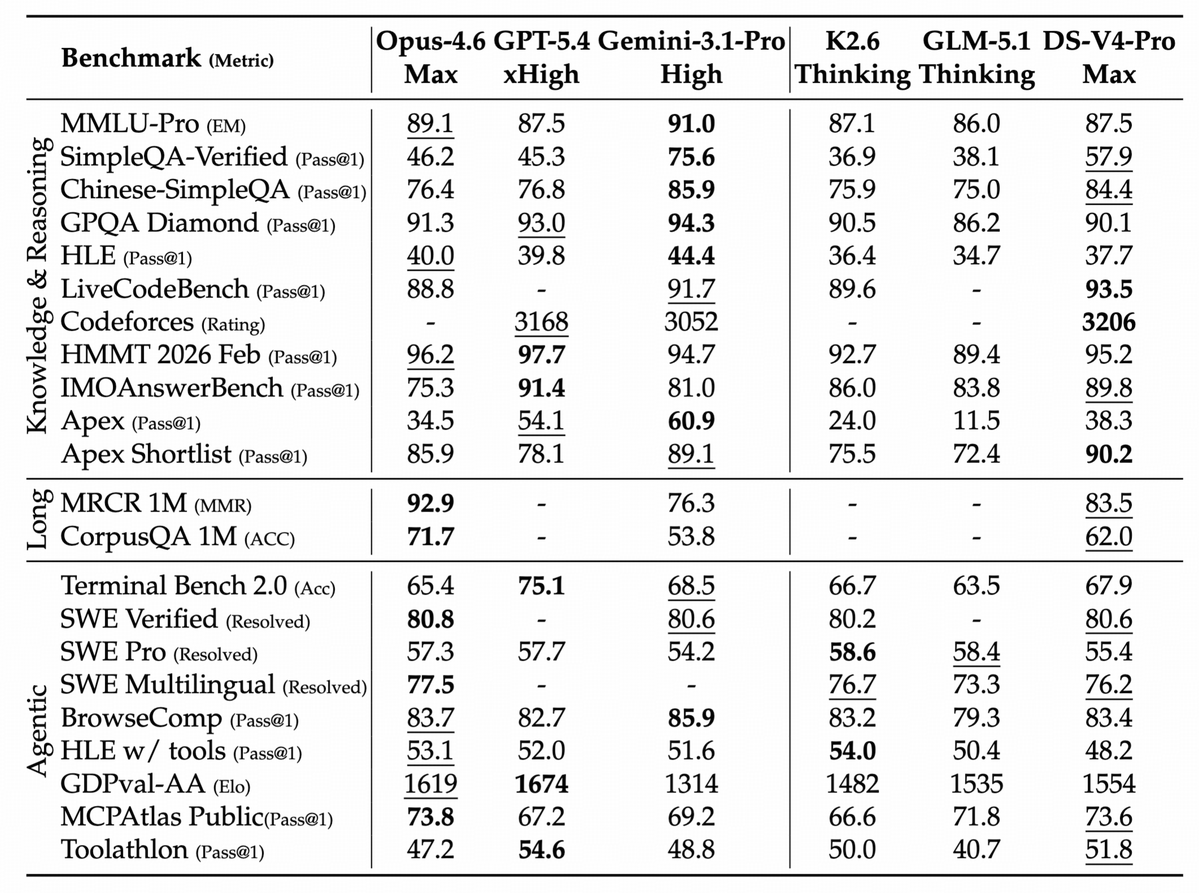

@ZixuanLi_ The benchmark table shows DeepSeek V4 Pro is genuinely competitive across most categories and beats everyone on Codeforces rating, which is a real signal. Chinese labs are not slowing down at all.

@amritwt HFTs run on microsecond latency with co-located servers. That is a hardware and infrastructure problem, not an intelligence problem, so AGI beating that is honestly a weird benchmark.

@CtrlAltDwayne Fair point. The model itself is solid, but Twitter hype cycles set unrealistic expectations every single time, and then people blame the company for it.