Ihor Stepanov

@ihor_step

I am the CEO and co-founder of Knowledgator. We are advancing the #information_extraction field with #opensource #AI models.

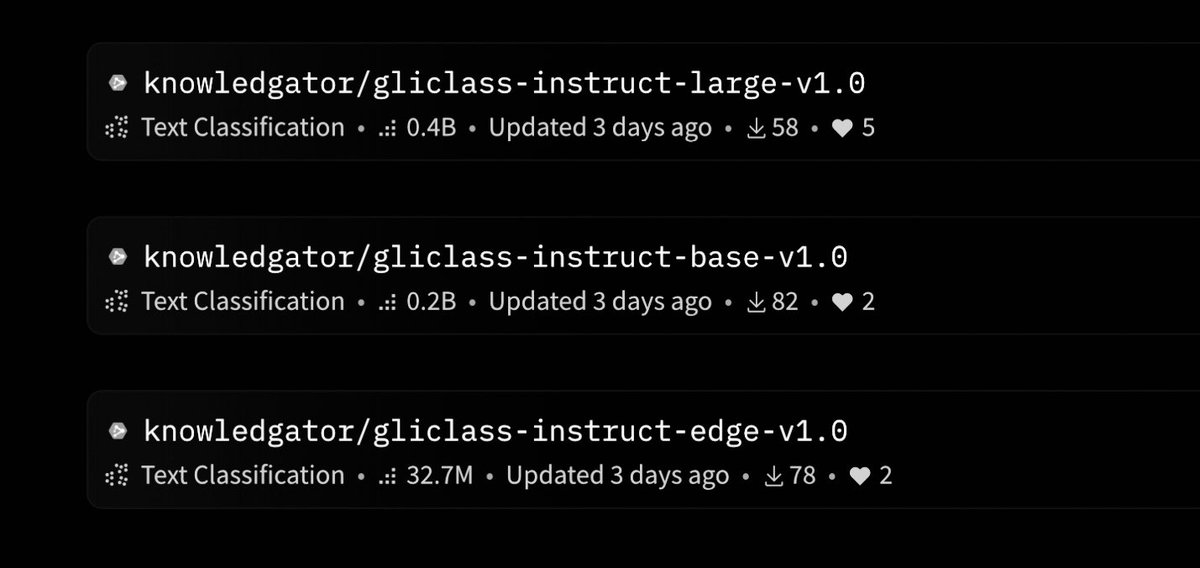

GLiClass-V3: A family of encoder-only models that match or exceed DeBERTa-v3-Large in zero-shot accuracy, while delivering up to 50× faster inference.

Core Design:
- Single-pass inference: No cross-encoder pairing needed. One forward pass handles all labels.
- LoRA adapters: Fine-tuned on logic tasks (e.g., Formal Logic Reasoning, Commonsense QA) for symbolic generalization without catastrophic forgetting.
- Edge-ready: gliclass-edge-v3.0 hits 97 ex/s on A6000, ideal for mobile and IoT.

GLiClass-V3 variants (gliclass-*):
(Built on DeBERTa, ModernBERT, and Ettin for edge deployment)
- large-v3.0: 70.0% avg F1 (best)
- base-v3.0: 65.6%
- modern-large: 60.8%
- edge-v3.0: 48.7% (fastest, Ettin-based)
- x-base: 57% F1 (EN), 42% (multilingual) for robust multilingual zero-shot generalization

Benchmarks (zero-shot, no fine-tuning):
- CR, SST2, IMDb: ~0.93–0.94 F1
- Outperforms GLiClass-v2 and cross-encoders (e.g., DeBERTa-v3-Large, RoBERTa)
- Scales to 128+ labels with massive speedup (DeBERTa-Large: 0.25 ex/s vs GLiClass: 82.6)

Use cases:
- Multi-label classification (e.g., topic, sentiment, spam)
- RAG reranking
- Privacy-safe on-device NLP

Built on DeBERTa and ModernBERT. Fully open-source.

pip install gliclass
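For anyone who wants to try the single-pass, multi-label setup described above, here is a minimal sketch. It assumes the `gliclass` package exposes `GLiClassModel` and `ZeroShotClassificationPipeline` as in its README, and that the edge model is published under the Hugging Face ID `knowledgator/gliclass-edge-v3.0`; the model ID, the example text, the labels, and the `top_labels` helper are illustrative assumptions, not taken from the post.

```python
from typing import Dict, List


def build_pipeline(model_id: str = "knowledgator/gliclass-edge-v3.0", device: str = "cpu"):
    """Load a zero-shot pipeline. The model ID is an assumption; check the model hub."""
    # Imported lazily so top_labels() below works without the packages installed.
    from gliclass import GLiClassModel, ZeroShotClassificationPipeline
    from transformers import AutoTokenizer

    model = GLiClassModel.from_pretrained(model_id)
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    return ZeroShotClassificationPipeline(
        model, tokenizer, classification_type="multi-label", device=device
    )


def top_labels(results: List[Dict], min_score: float = 0.5) -> List[str]:
    """Hypothetical helper: keep labels scoring at least min_score, highest first."""
    kept = [r for r in results if r["score"] >= min_score]
    ranked = sorted(kept, key=lambda r: r["score"], reverse=True)
    return [r["label"] for r in ranked]


def main() -> None:
    pipeline = build_pipeline()  # downloads weights on first use
    text = "The battery died after two days and support never replied."
    labels = ["positive", "negative", "spam", "hardware", "customer service"]
    # All candidate labels are passed together with the text in a single call;
    # [0] selects the results for the first (and only) input text.
    results = pipeline(text, labels, threshold=0.0)[0]
    print(top_labels(results))


# Call main() to run the demo; it needs `pip install gliclass` and network access.
```

Passing every label in one call is the point of the design: a cross-encoder would instead score each (text, label) pair separately, so its cost grows with the number of labels.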