Lingpeng Kong

@ikekong

Assistant Professor @ The University of Hong Kong, Previously Research Scientist @ DeepMind

🚀Building on the success of Dream 7B, we introduce Dream-VL and Dream-VLA, open VL and VLA models that fully unlock discrete diffusion’s advantages in long-horizon planning, bidirectional reasoning, and parallel action generation for multimodal tasks.

Happy to introduce my internship work at @Apple. We introduce CLaRa: Continuous Latent Reasoning, an end-to-end training framework that jointly trains retrieval and generation! 🧠📦 🔗 arxiv.org/pdf/2511.18659… #RAG #LLMs #Retrieval #Reasoning #AI

🚀 Thrilled to share our #NeurIPS2025 paper, DynaAct: Large Language Model Reasoning with Dynamic Action Spaces. A new view of test-time scaling: optimizing the action space itself, while providing a general MCTS acceleration framework for reasoning. 💻 github.com/zhaoxlpku/Dyna…

Diffusion LLM + Agents are 🔥 This is @_inception_ai's Diffusion LLM with @huggingface SmolAgents:

- Planning tool use
- Executing 20 web searches and parsing results
- Synthesizing the data

All in 3.5 seconds. With 10 searches it took only 1.6 seconds. Source on GitHub below.

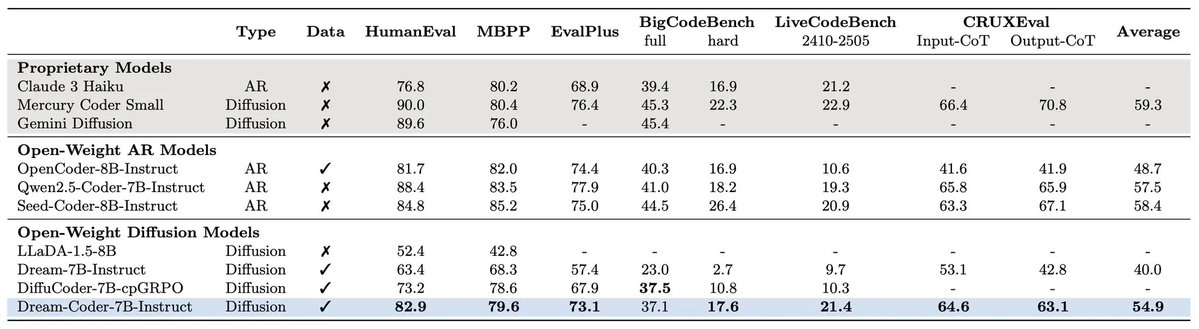

🚀 Thrilled to announce Dream-Coder 7B — the most powerful open diffusion code LLM to date.

Introducing Generative Interfaces - a new paradigm beyond chatbots. We generate interfaces on the fly to better facilitate LLM interaction, so no more passive reading of long text blocks. Adaptive and Interactive: creates the form that best adapts to your goals and needs!