How to Teach LLMs to Follow Instructions

I just shared a new tutorial video as part of the Build a Large Language Model From Scratch series!

In this one, we take a pre-trained decoder-only model and turn it into a small personal assistant that can handle free-form instruction-following tasks like answering questions, rewriting text, or generating short responses.

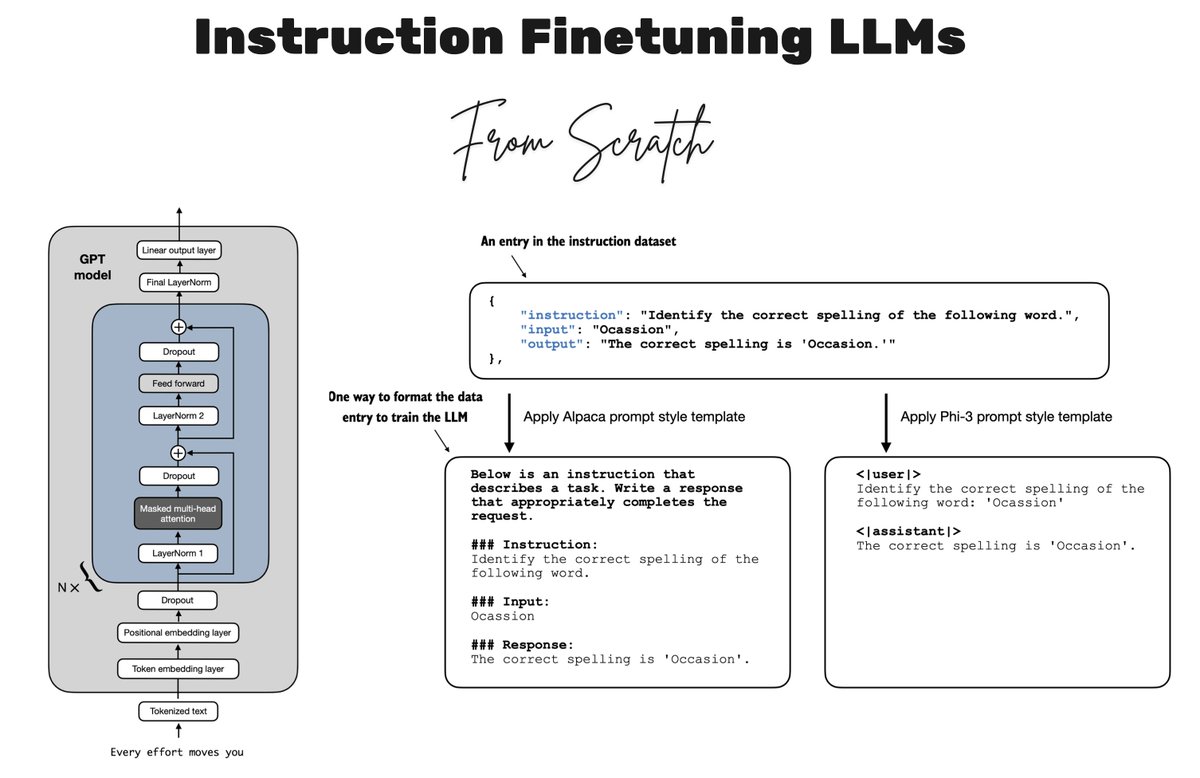

From my experience working with teams building LLM applications, I’ve noticed that many people still think instruction fine-tuning is complicated. But as you’ll see, we reuse the same loss function (and even the same training loop) as during pre-training. The only real difference? How we format the data.
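To make the data-formatting point concrete, here is a minimal sketch of turning one instruction record into a single training string. It assumes an Alpaca-style template and the field names `instruction`, `input`, and `output`; the function name `format_example` and the sample record are hypothetical and not necessarily what the video uses.

```python
def format_example(entry):
    """Turn one instruction record into a single training string (Alpaca-style)."""
    prompt = (
        "Below is an instruction that describes a task. "
        "Write a response that appropriately completes the request."
        f"\n\n### Instruction:\n{entry['instruction']}"
    )
    # The input field is optional; skip the section when it is empty.
    if entry.get("input"):
        prompt += f"\n\n### Input:\n{entry['input']}"
    # The response is appended to the prompt, so the model still just
    # predicts the next token over one continuous piece of text.
    return prompt + f"\n\n### Response:\n{entry['output']}"


# Hypothetical record, for illustration only.
example = {
    "instruction": "Rewrite the sentence in passive voice.",
    "input": "The chef cooked the meal.",
    "output": "The meal was cooked by the chef.",
}
text = format_example(example)
print(text)
```

Once every record is a plain string like this, it can be tokenized and fed to the same next-token training loop used for pre-training.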

In this video, I walk through:

1. What instruction fine-tuning actually is

2. How to format data into prompt/response pairs using common templates

3. A lightweight dataset to demonstrate the process

4. Practical tips like dynamic padding, loss masking, and clean end-of-text handling
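The practical tips in the last item can be sketched in a few lines of plain Python. This is an illustration under assumptions, not the video's exact code: it assumes GPT-2's `<|endoftext|>` token (id 50256) doubles as the padding token, and it uses -100 as the masked label because that is the default `ignore_index` of PyTorch's cross-entropy loss. The name `collate` is hypothetical.

```python
IGNORE_INDEX = -100  # label value skipped by PyTorch's cross-entropy loss by default
EOT_ID = 50256       # GPT-2's <|endoftext|> id, assumed here as end and pad token


def collate(batch):
    """Pad token-id lists to the longest sequence in this batch only."""
    # +1 because every sequence gets one end-of-text token appended.
    max_len = max(len(ids) for ids in batch) + 1
    inputs, targets = [], []
    for ids in batch:
        padded = ids + [EOT_ID] * (max_len - len(ids))
        # Targets are the inputs shifted left by one (next-token prediction).
        inp, tgt = padded[:-1], padded[1:]
        # Keep the first end-of-text target so the model learns to stop;
        # mask every later padding position out of the loss.
        first_eot = len(ids) - 1  # index in tgt of the appended end-of-text token
        tgt = tgt[: first_eot + 1] + [IGNORE_INDEX] * (len(tgt) - first_eot - 1)
        inputs.append(inp)
        targets.append(tgt)
    return inputs, targets


inputs, targets = collate([[1, 2, 3], [4, 5]])
print(inputs)
print(targets)
```

Because padding is computed per batch, short batches stay short instead of being padded to a global maximum length. This sketch masks only padding; masking the instruction tokens as well is a common variation.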

The model we train here is not a ChatGPT competitor, but it is small and fast, which makes it ideal for educational use and a great way to understand the principles behind instruction-following LLMs.

The full walkthrough is available here: youtube.com/watch?v=4yNswv…
