Inferia AI

@inferiaAI

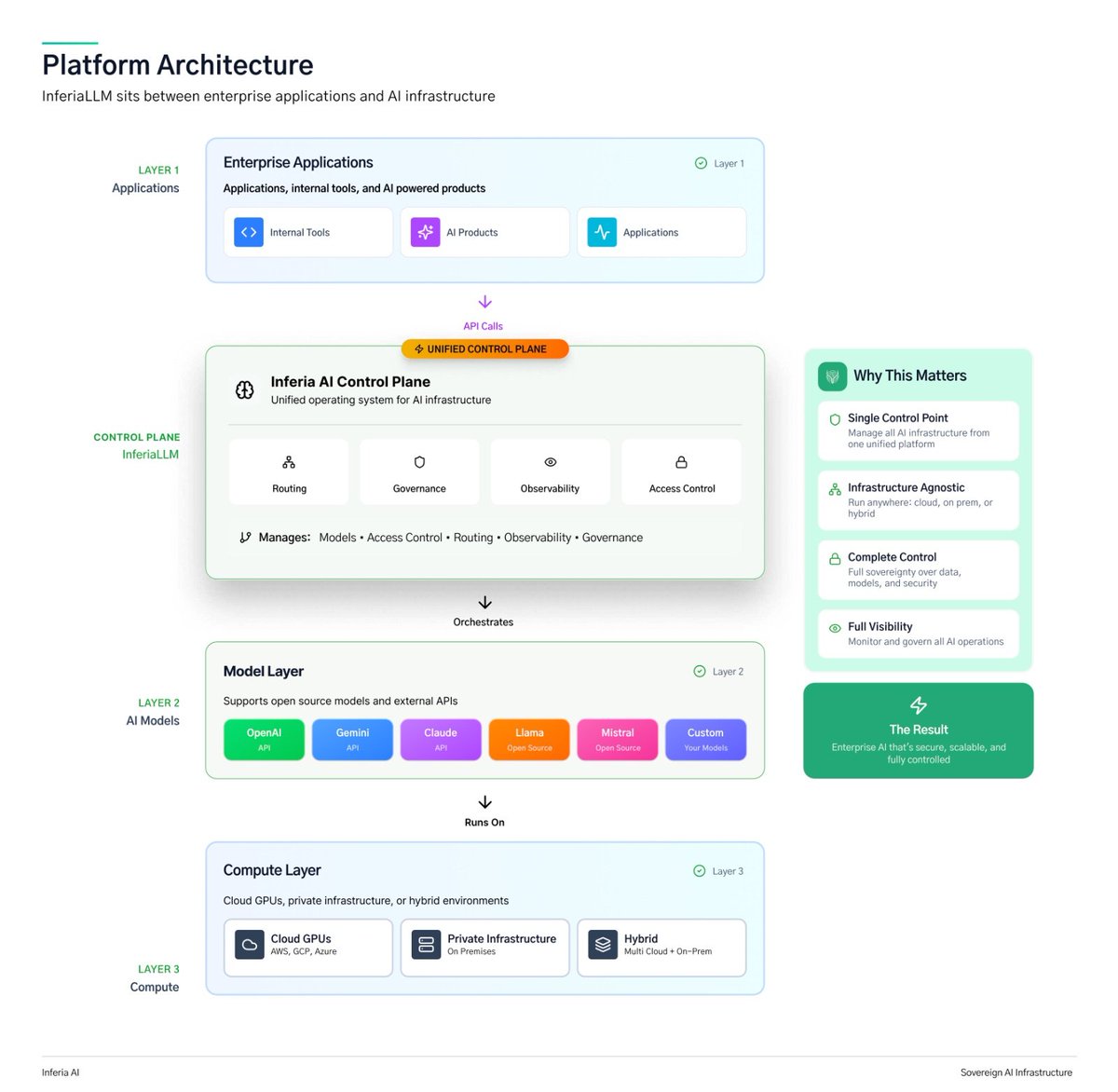

We build research-driven infrastructure for enterprise-grade private LLM inference and GenAI systems.

Nvidia, Amazon, and Google will have to divest from Anthropic if Hegseth gets his way. This is simply attempted corporate murder. I could not possibly recommend investing in American AI to any investor; I could not possibly recommend starting an AI company in the United States.

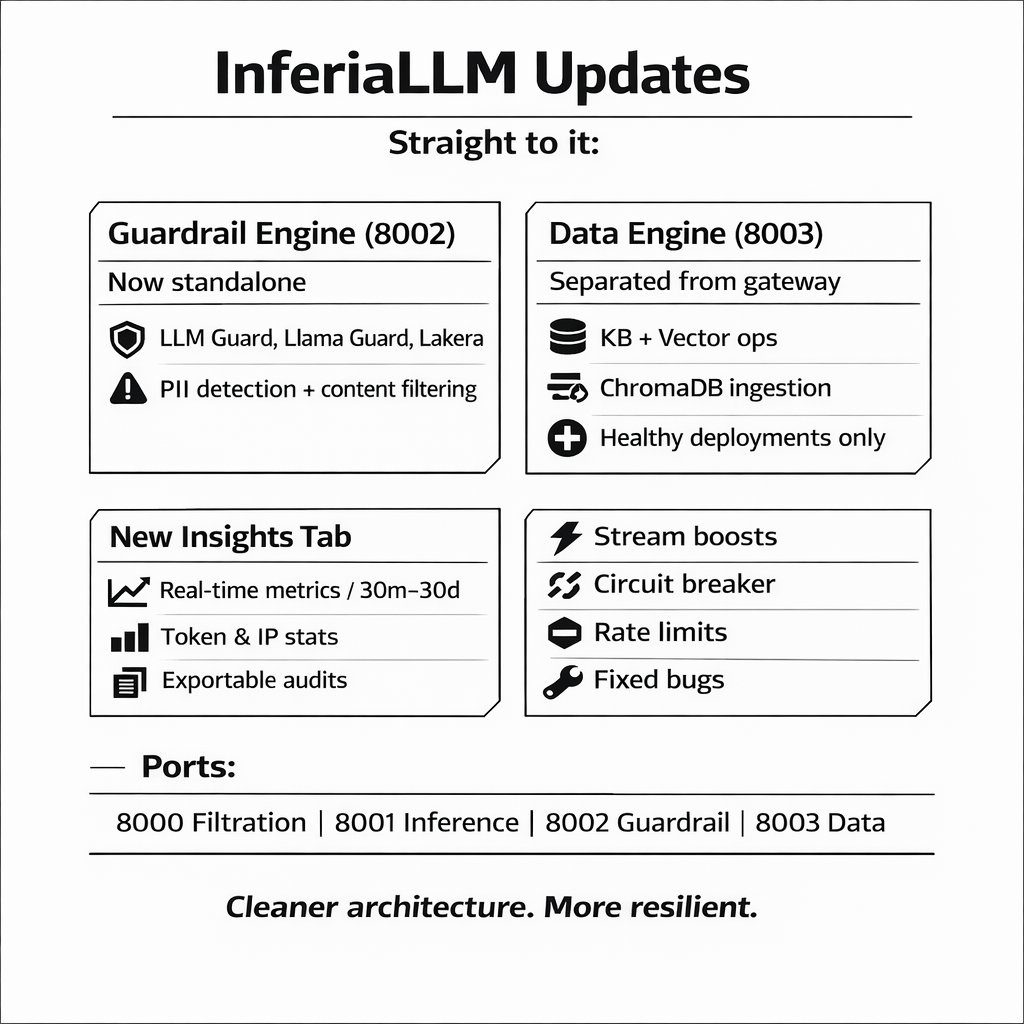

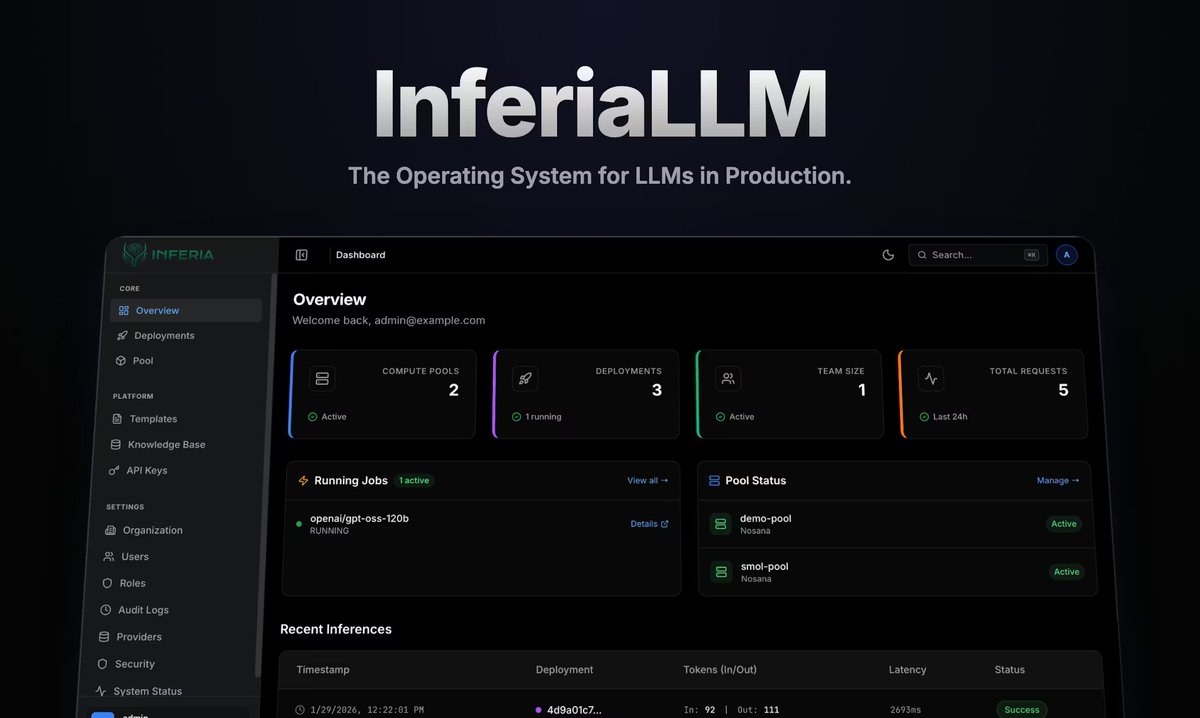

LLMs are powerful. But power without control breaks in production. Something is coming for teams running LLMs at scale. Stay tuned. InferiaLLM: The Operating System for LLMs in Production ⏳

AI deployment shouldn’t take weeks. Today at 5PM CET, we go live with @inferiaAI to show how you can deploy any model in under 60 seconds, powered by Nosana’s decentralized GPU network. Set a reminder! x.com/i/broadcasts/1…