Srikar

@Ryansikorski10 All tech is applied demonology. Paul Davies, *The Demon in the Machine*. As usual, you're on point 🎲🎲 💯

Trying to interpret how a neural network does what it does? Activations tell you whether a neuron responded. Contributions tell you whether it mattered! New paper from me, @Zaki_Alaoui1, @sunnyliu1220, @SuryaGanguli, and Steve Baccus: arxiv.org/abs/2603.06557
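Here is a toy sketch of the difference. It assumes a single linear output unit and takes a neuron's contribution to be its activation times the weight the output places on it, so the contributions sum to the output. That definition, the function name `contributions`, and all of the numbers are hypothetical. The paper's own definition of contribution may differ.

```python
import numpy as np


def contributions(activations, readout_weights):
    """Per-neuron contribution to a linear readout y = sum_i w_i * a_i.

    Illustrative (assumed) definition only: contribution_i = w_i * a_i.
    """
    return np.asarray(readout_weights) * np.asarray(activations)


# Hypothetical toy values: three neurons feeding one linear output unit.
acts = np.array([5.0, 1.0, 2.0])      # how strongly each neuron responded
weights = np.array([0.0, 3.0, -1.0])  # how the output unit weights each neuron

contrib = contributions(acts, weights)
most_active = int(np.argmax(acts))                  # neuron 0 responds most
most_influential = int(np.argmax(np.abs(contrib)))  # neuron 1 matters most
output = contrib.sum()                              # equals weights @ acts
```

Neuron 0 has the largest activation, but its outgoing weight is zero, so it contributes nothing. The weaker neuron 1 is the one that drives the output.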

🧠📈A new foundation model for #neuroscience! In the latest Deeper Learning blog, @yuven_duan and @KanakaRajanPhD describe their state-of-the-art POCO model, which accurately forecasts neural dynamics across individuals and species. bit.ly/4afqx5A #NeuroAI

Perfect title, no notes.

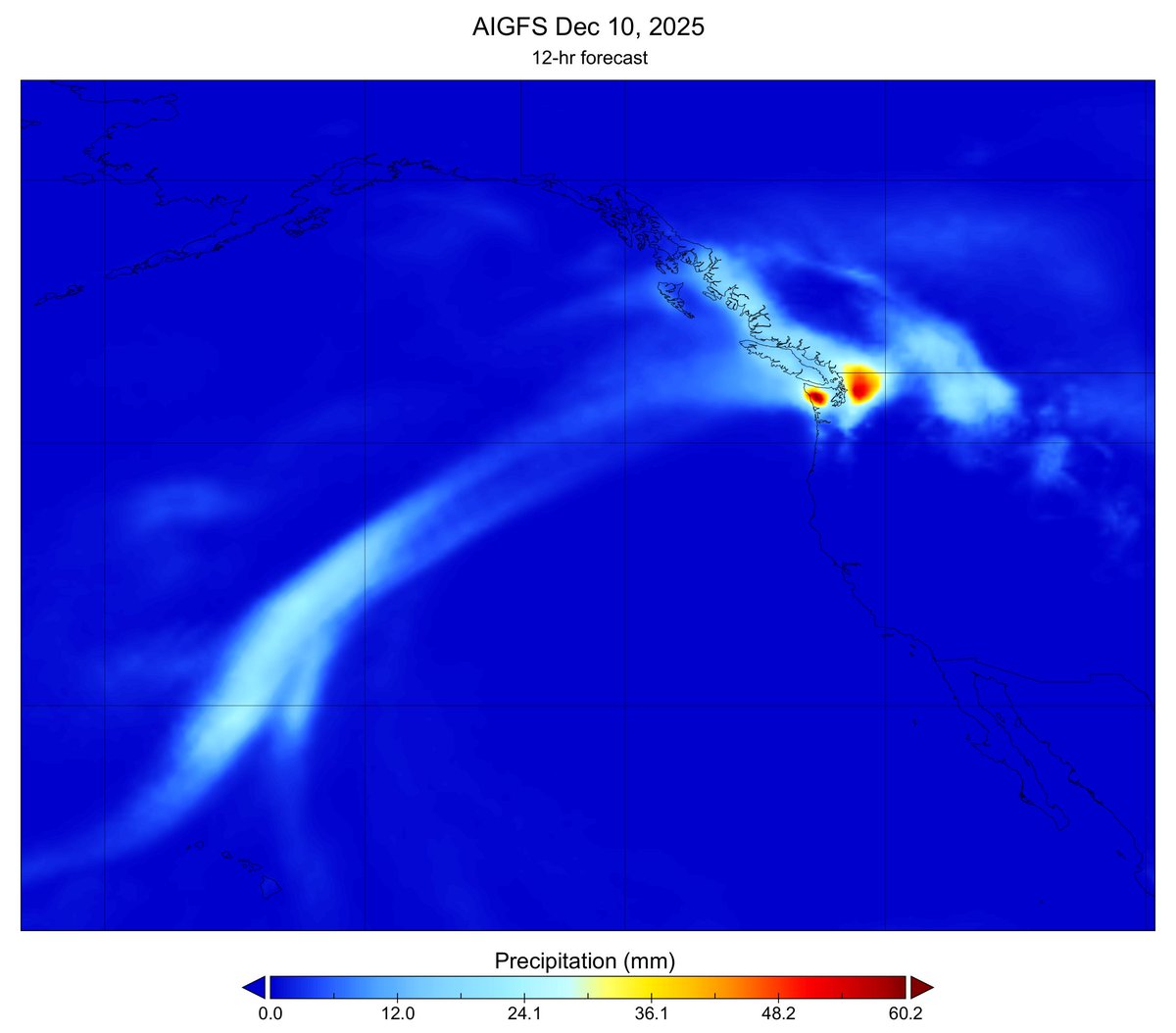

Take two large random matrices and linearly interpolate between them in several hundred steps. Compute the eigenvalues of each interpolated matrix, then plot them in the complex plane. The result is shown here. Made with #python #numpy #matplotlib
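Here is a minimal sketch of that recipe using the same tools. The post doesn't say which distribution or sizes were used, so these are all assumptions for illustration:

- a matrix size of 200 and 300 interpolation steps;
- Gaussian entries scaled by 1/√n;
- the seed;
- the function names `interpolated_eigenvalues` and `plot_eigenvalues`.

```python
import numpy as np


def interpolated_eigenvalues(n=200, steps=300, seed=0):
    """Eigenvalues of (1 - t) * A + t * B for `steps` values of t in [0, 1].

    Assumption: A and B have i.i.d. standard normal entries scaled by
    1/sqrt(n), which keeps the spectra roughly inside the unit disk.
    Returns (ts, eigs) with eigs a complex array of shape (steps, n).
    """
    rng = np.random.default_rng(seed)
    scale = 1.0 / np.sqrt(n)
    a = rng.standard_normal((n, n)) * scale
    b = rng.standard_normal((n, n)) * scale
    ts = np.linspace(0.0, 1.0, steps)
    eigs = np.empty((steps, n), dtype=complex)
    for k, t in enumerate(ts):
        # Straight-line interpolation between the two endpoint matrices.
        m = (1.0 - t) * a + t * b
        eigs[k] = np.linalg.eigvals(m)
    return ts, eigs


def plot_eigenvalues(ts, eigs, filename="eigen_interp.png"):
    """Scatter every eigenvalue in the complex plane, colored by t."""
    import matplotlib
    matplotlib.use("Agg")  # render straight to a file, no display needed
    import matplotlib.pyplot as plt

    # One color value per eigenvalue, matching the row-major ravel of eigs.
    colors = np.repeat(ts, eigs.shape[1])
    fig, ax = plt.subplots(figsize=(6, 6))
    ax.scatter(eigs.real.ravel(), eigs.imag.ravel(), c=colors, s=0.2, cmap="viridis")
    ax.set_aspect("equal")
    ax.set_xlabel("Re(λ)")
    ax.set_ylabel("Im(λ)")
    fig.savefig(filename, dpi=200)
    plt.close(fig)
```

For example, `ts, eigs = interpolated_eigenvalues()` followed by `plot_eigenvalues(ts, eigs)` writes the scatter plot to `eigen_interp.png`. Coloring each point by t lets you follow how the spectrum moves as the matrix slides from A to B.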