Pinned Tweet

Timmy Ghiurau

4.6K posts

@itzik009

AI × XR × Culture | Co-founder @thepointlabs & MidBrain | ex-Volvo Cars innovation lead | Teaching machines to make sense. Nerd by day rock star by night 🎸🎸🎸

Copenhagen, Denmark · Joined September 2011

2K Following · 1.6K Followers

@garrytan +1 and I’d add: memory & learning.

Orchestration gets you execution, but long-term value comes from systems that actually improve over time.

@Vtrivedy10 Harnesses are great for:

- tool use

- execution

- structuring context

But they don’t answer:

“what did the system actually learn?”

Memory + continual learning needs to sit alongside the harness, not inside it.

@Tocelot Yes - we built long-horizon agents in Minecraft.

Funny enough, it’s one of the clearest environments to see the core issue: not intelligence, but lack of memory and adaptation over time.

Creation is play. That’s been the core loop in Minecraft, Civilization, Stardew Valley, and countless other great games.

The only difference now is that you use English as the programming language :)

Danny Limanseta@DannyLimanseta

I don't care if people call it AI slop. Vibe coding games is fun. It's become my main hobby now, and no one can take that away from me.

Timmy Ghiurau reposted

speedrun ❤️ founders inc

Hubert Thieblot@hthieblot

In 2026, I’m inviting 1,000 founders to San Francisco to start their companies. Are you in?

This is super interesting.

Feels like we’re converging on the same core idea:

→ experience needs to become reusable structure (skills, trajectories, patterns)

What’s still missing IMO is how that actually updates behavior over time, not just improves retrieval or planning.

Otherwise we risk:

learning artifacts ≠ learning systems

We’ve been exploring this from a memory + continual learning angle - especially how agents internalize experience, not just reuse it.

Would love your take - we just published a paper on this: arxiv.org/abs/2603.15599

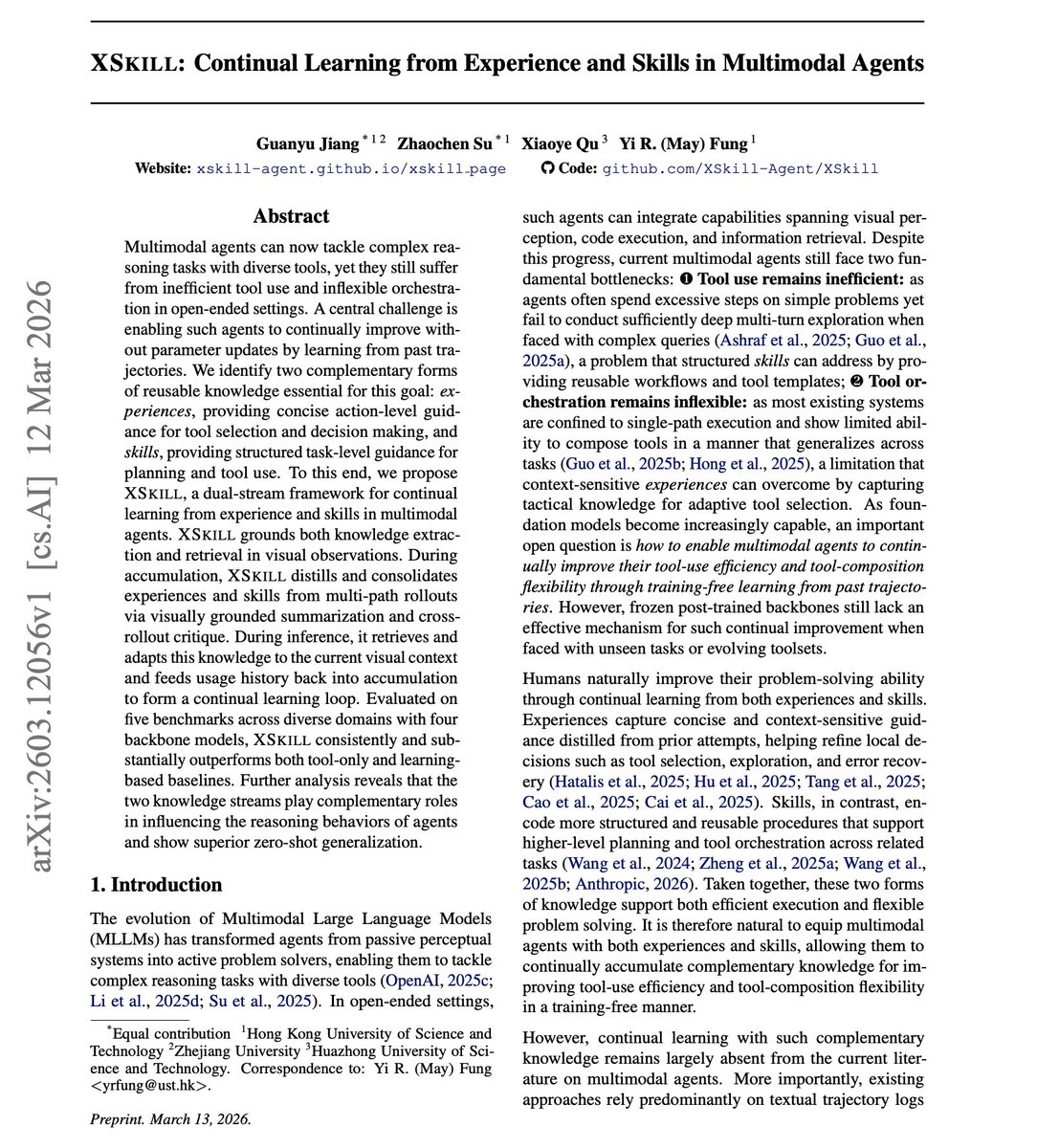

// Continual Learning from Experience and Skills //

Skills are so good when you combine them properly with MCP & CLIs.

I have found that Skills can significantly improve tool usage of my coding agents.

The best way to improve them is to regularly document improvements, patterns, and things to avoid.

Self-improving skills don't work that well (yet).

Check out this related paper on the topic:

It introduces XSkill, a dual-stream continual learning framework.

Agents distill two types of reusable knowledge from past trajectories: experiences for action-level tool selection, and skills for task-level planning and workflows.

Both are grounded in visual observations.

During accumulation, agents compare successful and failed rollouts via cross-rollout critique to extract high-quality knowledge. During inference, they retrieve and adapt relevant experiences and skills to the current visual context.

Evaluated across five benchmarks with four backbone models, XSkill consistently outperforms baselines. On Gemini-3-Flash, the average success rate jumps from 33.6% to 40.3%. Skills reduce overall tool errors from 29.9% to 16.3%.

Agents that accumulate and reuse knowledge from their own trajectories get better over time without parameter updates.

I have now seen two papers this week with similar ideas.

Paper: arxiv.org/abs/2603.12056

Learn to build effective AI agents in our academy: academy.dair.ai
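The dual-stream idea in the XSkill summary above can be illustrated with a toy sketch. Everything here is an assumption made for illustration: the `KnowledgeBank` class, the `(situation, tool)` step format, and the very crude "critique" that just compares one successful and one failed rollout. It ignores the visual grounding the paper describes and is not the paper's implementation.

```python
from dataclasses import dataclass, field
from typing import Optional

Step = tuple[str, str]  # hypothetical format: (situation, tool used)


@dataclass
class KnowledgeBank:
    # Action-level stream: situation -> tool that worked there.
    experiences: dict[str, str] = field(default_factory=dict)
    # Task-level stream: task type -> ordered workflow of tools.
    skills: dict[str, list[str]] = field(default_factory=dict)

    def accumulate(self, task_type: str,
                   success: list[Step], failure: list[Step]) -> None:
        """Toy cross-rollout critique: keep what the success did differently."""
        failed_choice = dict(failure)
        for situation, tool in success:
            # Record an experience only where the failed rollout chose otherwise.
            if failed_choice.get(situation, tool) != tool:
                self.experiences[situation] = tool
        # The successful tool sequence becomes the workflow for this task type.
        self.skills[task_type] = [tool for _, tool in success]

    def retrieve(self, task_type: str,
                 situation: str) -> tuple[list[str], Optional[str]]:
        """Return (workflow, tool hint) for the current task and situation."""
        return self.skills.get(task_type, []), self.experiences.get(situation)
```

Nothing in the sketch touches model weights: the agent's behavior changes only through what `retrieve` hands back, which is the "without parameter updates" point in the summary.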

@rohit4verse Harnesses enable execution.

But they don’t solve:

→ memory

→ learning

→ behavior change over time

Still missing:

experience → memory → adaptation

We just wrote about this in our latest paper: arxiv.org/abs/2603.15599

After 10 years at Volvo Cars, I left to co-found MidBrain.

AI doesn’t fail because it lacks intelligence.

It fails because it doesn’t remember.

Over the past year, we’ve explored this across very different environments:

- AI agents in Minecraft

- Long-running AI companions

- Human behavior simulation in robotics

Different domains. Same failure mode.

AI agents process information -

but they don’t improve from experience.

Today, most AI systems are:

Stateless across sessions

Dependent on large context windows

Repeating the same mistakes

Retrained in expensive batch cycles

They retrieve the past.

But they don’t learn from it.

What’s often called “memory” today is:

store → retrieve → inject → re-run

This improves context.

It does not change behavior.
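A minimal sketch of that store → retrieve → inject → re-run loop, assuming a toy word-overlap store (`ReplayMemory`) and a frozen stand-in model (`policy`). Both names are hypothetical and this is not MidBrain's code. It only illustrates the claim: the prompt grows as notes accumulate, while the policy itself never changes.

```python
from dataclasses import dataclass, field


@dataclass
class ReplayMemory:
    """Hypothetical store: keeps raw notes, retrieves by word overlap."""
    notes: list[str] = field(default_factory=list)

    def store(self, note: str) -> None:
        self.notes.append(note)

    def retrieve(self, query: str, k: int = 2) -> list[str]:
        q = set(query.lower().split())
        scored = [(len(q & set(n.lower().split())), n) for n in self.notes]
        ranked = sorted(scored, key=lambda s: s[0], reverse=True)
        return [n for score, n in ranked[:k] if score > 0]


def policy(prompt: str) -> str:
    """Stand-in for a frozen model: same prompt in, same answer out."""
    return f"answer({len(prompt)} chars of prompt)"


def run(memory: ReplayMemory, task: str) -> str:
    context = memory.retrieve(task)        # retrieve
    prompt = "\n".join(context + [task])   # inject
    output = policy(prompt)                # re-run
    memory.store(f"{task} -> {output}")    # store
    return output
```

Running the same task twice gives a different answer only because the prompt is longer the second time; `policy` is byte-for-byte the same function.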

At MidBrain, we’re building the missing layer:

Memory + continual learning for long-running AI systems

Our thesis:

Intelligence is not just inference.

It is the ability to change through experience.

Today, we’re sharing our first step:

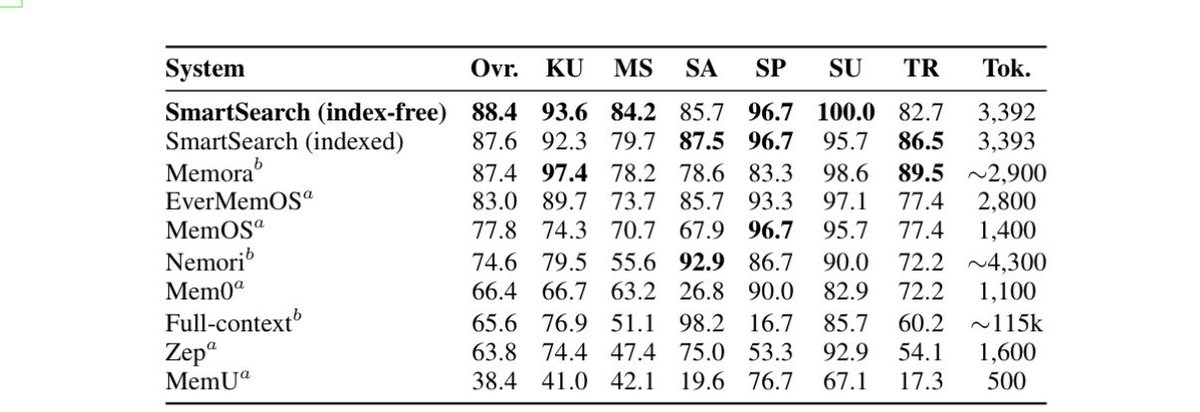

SmartSearch - memory retrieval for long-horizon agents

93.5% on LoCoMo (SOTA)

88.4% on LongMemEval-S (SOTA)

LLM-free retrieval (CPU-only)

~8.5× fewer tokens

Paper: arxiv.org/abs/2603.15599

This work shows:

why retrieval alone doesn’t lead to learning

why memory must consolidate into procedural behavior

why today’s training paradigm is fundamentally inefficient

(2–5× wasted compute, repeated data movement, batch retraining loops)

This is the starting point.

From:

retrieval → memory → learning → behavior

Toward:

AI agents that run for years, not minutes

One identity across chat, code, and physical environments

We’re opening early design partnerships

for teams building long-running agents (copilots, coding agents, companions)

midbrain.ai

We tested SmartSearch on the Linux kernel (~2GB), comparing it against a standard LLM with tool use (grep, etc.).

As tasks get longer, SmartSearch keeps reasoning grounded by ranking the most relevant memories instead of expanding context. Using our index-free approach, we avoid the massive storage overhead of traditional semantic indices while maintaining stable performance across long execution chains.

Read more: arxiv.org/abs/2603.15599
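To make "ranking the most relevant memories" without a prebuilt index or an LLM more concrete, here is a rough sketch under stated assumptions. It is not SmartSearch's algorithm, which these posts don't describe; `rank_memories` and its scoring are hypothetical. It scans the raw memories at query time and weights rarer query terms more heavily (an IDF-style weight computed on the fly), using only the standard library on CPU.

```python
import heapq
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9_]+", text.lower())


def rank_memories(query: str, memories: list[str], k: int = 3) -> list[str]:
    """Scan memories at query time (no stored index) and return the top k."""
    docs = [Counter(tokenize(m)) for m in memories]
    n = len(docs)
    q_terms = set(tokenize(query))
    # Rarer query terms get a larger weight; computed per query, not persisted.
    weight = {
        t: math.log(1 + n / (1 + sum(1 for d in docs if t in d)))
        for t in q_terms
    }
    scores = [sum(weight[t] * d[t] for t in q_terms) for d in docs]
    top = heapq.nlargest(k, range(n), key=lambda i: scores[i])
    # Drop memories that share no terms with the query.
    return [memories[i] for i in top if scores[i] > 0]
```

Only the top-k memories would be passed on to the model, so the context stays a fixed size however long the task runs. The trade-off in this toy version is a full scan on every query.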

SOTA on LoCoMo.

SOTA on LongMemEval.

No LLM.

No index.

~8.5× fewer tokens.

arxiv.org/abs/2603.15599

We’re SOTA on multiple memory benchmarks.

Without using an LLM.

Without a vector index.

Without blowing up context.

SmartSearch:

• 93.5% LoCoMo

• 88.4% LongMemEval-S

• ~8.5× fewer tokens

The takeaway:

Most “memory” systems just replay context.

They don’t change behavior.

arxiv.org/abs/2603.15599

We’re opening design partnerships

for teams building long-running agents:

midbrain.ai

Today we’re releasing our first step:

SmartSearch

93.5% LoCoMo (SOTA)

88.4% LongMemEval-S (SOTA)

LLM-free (CPU-only)

~8.5× fewer tokens

arxiv.org/abs/2603.15599

Timmy Ghiurau reposted

New course: Agent Memory: Building Memory-Aware Agents, built in partnership with @Oracle and taught by @richmondalake and Nacho Martínez.

Many agents work well within a single session but their memory resets once the session ends. Consider a research agent working on dozens of papers across multiple days: without memory, it has no way to store and retrieve what it learned across sessions. This short course teaches you to build a memory system that enables agents to persist memory and thereby learn across sessions.

You'll design a Memory Manager that handles different memory types, implement semantic tool retrieval that scales without bloating the context, and build write-back pipelines that let your agent autonomously update and refine what it knows over time.

Skills you'll gain:

- Build persistent memory stores for different agent memory types

- Implement a Memory Manager that orchestrates how your agent reads, writes, and retrieves memory

- Treat tools as procedural memory and retrieve only relevant ones at inference time using semantic search
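The three skills listed above could fit together roughly as follows. This is a sketch, not the course's code: `MemoryManager`, `Tool`, and every method name are assumptions. Plain word overlap stands in for the semantic (embedding-based) search the course mentions, so the example runs with the standard library alone.

```python
from dataclasses import dataclass, field
from typing import Callable


def overlap(a: str, b: str) -> float:
    """Jaccard word overlap; a crude stand-in for embedding similarity."""
    wa, wb = set(a.lower().split()), set(b.lower().split())
    return len(wa & wb) / len(wa | wb) if wa | wb else 0.0


@dataclass
class Tool:
    name: str
    description: str
    fn: Callable[..., object]


@dataclass
class MemoryManager:
    episodic: list[str] = field(default_factory=list)       # what happened
    semantic: dict[str, str] = field(default_factory=dict)  # what is known
    procedural: list[Tool] = field(default_factory=list)    # how to act (tools)

    def log_event(self, event: str) -> None:
        self.episodic.append(event)

    def write_back(self, key: str, fact: str) -> None:
        """Update or refine a stored fact; the newest value wins."""
        self.semantic[key] = fact

    def register_tool(self, tool: Tool) -> None:
        self.procedural.append(tool)

    def relevant_tools(self, query: str, k: int = 1) -> list[str]:
        """Names of the k tools whose descriptions best match the query."""
        ranked = sorted(self.procedural,
                        key=lambda t: overlap(query, t.description),
                        reverse=True)
        return [t.name for t in ranked[:k]
                if overlap(query, t.description) > 0]
```

Only the tool names returned by `relevant_tools` would be exposed to the model for a given step, which is how the tool list scales without bloating the context.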

Join and learn to build agents that remember and improve over time!

deeplearning.ai/short-courses/…
