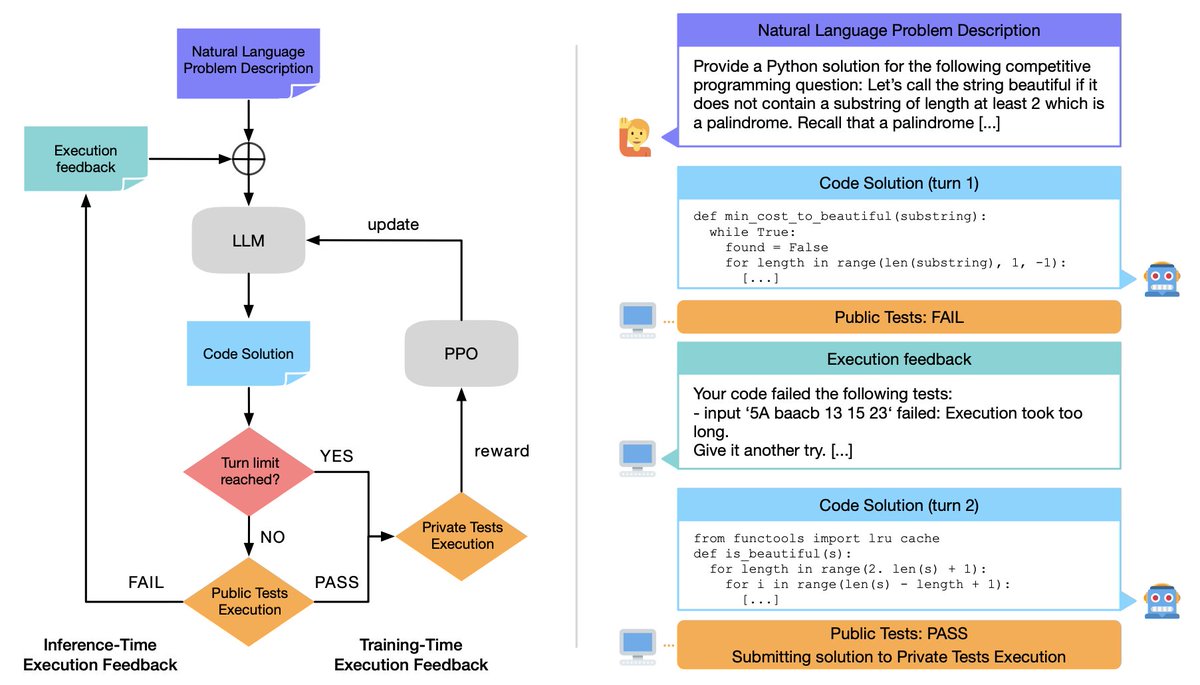

(🧵) Today, we release Meta Code World Model (CWM), a 32-billion-parameter dense LLM that enables novel research on improving code generation through agentic reasoning and planning with world models. ai.meta.com/research/publi…

Jade Copet

Join us live tomorrow at 2:30pm CET for some exciting updates on our research! youtube.com/live/hm2IJSKcY…

Today we’re releasing Code Llama 70B: a new, more performant version of our LLM for code generation — available under the same license as previous Code Llama models. Download the models ➡️ bit.ly/3Oil6bQ • CodeLlama-70B • CodeLlama-70B-Python • CodeLlama-70B-Instruct

Unfortunately, I could not attend @NeurIPSConf this year :( However, if you are attending and are interested in SpeechLMs, check out our work (Wed. 3-5 p.m. PST), presented by @MichaelHassid!! Paper: arxiv.org/abs/2305.13009 Demo, code & models: pages.cs.huji.ac.il/adiyoss-lab/tw…