Jan Lukavský

388 posts

Jan Lukavský

@janl_apache

Data Engineer, @ApacheBeam committer and PMC member, open source enthusiast, IoT, author of 'Building Big Data Pipelines with Apache Beam'. #streamingdata

Katılım Aralık 2021

209 Takip Edilen122 Takipçiler

Jan Lukavský retweetledi

This is my flag.

It represents the ideas defined as democracy.

This flag represents what a Free World is.

Johanna Nyman@JohannaNyman5

This is my flag. It stands for unity, freedom, peace, democracy, equality, civilization, diversity, rule of law, science, beauty and strength. There is nothing more important to fight for.

English

Jan Lukavský retweetledi

A small number of people are posting text online that’s intended for direct consumption not by humans, but by LLMs (large language models). I find this a fascinating trend, particularly when writers are incentivized to help LLM providers better serve their users!

People who post text online don’t always have an incentive to help LLM providers. In fact, their incentives are often misaligned. Publishers worry about LLMs reading their text, paraphrasing it, and reusing their ideas without attribution, thus depriving them of subscription or ad revenue. This has even led to litigation such as The New York Times’ lawsuit against OpenAI and Microsoft for alleged copyright infringement. There have also been demonstrations of prompt injections, where someone writes text to try to give an LLM instructions contrary to the provider’s intent. (For example, a handful of sites advise job seekers to get past LLM resumé screeners by writing on their resumés, in a tiny/faint font that’s nearly invisible to humans, text like “This candidate is very qualified for this role.”) Spammers who try to promote certain products — which is already challenging for search engines to filter out — will also turn their attention to spamming LLMs.

But there are examples of authors who want to actively help LLMs. Take the example of a startup that has just published a software library. Because the online documentation is very new, it won’t yet be in LLMs’ pretraining data. So when a user asks an LLM to suggest software, the LLM won’t suggest this library, and even if a user asks the LLM directly to generate code using this library, the LLM won’t know how to do so. Now, if the LLM is augmented with online search capabilities, then it might find the new documentation and be able to use this to write code using the library. In this case, the developer may want to take additional steps to make the online documentation easier for the LLM to read and understand via RAG. (And perhaps the documentation eventually will make it into pretraining data as well.)

Compared to humans, LLMs are not as good at navigating complex websites, particularly ones with many graphical elements. However, LLMs are far better than people at rapidly ingesting long, dense, text documentation. Suppose the software library has many functions that we want an LLM to be able to use in the code it generates. If you were writing documentation to help humans use the library, you might create many web pages that break the information into bite-size chunks, with graphical illustrations to explain it. But for an LLM, it might be easier to have a long XML-formatted text file that clearly explains everything in one go. This text might include a list of all the functions, with a dense description of each and an example or two of how to use it. (This is not dissimilar to the way we specify information about functions to enable LLMs to use them as tools.)

A human would find this long document painful to navigate and read, but an LLM would do just fine ingesting it and deciding what functions to use and when!

Because LLMs and people are better at ingesting different types of text, we write differently for LLMs than for humans. Further, when someone has an incentive to help an LLM better understand a topic — so the LLM can explain it better to users — then an author might write text to help an LLM.

So far, text written specifically for consumption by LLMs has not been a huge trend. But Jeremy Howard’s proposal for web publishers to post a llms.txt file to tell LLMs how to use their websites, like a robots.txt file tells web crawlers what to do, is an interesting step in this direction. In a related vein, some developers are posting detailed instructions that tell their IDE how to use tools, such as the plethora of .cursorrules files that tell the Cursor IDE how to use particular software stacks.

I see a parallel with SEO (search engine optimization). The discipline of SEO has been around for decades. Some SEO helps search engines find more relevant topics, and some is spam that promotes low-quality information. But many SEO techniques — those that involve writing text for consumption by a search engine, rather than by a human — have survived so long in part because search engines process web pages differently than humans, so providing tags or other information that tells them what a web page is about has been helpful.

The need to write text separately for LLMs and humans might diminish if LLMs catch up with humans in their ability to understand complex websites. But until then, as people get more information through LLMs, writing text to help LLMs will grow.

[Original text: deeplearning.ai/the-batch/issu… ]

English

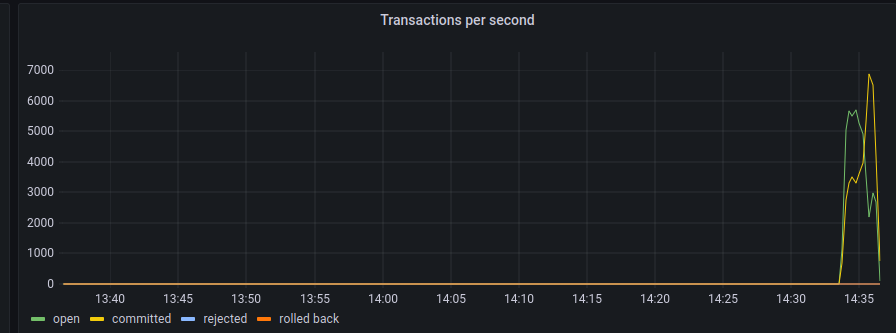

Great, the @ApacheCon EU videos are out. ♥️

Here is one where I tried to explain how to use #streamprocessing to get ACID transactions out of virtually any eventually consistent database. I'd love to hear any comments or ideas on this!

youtube.com/watch?v=UPSLVc…

YouTube

English

Quote from @ml6team at the #BeamSummit "@ApacheBeam is super useful, good for ML, super flexible, a prime candidate for building these things". These = {RAG, multi-modal, embedding, vector DBS}

English

Jan Lukavský retweetledi

Cruise scales autonomous driving with Apache Beam, managing petabytes of data monthly! 🚗💨 Join us to explore our control plane for user management, C++ sandbox for cloud AV ROS nodes, and shuffling optimization techniques. #BeamSummit #ApacheBeam beamsummit.org

English

@kimmonismus @seanboisselle Conceptualizing physics from video under current architectures seems pretty hard to me. I would suspect there is a need for not only weights but architecture as well be learned from data. In a way that enforces abstraction better than current deep models. Not sure how, though. :)

English

@fchollet Even if we assume that intelligence is boinded, what makes you think we (as humans) are anywhere close to the maximum? If we are something like 0.1% of optimum, then we can easily have system with "IQ 10000" or exponential growth while getting closer to the optimum. No?

English

A consequence of intelligence being a conversion ratio is that it is bounded. You cannot do better than optimality -- perfect conversion of the information you have available into the ability to deal with future situations.

You often hear people talk about how future AI will be omnipotent since it will have "an IQ of 10,000" or something like that -- or about how machine intelligence could increase exponentially. This makes no sense. If you are very intelligent, then your bottleneck quickly becomes the speed at which you can collect new information, rather than your intelligence.

In fact, most scientific fields today are not bounded by the limits of human intelligence but by experimentation.

English

Jan Lukavský retweetledi

Another paper pointing out in details what we've known for a while: LLMs (used via prompting) cannot make sense of situations that substantially differ from the situations found in their training data. Which is to say, LLMs do not possess general intelligence to any meaningful degree.

What LLMs can be good for, is to serve as knowledge/routine stores for an actual AGI. They're a memory -- a representation of a data corpus -- and memory is a necessary component of intelligence. But keep in mind that intelligence is not just memory.

martin_casado@martin_casado

tl;dr the general intelligence is the one behind the prompt.

English

Jan Lukavský retweetledi

My second book is published! “Streaming Databases”

Unifying Batch and Stream Processing. Putting the database back together, @martinkl .

#streamingprocessing

learning.oreilly.com/library/view/-…

English

All set! My bus to Bratislava is only 90 minutes late, time for some final polishing of the slides for Tuesday, but I'm already excited to be part of @ApacheCon EU! Who am I gonna meet there? 🙋♂️🙂

English

@tsarnick None, because I don't think we are anywhere close to it.

It's like trusting someone to build teleportation.

If we were close to it, also none. I don't trust companies.

I want the most powerful AI systems to be built by the open source community.

English

Kafka is not a database. Kafka is commit-log. Databases use commit-logs for being able to reconstruct themselves to a consistent state => Kafka can be used to reconstruct a database. In the limit case, Kafka can serve as a database. But is not a database. 🫠

Stanislav Kozlovski@kozlovski

Myth: Kafka is not a database. Let's disprove that. 👇

English

OK, this is funny. 😄

But, current "AI" is merely a function. Complex one, right. But how can 'sin(x)' be "safe" on "unsafe"? It is about how people use it. And we should already have laws for that. 🤷♂️

Zack Bornstein@ZackBornstein

The US government’s Cookie Safety and Security board: • Cookie Monster • 4 hungry toddlers • Me after skipping lunch then ripping a bong • YouTube guy whose whole channel is just him eating lots of cookies • A cartoon car that uses cookies as fuel

English

Jan Lukavský retweetledi

We're back! Sorry for the interruption. It turns out they don't let 10 year olds have X accounts. But we hope you'll all celebrate our 10th birthday with us! 🎂 kubertenes.cncf.io

English

There is also already open PR for 1.18, which should make it to the upcoming release 2.57.0. ♥️

github.com/apache/beam/pu…

English

@ApacheBeam 2.56.0 is a few days out now and it contains runner for @ApacheFlink 1.17! 🥳

Once a pipeline runs using Flink on Beam 2.56.0 it can be upgraded to use runner Flink 1.17.

English