jan retweeted

jan

We’re returning to Würzburg from the largest ACL ever 🌸. We presented 4 papers, including #LLäMmlein 🐑, a natively German decoder model family, and a new SotA on ABSA; we also won another SemEval task and a best paper award. We had a fantastic time and drew inspiration for future research 🚀

@ManuelFaysse Nice work! We recently asked ourselves the same question and found that (at least for LLM2Vec) it does make a difference! arxiv.org/abs/2505.13136

Find Hippo's thread below:

x.com/gisship/status…

(8/8)

Hippolyte Gisserot-Boukhlef @gisship

🚨 New paper drop: Should We Still Pretrain Encoders with Masked Language Modeling? We revisit a foundational question in NLP: Is masked language modeling (MLM) still the best way to pretrain encoder models for text representations? 📄 arxiv.org/abs/2507.00994 (1/8)

jan retweeted

Exciting news from #CAIDAS @Uni_WUE ! We're proud to announce the launch of "#LLäMmlein," the first 1B-parameter LLM trained exclusively on German! Check out the details in our press release go.uniwue.de/llammleinnews & project page go.uniwue.de/llammlein!

@Tim_Dettmers @kellerjordan0 "weak attention" is an interesting concept 🤔 How do you observe/define it? Is the model incoherent overall, or does it miss important cues from the context? I'm curious whether it's something that can be quantified or if it's more of a "vibe".

Just a bit more context: I have a super tight baseline, a Chinchilla 250M model on which I ran more than 1,000 training runs. The data is very diverse. The baseline is so tight that everything in my research that worked on it also worked at large scale, and everything that failed on it also failed at large scale.

QKNorm did not work well for very large-scale CLIP, and I also saw failures on my super tight baseline. It led to "weak attention" after some training.

Zero-init looks good on perplexity, but check downstream performance. It usually leads to "weak features" and poor performance.

ReLU^2 leads to "strong training in the wrong direction" similar to a large Adam beta1.

This is my experience. It was years ago, and I do not remember all the details anymore.

We've had an amazing time! Thanks to everyone who joined our sessions and discussions. Until next time. Safe travels home! 🦦✈️📚 @datascience_jmu @Uni_WUE @arthur3131 @semevalworkshop #CAIDAS aclanthology.org/2024.naacl-lon… supergleber.professor-x.de

And that's a wrap from the #NAACL2024 in 🇲🇽 Mexico City! We showcased "SuperGLEBer" - the first comprehensive German language benchmark, earned a best paper honorable mention with our hierarchical classifier, and even presented the Roman Empire's take on model hallucinations!

jan retweeted

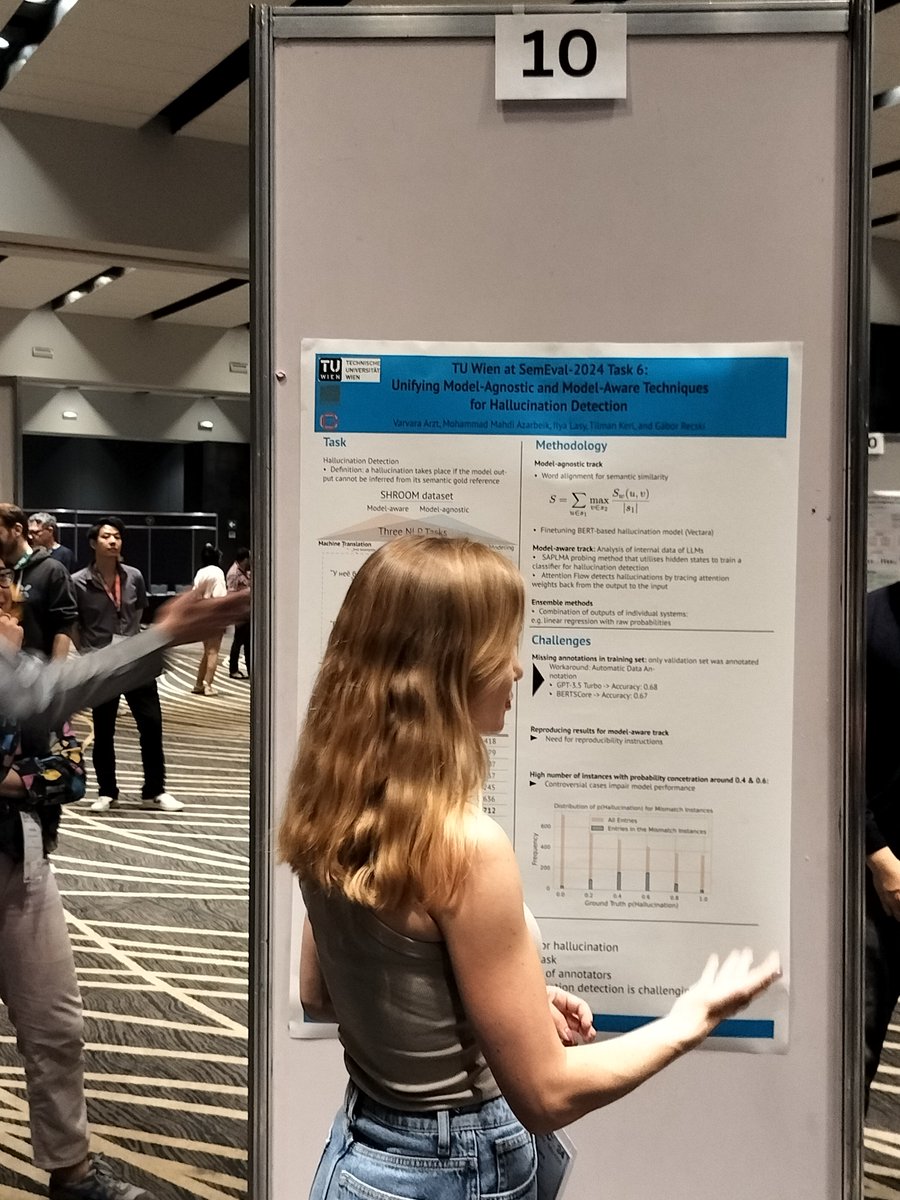

Live poster session for our @SemEvalWorkshop shared task @shroom2024 !

Thanks a lot to all the participants who presented their systems, in person and remotely!

All good things come in threes: We will present our last paper at #NAACL2024 "The Roman Empire Strikes Back" at the @SemEvalWorkshop poster session at 14:00. Meet us there!

It is joint work with @konstantinkobs on hallucination detection in text generation models.

We are thrilled to announce that we won the "Best System Paper Honorable Mention Award" at @semevalworkshop (#NAACL2024) for our work "Developing a Hierarchical Multi-Label Classification Head for Large Language Models".

See you at our talk (now) and our poster (15:30)!🦦

jan retweeted

@janpf95 will be presenting our latest paper "SuperGLEBer" aclanthology.org/2024.naacl-lon… about benchmarking German LLMs at #NAACL2024 in 🇲🇽 Mexico City this week! Meet us at in-person poster session 2 on June 17 at 14:00. @datascience_jmu #CAIDAS @Uni_WUE

jan retweeted

Our paper "SuperGLEBer: German Language Understanding Evaluation Benchmark" was accepted at the NAACL 2024: In our paper, we assemble a broad Natural Language Understanding benchmark suite for the German language and consequently evaluate a wide array of… dlvr.it/T43gJQ

@CodingDanny @hringriin @lutz_reinhardt @malteaero @timpritlove Unfortunately, it's not quite that simple:

idontplaydarts.com/2016/04/detect…

@hringriin @lutz_reinhardt @malteaero @timpritlove But the problem is the same if I first clone a git repo and then run it. The same goes for downloading binary XY from the web and running it. It makes no difference whether it all happens in one command or not.

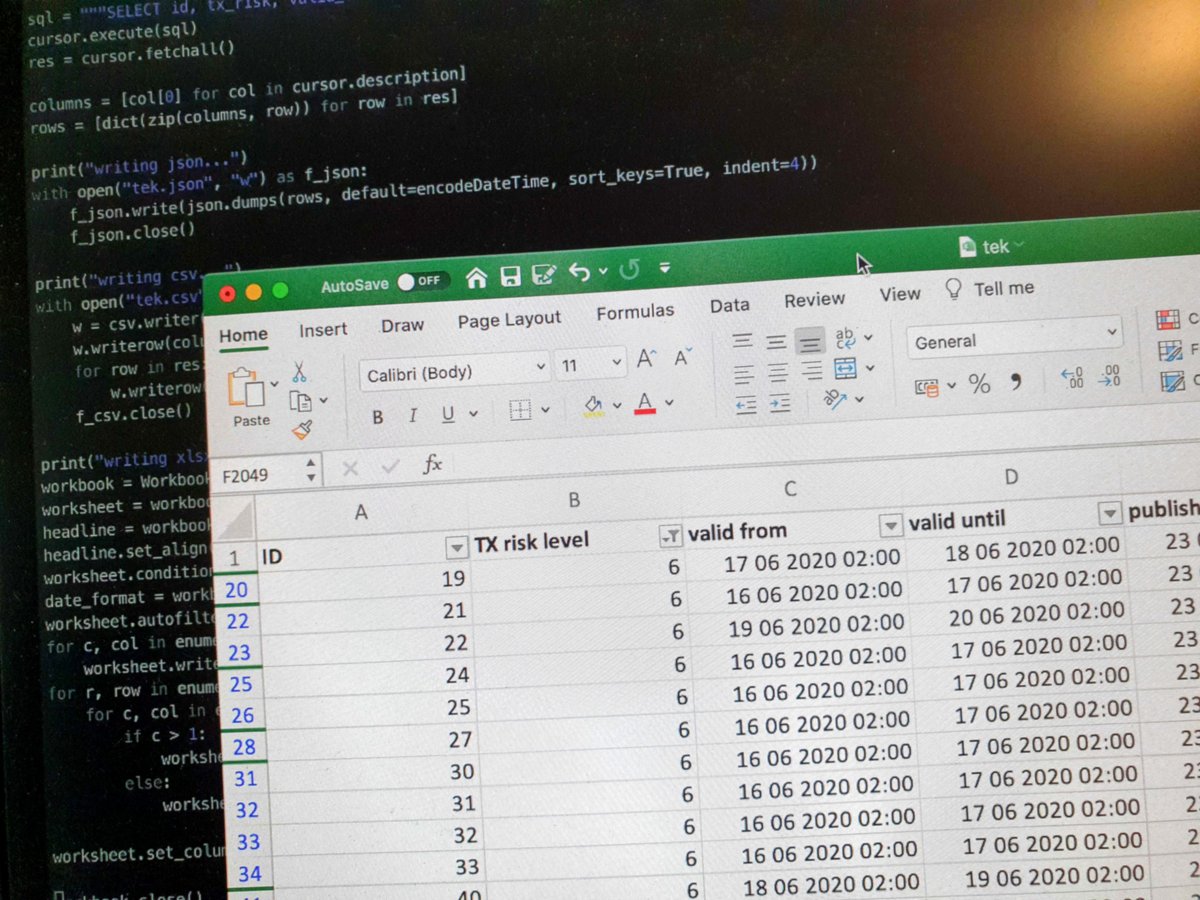

To make the contents of the #CoronaWarnApp packages easier to analyze, I converted the data into a more convenient format.

CSV: cwa.malte.io/tek.csv

JSON: cwa.malte.io/tek.json

Excel: cwa.malte.io/tek.xlsx

1/🧵

@piratomat @theochemiker @csdings @malteaero github.com/corona-warn-ap…

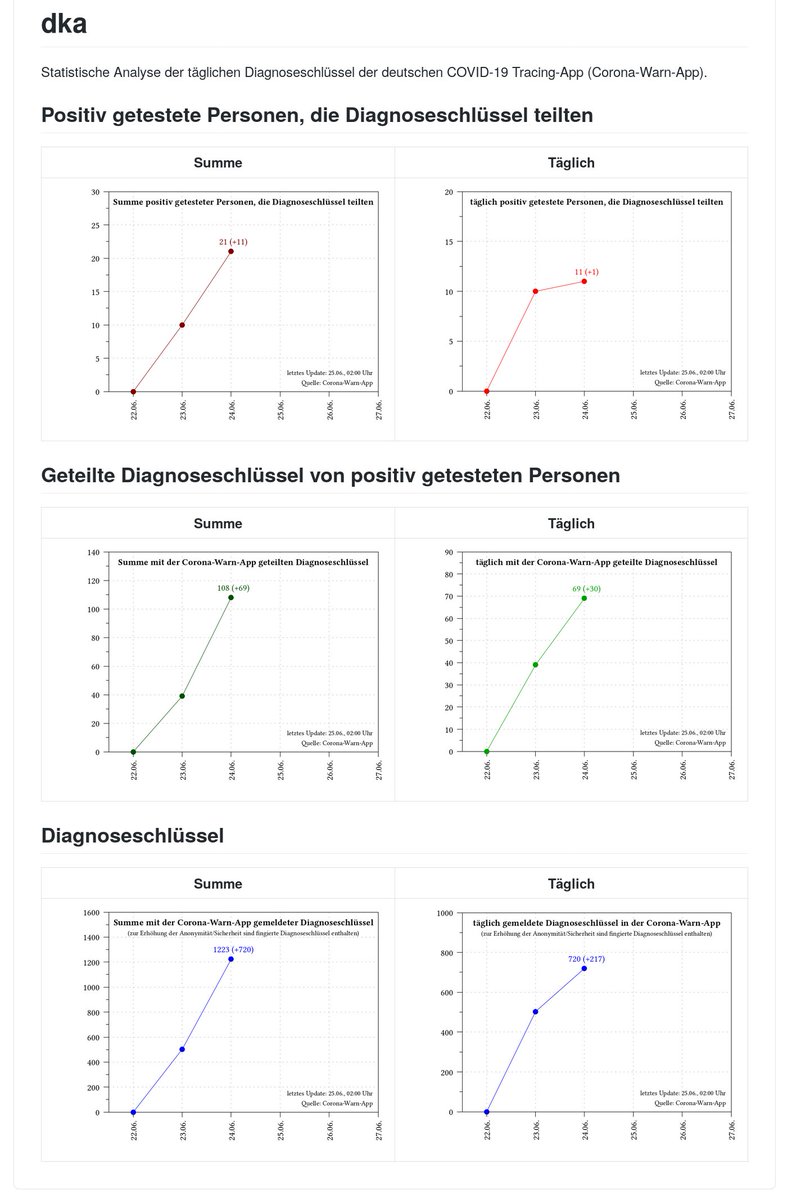

I started a small side project that analyzes and visualizes the anonymized daily #CoronaWarnApp data. Thanks to @malteaero for the inspiration. #CoronaVirusDE #CoronaApp

Project at github.com/micb25/dka
