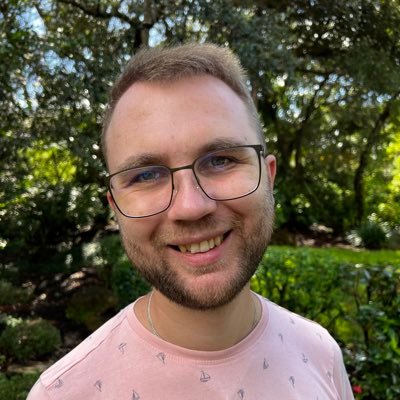

Camilo Vasquez

1.4K posts

@jcvasquezc1

#machinelearning, #NLProc, #signalprocessing enthusiast 🇨🇴🤖💻 Researcher at @vicomtech

🚀 In the future, experienced #remote human #operators will supervise several ⚙️ automated factories. 🙌🏽 We present 👩🏽‍💻 #OaaS (Operator as a Service). 👀 Want to know more about these remote operators? 💥 Have a look! youtube.com/watch?v=CA_zv8…

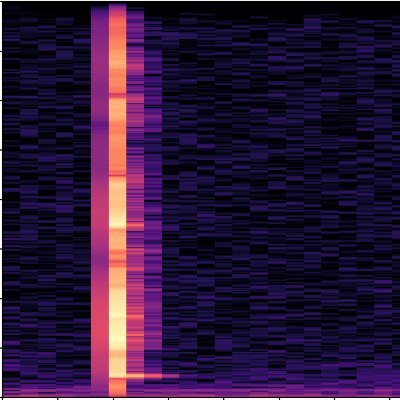

Let's goo! F5-TTS 🔊

- Trained on 100K hours of data
- Zero-shot voice cloning
- Speed control (based on total duration)
- Emotion based synthesis
- Long-form synthesis
- Supports code-switching
- Best part: CC-BY license (commercially permissive) 🔥

Diffusion based architecture:

- Non-Autoregressive + Flow Matching with DiT
- Uses ConvNeXt to refine text representation, alignment

Synthesised: "I was, like, talking to my friend, and she's all, um, excited about her, uh, trip to Europe, and I'm just, like, so jealous, right?" (Happy emotion)

The TTS scene is on fire! 🐐

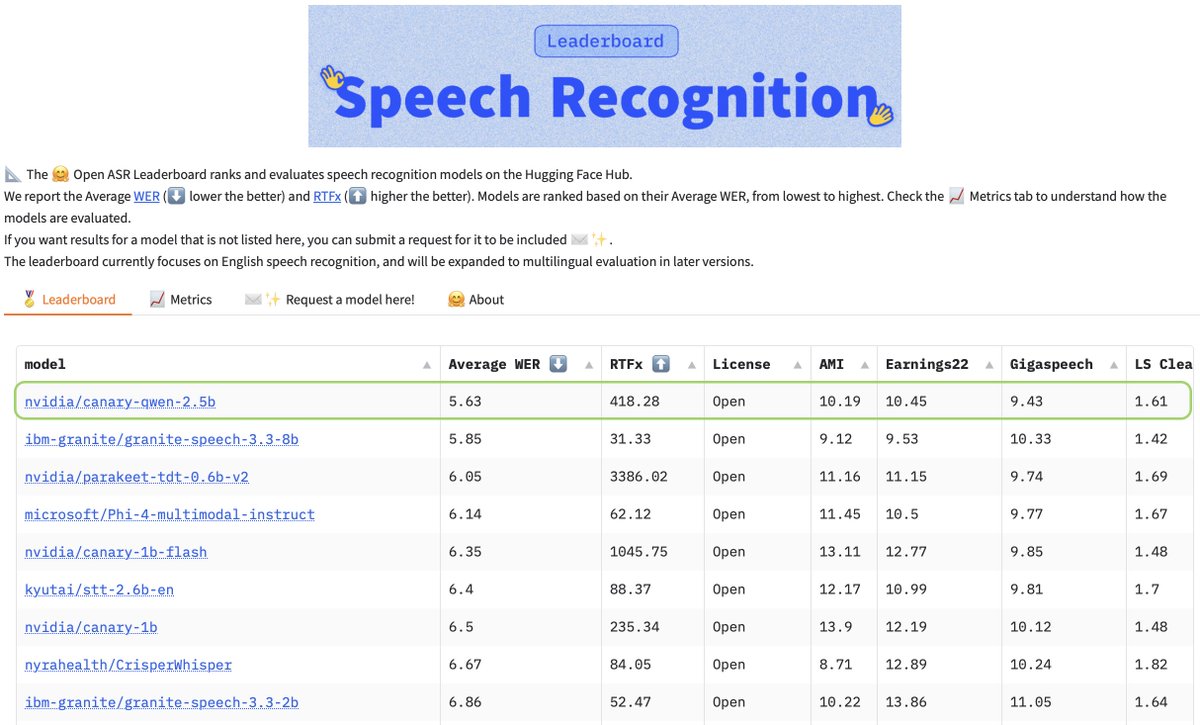

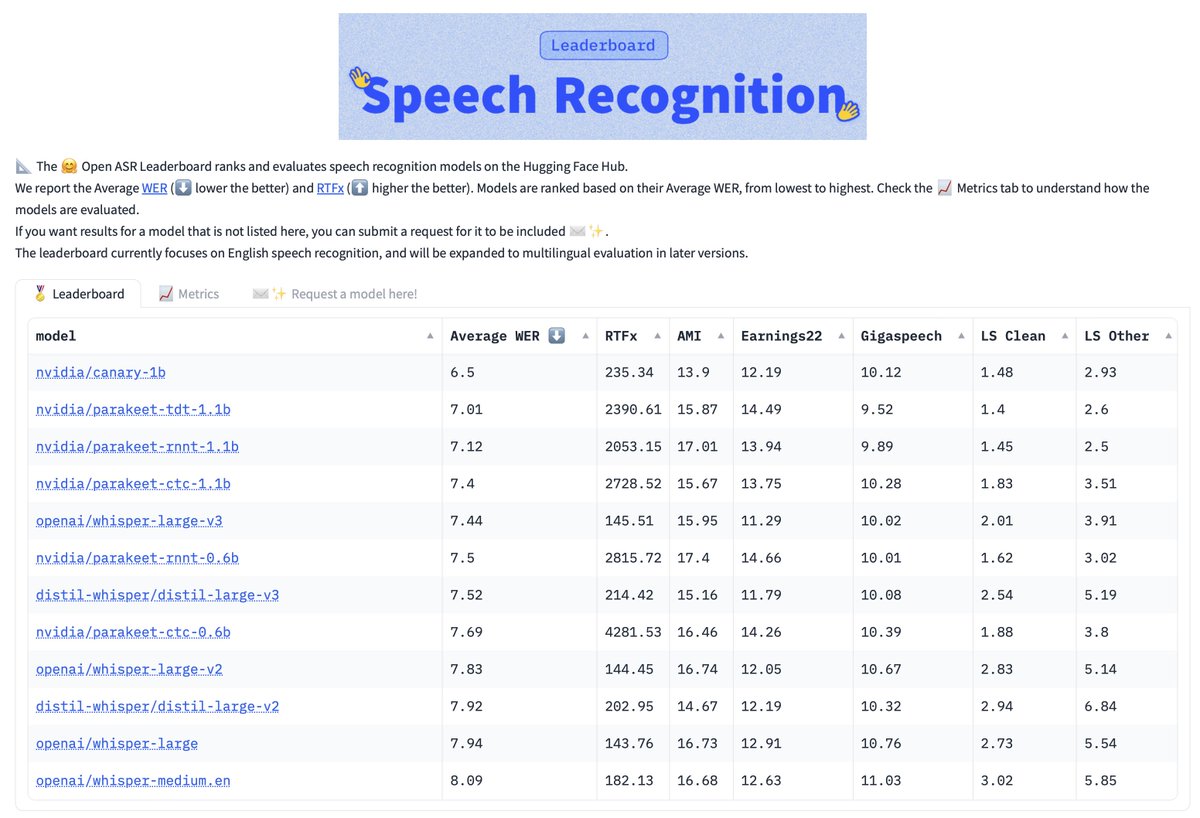

We fkn did it! Whisper Large v3 Turbo is in Transformers! 🔥

Drop-in replacement for Large-v3: 809M parameters, 8x faster AND multilingual ⚡

- Uses 4 decoder layers compared to 32 (Large v3)
- Supports timestamps (both word and chunk level)
- Compatible with Flash Attention 2

We're running benchmarks at the moment and will report those soon. Try it out now on the space below 🐐

P.S. Sorry for the rushed audio 🙈

🏆 ACL Best Resource Paper Award:

- Latxa: An Open Language Model and Evaluation Suite for Basque by Etxaniz et al.
- Dolma: an Open Corpus of Three Trillion Tokens for Language Model Pretraining Research by Soldaini et al.

#NLProc #ACL2024NLP