Jianing “Jed” Yang

179 posts

@jed_yang

Building robots @Figure_robot | PhD 🎓 @UMich on 3D vision. Prev. @Meta @Adobe @CarnegieMellon @GeorgiaTech.

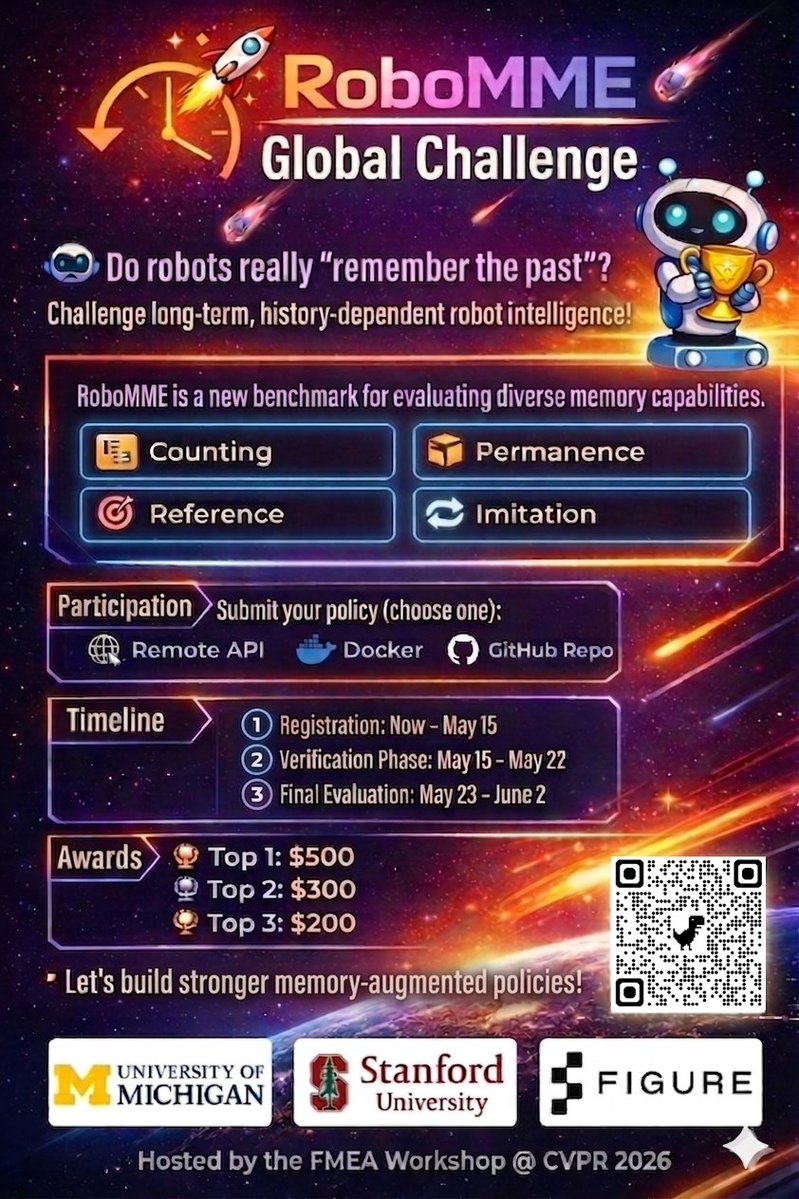

Robot memory methods are growing fast, but systematic evaluation is largely lacking. 📉 Introducing RoboMME: a new benchmark for memory-augmented robotic manipulation! 🤖🧠 Featuring 16 tasks across temporal, spatial, object, and procedural memory 🔗 robomme.github.io

We raised $165M at a $1.15B valuation to stop doing demos. 2026 is about 1) deployment and 2) research. We will start shipping Memo with our new frontier models in a few months. Our series-B is led by Coatue, with Thomas Laffont joining the board. ->🧵

In my recent blog post, I argue that "vision" is only well-defined as part of perception-action loops, and that the conventional view of computer vision - mapping imagery to intermediate representations (3D, flow, segmentation...) is about to go away. vincentsitzmann.com/blog/bitter_le…

LLMs are now learning space, geometry, and how to move. 🤖📐 The 2nd CVPR 3D-LLM VLA Workshop brings together language, 3D perception, and action for embodied intelligence. 📢 Call for Papers is OPEN: openreview.net/group?id=thecv…

🌐 Website: 3d-llm-vla.github.io If your research lives at the intersection of words, worlds, and robots—this one’s for you. #CVPR2026 @CVPR

(1/N) Will this be the BERT/GPT moment for 3D vision? Finally, unsupervised pre-training for 3D works. Led by @qitao_zhao, we present E-RayZer, a fully self-supervised 3D reconstruction model that: 🔥 Matches or surpasses supervised methods like VGGT 👀 Learns transferable 3D representations, outperforming CroCo, VideoMAE, and DINO 📈 Scales with more unlabeled data. A new recipe for scalable 3D foundation models.

Introducing SAM 3D, the newest addition to the SAM collection, bringing common sense 3D understanding of everyday images. SAM 3D includes two models: 🛋️ SAM 3D Objects for object and scene reconstruction 🧑‍🤝‍🧑 SAM 3D Body for human pose and shape estimation. Both models achieve state-of-the-art performance, transforming static 2D images into vivid, accurate reconstructions. 🔗 Learn more: go.meta.me/305985

🚨 Thrilled to introduce DEER-3D: Error-Driven Scene Editing for 3D Grounding in Large Language Models - Introduces an error-driven scene editing framework to improve 3D visual grounding in 3D-LLMs. - Generates targeted 3D counterfactual edits that directly challenge the model’s biased or incorrect reasoning patterns, e.g. orientation or distance. - Retrains the model with more informative, bias-breaking 3D evidence, leading to stronger spatial and attribute grounding. Thread 🧵👇

Introducing Figure 03

AimBot: A Simple Auxiliary Visual Cue to Enhance Spatial Awareness of Visuomotor Policies