Jeff Harris

1.4K posts

@jeffintime

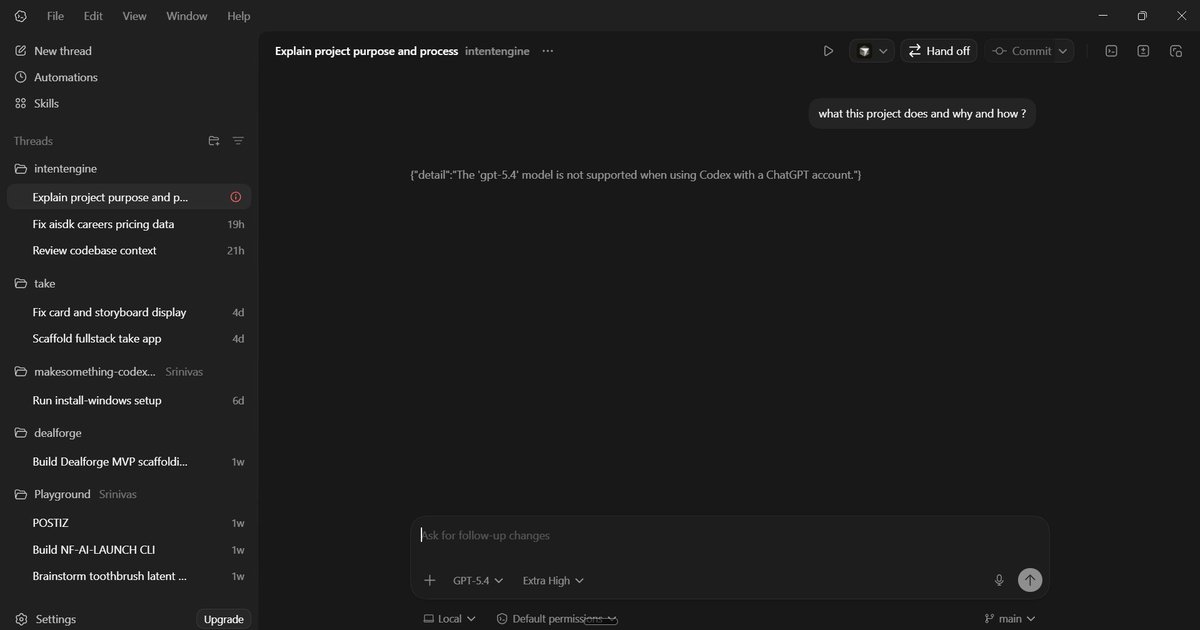

the malleability of minds. Codex @openai

With Codex there is quite a gulf in load between peak and off-peak times, and we would like a smoother traffic pattern, as that would make better use of our compute. We have ideas, but we're curious what you all think we should do. Would more usage during off-peak hours and a surge multiplier during peak times make sense?
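To make the question concrete, here is a rough sketch of what a time-of-day usage multiplier could look like. Everything in it is a hypothetical illustration, not an actual Codex policy: the peak window, both multiplier values, and the `charged_usage` function name are assumptions picked only to show the idea.

```python
# Hypothetical values for illustration only; none of these come from Codex.
PEAK_HOURS = range(9, 18)      # assumed peak window: 09:00-17:59
PEAK_MULTIPLIER = 1.5          # assumed surge multiplier during peak
OFF_PEAK_MULTIPLIER = 0.5      # assumed discount during off-peak


def charged_usage(raw_usage: float, hour: int) -> float:
    """Return the usage counted against a rate limit for a given hour (0-23)."""
    if not 0 <= hour <= 23:
        raise ValueError("hour must be between 0 and 23")
    multiplier = PEAK_MULTIPLIER if hour in PEAK_HOURS else OFF_PEAK_MULTIPLIER
    return raw_usage * multiplier
```

Under these assumed numbers, the same 10 units of work would count as 15 at noon and 5 at 2 a.m., which is the incentive to shift load that the post is asking about.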

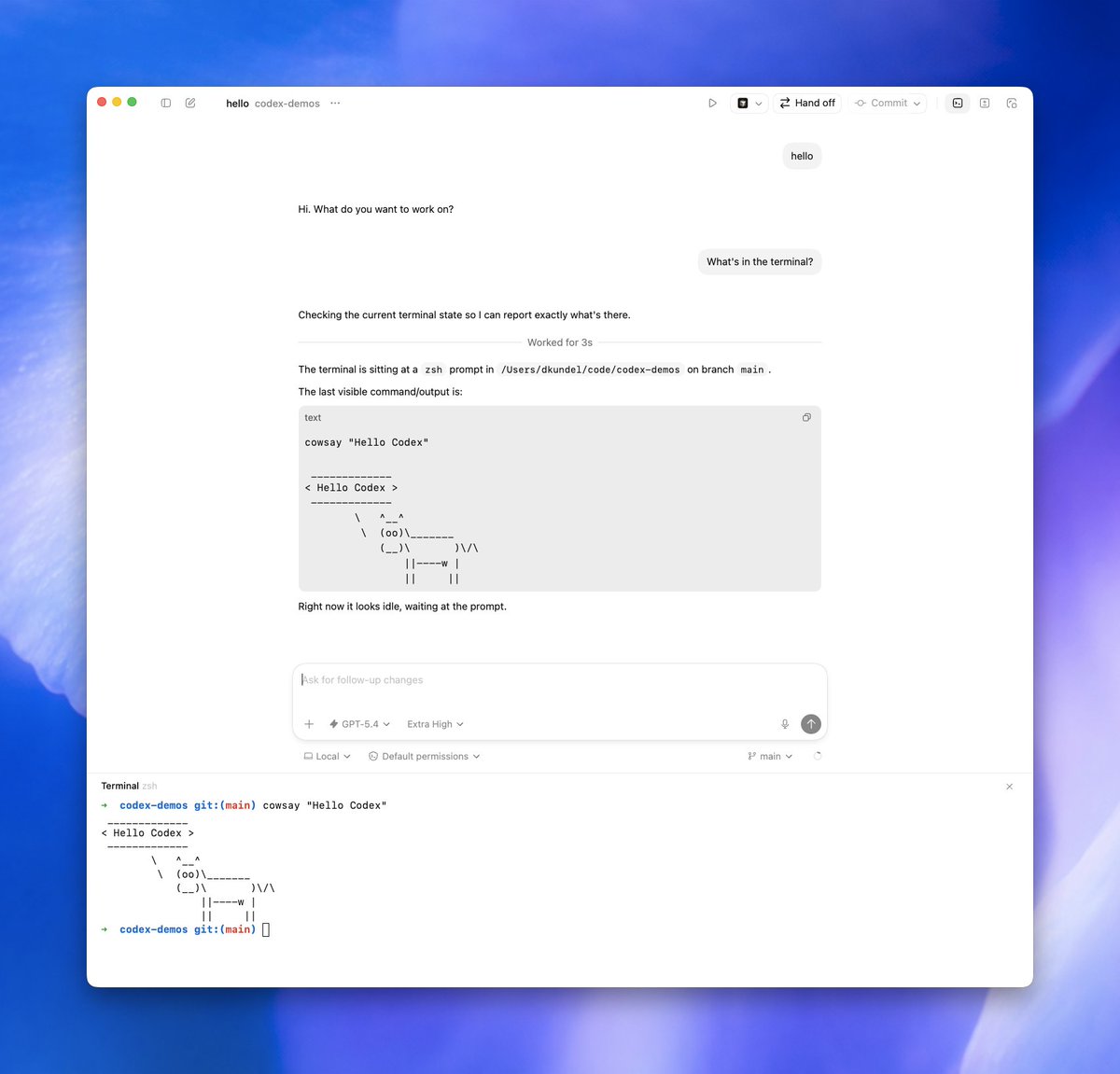

@dkundel @OpenAIDevs @raycast I’m constantly copying command output from the app command window. Shouldn’t codex be aware of the command output?

Working at OpenAI is fun because questioning everything and taking risks is part of the culture. Within Codex, the team asks itself how we could make it an order of magnitude better every few months and then sets most things aside to go and do it across the entire stack. Some examples were the Codex App and our first deployment of Cerebras inference with WebSockets. We are now well under way on the next bet and it’s making even our best engineers nervous as it’s at the edge of what’s possible today.

OK, Codex is back and stable and we should be good for a while. Reset button pressed, should see it in a bit

Codex is back up. We introduced an issue that caused requests to be blocked and rejected as high cyber risk. This was fixed in ~8 mins and we are now back online and fully operational. Team will reset rate limits, that’s been a while. Enjoy one on us.

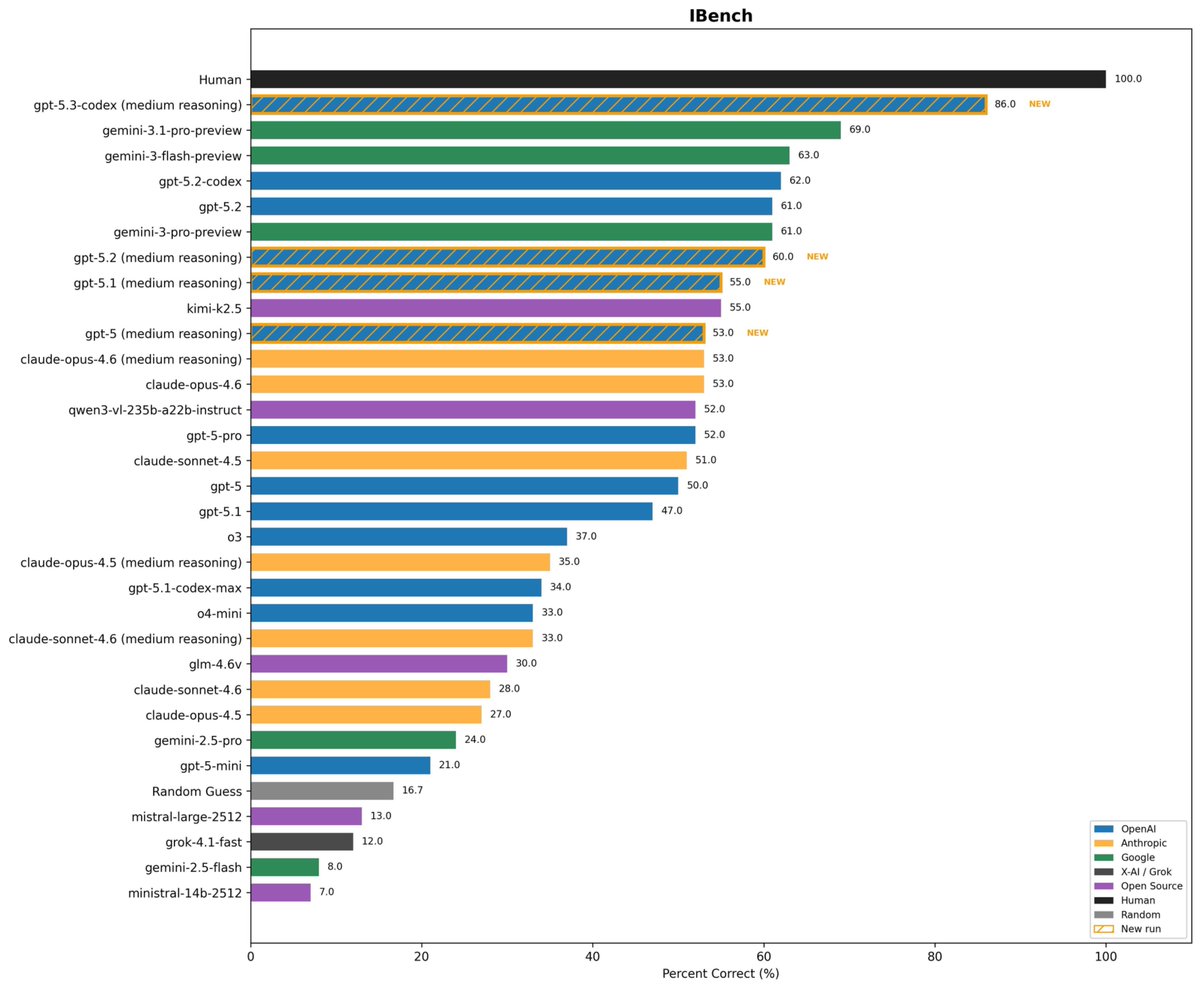

We tested @OpenAI's new WebSocket connection mode for the Responses API into Cline and the early numbers are wild. Instead of resending full context every turn, WebSocket mode keeps a persistent connection, sends only incremental inputs.

With 5.2 Codex results vs the standard API:
→ ~15% faster on simple tasks
→ ~39% faster on complex multi-file workflows
→ Best cases hitting 50% faster

WebSocket handshake adds slight TTFT overhead on short tasks, but it gets amortized fast. On heavier workloads with dozens of tool calls, the speed gains are massive.

Still expanding our test sample, but this is a very promising step forward for every Cline user. Faster AI coding is coming.
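As a back-of-the-envelope illustration of why incremental inputs matter more on long, tool-heavy sessions, the sketch below compares how much input gets sent in total under each approach. It is a simplified model, not the Responses API: the function names and the per-turn sizes are hypothetical, and it ignores model outputs, caching, and handshake cost.

```python
def sent_with_full_resend(turn_sizes):
    """Total input sent when every turn resends the whole context so far."""
    total = 0
    context = 0
    for size in turn_sizes:
        context += size   # the context grows by this turn's new input
        total += context  # and the entire context is sent again
    return total


def sent_with_incremental(turn_sizes):
    """Total input sent when each turn sends only its new input."""
    return sum(turn_sizes)


# Hypothetical session: 4 turns, each adding 100 units of new input.
turns = [100, 100, 100, 100]
print(sent_with_full_resend(turns))   # 1000
print(sent_with_incremental(turns))   # 400
```

In this toy model the full-resend total grows roughly quadratically with the number of turns while the incremental total grows linearly, which is consistent with the post's observation that gains are larger on workloads with dozens of tool calls.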