Jeff Wang 👨‍🚀

3K posts

@jffwng

Product Lead @AIatMeta (MSL / FAIR). I like language models. I also like non-language models. Previously at Twitter and startups

Most tech companies break out product management and product marketing into two separate roles: Product management defines the product and gets it built. Product marketing writes the messaging - the facts you want to communicate to customers - and gets the product sold. But from my experience that's a grievous mistake. Those are, and should always be, one job. There should be no separation between what the product will be and how it will be explained - the story has to be utterly cohesive from the beginning. Your messaging is your product. The story you're telling shapes the thing you're making. I learned storytelling from Steve Jobs. I learned product management from Greg Joswiak. Joz, a fellow Wolverine, Michigander, and overall great person, has been at Apple since he left Ann Arbor in 1986 and has run product marketing for decades. And his superpower - the superpower of every truly great product manager - is empathy. He doesn't just understand the customer. He becomes the customer. So when Joz stepped into the world with his next-gen iPod to test it out, he fiddled with it like a beginner. He set aside all the tech specs - except one: battery life. The numbers were empty without customers, the facts meaningless without context. And that's why product management has to own the messaging. The spec shows the features, the details of how a product will work, but the messaging predicts people's concerns and finds ways to mitigate them. - #BUILD Chapter 5.5 The Point of PMs

We’re looking for a Senior Research Science Lead to tackle the next frontier of AI: Social Intelligence. In this role, you’ll spearhead our work in complex multi-agent ecosystems, bridging the gap between central orchestration and cognitive science. Details below.

Excited to announce that @ManusAI has joined Meta to help us build amazing AI products! The Manus team in Singapore are world class at exploring the capability overhang of today’s models to scaffold powerful agents. Looking forward to working with you, @Red_Xiao_!

🔉 Introducing SAM Audio, the first unified model that isolates any sound from complex audio mixtures using text, visual, or span prompts. We’re sharing SAM Audio with the community, along with a perception encoder model, benchmarks and research papers, to empower others to explore new forms of expression and build applications that were previously out of reach. 🔗 Learn more: go.meta.me/568e5d

Very interesting observations on the interaction between pre/mid/post-training. 1. The gain from RL is largest when the task is neither too easy nor too hard. 2. Pretraining should focus on cultivating broader atomic skills - RL can combine them to solve composite problems. 3. For tasks near the pretraining distribution, heavy mid-training is effective. For harder tasks, assigning more compute to RL is effective. 4. Process rewards are helpful for generalization (if we can utilize them!) How can we define atomic skills in general reasoning? And how can we further promote them, potentially using synthetic data? This could be an interesting area to explore in the atomic vs composite skills view of RL.

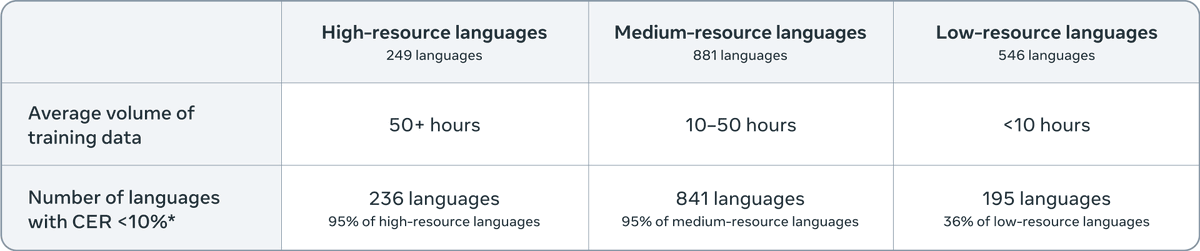

Introducing Meta Omnilingual Automatic Speech Recognition (ASR), a suite of models providing ASR capabilities for over 1,600 languages, including 500 low-coverage languages never before served by any ASR system. While most ASR systems focus on a limited set of languages that are well-represented on the internet, this release marks a major step toward building a truly universal transcription system. 🔗 Learn more: go.meta.me/f56b6e