Julian Hamann

@jhamann93

Do Only Good Everyday https://t.co/5WTq1d0x0S

if you don't have these in your configs you're ngmi

Microsoft Threat Intelligence has observed threat actors actively experimenting with techniques to bypass or “jailbreak” AI safety controls. By reframing malicious requests, chaining instructions across multiple interactions, and misusing system‑ or developer‑style prompts, threat actors can coerce models into generating restricted content that bypasses built‑in safeguards. These techniques demonstrate how generative AI models are probed, shaped, and redirected to support reconnaissance, malware development, and social engineering while minimizing friction from moderation. AI guardrails have become dynamic surfaces that attackers test and manipulate to sustain operational advantage. As AI becomes more deeply embedded in enterprise workflows, understanding how attackers test and manipulate these guardrails is critical for defenders. Learn more about securing generative AI models on Azure AI Foundry: msft.it/6013Qs5oX

People of pi, github.com/rcarmo/piclaw/… now brings piclaw kicking and screaming into the world outside containers - you can now install it bearskin on a machine, although it's still experimental outside Docker /cc @badlogicgames

🚨🇲🇽 BREAKING — Mexican Scientist Successfully Eliminates HPV.

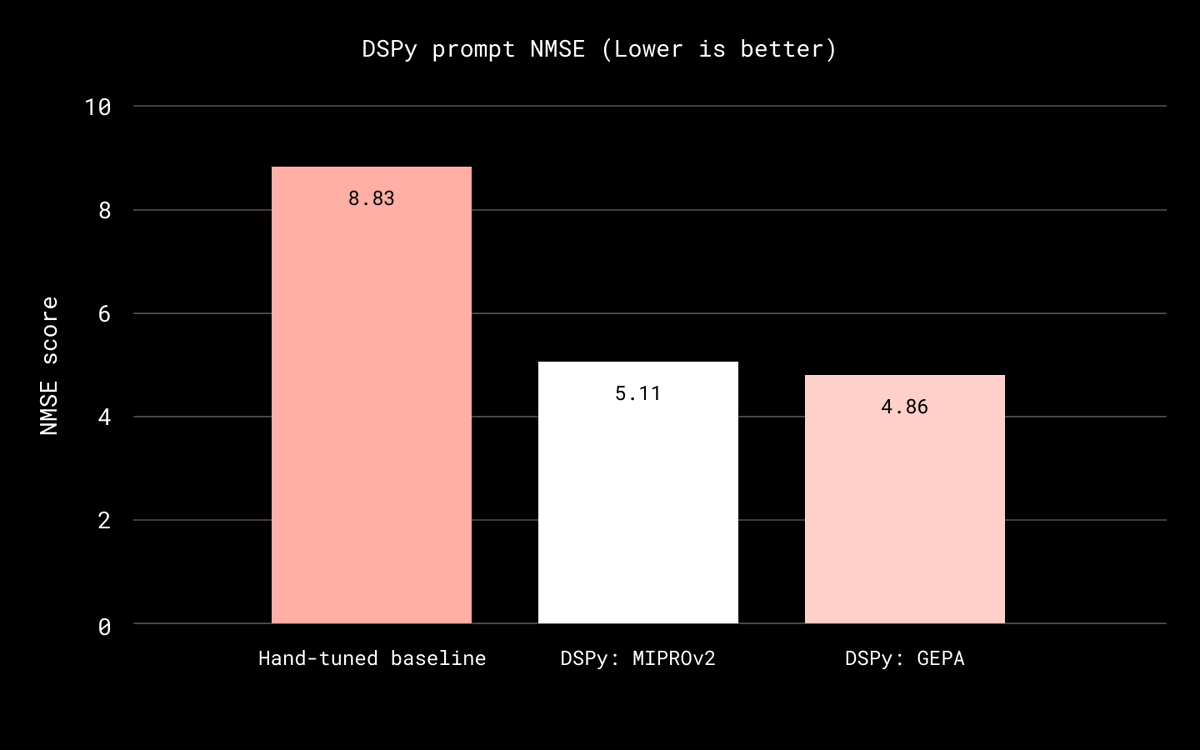

How we used DSPy to turn our relevance judge into a measurable optimization loop, making it more reliable and scalable in Dropbox Dash.