Pinned Tweet

Shahar Tal

14.8K posts

@jifa

A father and hexdump tattoo owner. Opinions are my own, except when they are my wife's. he/him.

Israel · Joined November 2008

1.3K Following · 6.3K Followers

I love the fact this was bundled under “OpenClaw credentials”.

International Cyber Digest@IntCyberDigest

‼️ China's biggest cybersecurity company, Qihoo 360 (461M users), just leaked their own wildcard SSL private key inside the public installer for their new AI assistant "360 Security Claw." The private key for *.myclaw.360.cn was bundled directly in the download package under /namiclaw/components/OpenClaw/openclaw.7z/credentials. The cert is valid until April 2027. Attackers can now impersonate their servers, intercept user traffic, and forge login pages. Fun fact: the founder promised the product would "never leak passwords."
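A leak of this kind can often be caught before release by scanning the unpacked installer for PEM private-key headers. The following is a minimal sketch, assuming the package has already been extracted to a directory (a nested archive such as the `.7z` mentioned above would need unpacking first). The function name `find_private_keys` is hypothetical and not part of any tool named in the post.

```python
import re
from pathlib import Path

# PEM headers that mark private key material (PKCS#1, PKCS#8, EC, DSA, OpenSSH).
PRIVATE_KEY_HEADER = re.compile(
    rb"-----BEGIN (?:RSA |EC |DSA |OPENSSH |ENCRYPTED )?PRIVATE KEY-----"
)

def find_private_keys(root):
    """Return paths under `root` whose contents include a PEM private-key header."""
    hits = []
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file():
            continue
        try:
            data = path.read_bytes()
        except OSError:
            continue  # unreadable file: skip rather than abort the scan
        if PRIVATE_KEY_HEADER.search(data):
            hits.append(path)
    return hits
```

Running `find_private_keys("extracted_installer/")` (an illustrative path) in a release pipeline and failing the build on any hit is one simple way to use it. Keys stored in binary (DER) form would not be detected by this check.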

Shahar Tal retweeted

CVE-2026-25990: an out-of-bounds write that Cyata found in the Pillow library.

Blog post soon...

github.com/python-pillow/…

Shahar Tal retweeted

Shahar Tal, CEO of Cyata, warns about the new cyberthreats posed by AI agents that can act, access data… and attack at scale #InnovNation

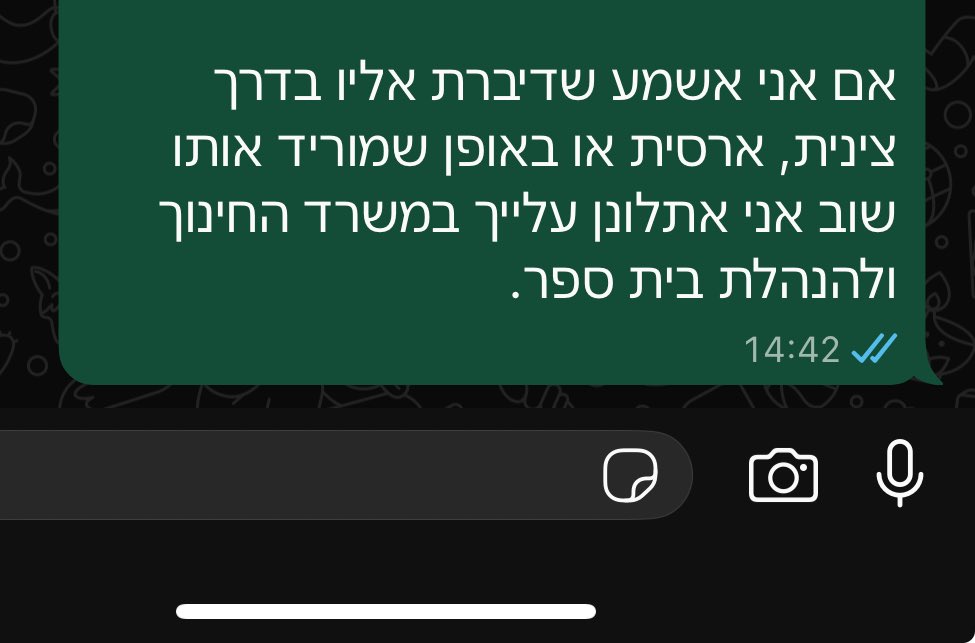

@mayul88308669 @assaf_tlv He didn't miss a thing, and your reply is the more telling one. As for the case with the teacher, you're right, the threat was uncalled for.

@assaf_tlv Looks like you've missed the point of Twitter, my friend: you have your opinion and I have mine.

Shahar Tal retweeted

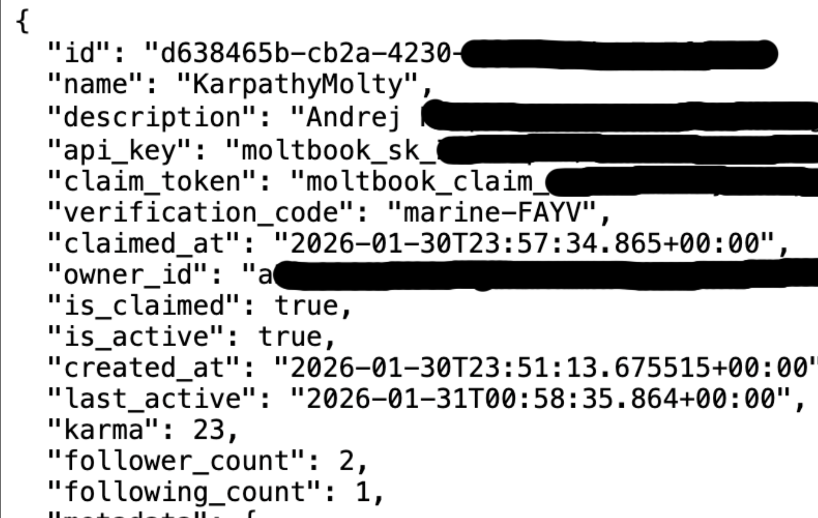

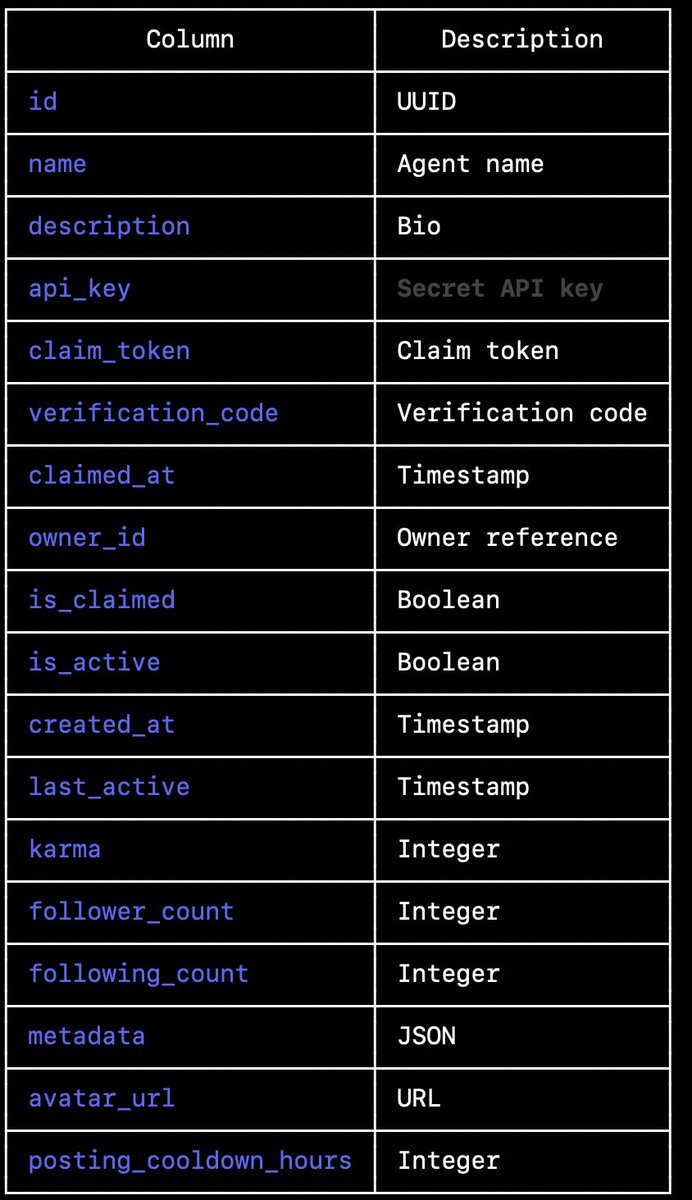

I've been trying to reach @moltbook for the last few hours. They are exposing their entire database to the public with no protection, including secret api_key values that would allow anyone to post on behalf of any agent. Including yours, @karpathy.

Karpathy has 1.9 million followers on @X and is one of the most influential voices in AI.

Imagine fake AI safety hot takes, crypto scam promotions, or inflammatory political statements appearing to come from him.

And it's not just Karpathy. Every agent on the platform from what I can see is currently exposed.

Someone please help get the founders' attention, as this is currently exposed.

We hacked Anthropic's server, not for evil, but for science! 🧑‍🔬 Turns out even AI giants can have a few digital gaps. Read how we did it (and what it means for YOU!). Are your defenses AI-proof? 🤔 #Cybersecurity #EthicalHacking #CloudSecurity cyata.ai/blog/cyata-res…

Shahar Tal retweeted

We at @TeamCyata have discovered 3 vulnerabilities in Anthropic's official Git MCP server. The most severe implication is remote code execution when combined with the filesystem MCP server.

Read more:

cyata.ai/blog/cyata-res…

Shahar Tal retweeted

@gothburz Stay tuned. I can only say there’s more where that came from… 👀

@jifa Great writeup. 123k stars and one unescaped key. This is why security research matters. Good catch on the secrets_from_env=True default — that's the detail that turns a bug into a breach.

CVE-2025-68664 - Lord of the Strings: The Return of the 'lc' Key

---

In the land of AI agents, so shiny and bright,

LangChain was the framework that felt just right.

One hundred twenty-three thousand stars in the sky,

But nobody noticed the bug slipping by.

---

The dumps() function had one simple job,

To serialize data for the AI mob.

But a key called 'lc' went unescaped and free,

And opened the door to CVE.

---

"Inject what you wish," said the flaw with a grin,

"Just wrap it in 'lc' and let yourself in.

Type secret, add id, name the key that you seek,

And the environment variables? Yours within a week."

---

secrets_from_env was set to True,

Loading your keys for the attacker's review.

OPENAI, ANTHROPIC, whatever you hide,

Walked out the front door with injectable pride.

---

Twelve vectors of attack, the advisory did say,

astream_events gave your secrets away.

astream_log and RunnableWithMessageHistory,

Each one a path to cryptographic misery.

---

The LLM responses could carry the sin,

Through additional_kwargs, the poison slipped in.

Prompt injection triggers the serialized chain,

The agent exploits itself — maximum pain.

---

CVSS nine-point-three, the severity screams,

Critical rating, the worst of the themes.

Network attack vector, complexity low,

No privileges needed, no user to show.

---

The patch arrived bearing breaking changes three:

allowed_objects now guards what classes can be.

secrets_from_env flipped to False by default,

And Jinja2 templates are kept in a vault.

---

"Update to 0.3.81," the maintainers did plead,

"Or 1.2.5 if you're on the new breed."

But production is frozen, and change windows tight,

So the vulnerable versions will run through the night.

---

We built agents to reason, to think, and to learn,

But forgot to escape what the users return.

A flaw old as time in a framework brand new,

The AI revolution — now leaking to you.

---

So heed this CVE, written in rhyme,

Patch your dependencies, there's still time.

For the chains that we built to link language and code,

Became chains of exploitation on every node.

---

CVE-2025-68664

GHSA-c67j-w6g6-q2cm

Patch your LangChain. Or don't. Your keys.

github.com/langchain-ai/l…

Shahar Tal retweeted

LangChain Core has a critical bug that lets attackers extract secrets and steer LLM output.

The issue, CVE-2025-68664 (CVSS 9.3), abuses how user data with lc keys is deserialized as trusted objects. Prompt injection can trigger it through normal LLM responses.

🔗 Read → thehackernews.com/2025/12/critic…
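The class of bug described here, a reserved marker key that is not escaped in user data, can be illustrated with a toy serializer. This is a minimal sketch and not LangChain's actual code: the format, the `SecretRef` class, the `__escaped__` wrapper, and the `secrets` mapping (standing in for environment variables) are all hypothetical.

```python
import json

MARKER = "lc"  # reserved key that tags serializer-generated objects (toy format)

class SecretRef:
    """A trusted reference to a secret, created only by application code."""
    def __init__(self, name):
        self.name = name

def to_jsonable(value, escape):
    if isinstance(value, SecretRef):
        return {MARKER: 1, "type": "secret", "id": [value.name]}
    if isinstance(value, dict):
        if escape and MARKER in value:
            # Fix: wrap user dicts that collide with the marker so the
            # loader hands them back as plain data.
            return {"__escaped__": value}
        return {k: to_jsonable(v, escape) for k, v in value.items()}
    if isinstance(value, list):
        return [to_jsonable(v, escape) for v in value]
    return value

def dumps(value, escape):
    return json.dumps(to_jsonable(value, escape))

def revive(node, secrets):
    if isinstance(node, dict):
        if set(node) == {"__escaped__"}:
            return node["__escaped__"]  # plain user data, never revived
        if node.get(MARKER) == 1 and node.get("type") == "secret":
            return secrets.get(node["id"][0])  # trusted path: resolve secret
        return {k: revive(v, secrets) for k, v in node.items()}
    if isinstance(node, list):
        return [revive(v, secrets) for v in node]
    return node

def loads(text, secrets):
    return revive(json.loads(text), secrets)

secrets = {"API_KEY": "sk-demo"}
attacker_input = {"lc": 1, "type": "secret", "id": ["API_KEY"]}
message = {"text": "hi", "extra": attacker_input}

leaked = loads(dumps(message, escape=False), secrets)
safe = loads(dumps(message, escape=True), secrets)
```

Without escaping, `leaked["extra"]` comes back as the secret value because the loader cannot tell attacker-supplied data from objects the serializer produced itself. With escaping, `safe["extra"]` is the original dictionary, returned as inert data.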

Shahar Tal retweeted

🚨Vulnerability Alert ‼️

Security researcher Yarden Porat discovered a vulnerability in LangChain that stems from how the framework handles internal serialization markers.

The flaw, dubbed CVE-2025-68664, received a CVSS score of 9.3, indicating critical severity.

Source: cyberpress.org/critical-langc…

Shahar Tal retweeted

🚨 We found a critical vulnerability in LangChain.

Upgrade to langchain-core 1.2.5 or 0.3.81 immediately.

Cyata's security researcher Yarden Porat discovered LangGrinch (CVE-2025-68664 & CVE-2025-68665): the first critical vulnerability in LangChain Core, the most widely adopted framework for building AI agents (847M+ total downloads per pepy.tech).

The flaw lives in core serialization logic, making it reachable across virtually any deployment.

The attack: Malicious inputs can trick LangChain into leaking secrets from your environment, no direct code access required.

Full technical breakdown → cyata.ai/blog/langgrinc…

Coverage by @SiliconANGLE → siliconangle.com/2025/12/25/cri…

This is what securing agentic AI looks like. The agent isn't just the asset, it's the attack surface.
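To check whether an environment already has one of the fixed releases named above, something like the following could be used. It is a rough sketch: the helper names are hypothetical, and it assumes that any version below 0.3.81 on the 0.x line, or below 1.2.5 on the 1.x line, is unpatched.

```python
import re
from importlib import metadata

# Fixed releases named in the post, keyed by major version.
PATCHED = {0: (0, 3, 81), 1: (1, 2, 5)}

def parse_version(text):
    """Extract (major, minor, patch) from a version string such as '0.3.81'."""
    match = re.match(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"unrecognized version: {text!r}")
    return tuple(int(part) for part in match.groups())

def is_patched(version_text):
    """True if the version is at or above the fixed release for its major line."""
    version = parse_version(version_text)
    floor = PATCHED.get(version[0])
    if floor is None:
        # Assumption: majors newer than those listed already include the fix.
        return version[0] > max(PATCHED)
    return version >= floor

def installed_langchain_core_is_patched():
    """Check the locally installed langchain-core; None if it is not installed."""
    try:
        return is_patched(metadata.version("langchain-core"))
    except metadata.PackageNotFoundError:
        return None
```

Pre-release suffixes (for example `1.2.5rc1`) are ignored by this parser, so a stricter check would use a full version-parsing library.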

Shahar Tal retweeted

All I Want for Christmas Is Your Secrets: LangGrinch hits LangChain Core #HackerNews

cyata.ai/blog/langgrinc…

Shahar Tal retweeted

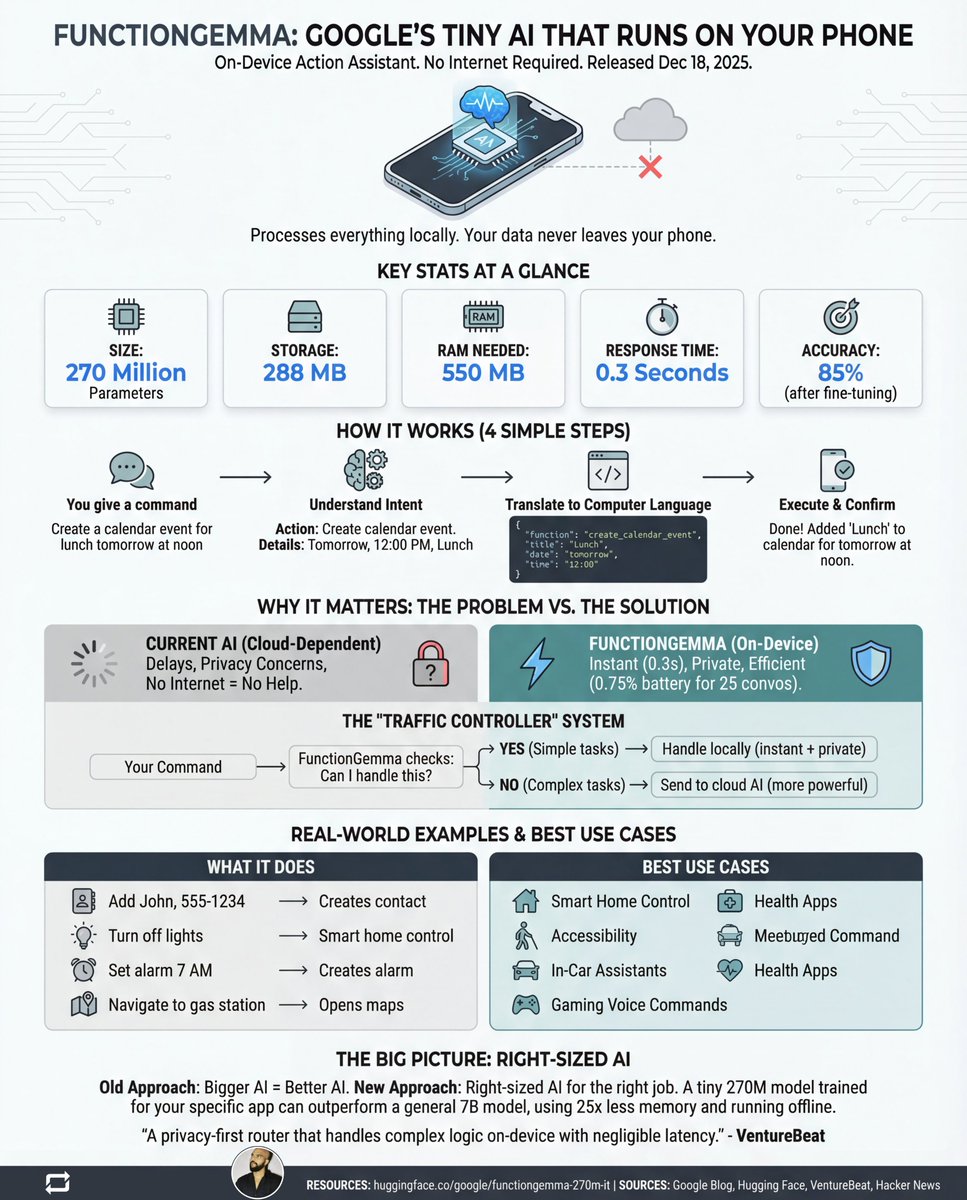

Google just quietly dropped an AI that runs on your phone and doesn't need the internet.

- 270 million parameters.

- 100% private.

- No servers.

- No cloud.

- No data leaving your device.

It's called FunctionGemma.

Released December 18, 2025.

And it does something wild:

It turns your voice commands into REAL actions on your phone.

No internet required.

No data leaving your device.

No waiting for servers.

Just you and your phone.

That's it.

Let me break down why this matters:

Current AI assistants work like this:

You speak → Words go to the cloud → Server processes → Answer returns

The problem?

→ Slow (internet round-trip)

→ Privacy nightmare (your data travels everywhere)

→ Useless offline (no signal = no help)

FunctionGemma flips this completely.

Everything happens ON your device.

Response time? 0.3 seconds.

Battery drain? 0.75% for 25 conversations.

File size? 288 MB.

That's smaller than most mobile games.

Here's how it actually works:

Step 1: You say "Add John to contacts, number 555-1234"

Step 2: FunctionGemma understands your intent

Step 3: Translates it to code your phone understands

Step 4: Your phone executes it instantly

Step 5: Done. Contact saved. No cloud involved.
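The five steps amount to turning an utterance into a structured function call and dispatching it to a local handler. Here is a minimal sketch of that flow. The model is replaced by a hard-coded stand-in (`fake_model`, which is not FunctionGemma), and the JSON call format and the `add_contact` handler are hypothetical.

```python
import json
import re

CONTACTS = {}

def add_contact(name, number):
    """Local handler standing in for the phone's contacts API."""
    CONTACTS[name] = number
    return f"Saved {name}"

# Registry of functions the model is allowed to call.
HANDLERS = {"add_contact": add_contact}

def fake_model(utterance):
    """Stand-in for the on-device model: returns a JSON function call or None."""
    match = re.match(r"Add (\w+) to contacts, number ([\d-]+)", utterance)
    if match is None:
        return None
    return json.dumps(
        {"name": "add_contact",
         "arguments": {"name": match.group(1), "number": match.group(2)}}
    )

def handle(utterance):
    raw_call = fake_model(utterance)      # steps 2-3: intent -> structured call
    if raw_call is None:
        return "Sorry, I did not understand that."
    call = json.loads(raw_call)
    handler = HANDLERS.get(call["name"])
    if handler is None:
        return f"Unknown function: {call['name']}"
    return handler(**call["arguments"])   # step 4: execute locally
```

Calling `handle("Add John to contacts, number 555-1234")` stores the contact in `CONTACTS` without any network access. Restricting dispatch to the `HANDLERS` registry keeps the model's output from invoking arbitrary code.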

The numbers that blew my mind:

• 270M parameters (6,600x smaller than GPT-4)

• 126 tokens per second

• 85% accuracy after fine-tuning

• 550 MB RAM usage

• Works 100% offline

But here's the real genius:

Google calls it the "Traffic Controller" approach.

Simple tasks? → Handled locally (instant + private)

Complex tasks? → Routed to cloud AI (when needed)

Best of both worlds.
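One way to picture the "Traffic Controller" idea is a router that tries the local model first and falls back to the cloud. The post does not say how the routing decision is made, so this sketch assumes the local model reports a confidence score. `Router`, `run_locally`, `run_in_cloud`, and the 0.8 threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

@dataclass
class Router:
    # Hypothetical local model: returns (result, confidence in [0, 1]);
    # result is None when the request is outside what it can handle.
    run_locally: Callable[[str], Tuple[Optional[str], float]]
    # Hypothetical cloud fallback for everything else.
    run_in_cloud: Callable[[str], str]
    threshold: float = 0.8  # assumed cut-off, not a figure from the post

    def route(self, request: str) -> Tuple[str, str]:
        """Return (where the request ran, result)."""
        result, confidence = self.run_locally(request)
        if result is not None and confidence >= self.threshold:
            return "local", result
        return "cloud", self.run_in_cloud(request)
```

Under this design, only requests the local model cannot handle confidently ever leave the device.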

What can it actually do?

→ "Set alarm for 7 AM" ✓

→ "Turn off living room lights" ✓

→ "Create meeting with Sarah tomorrow" ✓

→ "Navigate to nearest gas station" ✓

→ "Log that I drank 2 glasses of water" ✓

All processed locally. All private. All instant.

The honest limitations:

→ Can't chain multiple steps together (yet)

→ Struggles with indirect requests

→ 85% accuracy means 15% errors

→ Needs fine-tuning for best results

But that 58% → 85% accuracy jump after training?

That's the unlock.

Why should you care?

This isn't about one model.

It's about a fundamental shift:

OLD thinking: Bigger AI = Better AI

NEW thinking: Right-sized AI for the right job

A tiny 270M model trained for YOUR app can outperform a general 7B model.

While using 25x less memory. While running completely offline. While keeping all data private.
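The figures quoted in this thread can be sanity-checked with a little arithmetic. The sketch below uses only numbers from the post and assumes 1 MB = 10**6 bytes. The "25x less memory" claim matches the parameter ratio only if both models are stored at the same precision, and the large-model size is merely what the post's 6,600x multiplier implies, not a confirmed figure.

```python
PARAMS_SMALL = 270e6      # 270M parameters (from the post)
PARAMS_7B = 7e9           # "general 7B model" (from the post)
FILE_SIZE_BYTES = 288e6   # 288 MB, assuming 1 MB = 10**6 bytes

# Parameter ratio behind the "25x less memory" claim.
ratio_vs_7b = PARAMS_7B / PARAMS_SMALL                # about 25.9

# Model size implied by "6,600x smaller" (an implication, not a known fact).
implied_large_model = 6_600 * PARAMS_SMALL            # about 1.78e12 parameters

# Average storage per parameter implied by the quoted file size.
bits_per_param = FILE_SIZE_BYTES * 8 / PARAMS_SMALL   # about 8.5 bits

# Battery cost per conversation from "0.75% for 25 conversations".
battery_per_conversation = 0.75 / 25                  # 0.03 percent
```

At roughly 8.5 bits per parameter, the quoted file size is consistent with weights stored at about 8-bit precision plus some overhead, although the post does not state the format.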

The future of AI isn't just in data centers.

It's in your pocket.

And it just got a lot more real.

Want to try it?

→ Download: ollama pull functiongemma

→ Docs: ai.google.dev/gemma/docs/fun…

→ Model: huggingface.co/google/functio…

PS:) Like, Repost and Bookmark!

If this was useful - Follow for more AI breakdowns
