Charbellakis@charbofficiel

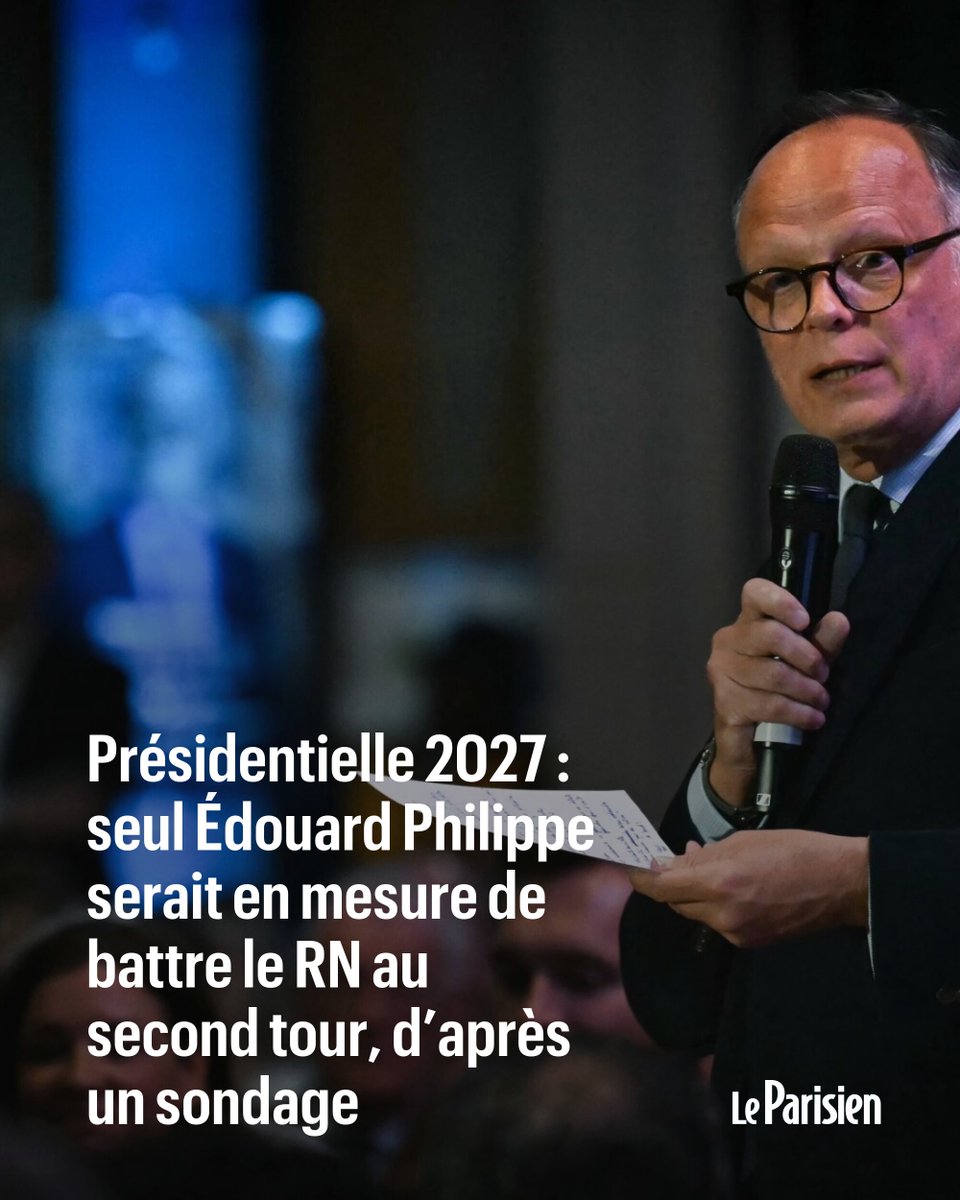

🔥 THE PHILIPPE SCENARIO IS TAKING SHAPE!

A poll comes out… and, as if by magic, it's the same scenario: the RN wins everywhere… except against Édouard Philippe.

And then they roll out the perfect storytelling for you:

👉 “the only one able to beat the RN”

👉 “the credible candidate”

👉 “the reasonable fallback”

Meanwhile, Jordan Bardella is shown well ahead in the first round,

Jean-Luc Mélenchon is shown losing in some head-to-head matchups, and everything converges on a single name.

This is the usual staging.

This circus is only just beginning… Wait for what comes next…

Source: Le Figaro with AFP, Elabe poll for BFMTV / La Tribune Dimanche (March 2026)

#politique #sondage #présidentielle #france #analyse