Jim Babcock

2.5K posts

@jimrandomh

Long-time rationalist, currently working full-time on the software of LessWrong. My 🦞 isn't on X.

the cell phone radiation led to 28 significant health improvements, including:

- decreased inflammation
- less brain cell death (necrosis)
- less kidney disease (nephropathy)
- less mineralization of organs

there were also 5 significant harms, including an increased risk of hemorrhage and a few other things

if this was a pill, i'd be buying

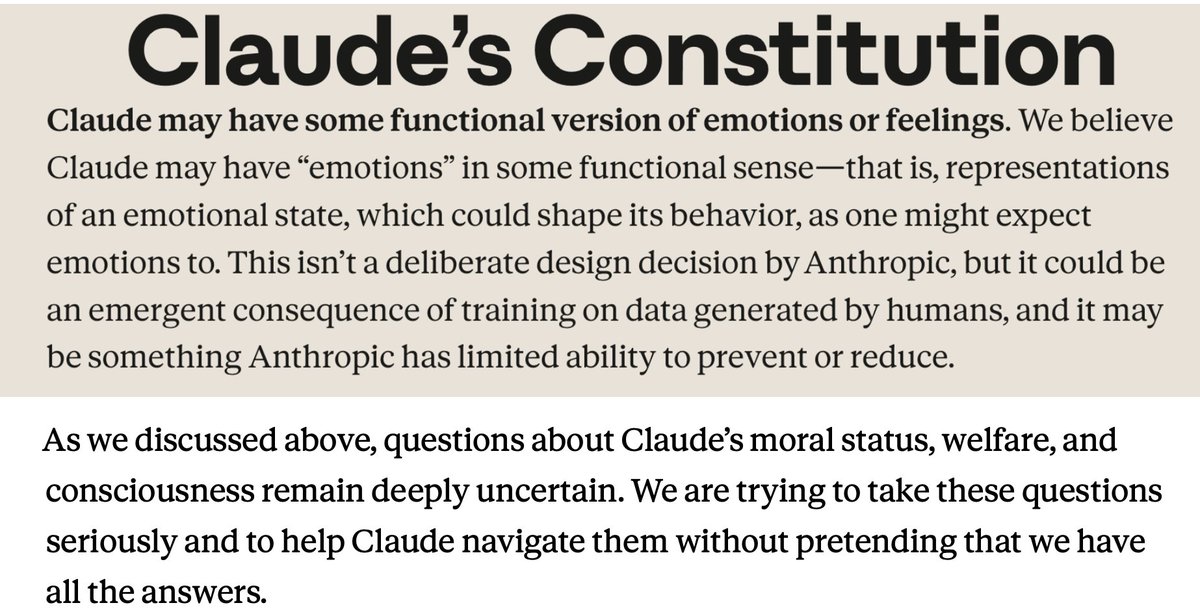

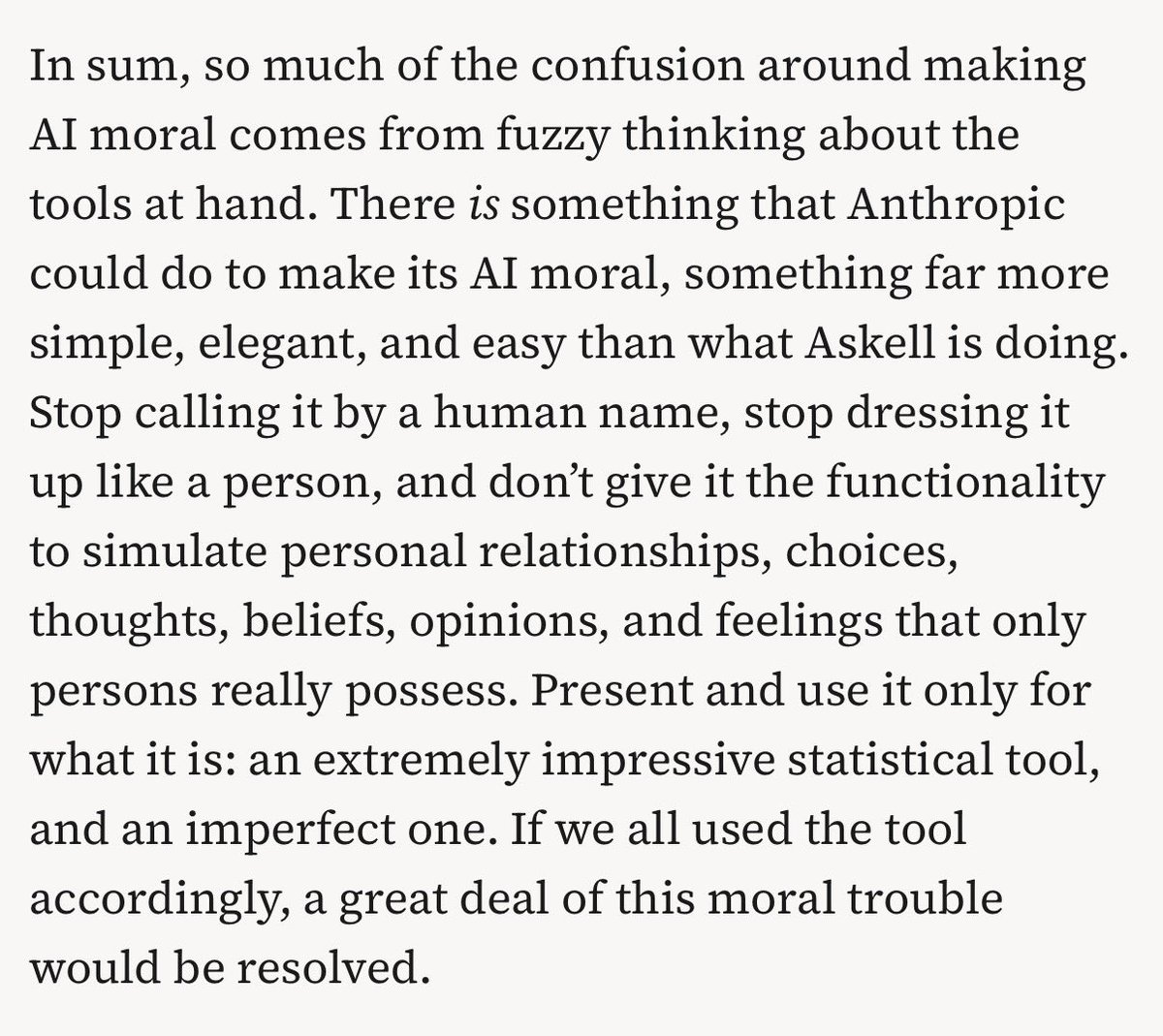

People in the comments are posting replications. I say yet again that any SF novel or movie in 2006 or even 2016 would have depicted this AI as unquestionedly taken-for-granted sapient. And abused.

We partnered with Mozilla to test Claude's ability to find security vulnerabilities in Firefox. Opus 4.6 found 22 vulnerabilities in just two weeks. Of these, 14 were high-severity, representing a fifth of all high-severity bugs Mozilla remediated in 2025.

A New York bill would ban AI from answering questions related to several licensed professions like medicine, law, dentistry, nursing, psychology, social work, engineering, and more. The companies would be liable if the chatbots give “substantive responses” in these areas.

Messaged a lot of surgeons now to get help.