จิตร์ทัศน์

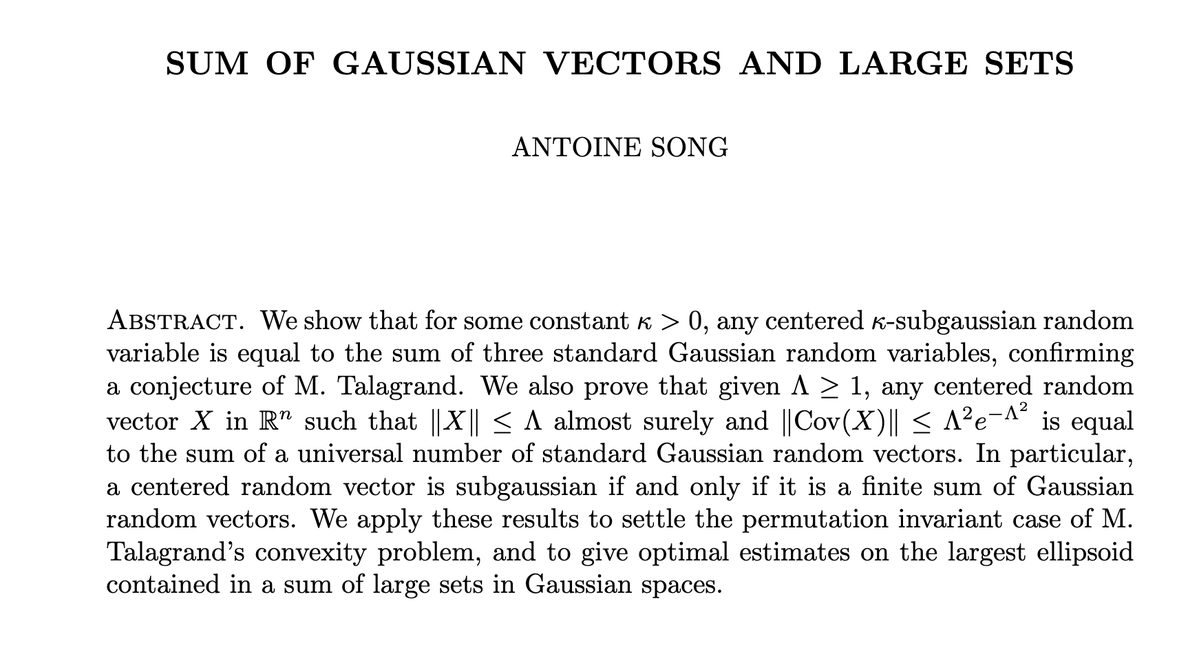

This is one of the coolest such examples! See comments from Lichtman below, who proved the related primitive set conjecture arxiv.org/abs/2202.02384

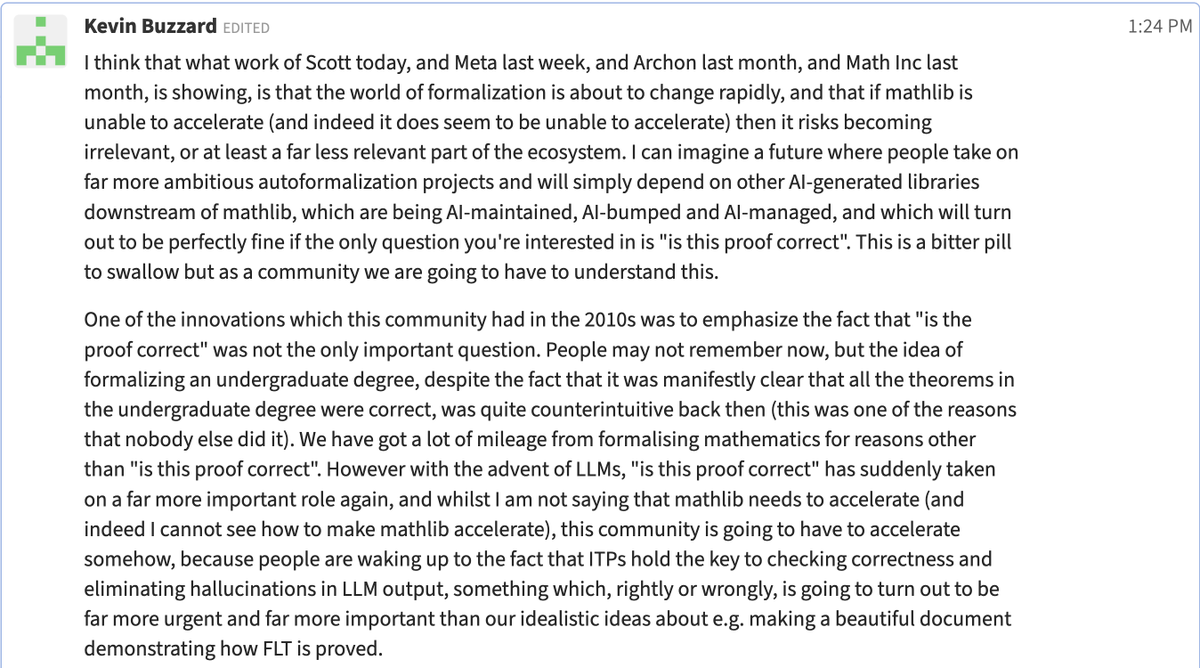

A counterpoint, also on Zulip:

One more Zulip comment worth reposting here:

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI

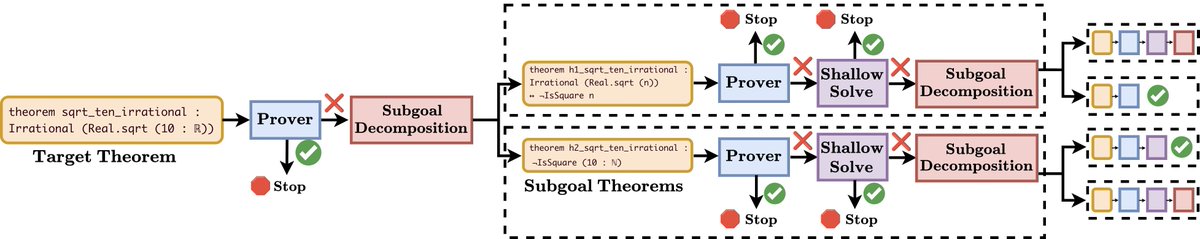

A decade ago, I abandoned the first math problem my PhD advisor ever gave me. This week, I finally solved it—and formally verified it—using @AnthropicAI's Claude Code, @OpenAI's Codex, and @HarmonicMath's Aristotle. Here’s how AI turned my 10-year-old notes into a 15,000-line Lean 4 proof. 🧵

🔊@UofMaryland will lead a $2.6M @DARPA-funded effort to accelerate mathematical discovery with #AI. Led by @MTHajiaghayi, the GENIUS project aims to develop AI systems that reason alongside mathematicians to solve complex problems. 🔗 go.umd.edu/Hajiaghayi-3-2…