James

5.5K posts

@jmac_ai

Ask me about #ReinforcementLearning. #AI research @SonyAI_global. RL for games, robotics, and other real-world applications. Views and tweets are my own.

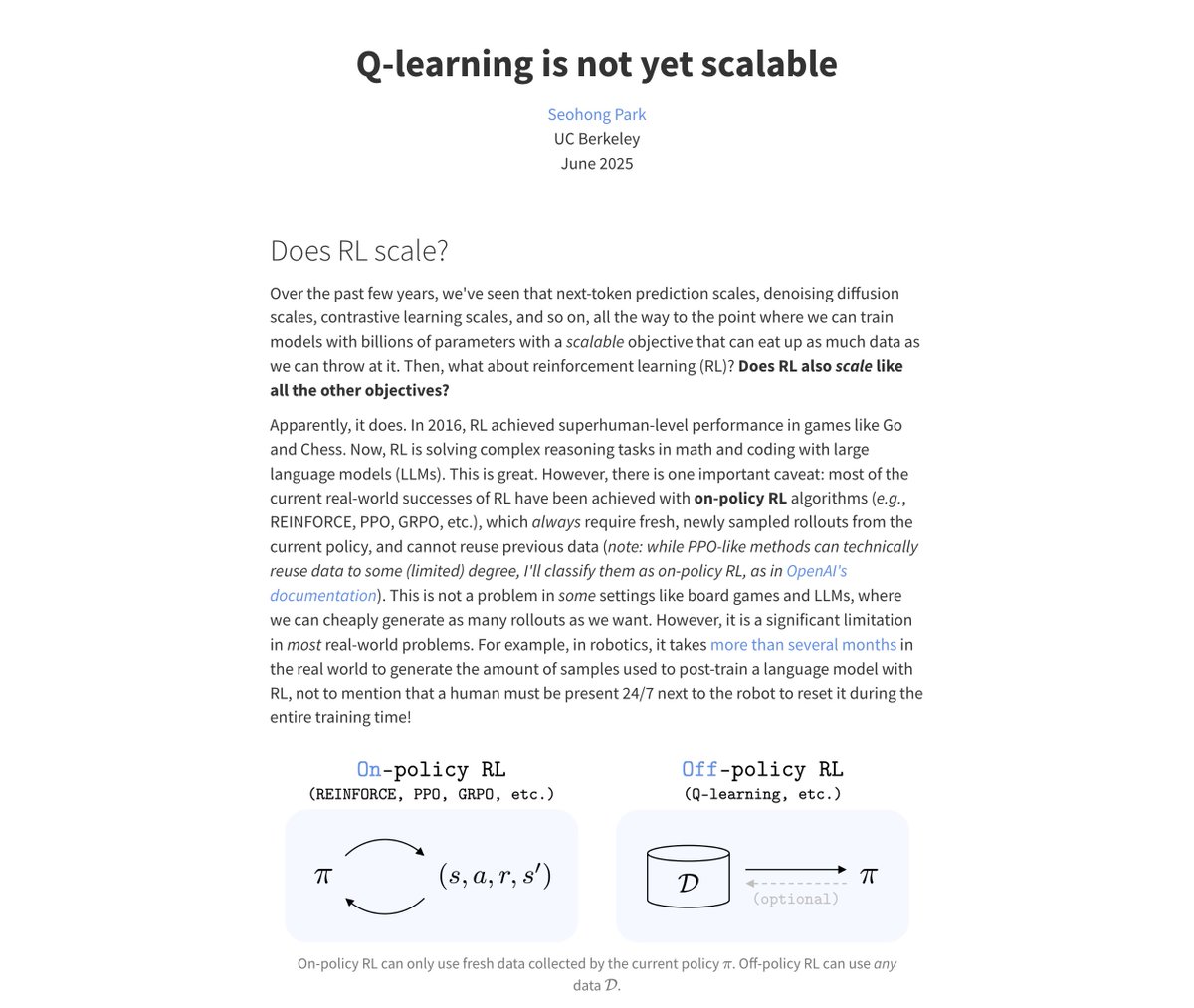

Today, ICE officers in Minneapolis were conducting targeted operations when rioters began blocking ICE officers and one of these violent rioters weaponized her vehicle, attempting to run over our law enforcement officers in an attempt to kill them—an act of domestic terrorism. An ICE officer, fearing for his life, the lives of his fellow law enforcement and the safety of the public, fired defensive shots. He used his training and saved his own life and that of his fellow officers. The alleged perpetrator was hit and is deceased. The ICE officers who were hurt are expected to make full recoveries. This is the direct consequence of constant attacks and demonization of our officers by sanctuary politicians who fuel and encourage rampant assaults on our law enforcement who are facing 1,300% increase in assaults against them and an 8,000% increase in death threats. This is an evolving situation, and we will give the public more information as soon as it becomes available.

BOW WOW WOW, had the pleasure to try my voice on rock music with @huskybythegeek and I love it! Happy late PC release to Final Fantasy VII Rebirth 🐶 #FF7Rebirth

FFVII Rebirth - Bow wow wow (Stamp Battle) goes Rock ft. @PernelleMusic

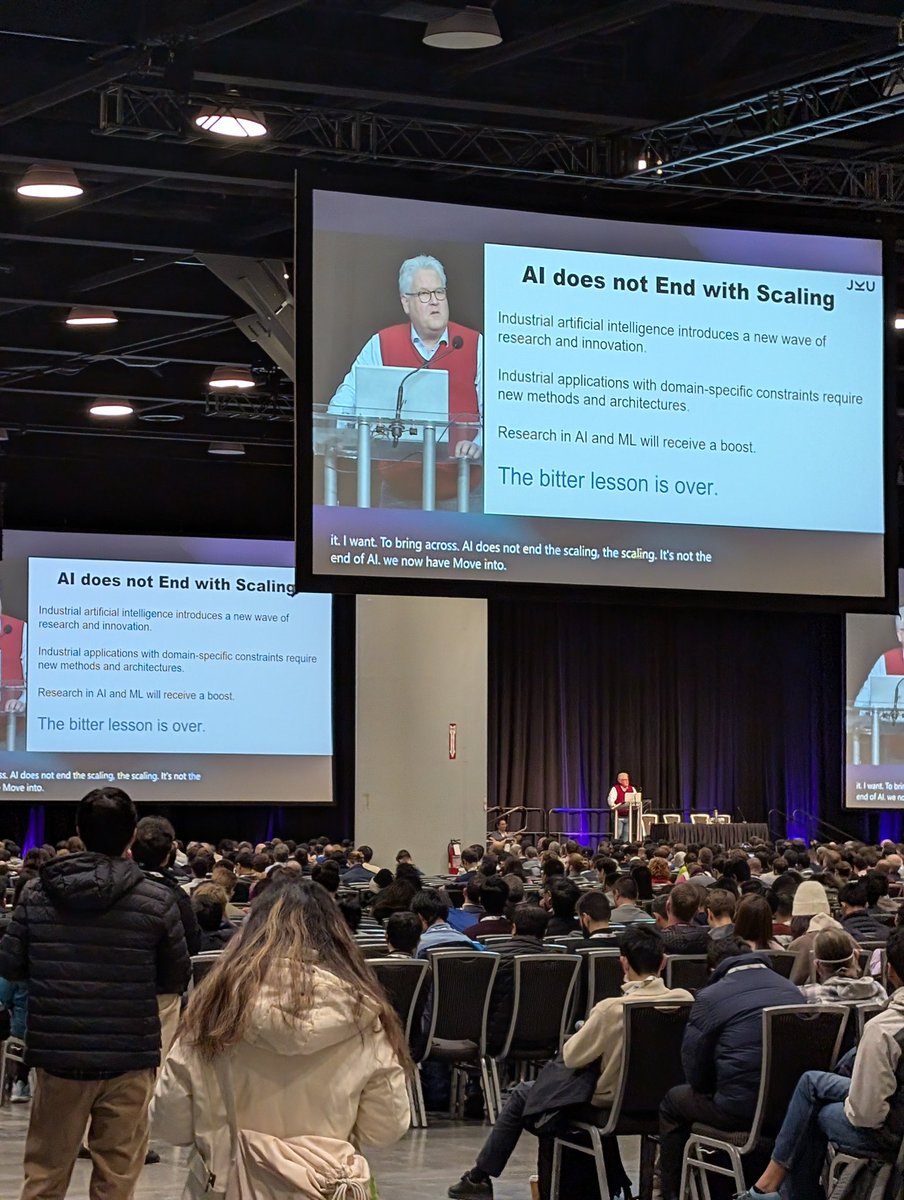

"For better or worse, we are building a God." Tim Urban of @waitbutwhy fame describes how he started down the rabbit hole of AI and realized the importance of the topic.