Joe de Moraes

@joedemoraes

Master Your Mindset, Transform Your Software Engineering Career https://t.co/Xk6YtaZGXe

Steve Jobs on one of the secrets of life.

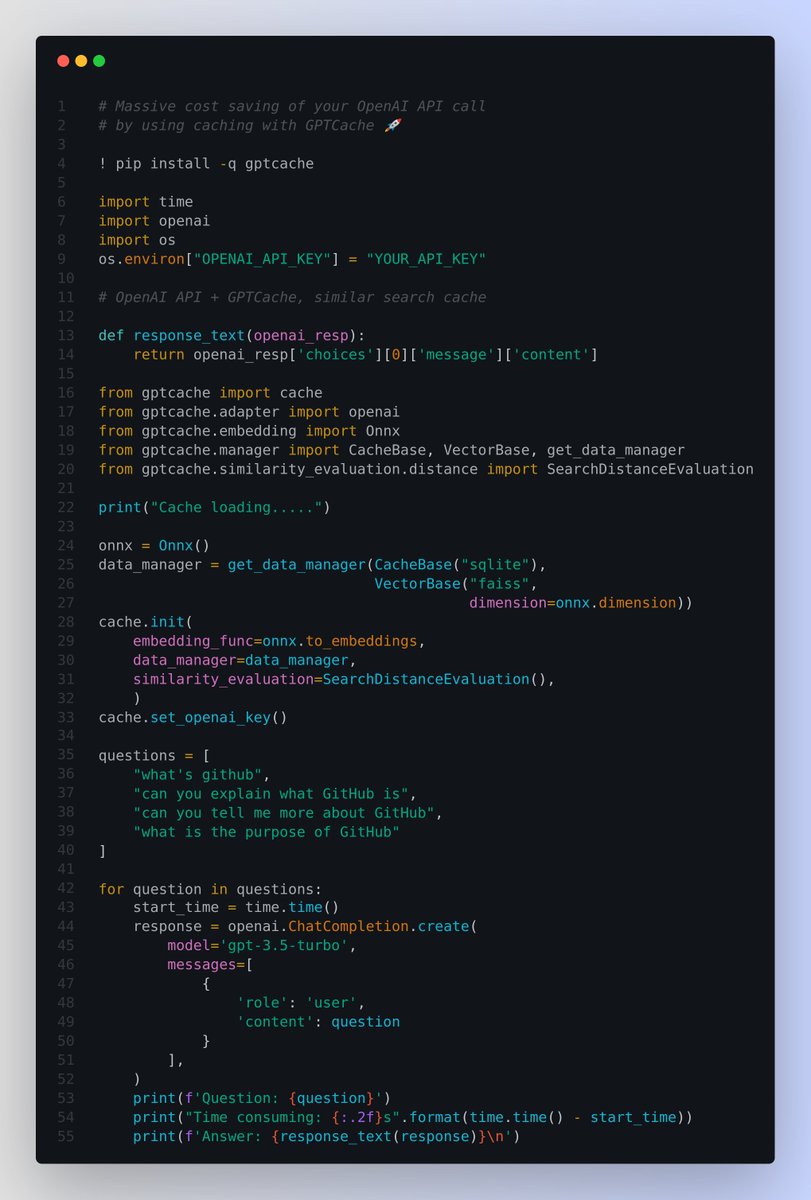

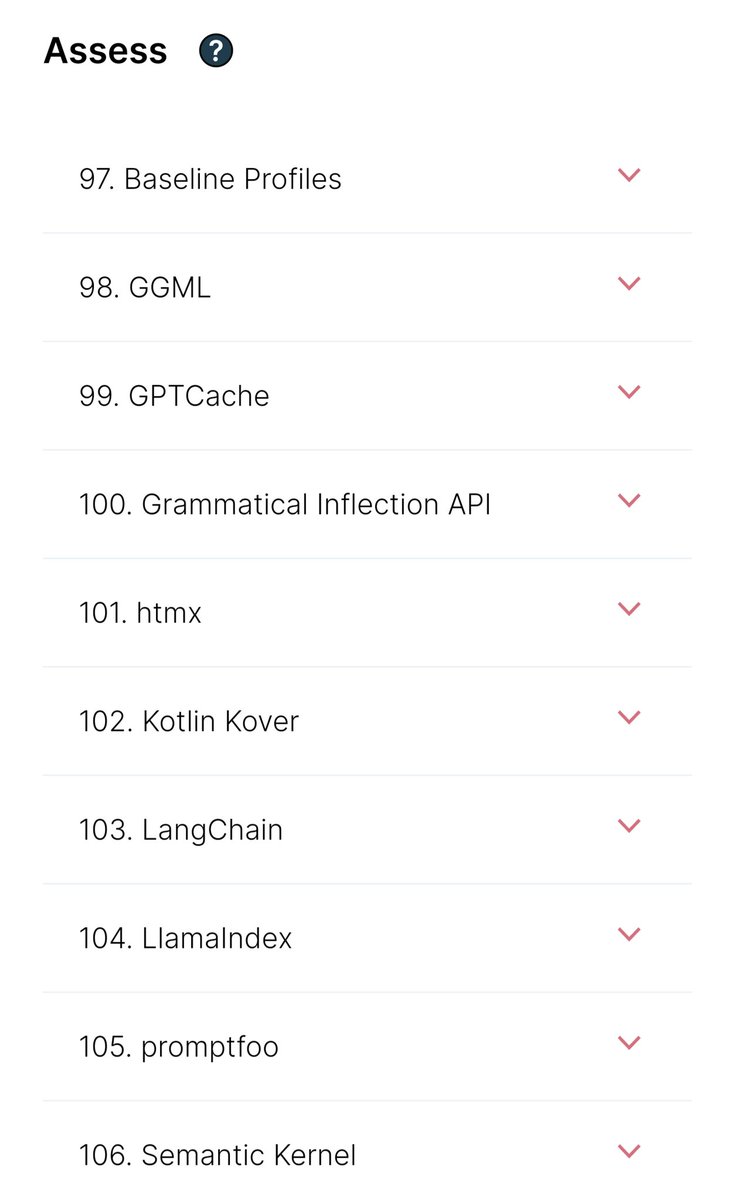

Cut the cost of your OpenAI API / ChatGPT API calls by 50% or more by caching with GPTCache 🚀

🟠 Much faster response times

🟠 A way around rate-limit restrictions

🟠 Much better scalability for your application, because the load on the LLM service drops

🤔 The problem it solves

Exact-match caching is not very effective for LLMs. Queries are complex and highly variable, so the same question is rarely worded identically twice and the cache hit rate stays low.

🤔 How does it work?

To address this, GPTCache adopts alternative strategies such as semantic caching. Semantic caching identifies and stores similar or related queries, which raises the probability of a cache hit and improves overall caching efficiency.

GPTCache uses embedding algorithms to convert queries into embeddings, and a vector store to run similarity search over those embeddings. This lets it find and retrieve similar or related queries from cache storage. Users can customize their semantic cache and can even develop their own implementations to suit their specific needs.

GPTCache offers three metrics to gauge its performance, which help developers optimize their caching systems:

📌 Hit Ratio: the number of requests the cache fulfills successfully, compared to the total number of requests it receives. A higher hit ratio indicates a more effective cache.

📌 Latency: the time it takes to process a query and retrieve the corresponding data from the cache. Lower latency means a more efficient and responsive caching system.

📌 Recall: the proportion of queries served by the cache out of the total number of queries that should have been served by the cache. A higher recall means the cache is serving the appropriate content.
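To make the semantic caching idea concrete, here is a minimal sketch in plain Python. It is not GPTCache's API: `ToySemanticCache`, `embed`, `cosine`, `answer_with_cache` and the `threshold` value are all hypothetical names chosen for illustration, the "embedding" is a simple bag-of-words count rather than a learned model, and the "vector store" is just a list scanned linearly. It only shows the flow described above: embed the query, search for a similar stored query, return the cached answer on a hit, otherwise call the LLM and store the result. It also tracks the hit ratio.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding (assumption): bag-of-words counts instead of a learned model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToySemanticCache:
    """Hypothetical semantic cache: a list stands in for the vector store."""

    def __init__(self, threshold=0.7):
        self.threshold = threshold  # minimum similarity that counts as a hit
        self.entries = []           # (embedding, answer) pairs
        self.hits = 0
        self.requests = 0

    def lookup(self, query):
        """Return the answer of the most similar stored query, or None on a miss."""
        self.requests += 1
        query_vec = embed(query)
        best_score, best_answer = 0.0, None
        for stored_vec, answer in self.entries:
            score = cosine(query_vec, stored_vec)
            if score > best_score:
                best_score, best_answer = score, answer
        if best_score >= self.threshold:
            self.hits += 1
            return best_answer
        return None

    def store(self, query, answer):
        self.entries.append((embed(query), answer))

    def hit_ratio(self):
        """Hits divided by total requests received."""
        return self.hits / self.requests if self.requests else 0.0


def answer_with_cache(cache, query, call_llm):
    """Serve from the cache when a similar query exists, else call the LLM."""
    cached = cache.lookup(query)
    if cached is not None:
        return cached
    answer = call_llm(query)  # call_llm is any function mapping a prompt to a reply
    cache.store(query, answer)
    return answer
```

With this sketch, "What is the capital of France?" and "What's the capital of France?" share most of their tokens, so the second query is served from the cache without a second LLM call, while an exact-match cache would miss it. The threshold is the knob to tune: set it too low and the cache returns answers to questions that only look similar, set it too high and it behaves like an exact-match cache again.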