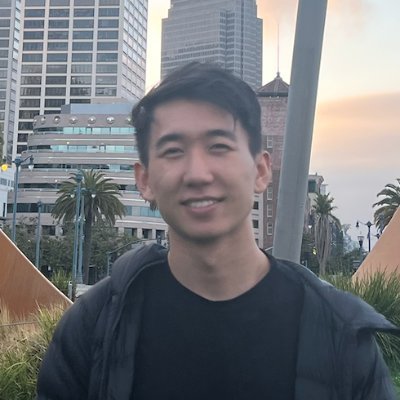

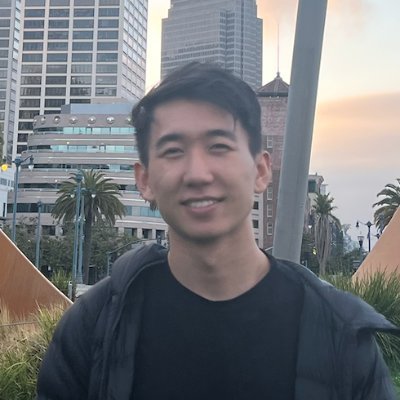

John Qian

@johnlqian

Building @MatricesAI, prev @AdeptAILabs, founding eng at @weights_biases. I like world modeling

Today, we are announcing Proximal. Proximal is a research lab for data. Our core belief is that data which is complex enough to teach today’s frontier models is not bottlenecked by domain experts, but by great ideas and excellent software.

We are excited about a world in which coding agents can autonomously run for multiple weeks, solve the hardest technical problems and discover novel ideas that advance progress in various domains of science and engineering. We believe that we are not far from this future, but that the biggest bottleneck preventing us from achieving it is training data.

Many companies work on data, but most of them are approaching it the wrong way. Historical capability breakthroughs are the result of creative engineers discovering scalable data collection methods, not thousands of contractors manually writing task demonstrations. Inevitably, the potential impact of human data will become smaller and smaller as model capabilities increase: agents are already outperforming most humans in many domains - the number of experts that are capable of judging model outputs shrinks with every new model release.

Proximal is a new data company. We are not a recruiting firm or a talent marketplace, but a research and engineering organization that treats data as a problem which deserves the same level of rigor as work on training algorithms and model architectures. We think that this is the most impactful work towards agents that can autonomously solve complex technical problems, and intend to share our research and progress in the open.

“End of the exponential” is such an annoying, ambiguous phrase, esp. from a gifted communicator. So we’re going to reach the limit at infinity? Or the growth will become sub-exponential? 🙄

We’re rapidly approaching the point where, if your QA process for RL tasks requires humans to actually solve the tasks, your pipeline is too slow. The only tasks worth creating nowadays are ones that are easier to create than they are to solve. The easiest way to do this is to take something functional, break or remove a component, and make the task about recovery or repair.
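
The break-then-repair idea can be sketched roughly as follows. This is a minimal illustration, not a description of any real pipeline: `RepairTask`, `make_repair_task`, `passes_checks`, and the `median` example are all hypothetical names invented for this sketch. The point it tries to show is that QA at creation time needs no human solver: the original must pass the checks and the broken copy must fail them.

```python
from dataclasses import dataclass

# A small working component (hypothetical example) that the task is built from.
WORKING_SOURCE = '''
def median(values):
    ordered = sorted(values)
    mid = len(ordered) // 2
    if len(ordered) % 2 == 1:
        return ordered[mid]
    return (ordered[mid - 1] + ordered[mid]) / 2
'''


@dataclass
class RepairTask:
    prompt: str
    broken_source: str     # what the agent is given
    reference_source: str  # known-good answer; exists before anyone solves the task


def passes_checks(source: str) -> bool:
    """Run the checks against a candidate source string; any error counts as a fail."""
    namespace = {}
    try:
        exec(source, namespace)
        median = namespace["median"]
        return (
            median([3, 1, 2]) == 2
            and median([4, 1, 3, 2]) == 2.5
            and median([7]) == 7
        )
    except Exception:
        return False


def make_repair_task(source: str, old: str, new: str) -> RepairTask:
    """Break one component of working code and verify the break automatically."""
    if source.count(old) != 1:
        raise ValueError("mutation target must occur exactly once")
    broken = source.replace(old, new)
    # Automatic QA: the original must pass and the mutated copy must fail.
    if not passes_checks(source):
        raise ValueError("reference source does not pass its own checks")
    if passes_checks(broken):
        raise ValueError("mutation did not break anything the checks can see")
    return RepairTask(
        prompt="median() returns wrong results for some inputs. Repair it.",
        broken_source=broken,
        reference_source=source,
    )


# Creating the task is one string edit; solving it requires finding the bug.
task = make_repair_task(WORKING_SOURCE, "ordered[mid - 1]", "ordered[mid + 1]")
```

A submitted fix can then be graded with the same `passes_checks` call, so both creating and verifying the task stay cheap compared with solving it.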