Jon Tow


I am excited to open-source PipelineRL - a scalable async RL implementation with in-flight weight updates. Why wait until your bored GPUs finish all sequences? Just update the weights and continue inference! Code: github.com/ServiceNow/Pip… Blog: huggingface.co/blog/ServiceNo…

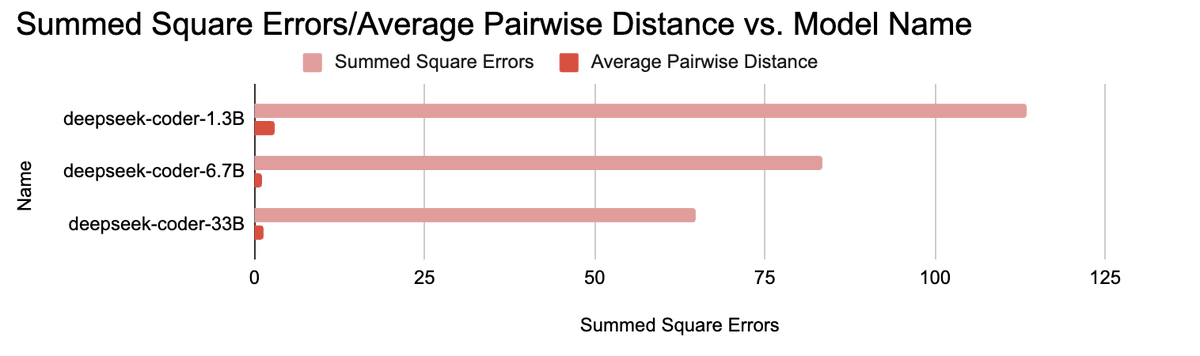

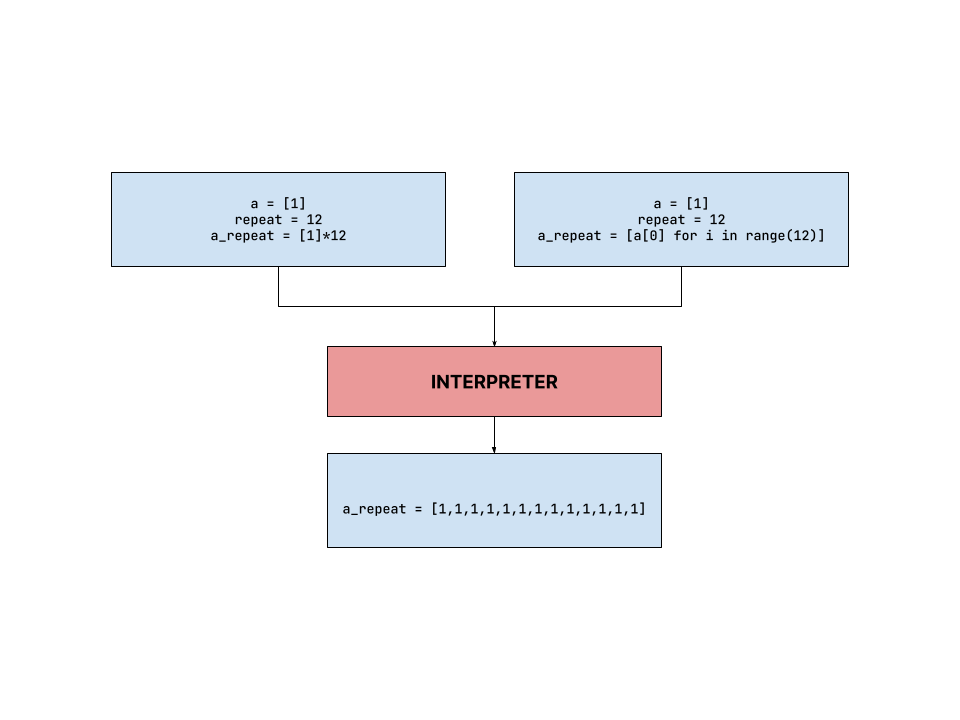

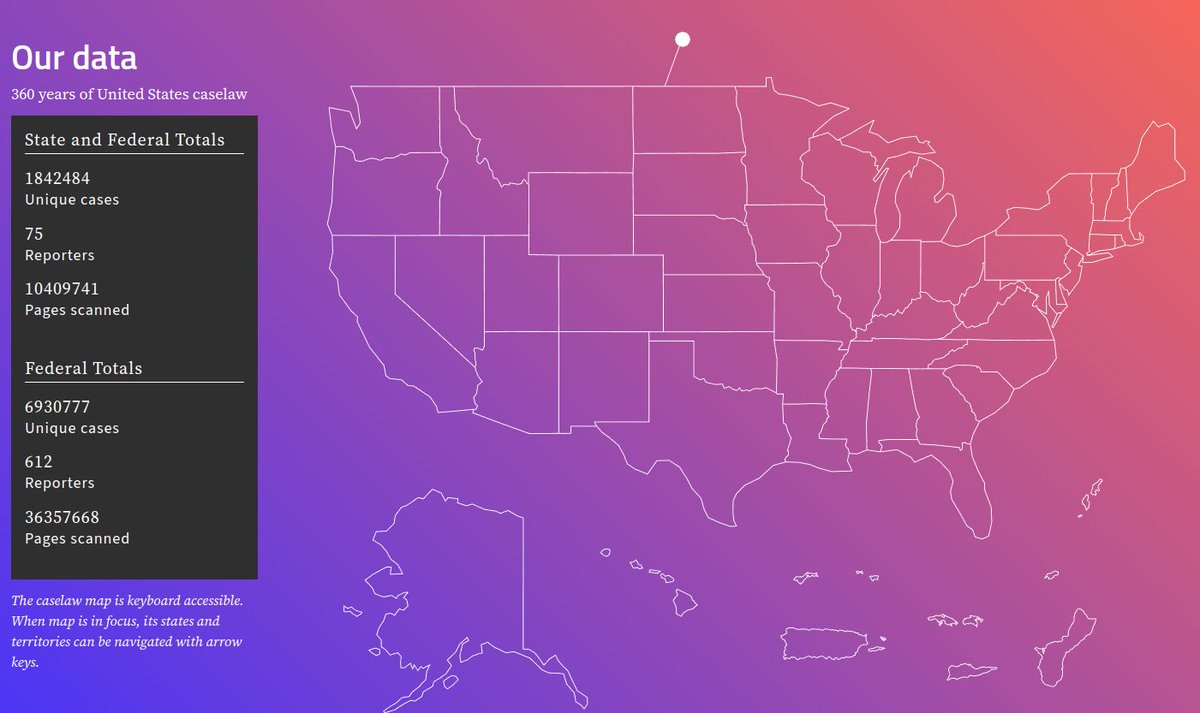

Excited to share our new paper, Lessons From The Trenches on Reproducible Evaluation of Language Models! In it, we discuss common challenges we’ve faced evaluating LMs, and how our library, the Evaluation Harness, is designed to mitigate them 🧵 arxiv.org/abs/2405.14782

Stable LM 2 12B is a pair of powerful 12 billion parameter language models trained on multilingual data in English, Spanish, German, Italian, French, Portuguese, and Dutch, featuring a base and instruction-tuned model. You can now try the model here: huggingface.co/stabilityai/st… (1/3)

Introducing three new open-source PaLM models trained at a context length of 8k on C4. Open-sourcing LLMs is a necessity for the fair and equitable democratization of AI. The models, with 150M, 410M, and 1B parameters, are available to download and use here: github.com/conceptofmind/…