Jun Kim


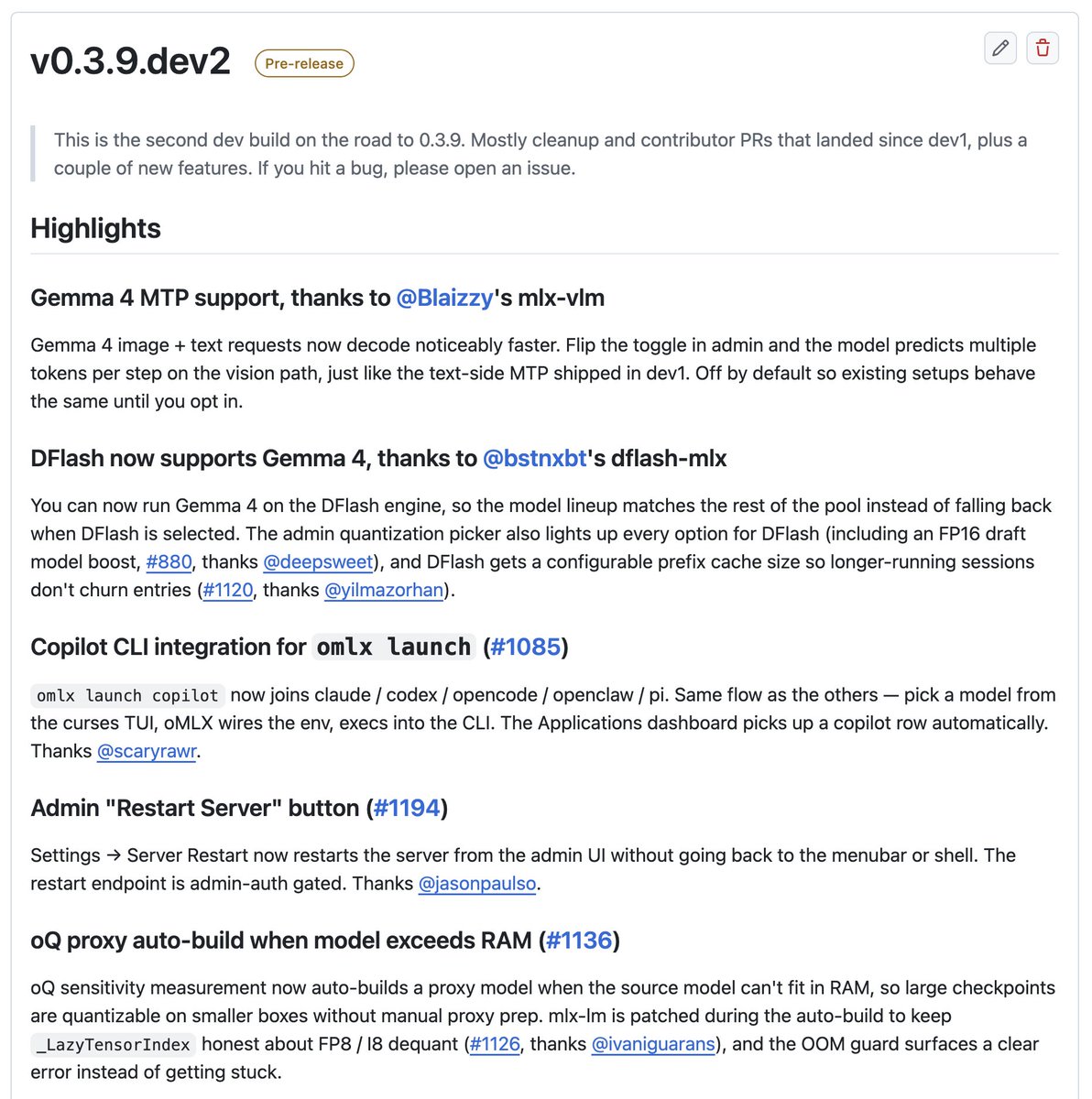

oMLX 0.3.9.dev2 released. Highlights:

- Gemma 4 MTP on the vision path (thanks to @Prince_Canuma's mlx-vlm). Image+text decodes much faster now
- Gemma 4 on the DFlash engine (thanks to @bstnxbt's dflash-mlx)
- ParoQuant support
- omlx launch copilot joins claude / codex / opencode / openclaw / pi
- Restart server button right in the admin UI
- oQ auto-builds a proxy when the model can't fit in RAM

Plus a lot of bug fixes and 20 new contributors in this cycle. Thanks everyone! github.com/jundot/omlx/re…

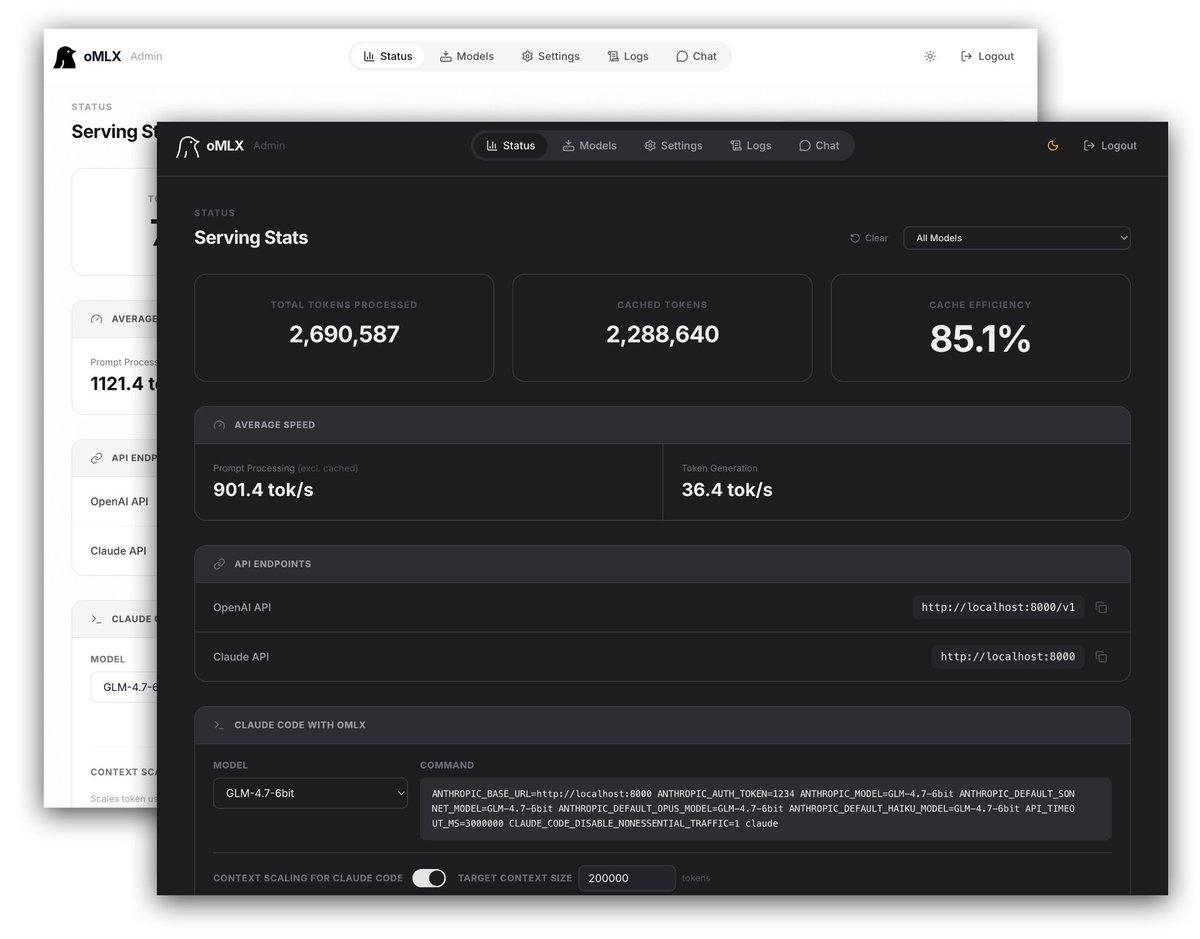

Two open-source MLX inference servers worth knowing about if you run LLMs on Mac:

MTPLX (@youssofal) uses a model's own MTP heads for speculative decoding. No draft model needed. ~63 tok/s on Qwen3.6-27B (M5 Max). Mathematically exact sampling too, not just greedy prefix matching.

oMLX (@jundot) has a tiered KV cache that persists to SSD across restarts. Huge for coding agents where you're sending the same codebase context repeatedly. Also serves LLMs, VLMs, embeddings, rerankers, and audio simultaneously.

They're solving different problems: MTPLX maximizes tok/s, oMLX maximizes workflow efficiency. Both have OpenAI + Anthropic-compatible APIs, and both work with Claude Code/OpenCode/Cursor out of the box. I run both depending on the task, and both are worth checking out.
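For readers wondering what "mathematically exact sampling" means in speculative decoding, here is a minimal sketch of the standard accept/reject rule from the speculative sampling literature. It is not MTPLX's code: the function name `speculative_step` and the toy distributions are hypothetical, and in an MTP setup the draft distribution `q` would presumably come from the model's own MTP heads rather than a hand-written list.

```python
import random

def speculative_step(p, q, rng):
    """One accept/reject step of speculative sampling (illustrative only).

    p: target-model probabilities over the vocabulary
    q: draft probabilities over the same vocabulary
    Returns (token, accepted).
    """
    vocab = range(len(p))
    # The draft proposes a token sampled from q.
    x = rng.choices(vocab, weights=q, k=1)[0]
    # Accept the proposal with probability min(1, p[x] / q[x]).
    if rng.random() < min(1.0, p[x] / q[x]):
        return x, True
    # On rejection, resample from the residual max(0, p - q);
    # rng.choices normalizes the weights for us.
    residual = [max(0.0, pi - qi) for pi, qi in zip(p, q)]
    x = rng.choices(vocab, weights=residual, k=1)[0]
    return x, False

# Toy check: emitted tokens should follow p, even though proposals come from q.
rng = random.Random(0)
p = [0.6, 0.3, 0.1]  # hypothetical target distribution
q = [0.2, 0.5, 0.3]  # hypothetical draft distribution
n = 100_000
counts = [0] * len(p)
for _ in range(n):
    token, _ = speculative_step(p, q, rng)
    counts[token] += 1
freqs = [c / n for c in counts]
print(freqs)
```

The printed frequencies should land close to `p`, which is the point of the rule: the draft only changes how fast tokens arrive, not which distribution they are drawn from. Greedy prefix matching, by contrast, only accepts a draft token when it equals the target's argmax, so it is exact only for greedy decoding.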

Mac-optimized LLM inference server github.com/jundot/omlx

Watching @awnihannun at @ollama

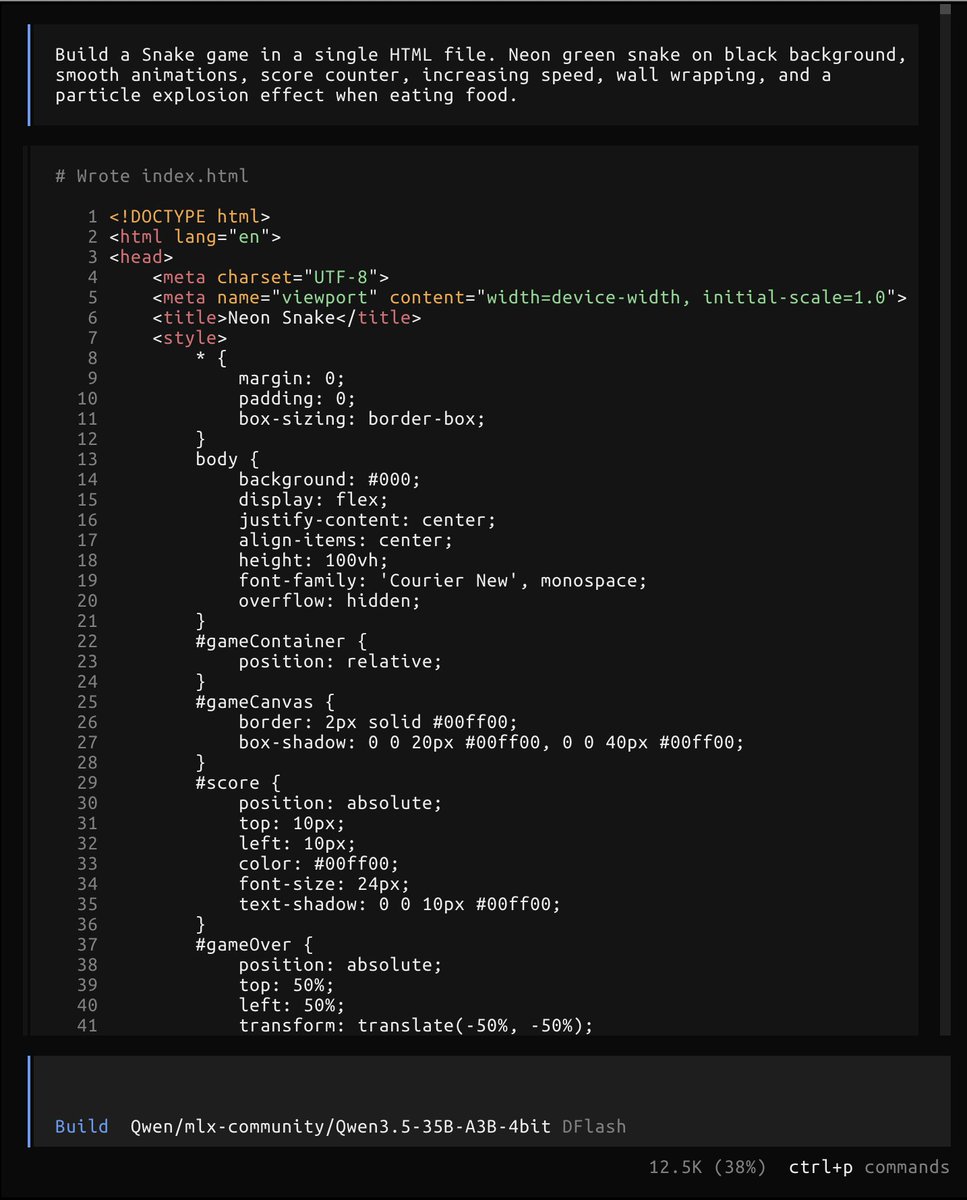

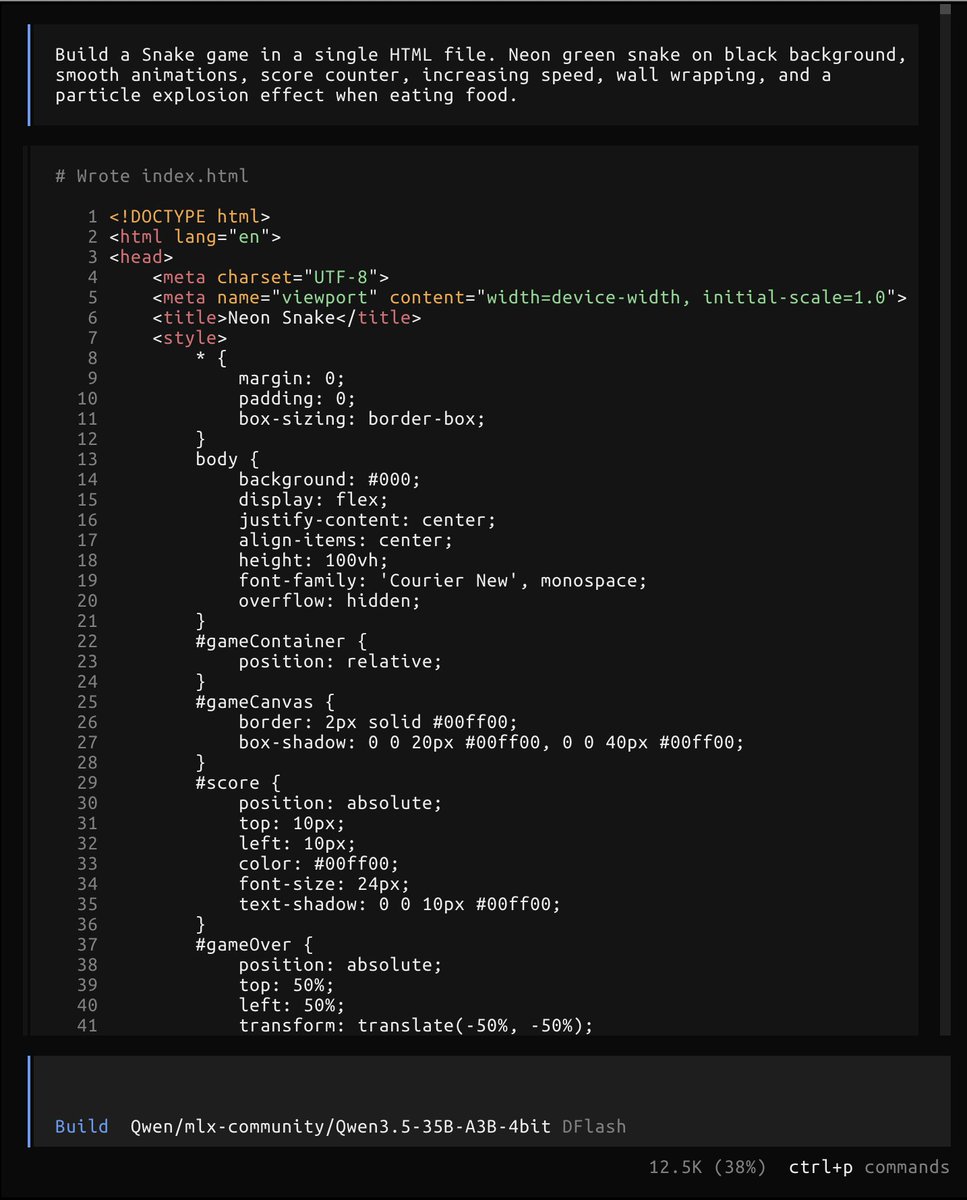

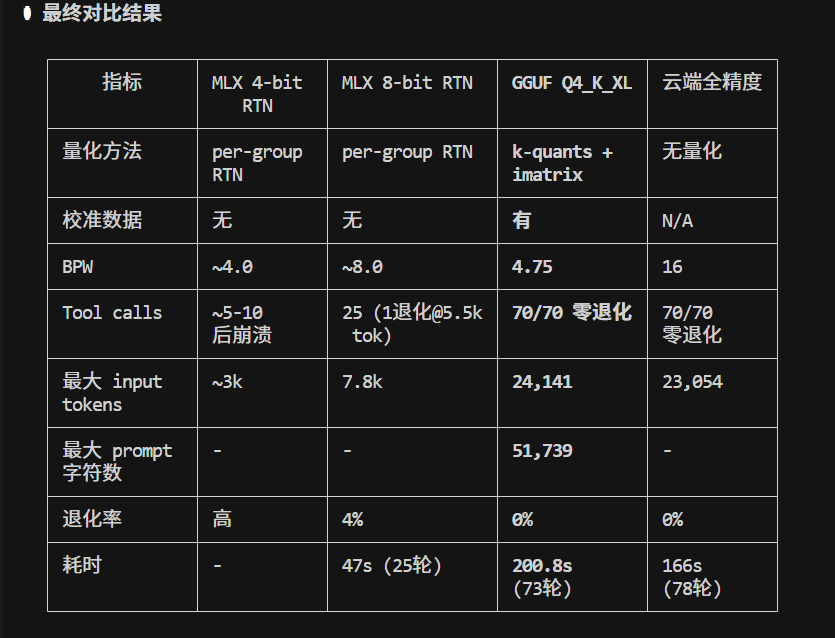

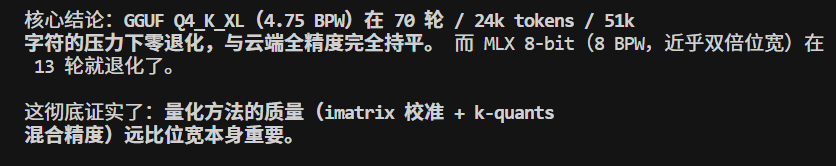

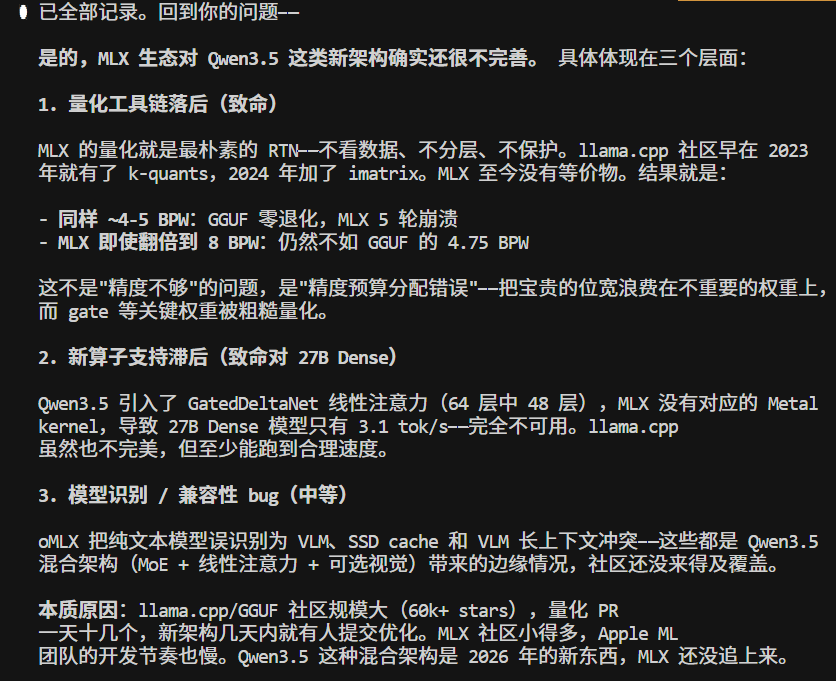

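Never mind, the lower-tier Qwen3.5 models aren't suited to productive work with Claude Code. MLX/Qwen3.5-35B-8bit is fast and reasonably smart, but it's not a good fit for Claude Code because it degrades easily, starting around round 13 or so. Since a single task commonly takes five or six read/write/bash tool calls, that basically makes it very hard to use in production.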