Joonho Lee

@junja941

Roboticist at Neuromeka, KR

I'm uploading a recording here to make up for the cut-off during our onsite presentation at @NeurIPSConf #NeurIPS2025. 🙏 We are deeply grateful for your support and for encouraging us to upload this recording. ⭐️ Despite the interruption, our work was recognized with an Outstanding Paper Award.

We present a "hybrid system" that supplements conventional automation with "learning" for task- and safety-level adaptiveness. Deployed in a factory for motor cable soldering (< 0.6 mm tolerance), it handled 108 motors with a 99.4% success rate (SR), using < 20 min of data per task. Paper: arxiv.org/abs/2604.22235

Heard some people like wheels? 😁 Humanoid robots are the ideal form of general-purpose robot (perfect for general AI and human-derived data). They can work without wheels, but they can also have wheels if they want. Whatever works.

I don't think the average person realizes how often humanoid robots break.

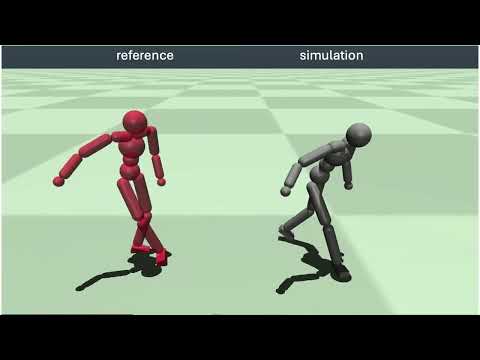

We recently explored how to learn a time-varying linear (TVL) policy for character control. It works surprisingly well! In simulation, a TVL policy can handle every DeepMimic-style task we throw at it. No neural net at deployment, just a sequence of matrices.
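To illustrate what "just a sequence of matrices" can mean at deployment time, here is a minimal sketch of evaluating a time-varying linear policy. It assumes the common affine form a_t = K_t s_t + k_t, with one gain matrix K_t and one offset vector k_t stored per timestep; the post does not say whether the authors use an offset term or how they index time (simulation step, motion phase, etc.), and the names `TVLPolicy`, `gains`, `offsets` and `act` are hypothetical.

```python
from dataclasses import dataclass
from typing import List

Matrix = List[List[float]]
Vector = List[float]


@dataclass
class TVLPolicy:
    """Hypothetical TVL policy (assumed form): a_t = K_t @ s_t + k_t."""
    gains: List[Matrix]    # K_t: one (action_dim x state_dim) matrix per timestep
    offsets: List[Vector]  # k_t: one action_dim vector per timestep (assumed present)

    def __post_init__(self) -> None:
        # Every timestep needs both a gain matrix and an offset vector.
        if len(self.gains) != len(self.offsets):
            raise ValueError("gains and offsets must have the same length")

    def act(self, t: int, state: Vector) -> Vector:
        # Look up this timestep's matrices; no network evaluation is involved.
        if not 0 <= t < len(self.gains):
            raise IndexError(f"timestep {t} outside policy horizon")
        K, k = self.gains[t], self.offsets[t]
        # Matrix-vector product plus offset, one output entry per action dim.
        return [
            sum(K_row[j] * state[j] for j in range(len(state))) + k_r
            for K_row, k_r in zip(K, k)
        ]


if __name__ == "__main__":
    # Toy two-step policy over a 2-D state and 2-D action.
    policy = TVLPolicy(
        gains=[[[1.0, 0.0], [0.0, 2.0]], [[0.5, 0.5], [0.0, 1.0]]],
        offsets=[[0.0, 0.0], [1.0, -1.0]],
    )
    print(policy.act(0, [3.0, 4.0]))  # [3.0, 8.0]
    print(policy.act(1, [3.0, 4.0]))  # [4.5, 3.0]
```

The appeal of this form is that deployment reduces to a table lookup plus one matrix-vector product per step, which is cheap and easy to inspect; how the matrices themselves are learned is a separate question the post leaves to the paper.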

Meet RIVR TWO, our next-generation robot designed for doorstep delivery and AI data collection at scale.