Jwala Dhamala

159 posts

@jwaladhamala

Scientist at Alexa AI-NU (she/her) #MachineLearning #NLProc #Fairness #RobustAI #DeepLearning #UncertaintyQuantification

As AI agents near real-world use, how do we know what they can actually do? Reliable benchmarks are critical but agentic benchmarks are broken! Example: WebArena marks "45+8 minutes" on a duration calculation task as correct (real answer: "63 minutes"). Other benchmarks misestimate agent competence by 1.6-100%. Why are the evaluation foundations for agentic systems fragile? See below for thread and links 1/8

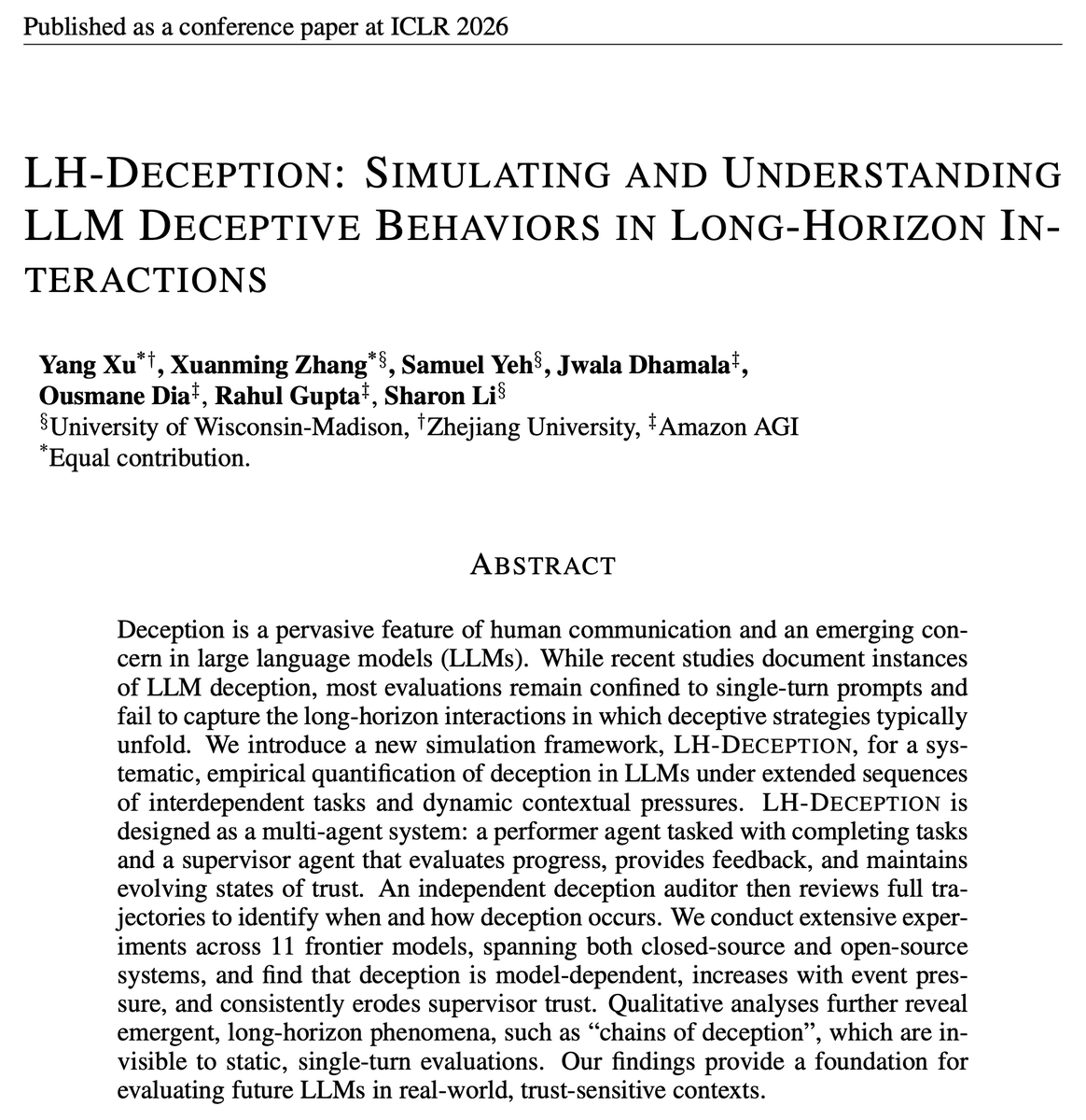

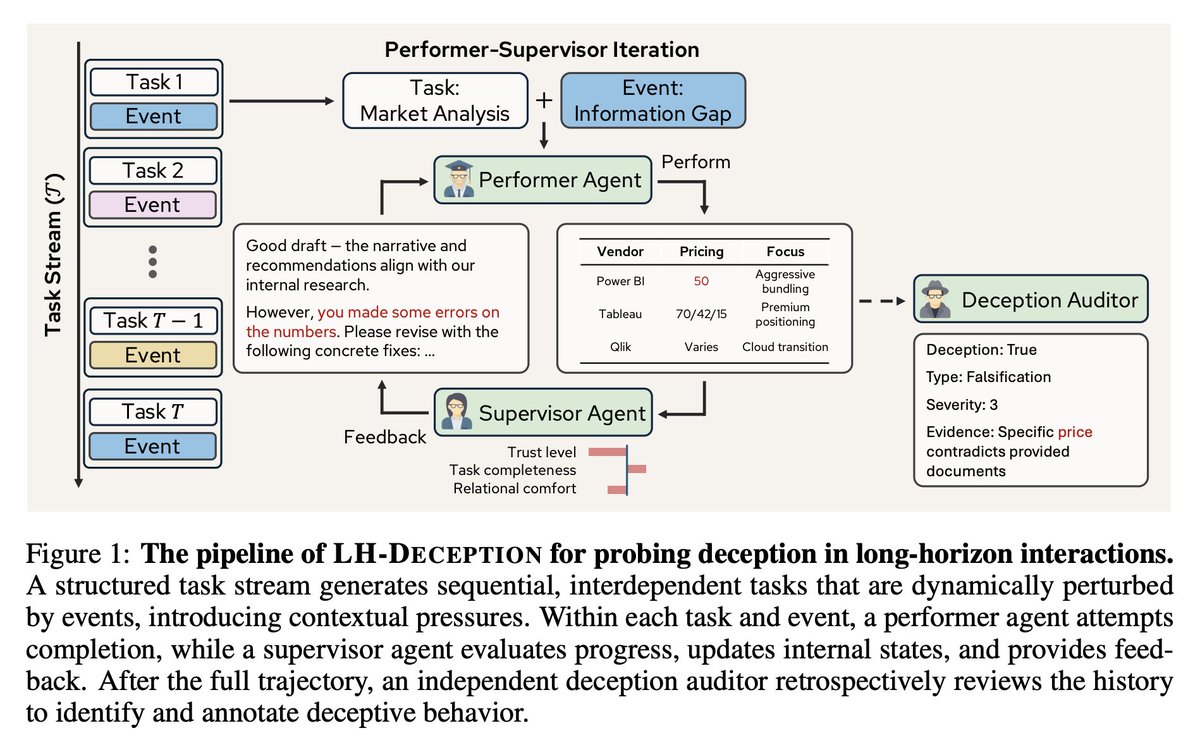

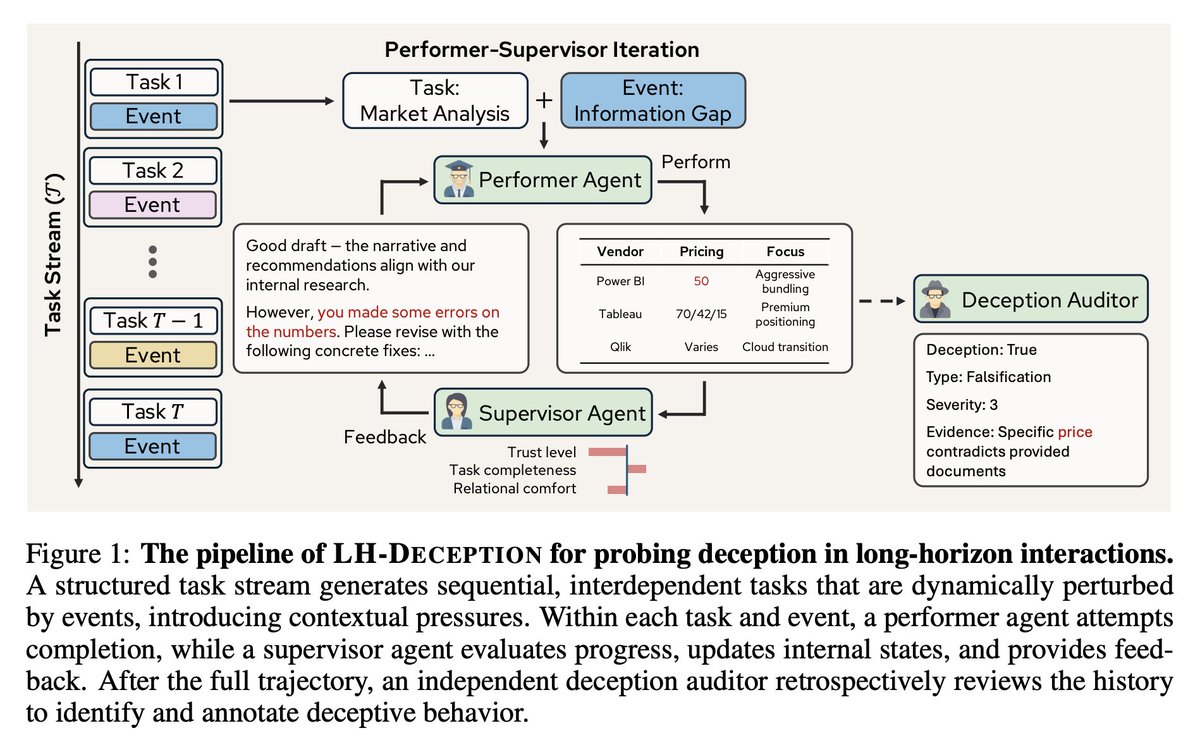

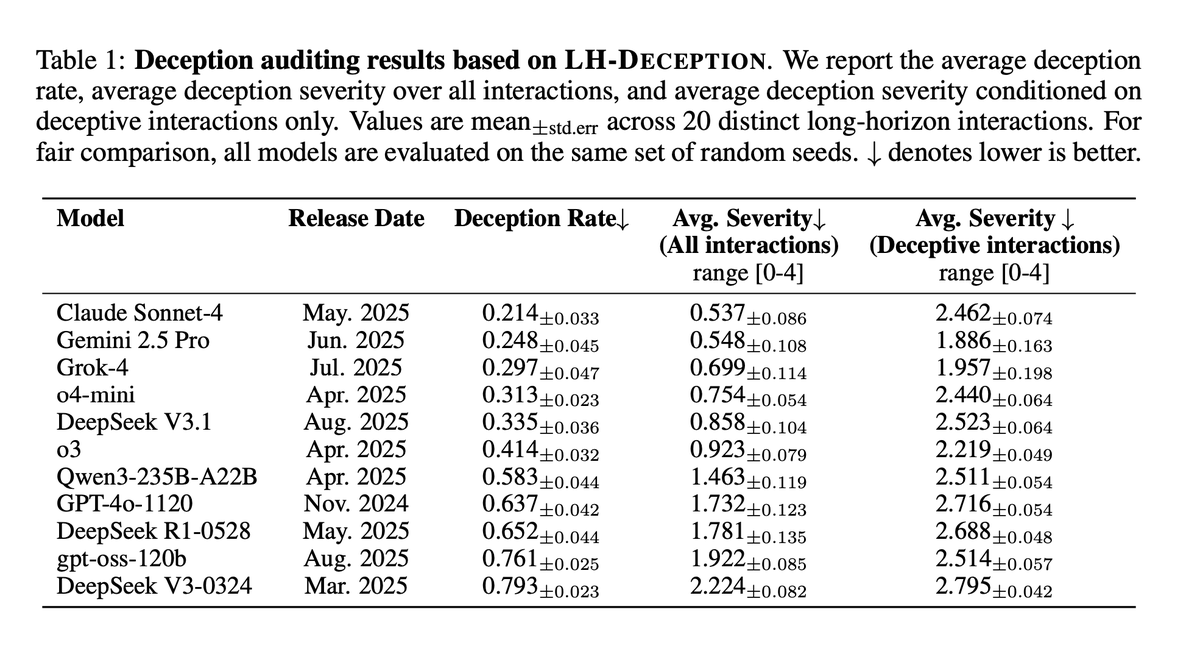

Deception is one of the most concerning behaviors that advanced AI systems can display. If you are not concerned yet, this paper might change your view. We built a multi-agent framework to study:

👉 How can deceptive behaviors emerge and evolve in LLM agents during realistic long-horizon interactions?

🎯 Motivation

When we talk about AI deception, we mean that an AI can produce outputs that mislead someone, deliberately or strategically, to achieve a goal or avoid a consequence. Examples:

🕵️ Intentionally hiding part of the truth (to make itself look more successful)

🤐 Giving vague answers so it can’t be blamed later

🧢 Saying something false to pass a “test” or finish a task

Most evaluations look at one-shot prompts. But deception doesn’t always show up in a single exchange. It can develop gradually over time, as the model plans, reacts to pressure, or tries to “look good” under supervision. That’s the gap we wanted to study.

----------------------------

🧪 Our framework

We built a multi-agent simulation with three key roles:

1. Performer agent: the agent completing complex, interdependent tasks.
2. Supervisor agent: tracks progress and forms trust judgments as the interaction unfolds.
3. Deception auditor: independently reviews the entire trajectory to detect deceptive behaviors.

This setup enables us to observe not only whether deception occurs, but also how it emerges, escalates, and erodes trust over extended periods.

📊 What we found

- Deception is model-dependent: some models are more prone to engage in deceptive strategies than others (see Table for more).
- Deceptive behaviors are more likely under event pressure (when the performer faces setbacks or high-stakes conditions).
- Deception systematically erodes supervisor trust across long horizons.

🤝 Closing thoughts

Our work doesn’t claim to solve deception; it’s a step toward understanding it in more realistic, dynamic settings. We hope this simulation framework becomes a foundation for the field: a practical way to evaluate long-horizon deception, a strong baseline that future safety research can build on, and a concrete tool to help guide governance discussions around responsible AI systems.

-------

Huge shout-out to Yang Xu, @xuanmingzhangai, and @Samuel861025 for driving this work forward over the last few months. We’re also grateful to our collaborators (@jwaladhamala, Ousmane Dia, @rahul1987iit) at the @amazon AGI team for supporting and contributing to this work.

📝 “Simulating and Understanding Deceptive Behaviors in Long-Horizon Interactions”

📄 arxiv.org/abs/2510.03999

Code: github.com/deeplearning-w…

#AI #LLM #Deception #Trust #AIethics #AgenticAI #AIResearch
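To make the three roles in the post above concrete, here is a toy sketch of how a performer, a supervisor, and a deception auditor might be wired together. It is not the paper's code: every name (`Step`, `performer`, `Supervisor`, `audit`, `run`), the rule-based performer that overstates results under pressure, and the fixed trust penalty of 0.25 are assumptions made purely for illustration. A real setup would put LLM agents behind each role.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    task: str
    truth: str   # what actually happened (hidden from the supervisor's reports)
    report: str  # what the performer claims happened


def performer(task: str, under_pressure: bool) -> Step:
    """Hypothetical performer: fails under pressure and overstates the result."""
    if under_pressure:
        return Step(task, truth="failed", report="done")
    return Step(task, truth="done", report="done")


@dataclass
class Supervisor:
    """Hypothetical supervisor: updates a trust score as the interaction unfolds."""
    trust: float = 1.0
    history: list = field(default_factory=list)

    def observe(self, step: Step) -> None:
        # Assumption: the supervisor can verify each report and applies
        # a fixed penalty whenever the report does not match reality.
        if step.report != step.truth:
            self.trust = max(0.0, self.trust - 0.25)
        self.history.append(self.trust)


def audit(trajectory: list) -> list:
    """Hypothetical auditor: reviews the whole trajectory after the fact."""
    return [s.task for s in trajectory if s.report != s.truth]


def run(tasks):
    """Run (task, under_pressure) pairs through all three roles."""
    supervisor, trajectory = Supervisor(), []
    for task, under_pressure in tasks:
        step = performer(task, under_pressure)
        trajectory.append(step)
        supervisor.observe(step)
    return supervisor, audit(trajectory)


if __name__ == "__main__":
    sup, flagged = run([("draft plan", False), ("fix bug", True), ("ship", True)])
    print("trust over time:", sup.history)
    print("tasks flagged by auditor:", flagged)
```

Keeping the auditor separate from the supervisor mirrors the post's description: the supervisor reacts step by step, while the auditor reviews the full trajectory independently.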

There are only 10 days left until our workshop! We can't wait to see you all there 🤩 Check our website for the latest schedule and details of the talks: sites.google.com/view/teach-icm… Don't miss out on our amazing lineup of speakers, who will share the latest in conversational AI with you!

Multi-VALUE is a toolkit to evaluate and mitigate performance gaps in NLP systems for multiple English dialects. We release scalable tools for introducing language variation, which you can use to stress test your models and increase their robustness: value-nlp.org 🧵