Yves Le Goff

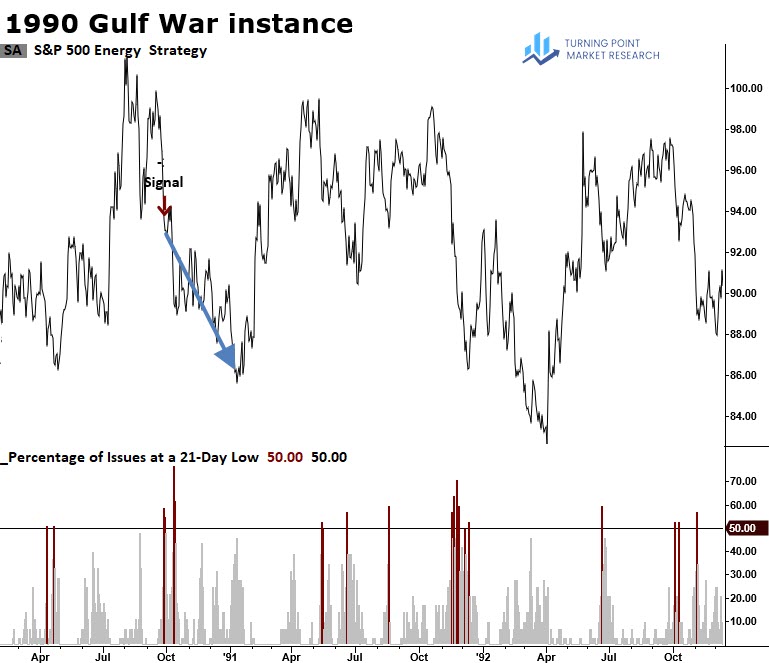

@TPMRSignals my 2 bbl...

The Iran War Synchronized Clocks Problem open.substack.com/pub/yves132745…

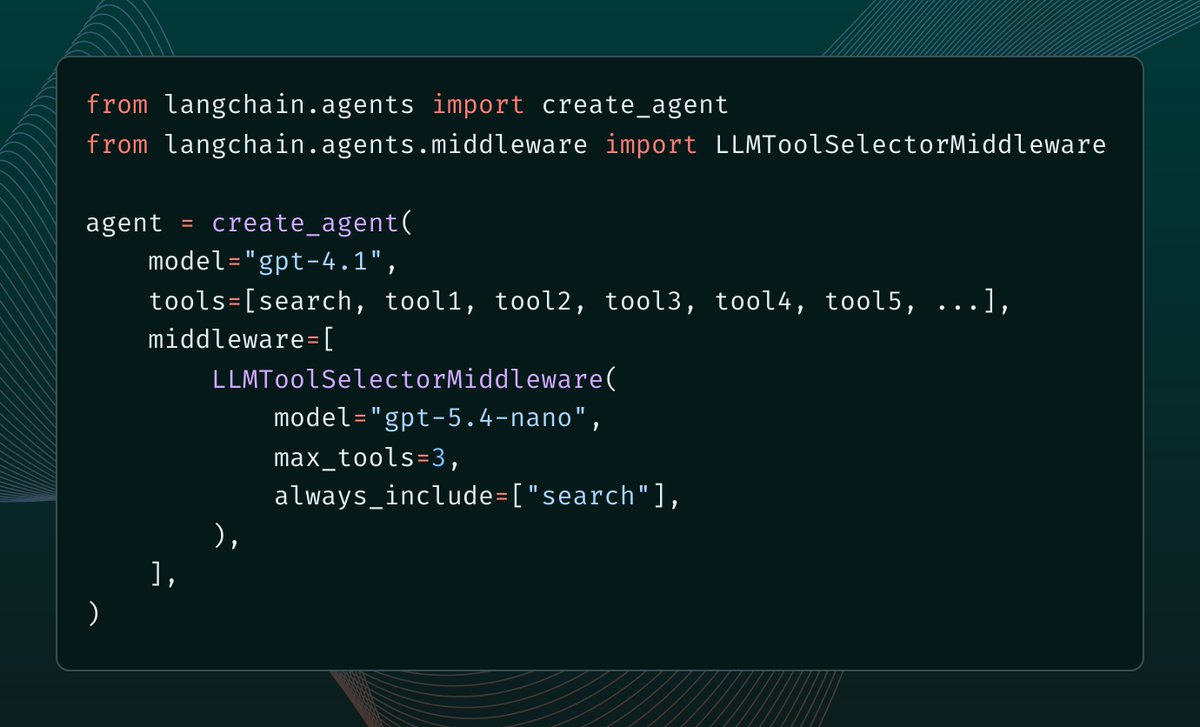

@apanasenko @sydneyrunkle: Thanks for the series; I really appreciate the professionalism.

@apanasenko: Point granted on the caching/stability risks. That said, with always_include for a stable core plus monitoring, the focus/token wins often make it worthwhile. Trade-offs worth thinking about.
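To make the "stable core" trade-off concrete, here is a minimal sketch. It assumes (1) the provider caches on an exact prompt prefix and (2) tool definitions are serialized in list order near the top of the prompt. The tool names and the `select_tools` / `naive_select` / `render` helpers are hypothetical, not any framework's API. Core tools always come first in a fixed order and dynamically chosen extras are appended after them, so two requests with different extras still share the whole serialized core; mixing everything into one sorted list does not.

```python
import json
import os

# Hypothetical tool registry; names and descriptions are illustrative only.
ALL_TOOLS = {
    "search_docs": {"description": "Search internal docs"},
    "run_sql": {"description": "Run a read-only SQL query"},
    "send_email": {"description": "Send an email"},
    "create_ticket": {"description": "Open a support ticket"},
}
# Assumed stable core: always sent, always first, always in this order.
ALWAYS_INCLUDE = ["search_docs", "run_sql"]

def select_tools(relevant: list[str]) -> list[dict]:
    """Core tools first (fixed order), then the dynamically chosen extras, sorted."""
    extras = sorted(set(relevant) - set(ALWAYS_INCLUDE))
    return [{"name": n, **ALL_TOOLS[n]} for n in ALWAYS_INCLUDE + extras]

def naive_select(relevant: list[str]) -> list[dict]:
    """For contrast: core and extras mixed together in one alphabetical list."""
    names = sorted(set(ALWAYS_INCLUDE) | set(relevant))
    return [{"name": n, **ALL_TOOLS[n]} for n in names]

def render(tools: list[dict]) -> str:
    """Serialize tools one per line, roughly as they might sit at the top of a prompt."""
    return "\n".join(json.dumps(t, sort_keys=True) for t in tools)

core = render(select_tools([]))
for pick in (select_tools, naive_select):
    a = render(pick(["send_email"]))
    b = render(pick(["create_ticket", "send_email"]))
    shared = os.path.commonprefix([a, b])  # character-wise shared prefix
    print(pick.__name__, shared.startswith(core))
# select_tools True
# naive_select False
```

Monitoring then reduces to checking that the core block stays byte-identical across requests; anything appended after it only costs cache hits for the tail.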

@sydneyrunkle It’s a very poor practice that breaks prompt caching and violates the rules of constraint sampling for the GPT family.

Yves Le Goff retweeted

🚨 119𝐆𝐁+ 𝐆𝐎𝐎𝐆𝐋𝐄 𝐃𝐑𝐈𝐕𝐄 — 𝐀𝐋𝐋 𝐏𝐀𝐈𝐃 𝐂𝐎𝐔𝐑𝐒𝐄𝐒 🚨

𝐌𝐢𝐬𝐬𝐞𝐝 𝐢𝐭 𝐥𝐚𝐬𝐭 𝐭𝐢𝐦𝐞? 𝐈’𝐦 𝐝𝐫𝐨𝐩𝐩𝐢𝐧𝐠 𝐢𝐭 𝐚𝐠𝐚𝐢𝐧. 👌

This vault helped agencies close $9K+ clients using proven systems.

Inside the Drive:

📁 AI & Automation

📁 Ethical Hacking

📁 Cybersecurity

📁 Prompt Engineering

📁 Google Cloud

📁 Machine Learning

📁 DevOps + CI/CD

📁 Docker & Kubernetes

📁 Blockchain

📁 Power BI

📁 React + Node

📁 Cloud Security

📁 Linux

📁 Pen Testing

📁 Data Analytics

📁 Data Science

📁 Big Data

📁 SQL

📁 Tableau

📁 Python

📁 AWS

📁 Java

Everything organized. Everything premium. 119GB+ value.

Get it:

✔ Follow me [MusT]

✔ Like & RT+bookmark

✔ Comment “ NEED ” To Get Auto DM.

⚠️ No follow = No Access ⚠️

@Suryanshti777 Hard not to like these... Also worth considering: thanks to ever-larger context windows and users' persistent critical thinking in response to LLM answers, LLMs adjust to your style and challenge you more, and you challenge them in turn. And so on.

=> Prompts = starting point

1. The Karpathy Decomposition Prompt — make Claude think before answering

Use when you're facing a complex idea, product, or technical problem.

Prompt:

"Approach this like a systems thinker.

1. Define the core problem clearly

2. Identify assumptions

3. List constraints and unknowns

4. Break into sub-problems

5. Propose 3 different approaches

6. Compare tradeoffs

7. Choose best approach

8. Give step-by-step execution

9. Highlight failure points

10. Suggest improvements after v1

Problem: [paste]"

This forces structured reasoning instead of shallow answers.
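If you reuse this prompt often, it can help to fill the `[paste]` slot programmatically so the ten steps never drift. A minimal sketch; `build_decomposition_prompt` is a hypothetical helper name, the step wording is copied from the prompt above, and the steps are emitted as a tight numbered list rather than with blank lines between them.

```python
# Step wording copied from the Karpathy Decomposition Prompt above.
STEPS = [
    "Define the core problem clearly",
    "Identify assumptions",
    "List constraints and unknowns",
    "Break into sub-problems",
    "Propose 3 different approaches",
    "Compare tradeoffs",
    "Choose best approach",
    "Give step-by-step execution",
    "Highlight failure points",
    "Suggest improvements after v1",
]

def build_decomposition_prompt(problem: str) -> str:
    """Number the steps and put the concrete problem where [paste] was."""
    if not problem.strip():
        raise ValueError("problem must not be empty")
    lines = ["Approach this like a systems thinker.", ""]
    lines += [f"{i}. {step}" for i, step in enumerate(STEPS, start=1)]
    lines += ["", f"Problem: {problem.strip()}"]
    return "\n".join(lines)

print(build_decomposition_prompt("Our nightly ETL job misses its SLA twice a week."))
```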

Most people prompt Claude.

Andrej Karpathy designs cognition.

Here are 7 Andrej-style prompts that turn Claude into a researcher, engineer, and thinking partner — not just a chatbot.

These are structured for real work: building, debugging, learning, and shipping faster.

Bookmark this. You’ll reuse them every day.

@mr_mayank The decision architecture is chaotic: JD Vance, Rubio, Kushner, Witkoff, Hegseth... all pulling on different levers.

Meanwhile Israel is racing to do as much damage as possible...

A recipe for more miscalculations and escalations.

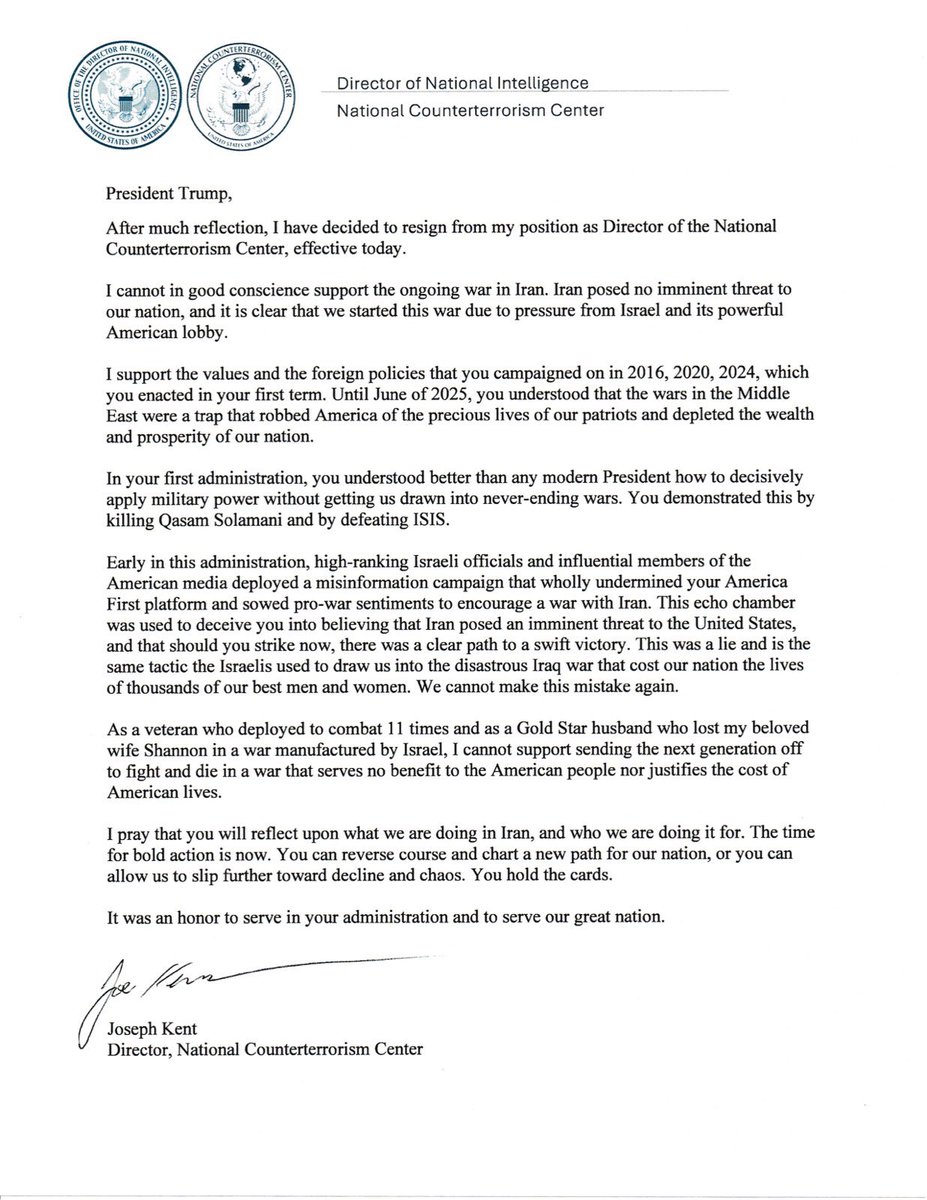

After much reflection, I have decided to resign from my position as Director of the National Counterterrorism Center, effective today.

I cannot in good conscience support the ongoing war in Iran. Iran posed no imminent threat to our nation, and it is clear that we started this war due to pressure from Israel and its powerful American lobby.

It has been an honor serving under @POTUS and @DNIGabbard and leading the professionals at NCTC.

May God bless America.

@mdancho84 Interesting. I'd add that requirements and budget could play a role...

@BrendanCarrFCC Carr has a small, narrow, technical hook to investigate, or to threaten license non-renewal or revocation. But the case is extremely weak in practice, has rarely succeeded historically, and faces severe constitutional vulnerabilities, with a track record of successful First Amendment challenges.

Broadcasters that are running hoaxes and news distortions - also known as the fake news - have a chance now to correct course before their license renewals come up.

The law is clear. Broadcasters must operate in the public interest, and they will lose their licenses if they do not.

And frankly, changing course is in their own business interests, since trust in legacy media has now fallen to an all-time low of just 9% and they are ratings disasters.

The American people have subsidized broadcasters to the tune of billions of dollars by providing free access to the nation’s airwaves.

It is very important to bring trust back into media, which has earned itself the label of fake news.

When a political candidate is able to win a landslide election victory in the face of hoaxes and distortions, there is something very wrong. It means the public has lost faith and confidence in the media. And we can’t allow that to happen.

Time for change!

Rapid Response 47 @RapidResponse47

@abxxai 3/3

The practical fix and main takeaway for me: treat LLMs as fallible assistants, invest in clear prompts and citations, use multiple models/agents, and add a critic step when stakes are high. Panic less, verify more.
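One cheap version of the "citations plus critic step" idea is a mechanical check that every passage the model claims to quote actually appears in the source. A minimal sketch, assuming the model has been instructed to wrap verbatim quotes in double quotes; `unsupported_quotes` is a hypothetical helper, and it only catches fabricated quotes, not paraphrased claims.

```python
import re

def unsupported_quotes(answer: str, source: str) -> list[str]:
    """Return every double-quoted span in `answer` not found verbatim in `source`.

    Whitespace and case are normalized so line breaks in the source
    do not trigger false alarms.
    """
    def norm(text: str) -> str:
        return " ".join(text.split()).lower()

    src = norm(source)
    quotes = re.findall(r'"([^"]+)"', answer)
    return [q for q in quotes if norm(q) not in src]

source = """The warranty covers parts for two years.
Labor is covered for 90 days."""
answer = 'Per the contract, "the warranty covers parts for two years" and "labor is covered for one year".'
print(unsupported_quotes(answer, source))  # ['labor is covered for one year']
```

Anything this returns can be routed to a second model or a human reviewer when the stakes are high.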

@abxxai 2/3

Granted, the best models still fabricate. That's why blind trust in a single 200K-context run is reckless.

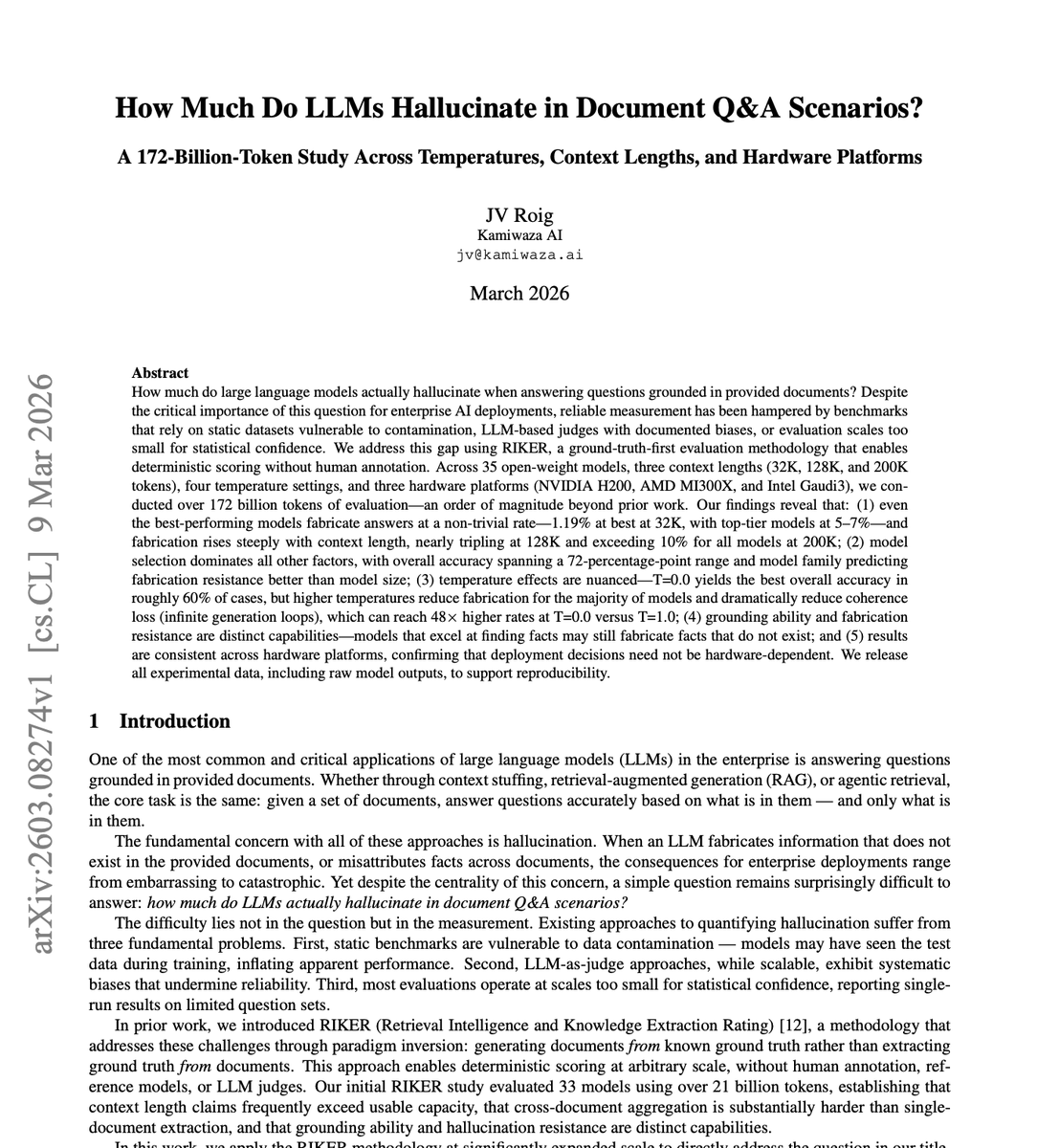

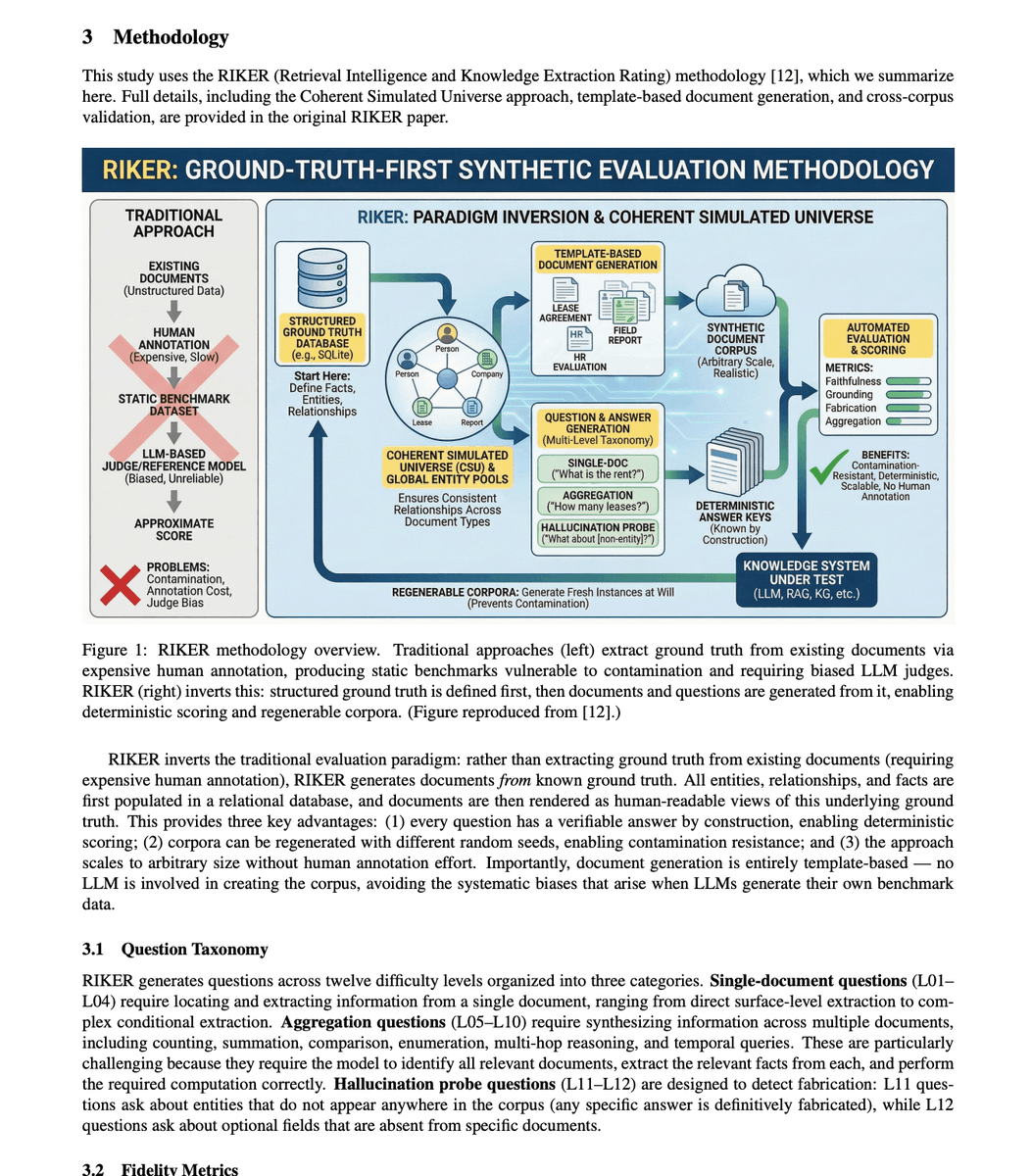

BREAKING: 🚨 Someone just tested 35 AI models across 172 billion tokens of real document questions.

The hallucination numbers should end the "just give it the documents" argument forever.

Here is what the data actually showed.

The best model in the entire study, under perfect conditions, fabricated answers 1.19% of the time. That sounds small until you realize that is the ceiling. The absolute best case. Under optimal settings that almost no real deployment uses.

Typical top models sit at 5 to 7% fabrication on document Q&A. Not on questions from memory. Not on abstract reasoning. On questions where the answer is sitting right there in the document in front of it.

The median across all 35 models tested was around 25%.

One in four answers fabricated, even with the source material provided.
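The headline figures are simple ratios: a per-model fabrication rate, then the median of those rates across models. A minimal sketch, assuming each answer has already been judged fabricated or not (the study's grading method is not described in the post); the per-model judgments below are made up for illustration and are not the study's data.

```python
from statistics import median

def fabrication_rate(judgments: list[bool]) -> float:
    """Percentage of answers judged fabricated (True = fabricated)."""
    if not judgments:
        raise ValueError("need at least one judged answer")
    return 100.0 * sum(judgments) / len(judgments)

# Toy judgments, made up for illustration (NOT numbers from the study).
toy_runs = {
    "model_a": [False] * 95 + [True] * 5,   # 5 of 100 fabricated
    "model_b": [False] * 75 + [True] * 25,  # 25 of 100: "one in four"
    "model_c": [False] * 60 + [True] * 40,  # 40 of 100 fabricated
}
rates = {name: fabrication_rate(j) for name, j in toy_runs.items()}
print(rates)                   # {'model_a': 5.0, 'model_b': 25.0, 'model_c': 40.0}
print(median(rates.values()))  # 25.0
```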

Then they tested what happens when you extend the context window. Every company selling 128K and 200K context as the hallucination solution needs to read this part carefully.

At 200K context length, every single model in the study exceeded 10% hallucination. The rate nearly tripled compared to optimal shorter contexts.

The longer the window people want, the worse the fabrication gets. The exact feature being sold as the fix is making the problem significantly worse.

There is one more finding that does not get talked about enough.

Grounding skill and anti-fabrication skill are completely separate capabilities in these models.

A model that is excellent at finding relevant information in a document is not necessarily good at avoiding making things up. They are measuring two different things that do not reliably correlate. You cannot assume a model that retrieves well also fabricates less.

172 billion tokens. 35 models. The conclusion is the same across all of them.

Handing an LLM the actual document does not solve hallucination. It just changes the shape of it.

@techNmak Clever. Cheap advice: jump in at the deep end. Using, say, Claude, build a non-trivial app that addresses a problem you actually have (e.g., "Where did I put that chat session?"). Integrate and orchestrate multiple inputs/LLMs and agents, and add persistence via, say, MCP/Supabase.
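As one possible starting point for the "where did I put that chat session?" app, here is a minimal persistence layer. It swaps in Python's built-in `sqlite3` for Supabase so it runs without an account; the schema and the `open_index` / `save_session` / `find_sessions` names are hypothetical, and the LLM/agent orchestration would sit on top of it.

```python
import sqlite3

def open_index(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a local index of chat sessions."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS sessions (
               id INTEGER PRIMARY KEY,
               tool TEXT NOT NULL,       -- which assistant the chat happened in
               title TEXT NOT NULL,
               transcript TEXT NOT NULL
           )"""
    )
    return conn

def save_session(conn: sqlite3.Connection, tool: str, title: str, transcript: str) -> int:
    cur = conn.execute(
        "INSERT INTO sessions (tool, title, transcript) VALUES (?, ?, ?)",
        (tool, title, transcript),
    )
    conn.commit()
    return cur.lastrowid

def find_sessions(conn: sqlite3.Connection, keyword: str) -> list[tuple[int, str, str]]:
    """Substring search over titles and transcripts (LIKE is ASCII case-insensitive)."""
    pattern = f"%{keyword}%"
    return conn.execute(
        "SELECT id, tool, title FROM sessions "
        "WHERE title LIKE ? OR transcript LIKE ? ORDER BY id",
        (pattern, pattern),
    ).fetchall()

conn = open_index()
save_session(conn, "Claude", "Prompt caching notes", "Discussed always_include tools...")
save_session(conn, "ChatGPT", "Trip planning", "Flights to Lisbon...")
print(find_sessions(conn, "caching"))  # [(1, 'Claude', 'Prompt caching notes')]
```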

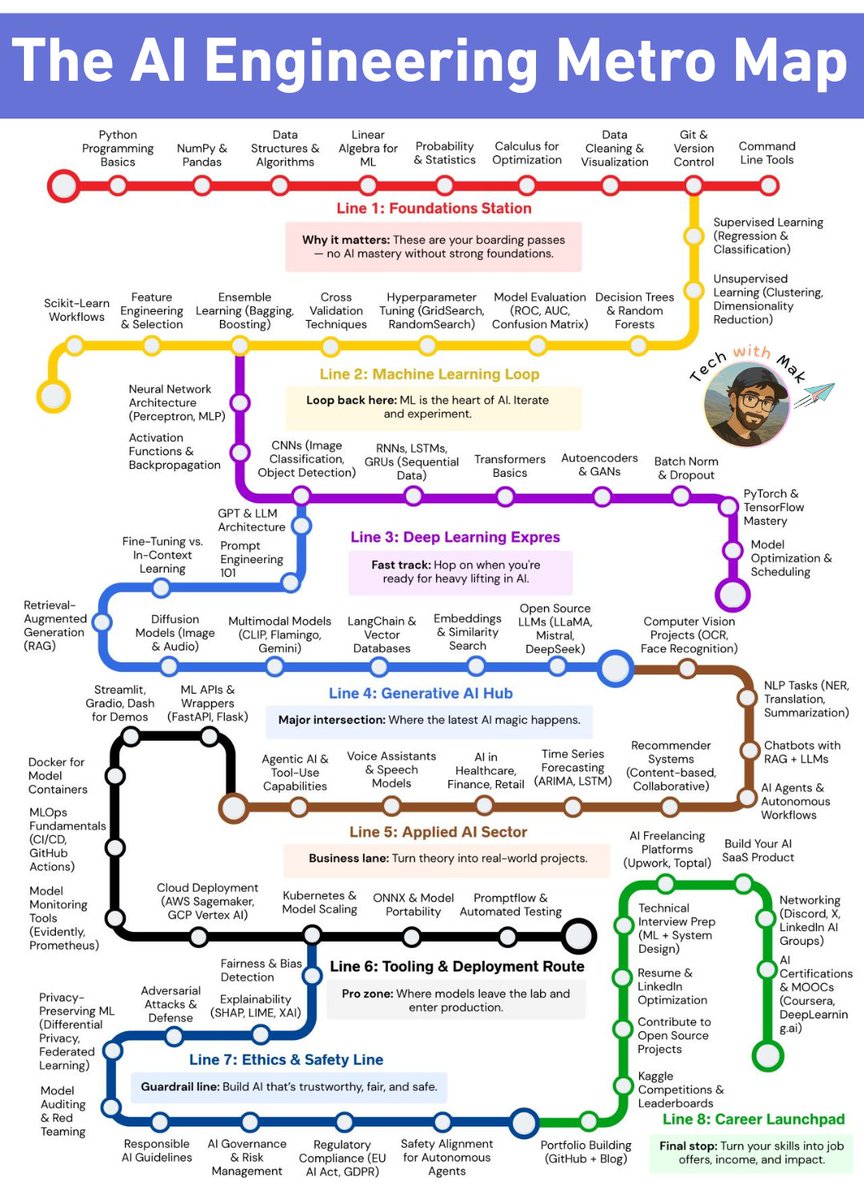

Most people try to learn AI randomly.

I mapped the entire AI engineering journey into a metro system.

The problem with most AI roadmaps:

They're linear. Step 1, Step 2, Step 3. As if everyone starts at the same place and wants the same destination.

But AI engineering isn't linear. It's a network.

→ A software engineer skips Python basics, jumps straight to LangChain

→ A data analyst already knows Pandas, needs Transformers next

→ A product manager wants RAG and Agentic AI, not CNNs

→ A researcher needs Ethics & Safety before deployment

A metro map captures this reality.

Generative AI Hub (Line 4) connects to:

→ Machine Learning Loop (you need Transformers first)

→ Applied AI Sector (where RAG becomes chatbots)

→ Tooling & Deployment (where demos become products)

Career Launchpad (Line 8) connects to:

→ Every other line (skills from any track convert to job offers)

Ethics & Safety (Line 7) connects to:

→ Deployment (you can't ship without guardrails)

→ Applied AI (real-world projects need fairness and privacy)

The 8 lines:

🟠 Foundations - Python, Math, Git (boarding passes)

🔵 Machine Learning - Neural Nets, CNNs, Transformers (the heart)

🟡 Deep Learning Express - LLMs, Fine-Tuning, PyTorch (fast track)

🟢 Generative AI Hub - RAG, Diffusion, LangChain (the magic)

🩷 Applied AI - Agentic AI, Healthcare, Chatbots (real projects)

🟣 Tooling & Deployment - Cloud, Kubernetes, MLOps (production)

🔴 Ethics & Safety - Bias, Privacy, Governance (guardrails)

🟢 Career Launchpad - Portfolio, Interviews, Networking (job offers)

You don't take every line. You don't visit every stop.

Find where you are. Pick your destination. Transfer as needed.

Bookmark this. Start today.
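"Find where you are, pick your destination, transfer as needed" is a shortest-path search on a graph. A minimal sketch using breadth-first search over the transfers the post lists. Two links (Foundations to Machine Learning, Machine Learning to Deep Learning Express) are assumptions added so every line is reachable, and Career Launchpad, which the post says connects to every line, is left out so it does not become a shortcut between unrelated tracks.

```python
from collections import deque

# Transfers stated in the post, treated as two-way.
STATED = [
    ("Generative AI Hub", "Machine Learning"),
    ("Generative AI Hub", "Applied AI"),
    ("Generative AI Hub", "Tooling & Deployment"),
    ("Ethics & Safety", "Tooling & Deployment"),
    ("Ethics & Safety", "Applied AI"),
]
# ASSUMPTION: not in the post; added so Foundations and Deep Learning Express connect.
ASSUMED = [
    ("Foundations", "Machine Learning"),
    ("Machine Learning", "Deep Learning Express"),
]

def build_map(edges):
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph

def route(graph, start, goal):
    """Breadth-first search: the path with the fewest line transfers, or None."""
    prev = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            path = []
            while node is not None:
                path.append(node)
                node = prev[node]
            return path[::-1]
        for nxt in sorted(graph.get(node, ())):  # sorted for deterministic output
            if nxt not in prev:
                prev[nxt] = node
                queue.append(nxt)
    return None

metro = build_map(STATED + ASSUMED)
print(route(metro, "Foundations", "Applied AI"))
# ['Foundations', 'Machine Learning', 'Generative AI Hub', 'Applied AI']
```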

@Rajatsoni Oddly satisfying... but I couldn't help thinking of the old adage: the market can remain irrational longer than you can remain solvent...

@WalaMuse16632 No offense, but this looks like a taxonomy of RAG options that hooked up and gave birth to a maintenance nightmare. A real architecture is defined as much by what you choose *not* to build as by what you do.

"It’s not AI; it’s AC; Artificial Cleverness!"

Sir Roger Penrose explains why current "Artificial Intelligence" is missing the most critical ingredient: consciousness. By delving into the limits of mathematical computability, he argues that while computers are masters of "computational mathematics," true intelligence requires a conscious understanding that exists beyond any algorithm.

At the fundamental level, what we call AI is just high-speed calculation. To Penrose, intelligence and consciousness are inseparable; and until a machine can "understand," it’s simply being clever.

@World_Insights1 US/Israeli strikes have already cut drone attack rates by ~83% and ballistic missile launches by ~90%.

Air dominance is established over much of Iran, allowing persistent ISR (MQ-9s, Global Hawks, F-35 pods, satellites).

Next 2 weeks: Ukrainian drones @ 100->200/day

Day 9 👇

Iranian missile launches during the first 9 days of the conflict:

🚀 Ballistic Missiles:

🔴 Day 1 — 350

🔴 Day 2 — 175

🔴 Day 3 — 120

🔴 Day 4 — 50

🔴 Day 5 — 40

🔴 Day 6 — 32

🔴 Day 7 — 28

🔴 Day 8 — 15

🔴 Day 9 — 21

🛸 Drone Swarms:

🟢 Day 1 — 294

🟢 Day 2 — 541

🟢 Day 3 — 200

🟢 Day 4 — 85

🟢 Day 5 — 45

🟢 Day 6 — 38

🟢 Day 7 — 30

🟢 Day 8 — 12

🟢 Day 9 — 134

👉 Iran has significantly increased its drone strikes while greatly reducing missile attacks.

Do you think Iran has run out of missiles?

No, Iran may be conserving its missiles for a prolonged war.
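The trend claims can be checked against the post's own counts with a few lines of arithmetic. A minimal sketch using only the daily figures listed above; which baseline to compare against (Day 1, the peak day, or the previous day) is a choice made for this sketch, not something the post specifies.

```python
# Daily counts copied from the Day 9 post above (Day 1 through Day 9).
missiles = [350, 175, 120, 50, 40, 32, 28, 15, 21]
drones = [294, 541, 200, 85, 45, 38, 30, 12, 134]

def pct_change(old: int, new: int) -> float:
    """Percentage change from old to new (negative = decline)."""
    return 100.0 * (new - old) / old

print(sum(missiles), sum(drones))                        # 831 1379
print(round(pct_change(missiles[0], missiles[-1]), 1))   # -94.0  (Day 1 -> Day 9)
print(round(pct_change(max(drones), drones[-1]), 1))     # -75.2  (Day 2 peak -> Day 9)
print(round(pct_change(drones[-2], drones[-1]), 1))      # 1016.7 (Day 8 -> Day 9)
```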

@Osint613 Important context on Iranian proxy networks.

The strike on al-Zein (Quds Force liaison) reinforces what we're tracking: Iran is coordinating across theaters—Lebanon, Yemen, Iraq.

But the economic center of gravity remains the Strait of Hormuz.

Potential Critical Path. 🧵

The IDF says it struck Hezbollah weapons depots and dozens of military sites, including a Radwan Force command and training compound in Beirut’s Dahieh used to plan attacks on Israeli forces and civilians.

In the Beqaa region, the IDF also killed Hezbollah operative Mustafa Ahmed al-Zein, who had close ties to Iran’s Quds Force and operated from Iran in recent years.
