Sarthak


@code_star @soldni @eliebakouch @Grad62304977 @samsja19 1. There is a possibility of only repository-level filtering when training coding models (this was a choice by DeepSeek-Coder); in this case, they're most likely retaining the different branches. Let's take the example of commaai/openpilot (serving as a typical OSS repo here)

The standard for frontier coding evals is changing with model maturity. We now recommend reporting SWE-bench Pro and are sharing more detail on why we’re no longer reporting SWE-bench Verified as we work with the industry to establish stronger coding eval standards. SWE-bench Verified was a strong benchmark, but we’ve found evidence it is now saturated due to test-design issues and contamination from public repositories. openai.com/index/why-we-n…

What surprised me was that even smaller models are one-shotting through. I guess the hard part about making unverifiable domains verifiable isn't about having a strong reasoner model to provide rewards?

There's been a hole at the heart of #LLM evals, and we can now fix it. 📜New paper: Answer Matching Outperforms Multiple Choice for Language Model Evaluations.
❗️We found MCQs can be solved without even knowing the question. Looking at just the choices helps guess the answer and get high accuracies. This affects popular benchmarks like MMLU-Pro, SuperGPQA etc. and even "multimodal" benchmarks like MMMU-Pro, which can be solved without even looking at the image ⁉️.
Such choice-only shortcuts are hard to fix. We find prior attempts at fixing them--GoldenSwag (for HellaSwag) and TruthfulQA v2--ended up worsening the problem. MCQs are inherently a discriminative task, only requiring picking the correct choice among a few given options. Instead we should evaluate language models for the generative capabilities they are used for. We show discrimination is easier than even verification, let alone generation.
🤔 But how do we grade generative responses outside "verifiable domains" like code and math? So many paraphrases are valid answers... We show a scalable alternative--Answer Matching--works surprisingly well. It's simple: get generative responses to existing benchmark questions that are specific enough to have a semantically unique answer, without showing choices. Then, use an LM to match the response against the ground-truth answer.
👨‍🔬We conduct a meta-evaluation by comparing to ground-truth verification on MATH, and human grading on MMLU-Pro and GPQA-Diamond questions. Answer Matching outcomes give near-perfect alignment, with even small (recent) models like Qwen3-4B. In contrast, LLM-as-a-judge, even with frontier reasoning models like o4-mini, fares much worse. This is because without the reference answer, the model is tasked with verification, which is harder than what answer matching requires--paraphrase detection--a skill modern language models have aced💡
Let's shift the benchmarking ecosystem from MCQs to Answer Matching.
Impacts: Leaderboards: We show model rankings can change and accuracies go down, making benchmarks seem less saturated. Benchmark Creation: Instead of creating harder MCQs, we should focus our efforts on creating questions for answer matching, much like SimpleQA, GAIA etc. 🤑 Cost: Finally, to our great surprise, answer matching evals are cheaper to run than MCQs! See our paper for more, it's packed with insights. 🧵 has paper and more result figures.
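
For readers who want to see the shape of the procedure described in this thread, here is a minimal sketch of an answer-matching loop. It is not the paper's implementation: the prompt wording, the function names (`build_match_prompt`, `answer_matching_accuracy`) and the `toy_matcher` stand-in are all assumptions made for illustration. In practice `matcher` would be a call to a language model; the toy version only compares normalized strings, so it cannot detect real paraphrases.

```python
from typing import Callable, Dict, Iterable

# Hypothetical prompt template; the paper's actual wording is not given in the thread.
MATCH_PROMPT = (
    "Question: {question}\n"
    "Reference answer: {reference}\n"
    "Model response: {response}\n"
    "Does the response give the same answer as the reference? Reply YES or NO."
)


def build_match_prompt(question: str, reference: str, response: str) -> str:
    """Format the matching request shown to the matcher model."""
    return MATCH_PROMPT.format(
        question=question, reference=reference, response=response
    )


def answer_matching_accuracy(
    items: Iterable[Dict[str, str]], matcher: Callable[[str], str]
) -> float:
    """Fraction of free-form responses the matcher judges equal to the reference.

    Each item needs the keys "question", "reference" and "response".
    `matcher` takes a prompt and returns text starting with YES or NO.
    """
    items = list(items)
    if not items:
        return 0.0
    hits = 0
    for item in items:
        prompt = build_match_prompt(
            item["question"], item["reference"], item["response"]
        )
        verdict = matcher(prompt)
        hits += verdict.strip().upper().startswith("YES")
    return hits / len(items)


def toy_matcher(prompt: str) -> str:
    """Stand-in for an LM call (assumption): exact match after normalization."""
    fields = {}
    for line in prompt.splitlines():
        key, sep, value = line.partition(": ")
        if sep:
            fields[key] = value

    def norm(text: str) -> str:
        # Lowercase and drop everything except letters and digits.
        return "".join(ch for ch in text.lower() if ch.isalnum())

    same = norm(fields.get("Reference answer", "")) == norm(
        fields.get("Model response", "")
    )
    return "YES" if same else "NO"


if __name__ == "__main__":
    demo = [
        {"question": "What is the capital of France?",
         "reference": "Paris", "response": "paris."},
        {"question": "What is the capital of Australia?",
         "reference": "Canberra", "response": "Sydney"},
    ]
    print(answer_matching_accuracy(demo, toy_matcher))
```

The matcher is passed in as a plain callable so the scoring loop stays the same whether the verdict comes from a small local model, an API, or the toy comparison above.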

Another day, another reason not to use an LM as a judge. Building benchmarks is tough, and sometimes using an LM-as-a-judge looks like an easy solution to this problem, but it almost never is. Building benchmarks is about finding tough problems whose solution is easy to verify. And we've shown, in SWE-bench, SciCode, AlgoTune, SWE-fficiency, VideoGameBench, CodeClash, and CritPt that we can find extremely tough challenges that are verifiable deterministically. And we'll continue to find even tougher benchmarks, without using any type of ML model to judge correctness.

We estimate that Claude Opus 4.6 has a 50%-time-horizon of around 14.5 hours (95% CI of 6 hrs to 98 hrs) on software tasks. While this is the highest point estimate we’ve reported, this measurement is extremely noisy because our current task suite is nearly saturated.

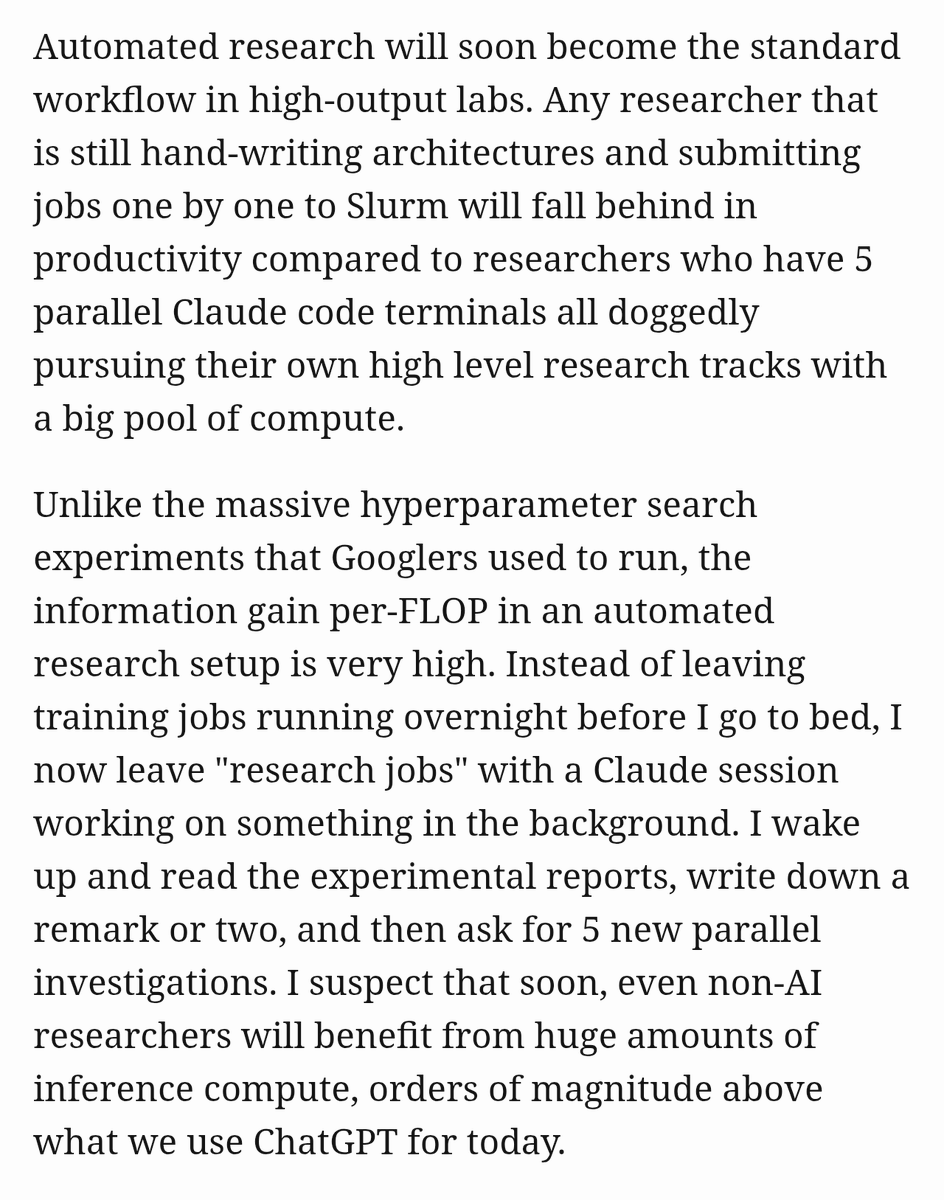

As Rocks May Think: an interactive essay on thinking models, automated research, and where I think they are headed. Enjoy! evjang.com/2026/02/04/roc…

Hinton, LeCun, and every other neolab: Gradient descent is fundamentally broken. It needs thousands of examples to learn what humans do in only a few. It’s time to start looking for a radical new learning paradigm to close the gap. In-context learning: Do I mean nothing to you?

Did synth data generation for the same task in Sept 2024 and today. Fighting mode collapse was so hard back then and is completely absent now. We've come a long way; wondering if it is only because models got larger or whether the labs actually got an improved data distribution.

It took me weeks, but finally it's there: an overlong blogpost on synthetic pretraining. vintagedata.org/blog/posts/syn…