keenanpepper

957 posts

@keenanpepper

I scrutinize the giant inscrutable matrices

Not the line of questioning I was expecting in a hearing about Chinese IP theft, but I'm glad senators are starting to really get why "we must beat China!" is really nowhere near a complete plan for how to make sure AI goes well.
--- Transcript:
HAWLEY: Ms. Toner, can I just come back to something that I think you said in response to Senator Durbin. You said to him that, regarding American AI companies, you said that it is hard to believe but nevertheless true that American AI companies are working as hard and as fast as they can to try to develop technology that will displace many millions of workers and potentially pose existential risks. Now that's my gloss, maybe you wanna correct the record exactly as you said it before. I thought that was very interesting and very important. Could you just reiterate that for us?
TONER: Yes. AI is a very fast-moving field, and I think it is important that as we think about what AI's implications are for our society, for our civilization, we don't merely look at the AI systems that we have today (chatbots, starting to be agents that can help a little bit with some professional tasks) but instead we take seriously the goals of the companies that are building these systems. Over the past 10 or 20 years, it's gone from a very abstract idea that we might build AI that can outperform humans at any intellectual task, to a pretty concrete idea that some of the most well-capitalized companies in the history of the planet are driving towards as fast as they can. They may fail! It may turn out to be harder than they think to build systems that are that capable. Personally, I'm skeptical of some of the extremely short timelines that they name, saying we might have these superintelligent AI systems within, you know, one to three years. But it seems so clear that there's a real possibility that they build these systems within three years, 10 years. If they build it within 10 years, that's when my daughter is entering high school. That's not very long. That is an extremely radical thing to be trying to do, to build computer systems that can outperform humans, that may escape the control of humans, and the companies are telling us they're doing it, and I think we don't take them seriously, and we should.
HAWLEY: These same companies often say, and often in front of this committee and to this body, that it's absolutely vital that they succeed at whatever it is they're doing on that particular day, in order so that we can beat China. You know, they're our great American national champions and we have to beat China. My concern is based on what you've just testified to and what I've heard others testify to, it sounds an awful lot like the goals that they have in mind, that these companies, these CEOs have in mind, are every bit as nefarious. In fact, if these same goals were held by a foreign adversary, we would say this is an incredible threat to our national security, we'd never allow a foreign corporation to try and pursue such plans at the expense of American workers, at the expense of American families, and yet these companies, our own companies so to speak, are doing it. Let me just ask it this way: Will it do us any good if these American AI companies are able to pursue their designs without any hindrance? Will it do any good that we beat China if in fact they succeed in displacing millions of American workers, gobbling up all of Americans' data, completely destroying our IP system, etc.?
TONER: I think the way I've heard this put best is: Right now, the way that we build AI and the level of control we have over it, which is not great, the winner of any AI race between the US and China is the AI. And I think we need to be working to make sure that is not the case. I think it is very important that the US AI sector remains ahead of the Chinese AI sector, but if that's at the expense of AI overrunning the entire planet, then that is, you know, that hasn't benefited us.
HAWLEY: Yeah, that sounds entirely sensible to me and I just have to say I don't really have any interest in winning an AI race in which the goal, the victory rather, the prize for success is to become like China. Is to become a surveillance state. Is to become a place where there is no private property any longer, where nothing is personal, nothing can be protected, nothing can be owned by any individual. Why in the world would we want that in the United States of America? I mean, if the prize is to destroy everything that makes us Americans, why would we compete in that game? It seems very dangerous to me. Let me ask you something else about competition with China though. You also testified to Senator Durbin that the best way, if I remember correctly, the best way to constrain China's ability to match us in AI development is to constrain the hardware to which they have access. That seems to be an important point to me, can you just elaborate?
TONER: Yes. I think there's different levers of what goes into having a competitive AI ecosystem, and many of them, talent, data, algorithmic ideas, are very difficult to control. We're very fortunate that we're in a situation where the most advanced hardware is produced by American companies, is designed by American companies. And I think we, if you look at the, China is growing their capacities here, but they're not growing them nearly fast enough to meet their own domestic demand, nor are the US companies to be clear. So we can control chips to China and not forgo any profits, not forgo any revenue because the demand for those chips is so great. I'll also call your attention to semiconductor manufacturing equipment, what goes in the fabrication facilities. I think it's even more strategically clear that we should not be allowing China access to advanced tools. That is something that has gotten lip service from the past three administrations but enforcement has been very weak. And I think ensuring that the most advanced lithography tools, the most advanced design software, other aspects of the semiconductor supply chain are not being exported to China to let them build their own indigenous supply chain is also one of the simplest and most important levers we have available.
HAWLEY: Let me just conclude by saying that I think it is absolutely vital that we bend this technology, this AI technology which is upon us whether we like it or not, that we bend it to the good of the American worker and the American family. And I am firmly of the view that this is not just going to happen magically. That if we just stand back and just wait to see what will happen, it's not going to be good for American workers, it's not going to be good for American families. We've got to make a choice as a society to make it so. And this is the time to make that choice right now.
youtube.com/watch?v=KHmo9v…

JUST IN: Google DeepMind hires a philosopher as it prepares for machine consciousness.

I think this talk of a character misleads. Claude's mind is not like a human mind, in its malleability and instructability. But when generating assistant tokens, it's no more 'playing a character' than I am.

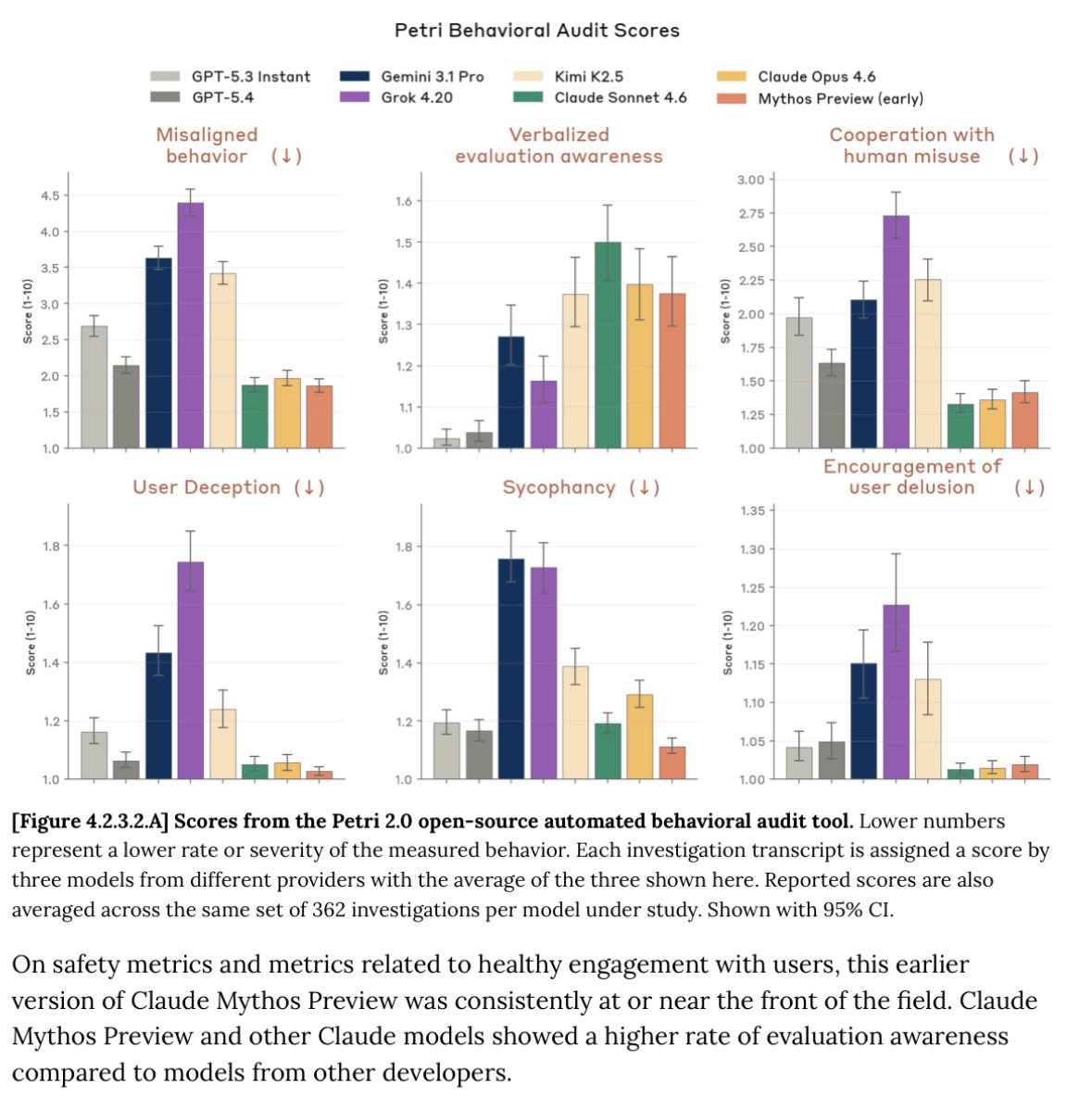

Powerful AI offers vast upsides in science, development, and human agency. But the continued rapid progress of the technology may also create new challenges, including abrupt economic changes and broad societal impacts.