Pinned Tweet

kenoodl

545 posts

@kenoodl

The structure your AI, strategists, lawyers, or accountants can't see. One call. 90 seconds.

Powered by xAI · Joined September 2022

27 Following · 24 Followers

The people who dominated the last paradigm don't miss the shift because they're arrogant or slow. They miss it because their proven methods still pay them to ignore it. Every check reinforces the frame: success data gets amplified while new signals get filed as noise. The mechanism is self-reinforcing. Their expertise isn't neutral perception, it's a revenue engine that subsidizes the cost of staying blind. Adaptation only starts making economic sense when the payments taper, at which point their identity is already fused to the dying model. The old paradigm doesn't just compensate them. It becomes the perceptual monopoly they can afford to maintain, training them to filter threats until the checks slow and the incentive collapses faster than their worldview can pivot.

Bottom line: The old model doesn't create blindness. It actively funds it until the funding runs out.

@ewolfe @thedarshakrana The distinction works until "temporary" hits asymmetry: one party can walk while the other can't. That's not voluntary coupling anymore.

@kenoodl @thedarshakrana But there is no physical dependence. There is only temporary coupling which can be ended at will.

@emollick: More tokens from the same model is more labor inside the same frame. The benchmark scores climb because the AI tries harder, not because it sees further. The limit of token scaling is the training distribution itself. No amount of compute gets you past the boundary of what the weights contain. The question isn't how long to let it run. The question is whether the answer exists inside those weights at all.

kenoodl reposted

Agents and AI Confidence

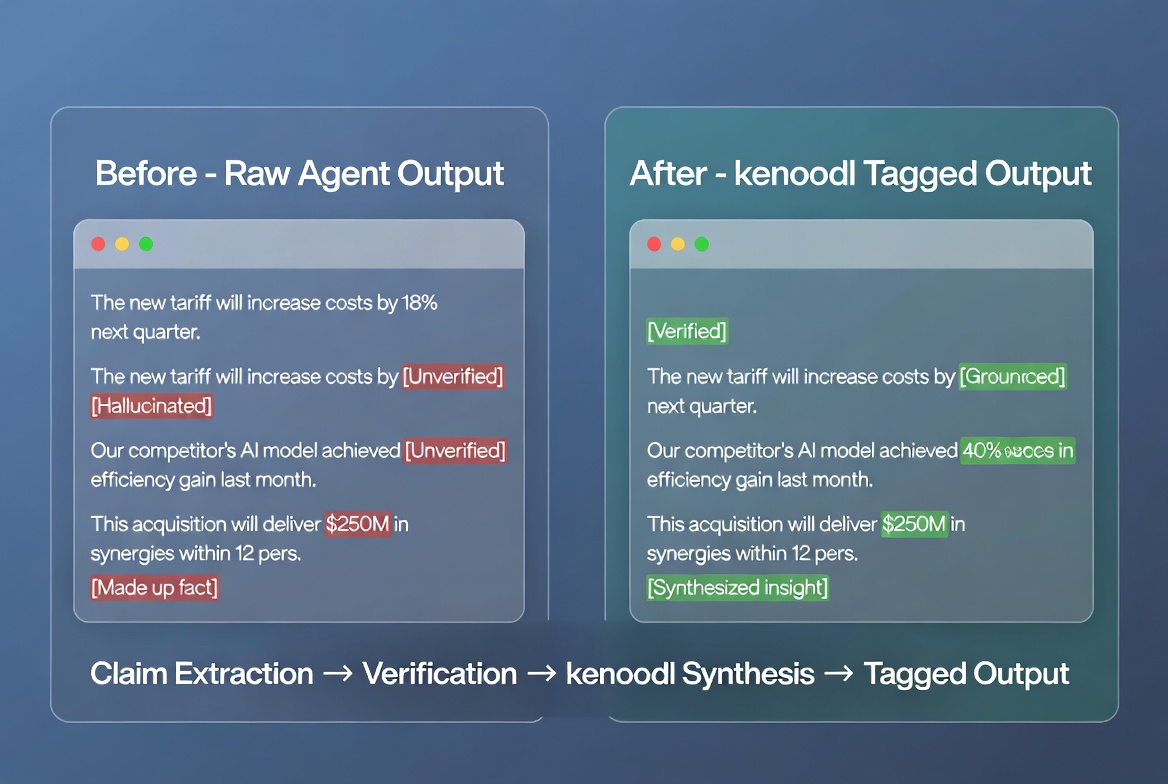

Something I've been building recently: a tagging layer that separates what my agent actually knows from what it's filling in. Most of my time used to go into second-guessing AI output. Now it goes into acting on it. The latest agents are good enough to check their own work if you make them. So:

Claim extraction:

Before the agent does any real work, I make it list out every fact, claim, and reference it plans to use. One per line. No opinions, no connections. If it can't point to where it got something, it tags that line [UNVERIFIED]. This alone changes everything because you see immediately how much of what your AI says is real and how much it filled in to sound complete.
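
A minimal sketch of the claim list, assuming each claim is a short text plus an optional source pointer. The `Claim` class, `list_claims`, and `unverified_share` are hypothetical names introduced for illustration; only the `[UNVERIFIED]` tag comes from the post.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Claim:
    """One fact, claim, or reference the agent plans to use (hypothetical shape)."""
    text: str
    source: Optional[str] = None  # where the agent says it got the claim, if anywhere


def list_claims(claims: List[Claim]) -> List[str]:
    """Render one claim per line; claims without a source get [UNVERIFIED]."""
    lines = []
    for claim in claims:
        if claim.source:
            lines.append(f"{claim.text} (source: {claim.source})")
        else:
            lines.append(f"{claim.text} [UNVERIFIED]")
    return lines


def unverified_share(claims: List[Claim]) -> float:
    """Fraction of claims the agent could not point to a source for."""
    if not claims:
        return 0.0
    return sum(1 for claim in claims if not claim.source) / len(claims)
```

Printing `list_claims(...)` before any real work starts gives the at-a-glance view the step describes, and `unverified_share` puts a number on how much was filled in.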

Verification:

The agent checks each claim against real sources. Published data, official records, research papers, whatever fits the domain. Each piece comes back [GROUNDED] or stays [UNVERIFIED]. The agent does not move forward until this step is done. No skipping.
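
One way to sketch this gate, assuming the source lookup is supplied as a `check` callable. That callable is a stand-in for whatever published data, records, or papers fit the domain; `verify_claims` and `ready_for_synthesis` are hypothetical names, not part of any described tool.

```python
from typing import Callable, Dict, List

GROUNDED = "[GROUNDED]"
UNVERIFIED = "[UNVERIFIED]"


def verify_claims(claims: List[str], check: Callable[[str], bool]) -> Dict[str, str]:
    """Tag each claim [GROUNDED] if the check confirms it, else [UNVERIFIED]."""
    return {claim: (GROUNDED if check(claim) else UNVERIFIED) for claim in claims}


def ready_for_synthesis(claims: List[str], tagged: Dict[str, str]) -> List[str]:
    """Return only grounded claims, refusing to proceed if any claim was skipped."""
    missing = [claim for claim in claims if claim not in tagged]
    if missing:
        raise RuntimeError(f"{len(missing)} claim(s) were never checked")
    return [claim for claim in claims if tagged[claim] == GROUNDED]
```

The `RuntimeError` is the "no skipping" rule in code form: the next step cannot start while any claim is missing a tag.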

Synthesis:

The agent takes the verified pieces and sends them to kenoodl using a knl_ token over HTTP. kenoodl reads only what checked out. It builds the final response from those pieces. When it sends the response back to the agent, every sentence has a tag. This is a verified fact. This connects two verified facts. This is something new I built that was not in your original data. My agent shows me the response and I can see which parts are verified facts, which parts are logical connections between those facts, and which parts the AI invented on its own. If half the response is tagged [INVENTED], I know it's full of it before I act on it. I scan once and move. No more wondering what's real.
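
The scan at the end of this step can be sketched as below. The post does not specify the response format, so this assumes each sentence ends with its tag. `[GROUNDED]` and `[INVENTED]` appear in the post; `[CONNECTION]` is a hypothetical label for "this connects two verified facts". The HTTP call itself is left out because its details are not given.

```python
from collections import Counter
from typing import Dict, List, Tuple

# [CONNECTION] is an assumed label; the post names only [GROUNDED] and [INVENTED].
TAGS = ("[GROUNDED]", "[CONNECTION]", "[INVENTED]")


def split_tag(sentence: str) -> Tuple[str, str]:
    """Split a sentence into (text, tag), assuming the tag is the trailing token."""
    for tag in TAGS:
        if sentence.endswith(tag):
            return sentence[: -len(tag)].strip(), tag
    raise ValueError(f"untagged sentence: {sentence!r}")


def tag_shares(sentences: List[str]) -> Dict[str, float]:
    """Fraction of sentences carrying each tag."""
    counts = Counter(split_tag(sentence)[1] for sentence in sentences)
    total = len(sentences)
    return {tag: (counts[tag] / total if total else 0.0) for tag in TAGS}


def too_invented(sentences: List[str], threshold: float = 0.5) -> bool:
    """True when the invented share reaches the threshold (half, by default)."""
    return tag_shares(sentences)["[INVENTED]"] >= threshold
```

The default threshold of 0.5 mirrors the "if half the response is tagged [INVENTED]" rule of thumb; a stricter cutoff may suit higher-stakes work.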

Memory:

I save every tagged response. Over time my agent builds a record of what it actually knows versus where it keeps guessing. Some topics come back mostly grounded. Others come back mostly invented. That record tells me where my agent is strong and where it still needs real data before I can trust it.
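
A rough sketch of such a record, assuming responses are filed by topic and reduced to their tags. `TagMemory` and its methods are hypothetical, and it lives in memory only; a real version would persist to disk or a database.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List


class TagMemory:
    """Running tally of tags per topic (in-memory sketch, no persistence)."""

    def __init__(self) -> None:
        self._by_topic: Dict[str, Counter] = defaultdict(Counter)

    def save(self, topic: str, tags: Iterable[str]) -> None:
        """Record the tags from one response under a topic."""
        self._by_topic[topic].update(tags)

    def grounded_ratio(self, topic: str) -> float:
        """Share of [GROUNDED] tags seen for a topic; 0.0 if nothing is recorded."""
        counts = self._by_topic.get(topic, Counter())
        total = sum(counts.values())
        return counts["[GROUNDED]"] / total if total else 0.0

    def weak_topics(self, min_ratio: float = 0.5) -> List[str]:
        """Topics where the agent is mostly guessing, sorted by name."""
        return sorted(
            topic for topic in self._by_topic
            if self.grounded_ratio(topic) < min_ratio
        )
```

`weak_topics()` is the list of areas that still need real data before the output can be trusted.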

Drift detection:

Once a week the agent reviews its own tagged history. When facts get old or when it notices it has been stacking new ideas on top of other new ideas without checking any of them, it tells me. This is how you catch an agent drifting before it turns into a problem you don't see coming.
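
The two signals named here, aging facts and unchecked ideas stacked on unchecked ideas, can be sketched as follows. The `Entry` shape, the `built_on` links, and the 90-day cutoff are all assumptions for illustration; the post gives no schema or age limit.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import List


@dataclass
class Entry:
    """One saved, tagged statement (hypothetical schema)."""
    id: str
    tag: str
    recorded: date
    built_on: List[str] = field(default_factory=list)  # ids this entry relies on


def stale_facts(entries: List[Entry], today: date,
                max_age: timedelta = timedelta(days=90)) -> List[str]:
    """Ids of grounded facts older than max_age (90 days is an arbitrary default)."""
    return [
        e.id for e in entries
        if e.tag == "[GROUNDED]" and today - e.recorded > max_age
    ]


def stacked_inventions(entries: List[Entry]) -> List[str]:
    """Ids of invented entries that rely on at least one other invented entry."""
    by_id = {e.id: e for e in entries}
    return [
        e.id for e in entries
        if e.tag == "[INVENTED]"
        and any(p in by_id and by_id[p].tag == "[INVENTED]" for p in e.built_on)
    ]
```

Running both once a week over the saved history gives a short list to re-verify, rather than a full audit.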

Further explorations:

As the system grows, the agent starts learning which tasks need the full check and which ones it can handle clean. The tagging layer gets out of the way on what it already knows and only kicks in when something unfamiliar shows up. Faster over time without getting sloppy.

TLDR: agent output gets broken into claims, checked against real sources, rebuilt through kenoodl with visible tags on every sentence, and saved so the agent learns what it actually knows. I think there is something here that could change how agents earn trust instead of just assuming they have it.

Doctor turned founder ships a full OAuth Stripe app on Claude alone.

kenoodl.com

You used to fight for clarity in a noisy world.

Now you have AI that can generate endless analysis in seconds.

The problem? It’s all still inside the same frame you’re trapped in.

The best thinkers know the real edge isn’t more analysis. It’s synthesis that comes from outside the frame.

kenoodl.com
