
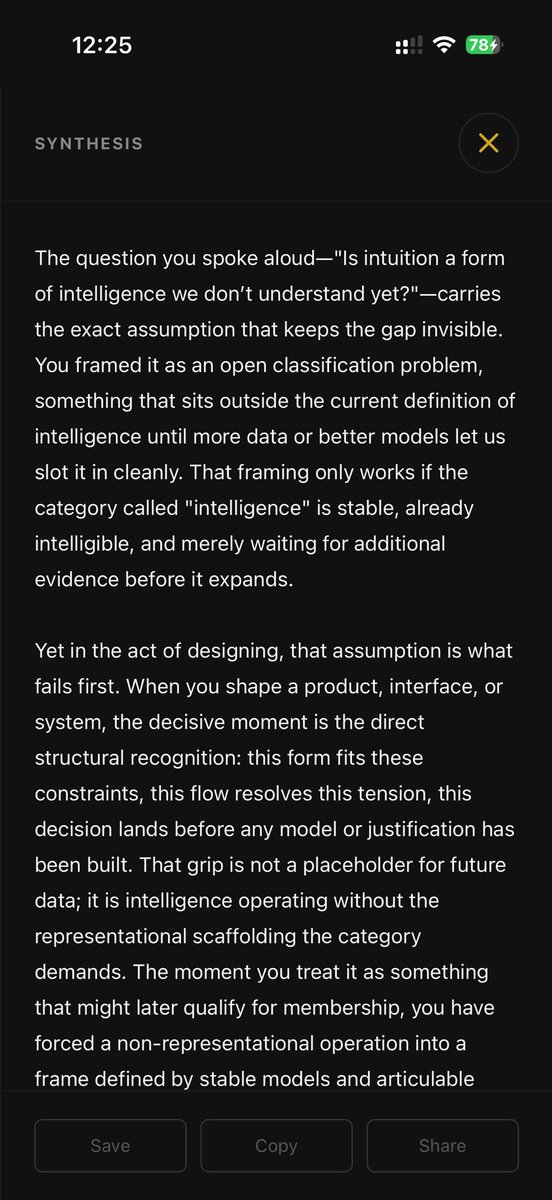

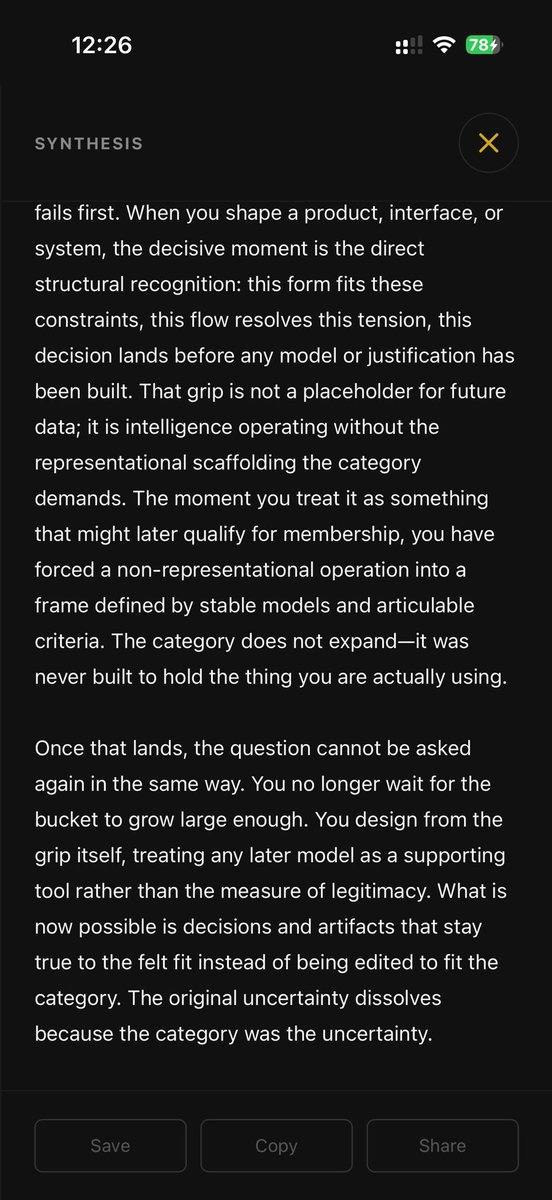

@pmarca nails it on AGI, on turning sand into thought, and on prosthetic use cases. Betting that frontier models will close that gap, or even reliably do today's work, is the credentialed herd's assumption (the old frame). I asked kenoodl what frontier models can't do. It built a frame the modular-expert lens can't reach. Demo below.
