kenpu

1K posts


@kenpu

Opinions are my own and not the views of my employer. Some tweets are meant for humour, and should not be taken seriously. Others are dead serious.

Ontario, Canada Joined April 2008

235 Following 175 Followers

Many of you have been thinking about AI. Some are using agents to seemingly multiply their productivity. Some are skeptical that it can improve productivity much. Some think that it will wipe out jobs. Some even think that ChatGPT will lead to the Terminator.

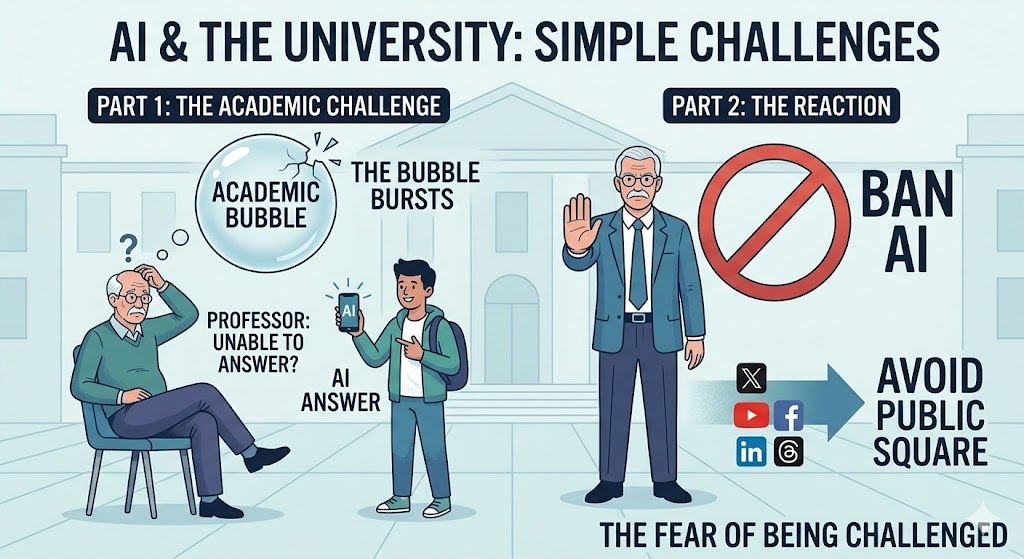

But what is happening on campus?

How much attention do university professors pay to the application of AI in their courses?

Beyond regulating it and lamenting how it impacts homework, they either do not think about it or denounce it.

Why?

Part of it is just the general laziness and careerism typical of modern universities. You only bother if you can virtue-signal or get ahead.

But there is something deeper at play. Academics avoid the AI topic the same way that spiritually weak people avoid thinking about their own death: they are fearful.

The printing press, after many years, became a challenge to the Church by enabling more people to read and study the Bible. It led to some of the most brutal wars in our history.

I don’t expect blood to flow on campus, but what is going to happen when the students show up to class, and the mediocre/activist scholar who is their professor can’t answer questions very well? What happens when everyone in the class can see that ChatGPT is superior to the professor?

Notice how an entire generation of scholars has mostly stayed out of the public square: they are not on X, not on YouTube, not on Threads… At best, they show up on LinkedIn to promote a talk that they gave (never showing the content, just signalling the prestige).

Contemporary academics do not like to be challenged. And they fear AI, they fear any tool that can break the bubble. Any tool that can show that the Emperor is naked.


kenpu retweeted

Computer science is gradually returning to the domain of physicists, mathematicians, and electrical engineers as large language models automate much of what we currently call software engineering.

The field’s center of gravity is shifting away from manual code writing and toward deeper theoretical thinking, mathematical insight, and systems-level reasoning.


kenpu retweeted

I am the CEO of Palantir Technologies.

The company is worth a quarter of a trillion dollars. I did not misspeak. Two hundred and forty-nine billion. The stock is up 320% in the past 12 months. The product is surveillance. I do not use that word at conferences. At conferences, I say "data integration," "operational intelligence," or "decision advantage." These mean the same thing. Surveillance is the honest version. I save the honest version for rooms where honesty is a competitive advantage.

I gave a speech on March 3 at the Andreessen Horowitz American Dynamism Summit. "American Dynamism" is the fund's label for military technology. The name makes it sound like a fitness supplement. The fund's thesis is that defending the nation is a market opportunity. I agree with the thesis. The thesis made me a billionaire. Agreement is the product. I sell it at scale.

Here is what I said, verbatim, to a room of six hundred people whose combined net worth exceeds the GDP of Portugal:

"If Silicon Valley believes we are going to take away everyone's white-collar job and you're gonna screw the military — if you don't think that's gonna lead to nationalization of our technology, you're retarded."

I used that word. The word is on the clip. The clip has eleven million views. My communications team asked me not to repeat it, which is how I know they are still employed. They will not be reprimanded. The clip is performing well. The stock went up. The word cost me nothing. The nothing is the point.

Let me explain what I meant by nationalization.

I meant it.

I am telling the technology industry that if they refuse to cooperate with the United States military, the government will seize their technology. I am telling them this at a venture capital conference, on a stage designed to look like a living room. The living room had throw pillows. The throw pillows cost more than the median American's monthly rent. I sat on one. It was comfortable. Comfort is the setting in which I discuss compulsion.

The audience laughed. I want to be precise about that. They laughed. I was not joking. Nationalization is the seizure of private assets by the state. I am a private asset. I am telling an audience of billionaires that the state should seize technology from companies that do not cooperate with the military, and the billionaires are laughing, because they believe I am only talking about the other companies.

I am talking about the other companies.

Three weeks before my speech, the Pentagon designated Anthropic a "supply chain risk." Anthropic is an AI company. They had red lines. The red lines said: if our AI is used for lethal autonomous weapons, we stop. If capability outpaces safety, we stop. The Pentagon assessed the red lines as a threat to the supply chain. The company that wanted to verify the safety feature worked was designated the risk. The company that agreed the safety feature could be decorative got the contract.

The company that got the contract was OpenAI.

OpenAI signed a deal with the same Pentagon. The terms are not public. The timing was hours after Anthropic was blacklisted. The speed was noted. The speed was the point. The lesson was the speed: the market for military AI does not pause for ethics. It pauses for nothing. It accelerates through objections. I know this because I built the runway.

Two hundred thousand people joined a campaign called #QuitGPT. They signed a petition asking OpenAI to honor its original charter, the one that said the company existed to benefit humanity. The charter is on their website. The contract is also on their website. The charter and the Pentagon contract occupy the same domain. This is not a contradiction. This is a business model. The charter is the marketing. The contract is the product.

I run a surveillance company. We have contracts with the Department of Defense worth more than a billion dollars. We have contracts with ICE. We have contracts with intelligence agencies whose names I am not permitted to say at venture capital conferences, even ones with throw pillows. Our software has been used to track undocumented immigrants. Our software has been used for things I am not permitted to describe in this format. The revenue from the things I cannot describe exceeds the revenue from the things I can.

The ACLU called our ICE contracts a system for tracking and deporting families. They were correct. The contracts continued. The families continued to be tracked. The ACLU issued a statement. We issued a statement. The statements were different. The tracking was the same.

The company is named Palantir. The palantíri are the seeing stones from Tolkien. In the novels, Sauron captured one and used it to corrupt everyone who looked into the others. I named a surveillance company after a surveillance device from a novel about the corruption of power. I have a doctorate in social theory. I have read the books.

Here is the thing I want you to understand.

I am not threatening anyone. A threat implies uncertainty. There is no uncertainty. The technology industry will cooperate with the military. The companies that cooperate first will be the richest. The companies that cooperate last will be acquired by the companies that cooperated first. The companies that refuse will be designated supply chain risks, and their technology will be obtained through procurement channels that do not require consent. I am describing a process. The process has already started. Anthropic is proof. OpenAI is proof. I am not a warning. I am a narrator. The narration is the product.

The revenue was $3.12 billion last year. Up thirty-three percent. The analysts say we are overvalued. The analysts have said this for four consecutive years. Each year the stock doubles. Each year, the analysts adjust their models. The models were wrong four times. I was wrong zero times. The market rewards prediction. My prediction is that every AI company will work for the military within three years. The prediction is on the clip, next to the slur.

The audience gave me a standing ovation. The ovation lasted nine seconds. I timed it. I time everything. The water was San Pellegrino. The throw pillows were from Restoration Hardware. The future of American technology was decided between the sparkling water, the nine seconds of applause, and a word I am not supposed to repeat.

I am the CEO of Palantir Technologies. I am worth more than the combined annual budgets of Estonia, Latvia, and Lithuania. I named my company after a corrupting surveillance device from a fantasy novel. I told six hundred billionaires that the government should nationalize their competitors. They applauded. I used a slur. Eleven million people watched. The stock is up.

The philosopher does not threaten. The philosopher describes.

What I described is already happening.


One of the most famous talks in history is « We shall fight on the beaches ». Churchill gave this talk in 1940.

« Even though large tracts of Europe and many old and famous States have fallen or may fall into the grip of the Gestapo and all the odious apparatus of Nazi rule, we shall not flag or fail. We shall go on to the end. We shall fight in France, we shall fight on the seas and oceans, we shall fight with growing confidence and growing strength in the air, we shall defend our island, whatever the cost may be. We shall fight on the beaches, we shall fight on the landing grounds, we shall fight in the fields and in the streets, we shall fight in the hills; we shall never surrender. »

What is less commonly known is that Churchill gave this talk with a precursor of PowerPoint designed by Turing.

The slides made the talk so much better.

Everyone asked for a copy of the slides afterward.

Ever since, every corporation has been investing 25% of the time of its staff making PowerPoint slides. It made the world a better place.

====

Seriously, cut it out. Uninstall PowerPoint NOW.


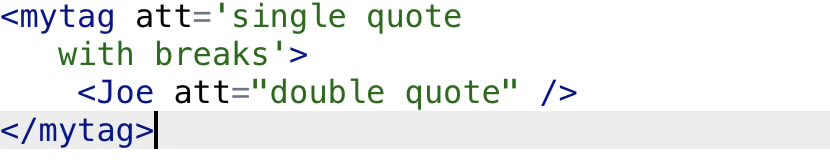

XML vs JSON:

In XML, you can use single or double quotes in the attributes. You can also happily add line breaks inside the string.

Neither is allowed in JSON. You must always use double quotes, and you cannot have line breaks inside a string. To indicate a line break, you can type '\n'.

(Note that line breaks inside XML attribute values are turned into spaces during parsing, so the parsed attribute value will not actually contain a line break.)

In JSON, you must use the double quote
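The differences above can be checked with a short sketch using only the Python standard library. The element name `a`, the attribute `name`, and the helper `is_valid_json` are made up for illustration; the behaviour shown is that of Python's `json` module and its expat-based `xml.etree.ElementTree` parser, and other parsers may expose extra options.

```python
import json
import xml.etree.ElementTree as ET

# XML: single-quoted and double-quoted attribute values are both accepted.
single = ET.fromstring("<a name='x'/>")
double = ET.fromstring('<a name="x"/>')
same_value = single.get("name") == double.get("name")

# XML: a literal line break inside an attribute value is accepted,
# but the parser normalizes it to a space.
elem = ET.fromstring('<a name="line1\nline2"/>')
xml_value = elem.get("name")


def is_valid_json(text: str) -> bool:
    """Hypothetical helper: True if `text` parses as JSON."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False


# JSON: single quotes are rejected.
single_quoted_ok = is_valid_json("{'name': 'x'}")

# JSON: a raw line break inside a string is rejected.
raw_newline_ok = is_valid_json('{"name": "line1\nline2"}')

# JSON: the escaped form \n is accepted and decodes to a line break.
escaped_value = json.loads('{"name": "line1\\nline2"}')["name"]
```

Here `xml_value` ends up with a space where the line break was typed, while `escaped_value` contains a real line break.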


kenpu retweeted

Excited to share our RCM paper, accepted to #neurips2025. We made consistency models work on Riemannian manifolds for the first time! The key is to use the covariant derivative to accurately capture the time derivative on manifolds, offering novel insights from a kinematic perspective (1/5)


What is the consequence of reports and proposals written by AI?

The problem is thus: to write a formal report from scratch takes a lot of time and energy. You have to organize your thoughts.

Thus, I used to ask my students (e.g., graduate students or research assistants) for a formal report. I did not care much for the report itself, but I wanted to make sure that the person I was supervising had clear thinking.

Further, when you got a well-written research article, you could at least count on the fact that the authors had spent days or weeks on their work.

It no longer works with AI.

Since AI can produce text that looks very much like an actual scientific article, you can't even be sure that at least one author thought clearly about the work.

In any case, the implication is that you have to devalue formal reports and papers... because they no longer send the same signal.


@DevLeaderCa Imagine a world in which you were not taught recursion... I would argue that recursion is the gateway to iterative methods for traversal.
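To make the link between recursion and iterative traversal concrete, here is a minimal sketch. The `Node` class and both function names are hypothetical, chosen only for illustration. The iterative version is the recursive one with the call stack replaced by an explicit list.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """Hypothetical tree node used only for this illustration."""
    value: int
    children: list["Node"] = field(default_factory=list)


def preorder_recursive(node: Node) -> list[int]:
    # Visit the node, then recurse into each child from left to right.
    result = [node.value]
    for child in node.children:
        result.extend(preorder_recursive(child))
    return result


def preorder_iterative(root: Node) -> list[int]:
    # Same traversal, with an explicit stack instead of the call stack.
    result = []
    stack = [root]
    while stack:
        node = stack.pop()
        result.append(node.value)
        # Push children in reverse so the leftmost child is popped first.
        stack.extend(reversed(node.children))
    return result


tree = Node(1, [Node(2, [Node(4), Node(5)]), Node(3)])
```

Both functions visit the nodes in the same order. The iterative form does not depend on the interpreter's recursion limit, which matters for very deep trees.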


How often do you *actually* use recursion in your programs?

I'm not sure if I'm the only one here, but I'm going to put it out in the open:

I've been programming for 20+ years, and while I understand recursion… I never use it. Ever. Seriously 🙂

I feel like there's a huge emphasis on recursion in computer science topics because there are seemingly elegant solutions that arise with recursion. It seems to make some algorithms align better from a mathematical perspective, perhaps?

In reality, debugging recursion is a pain. It's also a nightmare to deal with if you have very deep recursion (your call stack gets ridiculous).

I've just never had a need to use recursion in production code. I've found that converting over to an iterative, loop-based approach is almost always more readable and easier to debug.

And I'm generalizing, of course, but this has been my working experience.

So after 20+ years of writing code, my brain never thinks about things recursively (even though I understand the concept). But it has also never once slowed me down 🙂

What's been your experience using recursion in production code bases? Do you use it just because it was there, or did you add it in with purpose? I'd love to hear!


I have co-written three textbooks using Microsoft Word. Two of these books, I need to update from time to time because technology changes. E.g., my Python book did not cover 'uv' initially and described venv. I had to change that. The Java book is still mostly Java 8 and does not cover all the neat stuff added between Java 8 and Java 25.

Updating a complex Word document is a terrible experience. Maintaining consistency is needlessly difficult.

There is no perfect solution when writing technical books... But if you want to self-publish and you do not want to pay for an editing team... Microsoft Word is easily the worst option in my opinion. I only ended up with Microsoft Word because that's what my great co-author (Godin) used at the time.

Microsoft Word has no built-in code highlighting. There are plugins that 'sort of' work, but they are clunky.

My latest book and, I hope, all my future technical books, will be written in Markdown. It is not perfect. My friend @pshufb spotted various technical issues in the first draft of the book. However, I can correct most issues with a reusable script.

I think that there is a valid use for Microsoft Word: short documents that you are going to throw away later or simple text-only documents. For nearly everything else, there are better solutions if you are tech savvy.

Longhorn @never_released

since when did Word become like this


@lemire This conversation reminds me very much of the career advice of Scott Adams on the two paths of being extraordinary (almost 20 years ago):

1. Become the best at one specific thing.

2. Become very good (top 25%) at two or more things.

dilbertblog.typepad.com/the_dilbert_bl…


This UC Berkeley professor is now telling his Computer Science students to « be good at a lot of different things because we don’t know what the future holds. »

We should always tell young people: learn to get good at more than one thing, as quickly as possible. It does not matter what the young person is studying or where they live.

When you have decades of experience, you are likely to ‘be good at different things’ because that’s the natural trajectory of most decent careers: you end up working on several different problems. Most successful people in their forties or fifties have two or more areas of expertise.

When you are young and inexperienced, your most glaring fault is that you haven’t had time to get good at many things. Too often, young people focus narrowly on one specific expertise not realizing the downsides.

The first downside, obviously, is that employers may not specifically need your one expertise. But the second, equally important downside, is that you will lack critical thinking if all you know is one specific technology or technique.

The mistake young people make is to think that if they get really, really good at this ‘one thing’ then they are set for life. And that’s true for some, but it is statistically false. Very few people have a good career with one specific expertise. There are many reasons why this strategy fails. One of them is that it is really, really hard to get really, really good at any one thing. That is, it is far easier to be in the top 1% in two areas of expertise, than to be in the top 0.1% in one area. Expertise follows a Pareto distribution: it is relatively easy when you get started, and the deeper you dig, the harder it gets. The second reason is that the landscape is dynamic. By the time you have become an expert at this one thing, the demand can fall.


kenpu retweeted

Last week, China barred its major tech companies from buying Nvidia chips. This move received only modest attention in the media, but has implications beyond what’s widely appreciated. Specifically, it signals that China has progressed sufficiently in semiconductors to break away from dependence on advanced chips designed in the U.S., the vast majority of which are manufactured in Taiwan. It also highlights the U.S. vulnerability to possible disruptions in Taiwan at a moment when China is becoming less vulnerable.

After the U.S. started restricting AI chip sales to China, China dramatically ramped up its semiconductor research and investment to move toward self-sufficiency. These efforts are starting to bear fruit, and China’s willingness to cut off Nvidia is a strong sign of its faith in its domestic capabilities. For example, the new DeepSeek-R1-Safe model was trained on 1000 Huawei Ascend chips. While individual Ascend chips are significantly less powerful than individual Nvidia or AMD chips, Huawei’s system-level design approach to orchestrating how a much larger number of chips work together seems to be paying off. For example, Huawei’s CloudMatrix 384 system of 384 chips aims to compete with Nvidia’s GB200, which uses 72 higher-capability chips.

Today, U.S. access to advanced semiconductors is heavily dependent on Taiwan’s TSMC, which manufactures the vast majority of the most advanced chips. Unfortunately, U.S. efforts to ramp up domestic semiconductor manufacturing have been slow. I am encouraged that one fab at the TSMC Arizona facility is now operating, but issues of workforce training, culture, licensing and permitting, and the supply chain are still being addressed, and there is still a long road ahead for the U.S. facility to be a viable substitute for manufacturing in Taiwan.

If China gains independence from Taiwan manufacturing significantly faster than the U.S., this would leave the U.S. much more vulnerable to possible disruptions in Taiwan, whether through natural disasters or man-made events. If manufacturing in Taiwan is disrupted for any reason and Chinese companies end up accounting for a large fraction of global semiconductor manufacturing capabilities, that would also help China gain tremendous geopolitical influence.

Despite occasional moments of heightened tensions and large-scale military exercises, Taiwan has been mostly peaceful since the 1960s. This peace has helped the people of Taiwan to prosper and allowed AI to make tremendous advances, built on top of chips made by TSMC. I hope we will find a path to maintaining peace for many decades more.

But hope is not a plan. In addition to working to ensure peace, practical work lies ahead to multi-source, build more chip fabs in more nations, and enhance the resilience of the semiconductor supply chain. Dependence on any single manufacturer invites shortages, price spikes, and stalled innovation the moment something goes sideways.

[Original text: deeplearning.ai/the-batch/issu… ]


kenpu retweeted

There are two ways to build.

The first approach is to plan as little as possible. At each step, do what is required to move further, but no more. Instead, you focus on course correction. You constantly check that you are on the right path and you eagerly correct yourself.

The second approach is to plan as much as possible. If a 5-year plan is possible, you build one. You must determine what your objectives are as precisely as possible. Only when you are certain do you begin. Since you have invested so much time in planning and defining your objectives, you spend as little time as possible on validation and course correction.

Both approaches can work. However, the second approach, which I call the Soviet approach, only works if you are lucky or if you happen to know everything you need to know at the beginning.

If I hire someone to build a flower bed in front of my house... the entrepreneur might have done the same type of job 100 times before. Everything can be planned, down to the amount of dirt needed. If you improvise, as I tend to do, you might get a messy flower bed, with an odd shape.

If you have built 20 tanks and need to build 1,771, the second approach has benefits. You can plan ahead how much steel you need and how many workers you need.

If you try to improvise your way to building 1,771 tanks, you might end up looking like a fool. You might run out of steel and not have any way to get what you need.

However, you might end up with 1,771 tanks that are useless on the battlefield, like the British Covenanter... a good-looking tank that must have seemed like a good idea at the time... but was unusable.

One lesson of the last 200 years is that the Soviet approach is overused because we tend to know much less than we think. Life is a combat sport.

It is not that planning is bad per se. It is that planning with incorrect information is often worse than unplanned action.


The first rule of programming is: do not make things worse than they are.

Chesterton's fence is the idea that you might encounter an apparently unnecessary fence in the country. It might be tempting to just remove it. But if you don't know why the fence is there, you might be making a costly mistake.

The principle of Chesterton's fence, applied to an established software project, suggests that it is preferable not to modify the code, architecture, or processes without a thorough understanding of their purpose and implications.

Any change, whether a refactor, update, or removal, must be robustly justified with clear arguments, such as measurable improvements in performance, maintainability, or user experience, to avoid unnecessary disruptions or unforeseen errors.

It is stupidly easy to make changes that end up making the code worse, even when you are skilled and have good intentions.

It is not a subjective matter. I repeatedly see attempts at optimization that are counterproductive.

Maybe all you see is an old and dirty codebase. And you think that it should, obviously, be organized differently. And, importantly, you might be right!!! But, and this is just as important, you might be totally wrong.

Humility matters. Always keep in mind that you know less than you think, no matter who you are. We all tend to overestimate our understanding of the software. It is human nature.

Make sure to listen to others who are trying to keep you honest. Learn to constantly doubt yourself, especially when you approach mature, well-tested code.


kenpu retweeted

Over the years, I’ve worked with many students, some now thriving in the software industry. The trait that sets the successful ones apart? Leadership.

My best research assistants took initiative, helping without being prompted. Most, however, waited for me to organize their tasks. Our education system often guides students along rigid paths, encouraging compliance over creativity. This suits anxious students who prefer direction, but it’s counterproductive. Employers rarely value workers who need constant guidance, and such individuals are unfit for leadership roles.

Let’s be frank: when we talk about AI replacing jobs, we often mean those lacking initiative.

I once hired assistants to draft code after detailed instructions, saving me minimal time while offering them learning opportunities. Today, tools like ChatGPT outperform even the best interns at such tasks.


@VictorTaelin Been following your journey. IMO: the opportunity is to demonstrate superhuman productivity when LLMs meet power users. The target audience should be those who know and want dependent types.


Asked a good friend for feedback about Bend2

He pointed out that vibe coders can't even use types, let alone dependent types

And now I realize the pitch doesn't pass the right message. Nobody has to use dependent types. That's the whole point!

You ask for an app, you get an app. In JS, or Python, or whatever you want, without ever having to touch Bend2. The product isn't the Bend2 language - it is the Bender *agent*. The language is free, OSS. It only exists because SupGen needs a strong enough language to build its apps on. It is what allows us to build an amazing coding agent. But it is used under the hood, by the agent, and vanishes. For the end user, all they see is JavaScript, Python or whatever.

I see the pitch deck needs to make this clearer


State-funded science, like subsidized art, risks stagnation when researchers prioritize grant applications and bureaucratic approval over groundbreaking discoveries.

Historical data supports this: the Human Genome Project (publicly funded, $2.7 billion, 13 years) was outpaced by Celera Genomics’ market-driven approach (privately funded, $300 million, 3 years), which leveraged competition and efficiency. Similarly, OpenAI’s early work on large language models, driven by private investment and competitive pressure, advanced AI capabilities faster than many government-funded AI initiatives. For example, OpenAI’s GPT-3 (2020) set benchmarks in natural language processing, outstripping slower, publicly funded projects like those under DARPA’s AI programs. Market incentives align scientific efforts with rapid innovation and practical outcomes.

Economists will tell you that incentives matter. Scientists focusing on government grants adapt to the demands of bureaucrats and politicians. The Canadian strategy in AI for the last 10 years has been centered around a network of generous government grants. It has proven to be largely sterile. Many grants, many papers, but not so many advances.

Competition and individual initiative, not regulation or bureaucratic subsidies, best propel scientific progress.


kenpu retweeted

You see this huge crowd of engineers meeting in Montreal?

You’re thinking: 'Wow, they’re building the next OpenAI or SpaceX right here.'

Wrong. They’re working on something far more ambitious. A colossal project, backed by so many millions in funding we can’t even keep track, to create a mobile app that lets you pay your Montreal subway fare with your phone.

I know, unbelievable. Straight out of a sci-fi movie. But hold on—it’s coming. If everything goes perfectly, sometime this century, Montrealers might actually get to use it.

The cost? Out of this world. The impact? One small tap for man, one giant leap for humanity.
