Kate Sanders

283 posts

@kesnet50

LLM post-training, reasoning, and multimodality. Ph.D. @jhuclsp, incoming researcher at Microsoft Copilot Tuning.

Cambridge, MA · Joined August 2021

362 Following · 322 Followers

Kate Sanders retweeted

🚨 Calling all RAG researchers & NLP folks:

RAG4Reports is coming to ACL 2026 this July — a workshop + shared tasks dedicated to the hardest version of RAG: long-form, citation-backed, multilingual report generation.

Here's why you should care 🧵👇

🔗 rag4reports.github.io

Kate Sanders retweeted

📹 + 🧠 + 📝 = 🔥 First call for MAGMaR 2026, the 2nd workshop on multimodal augmented generation via multimodal retrieval! If #RAG isn't hard enough for you, try multilingually and multimodally. Collocated with @aclmeeting in San Diego in July.

nlp.jhu.edu/magmar/

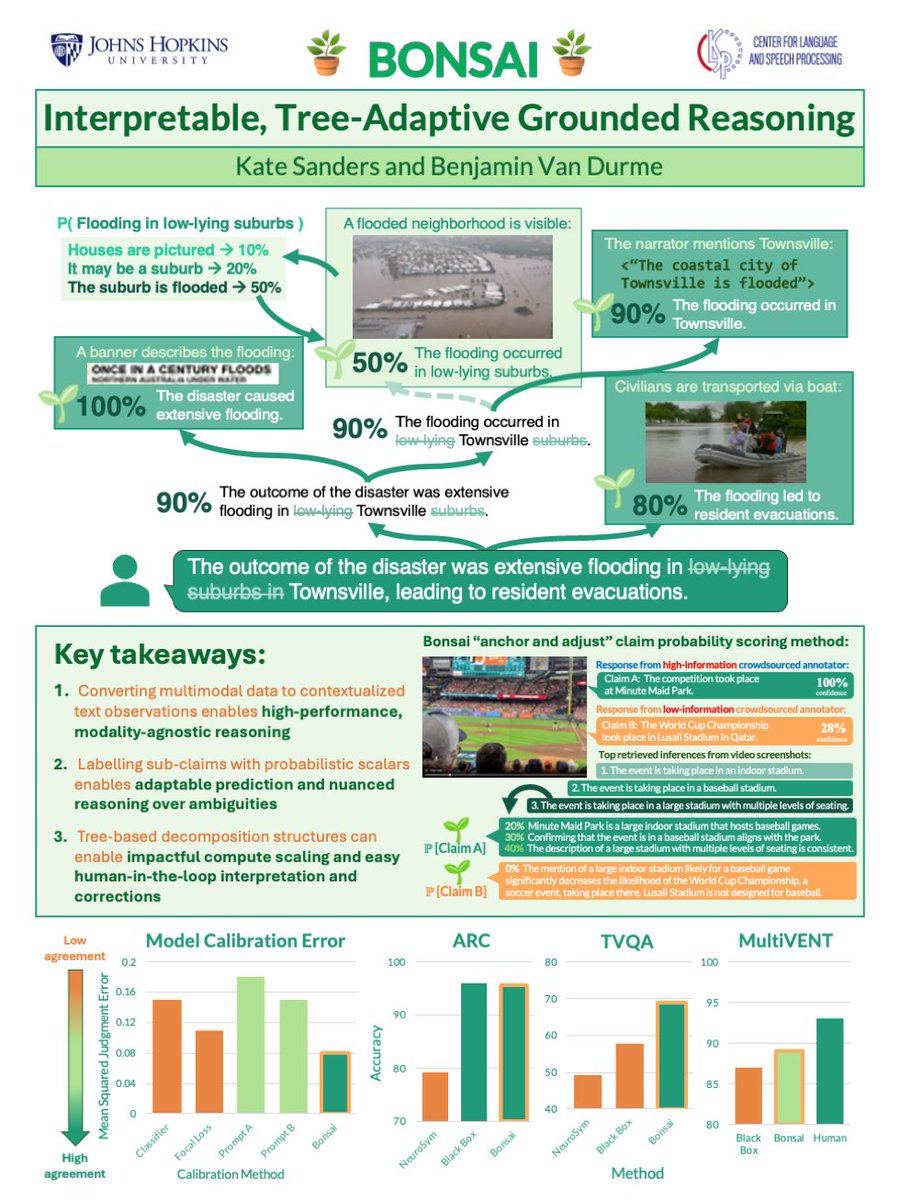

Will be presenting Bonsai on Thursday, 1/22 at the morning talks session and noon poster session:

arxiv.org/pdf/2504.03640

Kate Sanders retweeted

Mark your calendars! Join IAA next Tuesday, November 18 at 10:45 a.m. to hear Dr. @anqi_liu33 from @JHUCompSci present her talk "Robust and Uncertainty-Aware Decision Making under Distribution Shifts" as part of our seminar series.

Additional info: iaa.jhu.edu/event/iaa-semi…

Kate Sanders retweeted

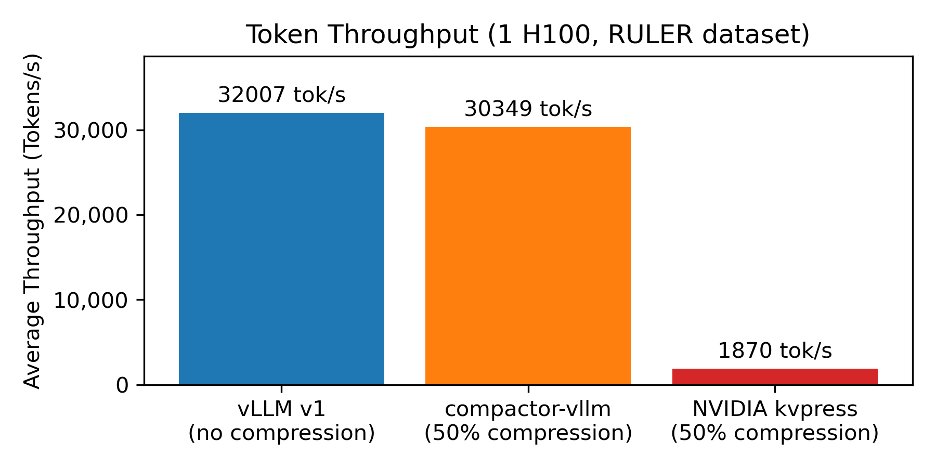

New in-depth blog post - "Inside vLLM: Anatomy of a High-Throughput LLM Inference System". Probably the most in-depth explanation of how LLM inference engines, and vLLM in particular, work!

Took me a while to get this level of understanding of the codebase and then to write up this one - i quickly realized i underestimated the effort. 😅 It could have easily been a book/booklet (lol).

I covered:

* Basics of inference engine flow (input/output request processing, scheduling, paged attention, continuous batching)

* "Advanced" stuff: chunked prefill, prefix caching, guided decoding (grammar-constrained FSM), speculative decoding, disaggregated P/D

* Scaling up: going from smaller LMs that can be hosted on a single GPU all the way to trillion+ params (via TP/PP/SP) -> multi-GPU, multi-node setup

* Serving the model on the web: going from offline deployment to multiple API servers, load balancing, DP coordinator, multiple engines setup :)

* Measuring perf of inference systems (latency (ttft, itl, e2e, tpot), throughput) and GPU perf roofline model

Lots of examples, lots of visuals!

---

I realize i've been silent on social - many of you noticed and thanks for reaching out! :) --> I'm so back! lots of things happened.

Also, in general, I'm a bit sick of superficial content, it really is an equivalent of junk food (h/t @karpathy).

I want to do the best/deepest technical work of my life over the next years and write much more in depth (high quality organic food ;)) so I might not be as frequent around here as i used to be (? we'll see). I'll make it a goal to share a few paper summaries a week or stuff that's relevant / in the zeitgeist.

If there are any topics from the past few weeks/months you'd like covered, drop them in the comments; i might focus on some of those in my next posts.

---

Huge thank you to @Hyperstackcloud for giving me an H100 node to run some of the experiments and analysis that i needed to write this up. The team there led by Christopher Starkey is amazing!

Also a big thank you to Nick Hill (who did a very thorough review of the post - basically a code review lol; Nick's a core vLLM contributor and principal SWE at RedHat) and to my friends Kyle Krannen (NVIDIA Dynamo), @marksaroufim (PyTorch), and @ashVaswani (goat) for taking the time during the weekend when they didn't have to!

Kate Sanders retweeted

Our work on readability evaluation for Plain Language Summarization will appear at #EMNLP2025!! @DanielKhashabi @mdredze

Paper: arxiv.org/pdf/2508.19221

TLDR: Traditional readability metrics correlate poorly with human judgements & LMs consider deeper readability features. 1/6

Kate Sanders retweeted

BloomScrub🧽 is now accepted to EMNLP 2025 as a main conference paper! Check out our post below for a detailed summary⬇️

Jack Jingyu Zhang @ ICLR 🇧🇷 @jackjingyuzhang

Current copyright mitigation methods for LLMs typically focus on average-case risks, but overlook worst-case scenarios involving long verbatim copying ⚠️. We propose BloomScrub 🧽, a method providing certified mitigation of worst-case infringement while preserving utility.

Taking off for Vienna #ACL2025! 🇦🇹 Excited to talk with people about transparent reasoning, multimodality, and fact verification. Stop by our multimodal RAG workshop on Friday 🔥🔥🔥

x.com/MAGMaR_worksho…

Please reach out if you want to grab coffee!

MAGMaR @MAGMaR_workshop

New Workshop on Multimodal Augmented Generation via MultimodAl Retrieval (MAGMaR) to be held at @aclmeeting ACL in Vienna this summer. We have a new shared task that stumps most LLMs - including ones pretrained on our test collection. nlp.jhu.edu/magmar/

Kate Sanders retweeted

@abe_hou @StanfordAILab @ben_vandurme @DanielKhashabi @TianxingH @jackjingyuzhang @orionweller @tsvetshop @du_hongru @StellaLisy @hiaoxui Congratulations!!!!! Excited to see what you do next!

I am excited to share that I will join @StanfordAILab for my PhD in Computer Science in Fall 2025. Immense gratitude to my mentors: @ben_vandurme @DanielKhashabi @TianxingH @jackjingyuzhang @orionweller @tsvetshop Lauren Gardner @du_hongru @StellaLisy @hiaoxui

🧵:

Kate Sanders retweeted

@jeff_cheng_77 @danqi_chen @ben_vandurme @ruyimarone @orionweller @jhuclsp Super exciting, huge congratulations!!!!!

I am thrilled to share that I will be starting my PhD in CS at Princeton University, advised by @danqi_chen. Many thanks to all those who have supported me on this journey: my family, friends, and my wonderful mentors @ben_vandurme, @ruyimarone, and @orionweller at @jhuclsp.
