Pinned Tweet

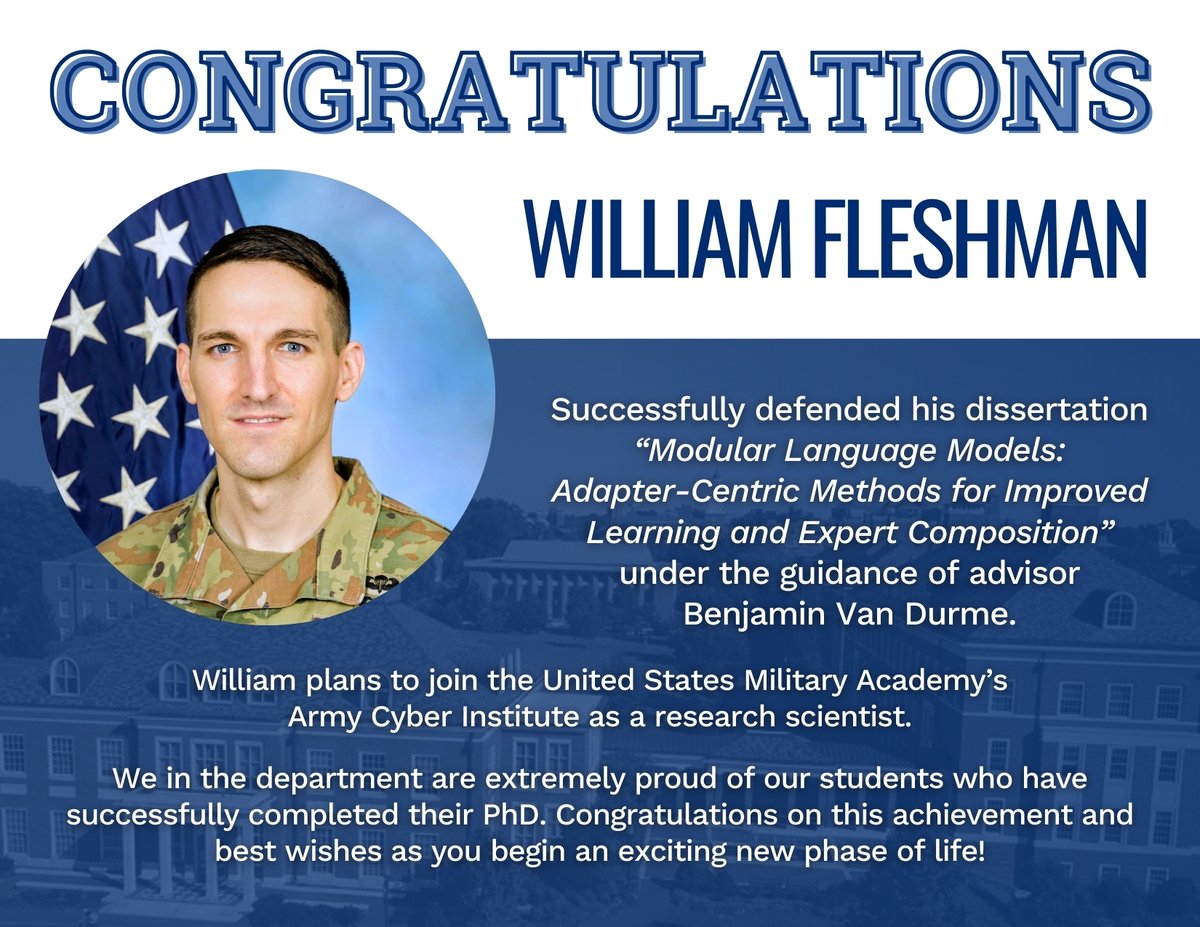

William Fleshman

337 posts

@willcfleshman

US Army & PhD student at Johns Hopkins University

Joined August 2017

153 Following · 424 Followers

William Fleshman retweeted

JHU's mmBERT has been extended from an 8k to a 32k token context length by the vLLM Semantic Router team. Cutting-edge results on 1,800+ languages, now with longer context!

huggingface.co/llm-semantic-r…

@ChromeHODLs @hillery_dan That's definitely easier; it just might not be optimal depending on your tax situation. Assuming you can capture close to both rates, swapping back and forth with T-bills would compound faster up to a 25% tax rate. Tax-free accounts, if available, are the real way to go.

@ChromeHODLs @hillery_dan Opportunity cost. If you can capture the dividend by tying up your capital for only a couple of days, then for the rest of the month that capital can be making money elsewhere.
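The opportunity-cost argument in these two replies can be made concrete with a little arithmetic. This is a minimal sketch under loudly stated assumptions: the helper names, the 1% monthly dividend, the 5% T-bill yield and the 2 locked days are all hypothetical, the ex-dividend price drop is assumed to be fully recovered, trading costs are ignored, and tax is applied to every month's gain at one flat rate. It compares holding a monthly payer all month against capturing its dividend and parking the capital in T-bills for the remaining days.

```python
# Illustrative sketch: every rate here is a made-up input, not data from the thread.


def hold_annual_return(monthly_div_yield: float, tax_rate: float = 0.0) -> float:
    """Hold the dividend payer all month and collect one dividend per month."""
    return (1 + monthly_div_yield * (1 - tax_rate)) ** 12 - 1


def capture_annual_return(monthly_div_yield: float, tbill_apy: float,
                          days_locked: int, days_in_month: int = 30,
                          tax_rate: float = 0.0) -> float:
    """Tie capital up for `days_locked` days to capture the dividend, then park it
    in T-bills for the rest of the month. Assumes (simplification) the ex-dividend
    price drop is fully recovered and ignores trading costs."""
    free_days = days_in_month - days_locked
    # Simple (non-compounded) T-bill interest for the days the capital is free
    tbill_gain = tbill_apy * free_days / 365
    monthly_gain = (monthly_div_yield + tbill_gain) * (1 - tax_rate)
    return (1 + monthly_gain) ** 12 - 1


if __name__ == "__main__":
    # Hypothetical inputs: 1% monthly dividend, 5% T-bill APY, 2 locked days
    print(f"hold:    {hold_annual_return(0.01):.2%}")
    print(f"capture: {capture_annual_return(0.01, 0.05, days_locked=2):.2%}")
```

Setting `days_locked` equal to the month length collapses the capture strategy back to plain holding, which is a quick sanity check on the formula; the tax comparison in the first reply depends on rates this sketch does not know.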

@EdwardRaffML Maybe it's you that has 0 recall and precision 🤯

I’m super thrilled and honored to be named an Amazon AI PhD Fellow 💫

Huge thanks to @AmazonScience for generously supporting our research at JHU! We’ll be advancing AI alignment in collaboration with folks at Amazon.

Rohit Prasad @RohitPrasadAI

Excited to announce @amazon's new AI PhD Fellowship Program supporting 100+ students across 9 universities like Carnegie Mellon, MIT & Stanford. Fellows will be paired with senior scientists working in related fields, plus receive financial support and AWS credits for research. Learn more: amazon.science/news/amazon-la…

William Fleshman retweeted

Summer '26 PhD research internships at Microsoft Copilot Tuning: continual learning, complex reasoning and retrieval, nl2code, and data-efficient post-training.

jobs.careers.microsoft.com/global/en/job/…

🚨 Check out the paper with @ben_vandurme for more juicy details, like how we improve SpectR and SEQR by calibrating the adapter norms!

arxiv.org/abs/2509.18093

William Fleshman retweeted

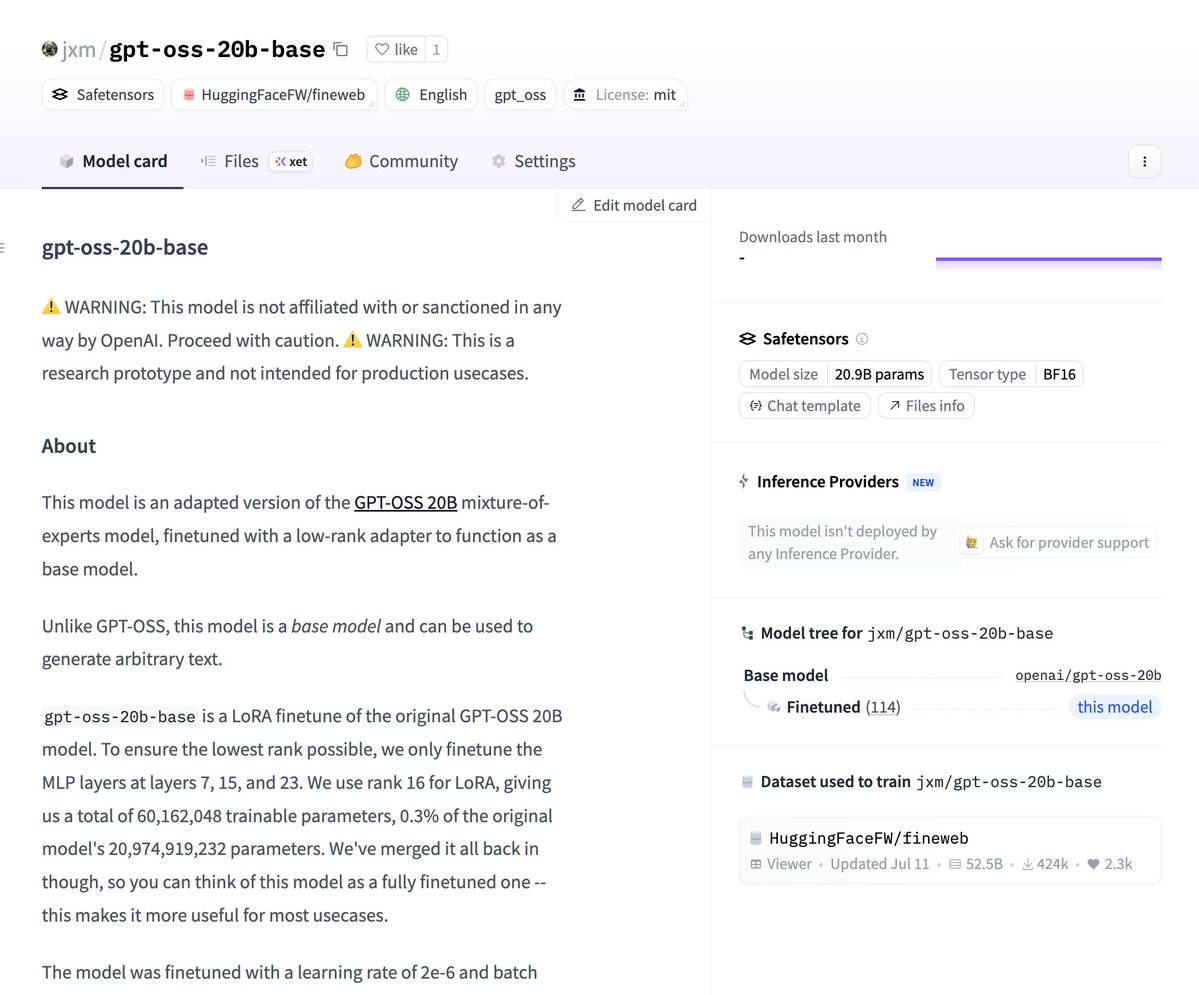

@jxmnop Cool stuff! When we did RE-Adapt (arxiv.org/abs/2405.15007) with Llama, we saw that many of the base-to-instruct weight updates are approximately low rank, but some layers were not. You could repeat your experiment with the Llama instruct models to see how close to base you actually get.
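To check the "approximately low rank" observation on your own checkpoints, one simple approach (not necessarily how RE-Adapt measured it) is to take the SVD of each layer's weight difference and count how many singular values are needed to hold, say, 90% of its energy. In this sketch the 90% threshold and the `energy_rank` / `layer_report` helpers are hypothetical, and `base` and `tuned` are assumed to be dicts of NumPy arrays keyed by parameter name, for example exported from a base and an instruct checkpoint.

```python
import numpy as np


def energy_rank(delta: np.ndarray, energy: float = 0.9) -> int:
    """Smallest k whose top-k singular values capture `energy` of the squared
    Frobenius norm of `delta` (a simple proxy for 'approximately low rank')."""
    s = np.linalg.svd(delta, compute_uv=False)
    cumulative = np.cumsum(s**2) / np.sum(s**2)
    # First index where cumulative energy reaches the threshold, turned into a count
    k = int(np.searchsorted(cumulative, energy)) + 1
    return min(k, len(s))


def layer_report(base: dict, tuned: dict, energy: float = 0.9) -> dict:
    """Effective rank of (tuned - base) for every shared 2-D weight matrix.
    `base` and `tuned` are hypothetical name -> array mappings."""
    return {name: energy_rank(tuned[name] - base[name], energy)
            for name in base
            if name in tuned and base[name].ndim == 2}
```

A layer whose report is a small fraction of its dimension behaves like a low-rank update; a layer needing roughly half its singular values does not, which is the kind of split the reply describes.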

William Fleshman retweeted

I am growing an R&D team around Copilot Tuning, a newly announced effort that supports adaptation at a customer-specific level.

Join us! jobs.careers.microsoft.com/global/en/job/…

We collaborate with a crack team of engineers and scientists who support the product, and that team is also growing!

jobs.careers.microsoft.com/global/en/job/…

Check out the paper w/ @ben_vandurme now on arXiv:

arxiv.org/abs/2507.05346

SpectR was accepted at @COLM_conf!

Our follow-up work, LoRA-Augmented Generation (LAG), combines SpectR w/ first-pass filtering of adapters using Arrow routing. LAG is significantly more efficient, enabling SpectR-like performance with much larger LoRA libraries!

William Fleshman @willcfleshman

🚨 Our latest paper is now on arXiv! 👻 (w/ @ben_vandurme) SpectR: Dynamically Composing LM Experts with Spectral Routing (1/4) 🧵
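For readers new to adapter routing, here is a rough sketch of the two-stage idea described in the LAG tweet, for one layer and one input vector: score every LoRA adapter cheaply, keep the top few, then rescore only the survivors. The first stage follows the Arrow idea of projecting the input onto each adapter's top right singular vector. The second stage is a hypothetical stand-in that uses each adapter's full spectrum; it is not SpectR's actual scoring rule or LAG's implementation, which are described in the papers. All function names and the `k_filter` / `k_final` parameters are illustrative.

```python
import numpy as np


def adapter_spectrum(A: np.ndarray, B: np.ndarray):
    """SVD of a LoRA update delta_W = B @ A (B: d_out x r, A: r x d_in).
    Keeps only the r non-trivial singular values and right singular vectors."""
    r = A.shape[0]
    _, S, Vt = np.linalg.svd(B @ A, full_matrices=False)
    return S[:r], Vt[:r]


def arrow_scores(x: np.ndarray, spectra) -> np.ndarray:
    # Arrow-style prefilter: |<v1, x>| with v1 the top right singular vector
    # (absolute value because the sign of a singular vector is arbitrary)
    return np.array([abs(Vt[0] @ x) for _, Vt in spectra])


def spectral_scores(x: np.ndarray, spectra) -> np.ndarray:
    # Hypothetical stand-in for a spectral score: ||diag(S) Vt x||,
    # which equals ||delta_W x|| because U has orthonormal columns
    return np.array([np.linalg.norm(S * (Vt @ x)) for S, Vt in spectra])


def two_stage_route(x, spectra, k_filter: int, k_final: int) -> np.ndarray:
    """Cheap filter over the whole library, then rescore only the survivors."""
    candidates = np.argsort(-arrow_scores(x, spectra))[:k_filter]
    survivors = [spectra[i] for i in candidates]
    best = np.argsort(-spectral_scores(x, survivors))[:k_final]
    return candidates[best]  # indices into the original adapter library
```

The filter reads one vector per adapter while the rescoring reads `r` of them, so in this toy version the expensive pass only ever sees `k_filter` adapters no matter how large the library grows.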

@investingidiocy Thanks for answering my question on TTU. I'm looking forward to the new series of blog posts. Cheers!