Ray

238 posts

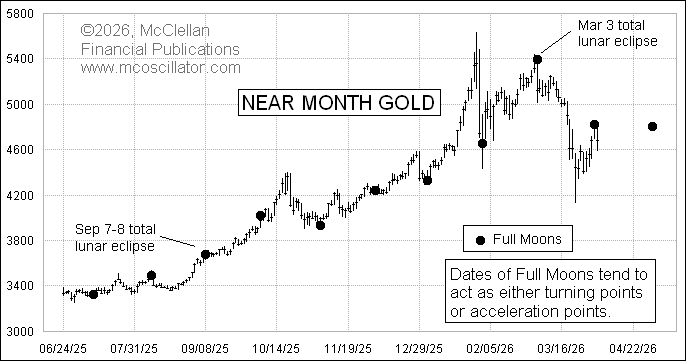

This is a chart I shared this week in my Daily Edition, noting once again that dates of full moons tend to mark turning points or acceleration points for gold prices. I tried years ago to disprove this, and I failed. It is real. But I have not figured out how to predict in advance exactly how a given full moon will manifest this behavior.

Ray retweeted

At Haneda Airport in Japan, a Chinese woman was loudly scolding a Taiwanese traveler, telling him, “Taiwan belongs to China... before you travel abroad, you need to have a clear understanding of politics first...”

After police arrived, the Taiwanese traveler explained the situation to them in Japanese, and the Chinese woman then told him: “You need to speak human language”...

MANY Chinese now feel that they are the rulers of humankind and that the whole world must obey them. Taiwanese, Japanese and other people are just their slaves.

Ray retweeted

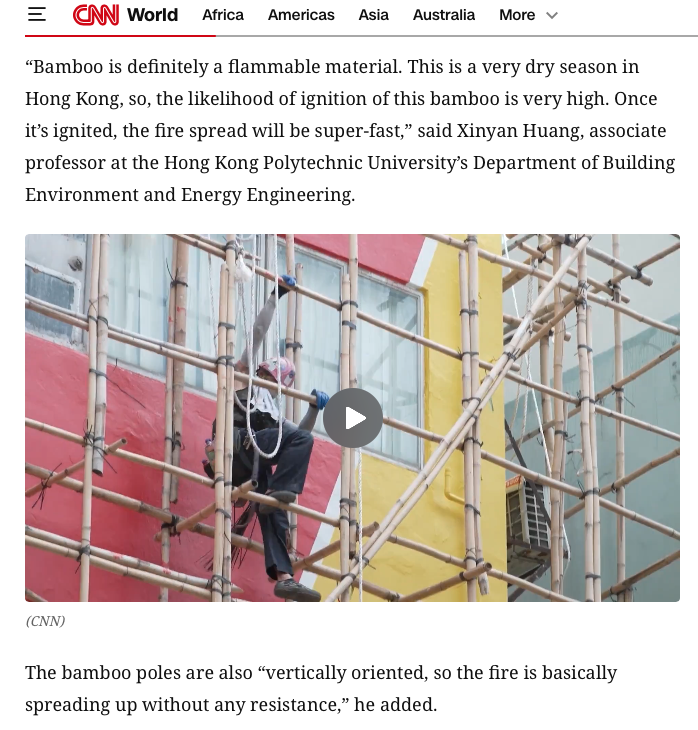

The bamboo scaffolding still stands, just FYI for those who blame it.

Joel Chan@kjoules

25 hours later

Ray retweeted

The “bamboo scaffolding caused the fire” narrative is not only factually wrong, it reflects a prejudice against in-situ craft and HK's own construction traditions. The fire started with the netting. The bamboo is still intact. We deserve better reporting. @BBCWorld

Ray retweeted

Reddit post states: "Government-funded 'Care Teams' busy taking photographs of themselves while actual volunteers do all the work of organising supplies at Tai Po after the fire"

reddit.com/r/HongKong/com…

Video: @Annoygrass via Threads.

Ray retweeted

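After years of negligent, lazy governance under John Lee Ka-chiu, the democratic politics that Hong Kong once ran on have been replaced by national security and wall-to-wall ideological propaganda

Who would have thought that doing this would really bring retribution?

The hearts of Hong Kong people must already be dead

A moment of silence for the victims

For as long as I can remember, Hong Kong has never had a fire this large. Counting the missing, the death toll this time may well reach a hundred or more

Ever since I was little I wanted to go to Hong Kong. I knew Hong Kong had the best-quality stationery, and the tastiest chocolate and plum candy

Sadly I have never been, and after the pandemic I could not go either

And I won't be going in the future

Mr. John Lee Ka-chiu

Please step down right away 😅😅😅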

BBC News 中文@bbcchinese

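[Breaking] Hong Kong Chief Executive John Lee Ka-chiu said the severe fire across multiple buildings at Wang Fuk Court in Tai Po has left 36 people dead, 279 missing, and 7 in critical condition.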

Ray retweeted

Google just dropped "Attention is all you need (V2)"

This paper could solve AI's biggest problem:

Catastrophic forgetting.

When AI models learn something new, they tend to forget what they previously learned. Humans don't work this way, and now Google Research has a solution.

Nested Learning.

This is a new machine learning paradigm that treats models as a system of interconnected optimization problems running at different speeds - just like how our brain processes information.

Here's why this matters:

LLMs don't learn from experiences; they remain limited to what they learned during training. They can't learn or improve over time without losing previous knowledge.

Nested Learning changes this by viewing the model's architecture and training algorithm as the same thing - just different "levels" of optimization.

The paper introduces Hope, a proof-of-concept architecture that demonstrates this approach:

↳ Hope outperforms modern recurrent models on language modeling tasks

↳ It handles long-context memory better than state-of-the-art models

↳ It achieves this through "continuum memory systems" that update at different frequencies

This is similar to how our brain manages short-term and long-term memory simultaneously.
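To make "memory that updates at different frequencies" concrete, here is a toy sketch in Python. It is not the Hope architecture or the paper's algorithm; the names `MemoryLevel` and `train` are hypothetical, and the setup (a single weight split into a fast part and a slow part, fit to y = 3x) is an assumption chosen only for illustration. The fast level is written on every step, while the slow level buffers gradients and is written once every 8 steps.

```python
from dataclasses import dataclass


@dataclass
class MemoryLevel:
    """One memory block written only every `period` steps (hypothetical name)."""
    period: int
    lr: float
    value: float = 0.0
    grad_buffer: float = 0.0  # gradients accumulated since the last write
    num_updates: int = 0


def train(levels, data, steps):
    """Fit y ~ (sum of level values) * x, each level updating at its own frequency."""
    for step in range(1, steps + 1):
        x, y = data[(step - 1) % len(data)]
        w = sum(level.value for level in levels)
        error = w * x - y
        grad = 2.0 * error * x  # derivative of squared error with respect to w
        for level in levels:
            level.grad_buffer += grad
            if step % level.period == 0:
                # Apply the mean of the buffered gradients, then clear the buffer.
                level.value -= level.lr * level.grad_buffer / level.period
                level.grad_buffer = 0.0
                level.num_updates += 1
    return levels


# Assumed toy setup: a fast level (every step) and a slow level (every 8 steps).
levels = [MemoryLevel(period=1, lr=0.05), MemoryLevel(period=8, lr=0.05)]
data = [(1.0, 3.0), (2.0, 6.0), (-1.0, -3.0)]  # samples of y = 3x
train(levels, data, steps=400)
fast, slow = levels
print(fast.num_updates, slow.num_updates, fast.value + slow.value)
```

Over 400 steps the fast level is written 400 times and the slow level 50 times, yet together they still fit the target weight. The real system described in the paper is far richer; this only shows the scheduling idea.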

We might finally be closing the gap between AI and the human brain's ability to continually learn.

I've shared the link to the paper in the next tweet!
