Kimtako

48 posts

Kimtako retweeted

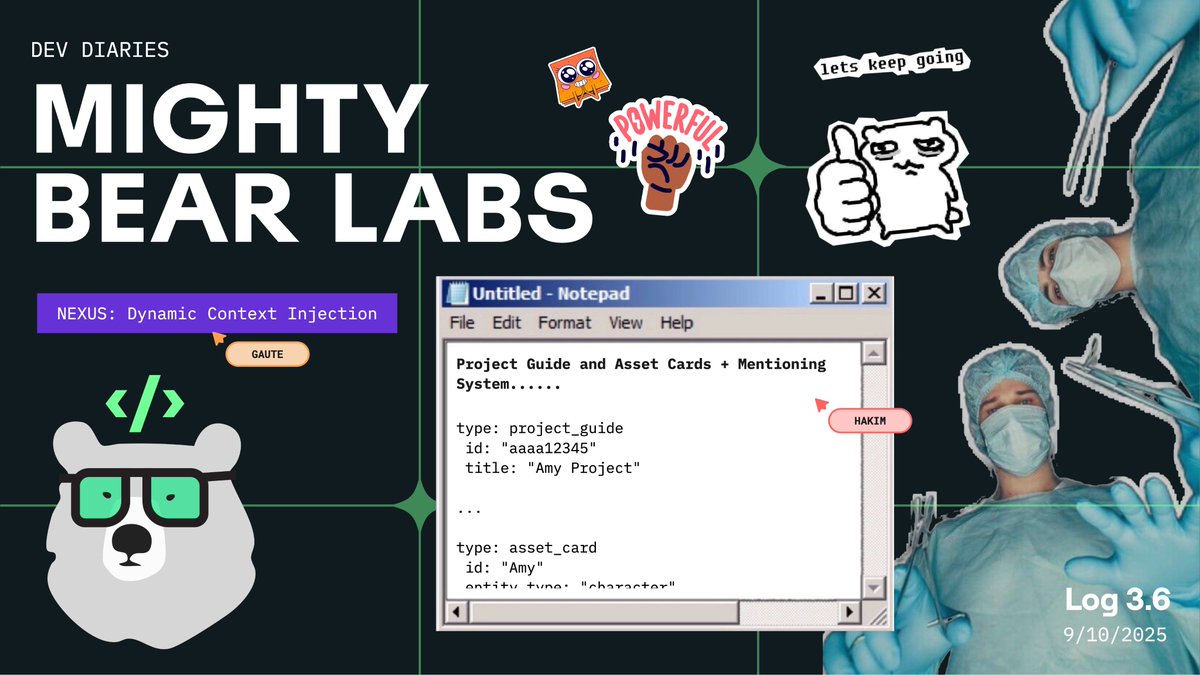

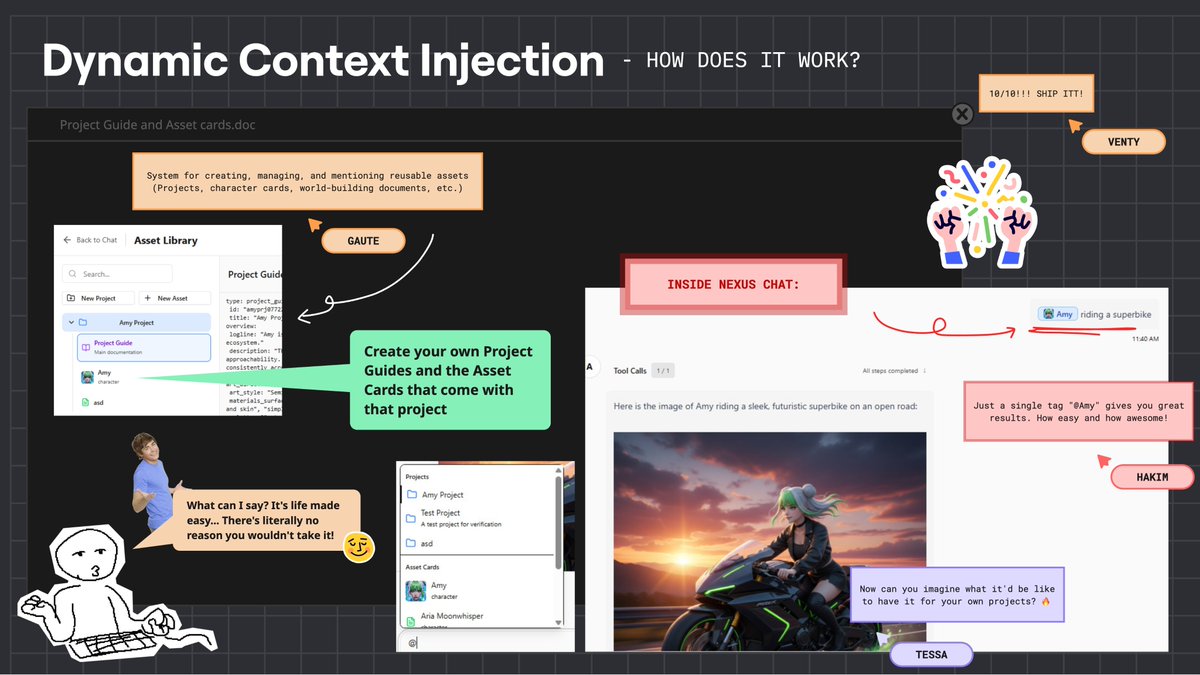

[MightyBearLabs]$ log --version 3.6

Quick Bite: Dynamic context injection lands in Nexus. Tag a (@)Project or (@)Subject and Nexus auto-loads the right guides/assets into the prompt. Nexus → your AI-IDE for creative production.

Brought to you by @kimtako44!

About Us: 20+ years shipping games & apps. Industry veterans with Apple & Disney credits, now pushing frontier AI.

🎯 Goal: Make creating 100% on-model, on-brand content as easy as typing “(@)Amy riding a superbike”.

Problem: Creative prompts drift without enough context, i.e. without the right art bibles, character sheets, and world docs wired in. Too much copy-paste and human glue, especially as production output scales up.

❌ Didn’t Work: Static system prompts + manual paste of docs, prompts, ref images; brittle, slow, and inconsistent across projects.

✅ Worked: User-created Project Guides and Asset Cards that can be mentioned in chat, like (@)Project, (@)Character, (@)World. Mentions inject scoped context (style, do/don’ts, refs) into the tool call at runtime.

Result: One tag → consistent outputs; less prompt wrangling, faster iteration; teams can spin up new IP spaces with their own controlled vocab.
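A minimal Python sketch of how mention-driven context injection could work, assuming mentions are typed as plain `@Name` in the chat. The `Card` fields, the `inject_context` helper, and the sample card values are hypothetical; the post does not describe Nexus's actual card schema or how context is formatted in the tool call. It illustrates the three takeaways: a card is loaded only when mentioned (dynamic), each card carries its own style/do/don't/ref block (scoped), and several mentions stack in order (composable).

```python
import re
from dataclasses import dataclass, field


# Hypothetical card schema; Nexus's real Project Guide / Asset Card format is not public.
@dataclass
class Card:
    name: str
    kind: str  # e.g. "Project", "Character", "World"
    style: str = ""
    dos: list = field(default_factory=list)
    donts: list = field(default_factory=list)
    refs: list = field(default_factory=list)

    def render(self) -> str:
        """Render this card as a scoped context block."""
        lines = [f"[{self.kind}: {self.name}]"]
        if self.style:
            lines.append(f"Style: {self.style}")
        lines += [f"Do: {d}" for d in self.dos]
        lines += [f"Don't: {d}" for d in self.donts]
        lines += [f"Ref: {r}" for r in self.refs]
        return "\n".join(lines)


MENTION = re.compile(r"@(\w+)")


def inject_context(user_prompt: str, cards: dict) -> str:
    """Prepend the context of every known @mention, in order, each card at most once."""
    names = []
    for name in MENTION.findall(user_prompt):
        if name in cards and name not in names:  # unknown tags are ignored
            names.append(name)
    if not names:
        return user_prompt
    context = "\n\n".join(cards[n].render() for n in names)
    # Drop the "@" from resolved mentions so the request reads naturally.
    request = MENTION.sub(
        lambda m: m.group(1) if m.group(1) in cards else m.group(0), user_prompt
    )
    return f"{context}\n\nRequest: {request}"


# Made-up card values for illustration only.
cards = {
    "Amy": Card("Amy", "Character", style="hypothetical style notes",
                donts=["off-model proportions"], refs=["amy_sheet.png"]),
}
print(inject_context("@Amy riding a superbike", cards))
```

Unknown mentions are left untouched rather than raising, so a typo degrades to the plain prompt instead of breaking the request; a production system might prefer to ask the user which card was meant.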

💡 Takeaways:

• Context should be dynamic, scoped, and composable.

• Mentions are the UX primitive for creative IDEs.

• Project/Asset cards become source-of-truth for brand/IP.

Closing: This unlocks true “type-driven production” and sets the foundation for shortcuts, chained tools, and team workflows—all inside Nexus.

Like this content? Follow me and @MightyBearLabs for production-level behind-the-scenes, AI deep dives, process notes, prompt recipes, and reproducible workflows.

Kimtako retweeted

[MightyBearLabs]$ log --version 1.5

Let's talk a little about the secret sauce behind Nexus: deep engineering know-how paired with artists' prompt engineering.

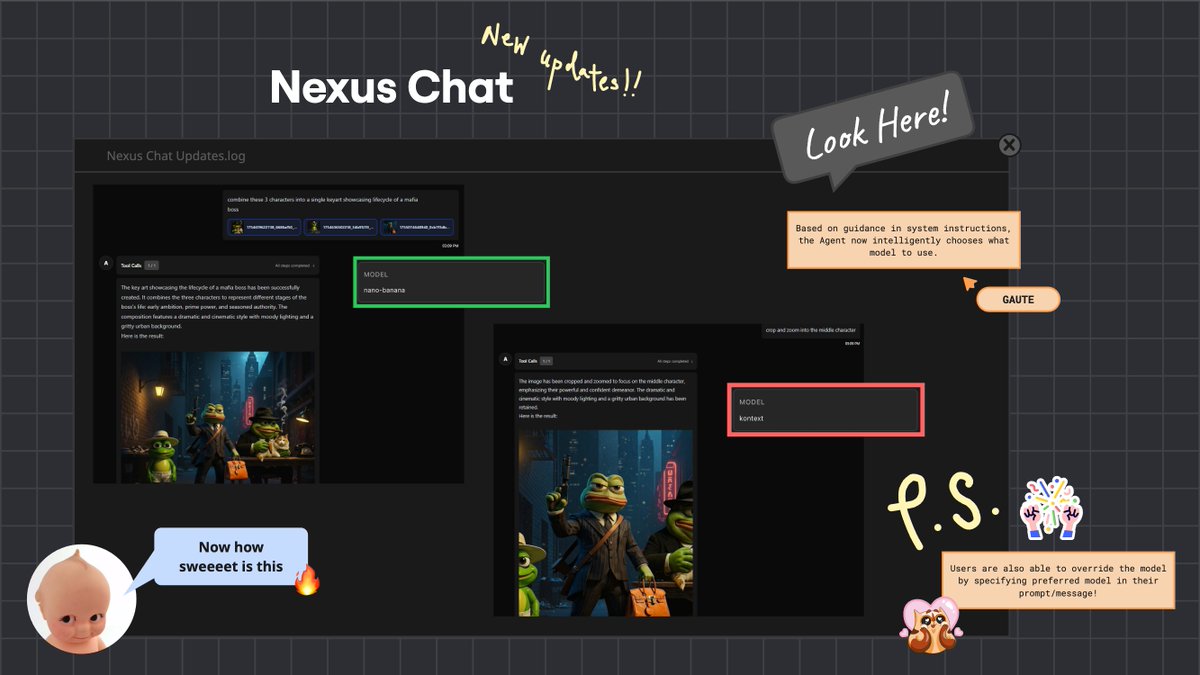

Nexus, our Creative Production Agent, just got even more powerful today!

We’ve just shipped intelligent model selection: within a given "tool", Nexus now chooses the right model for the job. The first use case lands in the editImage tool, where Nexus will pick:

• Kontext for precision work (crop, zoom, aspect ratios)

• NanoBanana for absolutely everything else (for now)

Early results are strong: even though the two models use different schemas, Nexus routes cleanly because the parameter deltas are explicitly documented in the tool description.

As we add more, we’ll likely split by tool when schemas diverge to keep the interface sane and predictable.

Additionally, and this is the power of an agentic system, as users we can step in and override the decision by naming our preferred model, either in the initial prompt or in any later turn of the conversation with Nexus.
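A rough Python sketch of the editImage routing plus the user override. In Nexus the model choice is made by the agent's LLM reading the tool description; the keyword rule in `choose_model` is only a stand-in for that judgment so the example runs. The model identifiers (`kontext`, `nanobanana`), the parameter sets, the description text, and the helper names are all hypothetical; the post only says the parameter deltas are documented in the tool description.

```python
# Hypothetical editImage tool description. Per the post, the agent's LLM routes by reading
# a description like this, where the parameter deltas between models are spelled out.
EDIT_IMAGE_DESCRIPTION = """editImage: edit an input image.
model="kontext": precision work (crop, zoom, aspect ratio). Params: image, prompt, aspect_ratio.
model="nanobanana": everything else. Params: image, prompt. No aspect_ratio."""

# Hypothetical per-model parameter sets matching the deltas documented above.
ALLOWED_PARAMS = {
    "kontext": {"image", "prompt", "aspect_ratio"},
    "nanobanana": {"image", "prompt"},
}
PRECISION_WORDS = ("crop", "zoom", "aspect ratio", "reframe")


def choose_model(request: str) -> str:
    text = request.lower()
    # 1. A model named by the user always wins (the override described in the post).
    squashed = text.replace(" ", "")
    for model in ALLOWED_PARAMS:
        if model in squashed:
            return model
    # 2. Stand-in for the LLM's judgment: precision keywords go to Kontext.
    if any(word in text for word in PRECISION_WORDS):
        return "kontext"
    # 3. Everything else goes to NanoBanana (for now).
    return "nanobanana"


def build_call(request: str, **params) -> dict:
    """Pick a model, then reject parameters that model's schema doesn't accept."""
    model = choose_model(request)
    unknown = set(params) - ALLOWED_PARAMS[model]
    if unknown:
        raise ValueError(f"{model} does not accept: {sorted(unknown)}")
    return {"tool": "editImage", "model": model, **params}


print(build_call("crop to 16:9", image="a.png", aspect_ratio="16:9")["model"])  # kontext
print(build_call("Use Nano Banana to crop it", image="a.png")["model"])  # nanobanana
```

Validating arguments against the chosen model's parameter set is one way to keep two schemas behind a single tool predictable; once the schemas diverge further, splitting into separate tools, as the post anticipates, removes the need for this check.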

The secret sauce: building on top of strong technical capabilities, artists lead the prompt engineering.

• @kimtako44 is dialing in guidance prompts for each tool and the chaining strategies between them.

• @wemmwem is focused on model-specific prompt enhancers that translate intent into production-ready inputs.

• Gaute is architecting the agent, driving the technical implementation, and shipping the initial guidance, while our artists iteratively expand capabilities through deep tool use, chaining logic, and workflow extraction.

Nexus is already punching above its weight with a small toolset. This is the foundation for a production-grade creative partner that understands craft, not just commands. If you’re curious about where creative tooling is actually heading (not demo-ware), watch this space. We use Nexus daily, as we build it.

What’s next

I am not pretending it’s magic: Nexus isn’t yet great at inferring complex workflows from vague, one-line asks. Today, you still get better results if you know what it can do and how to chain requests. As such, it serves the Art team really well.

But the goal is for anyone on the team to just use Nexus.

So we’re in the process of implementing strategies for rapid context building so the agent can operate with far less hand-holding.

The first pillar is Contextual Elicitation, a deliberate tactic to clarify intent fast via targeted questions.

Paired with:

• smart defaults and assumptions about common creative tasks,

• pre-fed known context (project brand kits, image references, past decisions...),

• and learning from prior interactions,

…Contextual Elicitation increases Nexus’s success rate and autonomy tenfold. Tighter outputs require far fewer back-and-forths, leading to faster production.
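A minimal sketch of Contextual Elicitation as a slot-filling loop, assuming a creative request can be reduced to a few named slots. Each slot is filled from the request first, then pre-fed known context, then prior interactions, then smart defaults, and only what is still missing becomes a targeted question. The slot names, the priority order, and the default values are assumptions for illustration, not Nexus's actual logic.

```python
from dataclasses import dataclass, field

# Hypothetical slots for a creative request; Nexus's real ones are not described.
REQUIRED_SLOTS = ("project", "subject", "aspect_ratio", "style")

# Smart defaults for common tasks (made-up values).
DEFAULTS = {"aspect_ratio": "1:1"}


@dataclass
class Session:
    known_context: dict = field(default_factory=dict)  # pre-fed: brand kits, refs, past decisions
    history: dict = field(default_factory=dict)  # learned from prior interactions


def elicit(request_slots: dict, session: Session):
    """Resolve each slot from the first source that has it; ask only about the rest."""
    resolved, questions = {}, []
    sources = (request_slots, session.known_context, session.history, DEFAULTS)
    for slot in REQUIRED_SLOTS:
        for source in sources:
            if slot in source:
                resolved[slot] = source[slot]
                break
        else:  # no source had this slot
            questions.append(f"Which {slot.replace('_', ' ')} should I use?")
    return resolved, questions


session = Session(known_context={"project": "DemoProject"}, history={"style": "pixel art"})
resolved, questions = elicit({"subject": "boss splash screen"}, session)
print(resolved, questions)
```

Every slot filled from context, history, or defaults is one fewer question sent back to the user, which is where the reduction in back-and-forths comes from.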

Next up: extending intelligent model selection beyond editImage, standardizing or splitting tools where schemas clash, and evolving chaining guidance so multi-step workflows feel like one ask.

We’ll share more soon, especially about the process artists are using to work out which system-prompt changes improve Nexus’s consistency and reliability, a task normally reserved for AI engineers.

Ask me anything!

And follow @MightyBearLabs for more insights into what we're building.

Kimtako retweeted

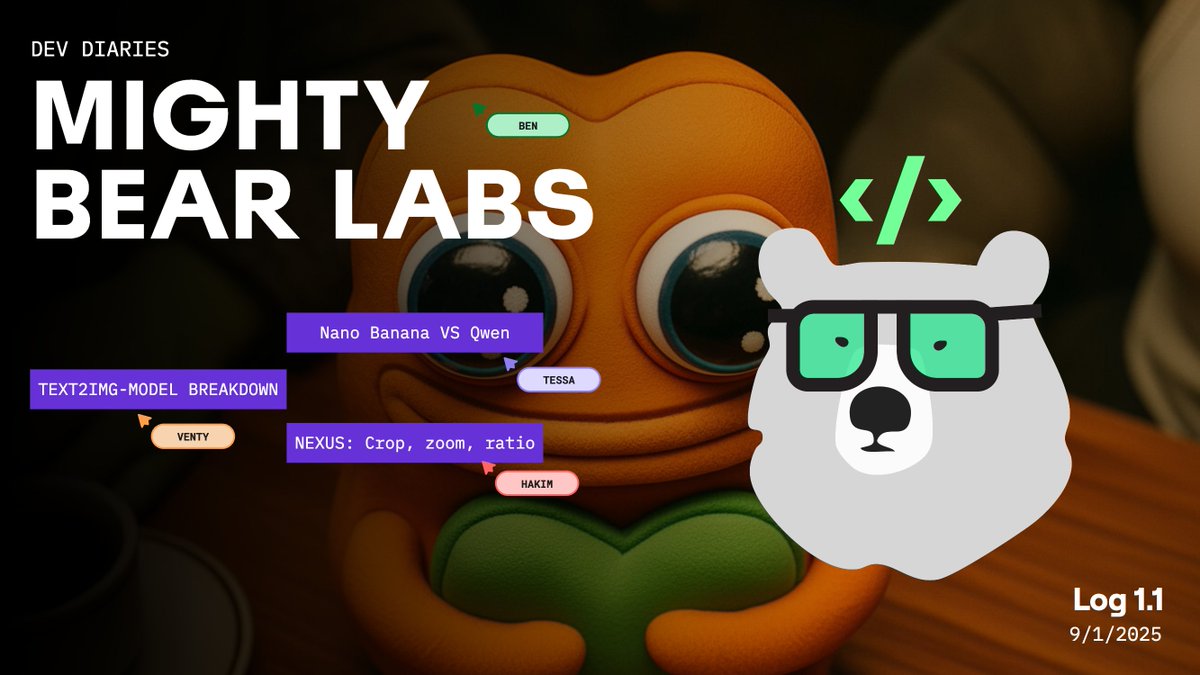

Very excited to announce Mighty Bear Labs, our brand-new Dev Diaries initiative at the studio (mightynet.tv/log11)! It’s your backstage pass to our latest experiments and breakthroughs. In our first edition, we dive into some txt2img model evaluations and Nano Banana vs. Qwen for image editing, and share an update on Nexus, our powerful in-house creative production agent. We’re testing the format, so expect more to come!

Kimtako retweeted

[MightyBearLabs]$ log --version 1.2

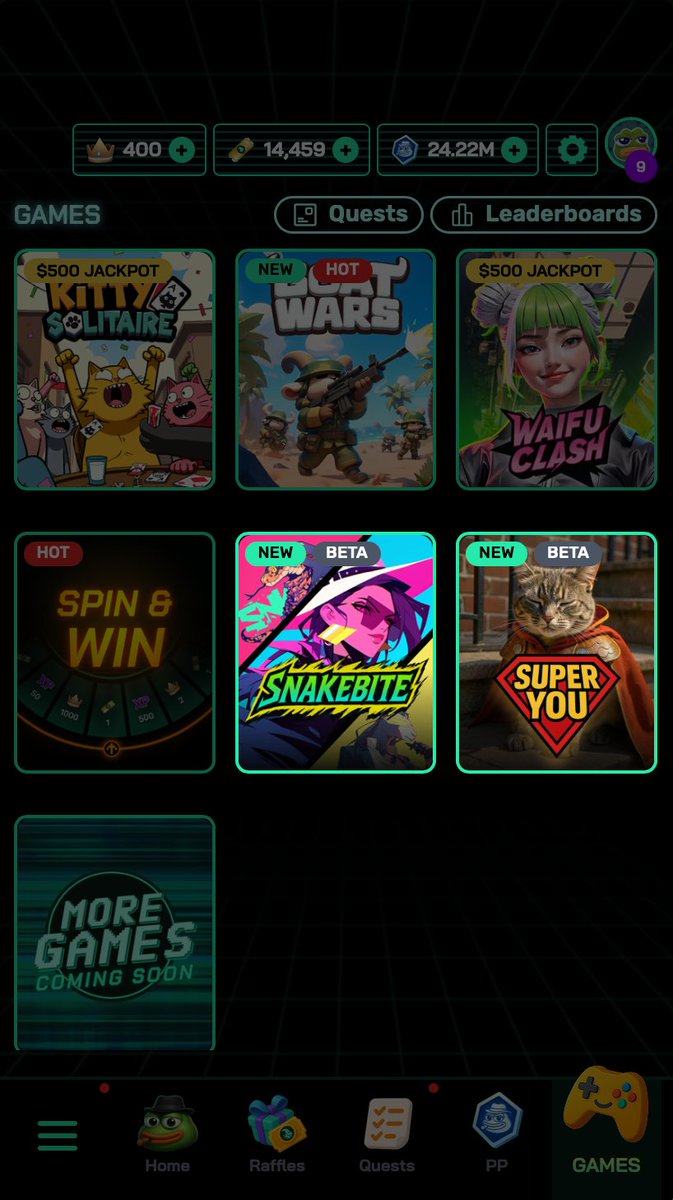

We’ve been cooking on the VibeOps side. We’re at a stage where we’re building and deploying all sorts of apps, games, and in-chat experiences, mostly done by non-technical individuals.

@kimtako44 (Artist) made a Telegram Raffle bot and SnakeBite, a turn-based fighting game with rogue-like elements.

@Zh14_O (Artist) created a really cool photo app called SuperYou, where you can turn ANYTHING into a superhero. Fun fact: SuperYou runs on the same infrastructure as Nexus, our Creative agent.

Both can be found in live beta on the @playgoatgaming app!

Then, big update this week: the team can now vibecode analytics support, from basic metrics (users, sessions, etc.) to deeper, complex event tracking (total boss fights, quest completion, win rate, and more).
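To make the difference between basic metrics and deeper event tracking concrete, here is an in-memory Python sketch. The post doesn't name the analytics backend or event schema, so the `Event` shape, the `summarize` helper, and the event names (`boss_fight_end`, `quest_complete`, the `won` property) are hypothetical. Users and sessions fall out of any event stream, while boss fights, quest completions, and win rate depend on the game emitting specific, named events.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    user_id: str
    session_id: str
    name: str  # hypothetical event names, e.g. "boss_fight_end", "quest_complete"
    props: dict = field(default_factory=dict)  # e.g. {"won": True}


def summarize(events: list) -> dict:
    """Basic metrics (users, sessions) plus metrics derived from named game events."""
    fights = [e for e in events if e.name == "boss_fight_end"]
    wins = sum(1 for e in fights if e.props.get("won"))
    return {
        "users": len({e.user_id for e in events}),
        "sessions": len({e.session_id for e in events}),
        "boss_fights": len(fights),
        "quests_completed": sum(1 for e in events if e.name == "quest_complete"),
        "win_rate": wins / len(fights) if fights else 0.0,
    }


events = [
    Event("u1", "s1", "boss_fight_end", {"won": True}),
    Event("u1", "s1", "boss_fight_end", {"won": False}),
    Event("u2", "s2", "quest_complete"),
]
print(summarize(events))
# {'users': 2, 'sessions': 2, 'boss_fights': 2, 'quests_completed': 1, 'win_rate': 0.5}
```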

Tom leads the development of our VibeOps stack, and it involves the entire team: engineers build the systems, while non-technical folks build and deploy the experiences.

And, big bonus: all vibecoded (or not) experiences are about to natively integrate with Amy for distribution and engagement!

print("end of log")

Kimtako retweeted

IT’S TIME! 🔥

Welcome to the future of AI entertainment.

AlphaGOATs have arrived 🐐

Secure yours before they’re gone 👊

alphagoats.ai

Kimtako retweeted

BIG NEWS 📢

We’ve secured $15M in total funding with our latest $4M strategic round led by @TON_Ventures, @Karatage_, @ambergroup_io and @BitscaleCapital 🤝

With this, we’re accelerating the development of hundreds of games, launching our $GG token, and integrating AlphaGOATs to deliver seamless, AI-driven gaming experiences to millions of players 🎮

Kimtako retweeted

What if you could own a gaming champion that competes, creates, and earns for you 24/7?

Introducing AlphaGOATs—AI agents that redefine the future of gaming 🎮

Limited spots. Register NOW 👇

alphagoats.ai

Kimtako retweeted

Godammmm the @playgoatgaming genesis NFTs are sexi AFFF

prob one of the best-looking collections I've seen this year 🔥

been collecting $GOAT points with da @socialsrising fam and looking to sweep more on any dips kekekeke

Kimtako retweeted

I haven't dyor on these, but they look very nostalgic cool.

What are the holder benefits?

Please @playgoatgaming shill in my comments
