Mighty Bear Labs

@MightyBearLabs

Building in the open. Dev Diaries from an AI-native studio (@wearemightyai) Experiments, fails and breakthroughs.

I have to praise the Higgsfield marketing team's attention to detail. Well done.

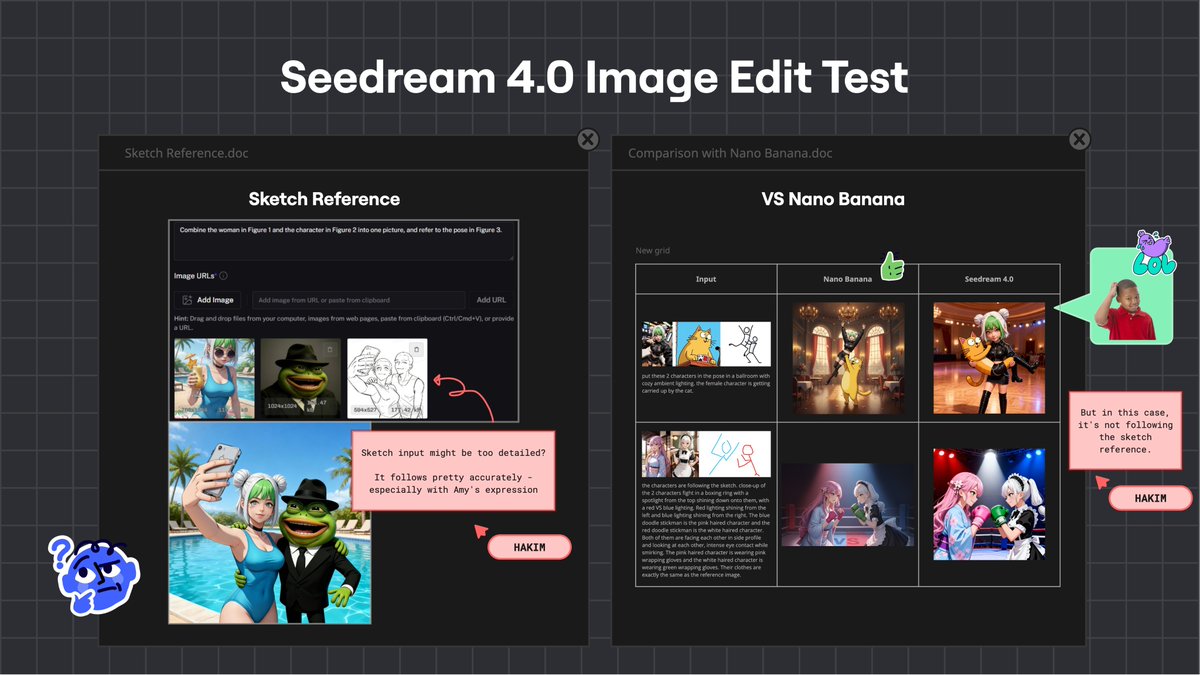

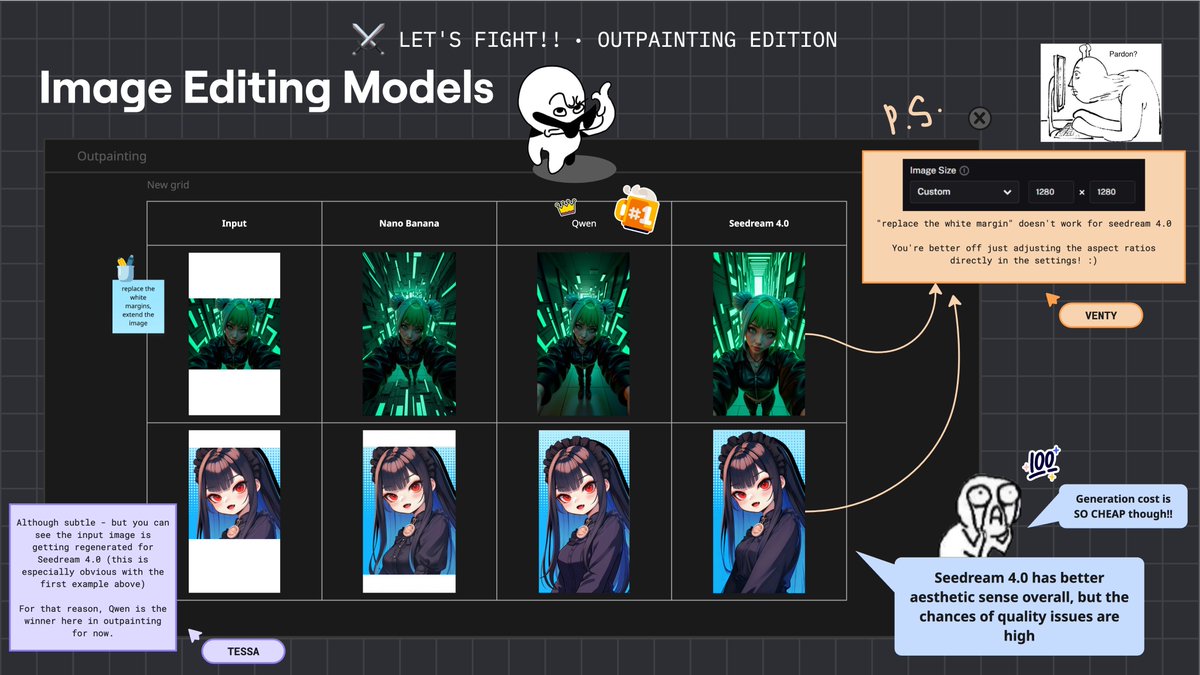

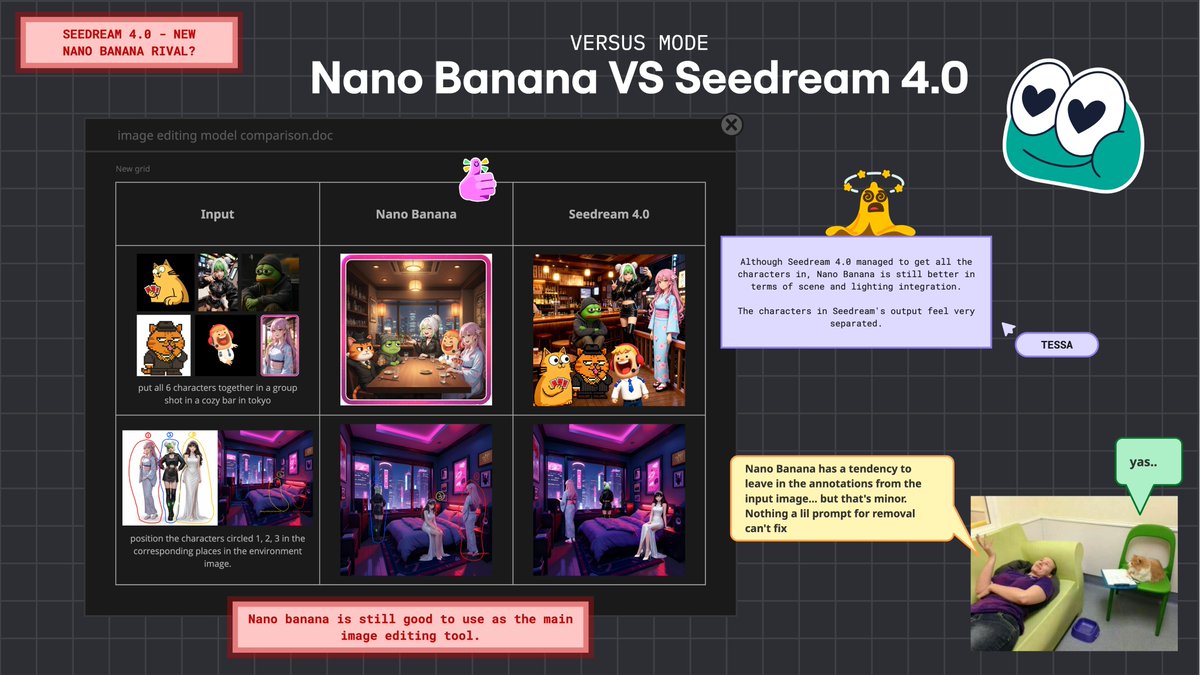

[MightyBearLabs]$ log –version 3.3

Quick Bite: Image restyle shootout across Nano Banana, Kontext, Qwen, Seedream 4.0. Brought to you by @wemmwem! Detailed eval here: miro.com/app/board/uXjV…

About Us: 20+ years shipping games & apps. Industry veterans with Apple & Disney credits, now pushing frontier AI.

Goal: Find the most reliable model for controlled “style swap” edits from a clean photo/input.

❌ What didn’t work:
• Nano Banana: inconsistent and off-model for this use case.
• Seedream 4.0: often follows prompts too literally (drifts into spoofed IP vibes).

✅ What worked:
• Qwen: most consistent identity + scene preservation; best overall results.
• Kontext: “good enough” backup. It holds composition, with occasional color/style drift.

✨ Results:
• Qwen wins both tests (cat→Ghibli, portrait→Simpsons). Kontext is second. Nano Banana benched.

💡 Takeaways:
• For restyles where composition must hold, start with Qwen → fallback Kontext.
• Keep prompts tight; avoid brand/IP cues that Seedream over-literalizes.
• Next: stress-test on faces, hands, and busier scenes; measure pass rate at batch scale.

That’s it: quick, sharp, and reproducible. What should we test next?

Like this content? Follow me and @MightyBearLabs for production-level behind-the-scenes, AI deep dives, process notes, prompt recipes, and reproducible workflows.
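
For anyone who wants to try the "start with Qwen → fallback Kontext" takeaway and the batch pass-rate idea, here is a rough Python sketch. It is not our actual pipeline: the function names, the `Restyler` signature, and the stub models are all hypothetical placeholders, and a real setup would swap the stubs for calls to whichever model endpoints you use plus your own quality check.

```python
from typing import Callable, Iterable, List, Optional, Tuple

# Hypothetical signature: (image_path, prompt) -> output path, or None when
# the edit fails whatever quality check you apply (identity, composition...).
Restyler = Callable[[str, str], Optional[str]]


def restyle_with_fallback(
    image: str, prompt: str, models: List[Tuple[str, Restyler]]
) -> Tuple[Optional[str], Optional[str]]:
    """Try each model in order; return (model_name, output) for the first success."""
    for name, restyler in models:
        output = restyler(image, prompt)
        if output is not None:
            return name, output
    return None, None  # every model in the chain failed


def pass_rate(
    images: Iterable[str], prompt: str, models: List[Tuple[str, Restyler]]
) -> float:
    """Fraction of images for which at least one model produced a usable edit."""
    batch = list(images)
    if not batch:
        return 0.0
    passed = sum(
        1 for img in batch if restyle_with_fallback(img, prompt, models)[1] is not None
    )
    return passed / len(batch)


# Stub models for illustration only; they do not call any real service.
def fake_qwen(image: str, prompt: str) -> Optional[str]:
    return None if "busy" in image else f"qwen:{image}"


def fake_kontext(image: str, prompt: str) -> Optional[str]:
    return None if "hands" in image else f"kontext:{image}"


CHAIN = [("qwen", fake_qwen), ("kontext", fake_kontext)]
```

Calling `pass_rate(batch, prompt, CHAIN)` on a folder of inputs gives a single number to compare across model orderings, which is the kind of batch-scale measurement the "Next" bullet has in mind.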