gptape yeti

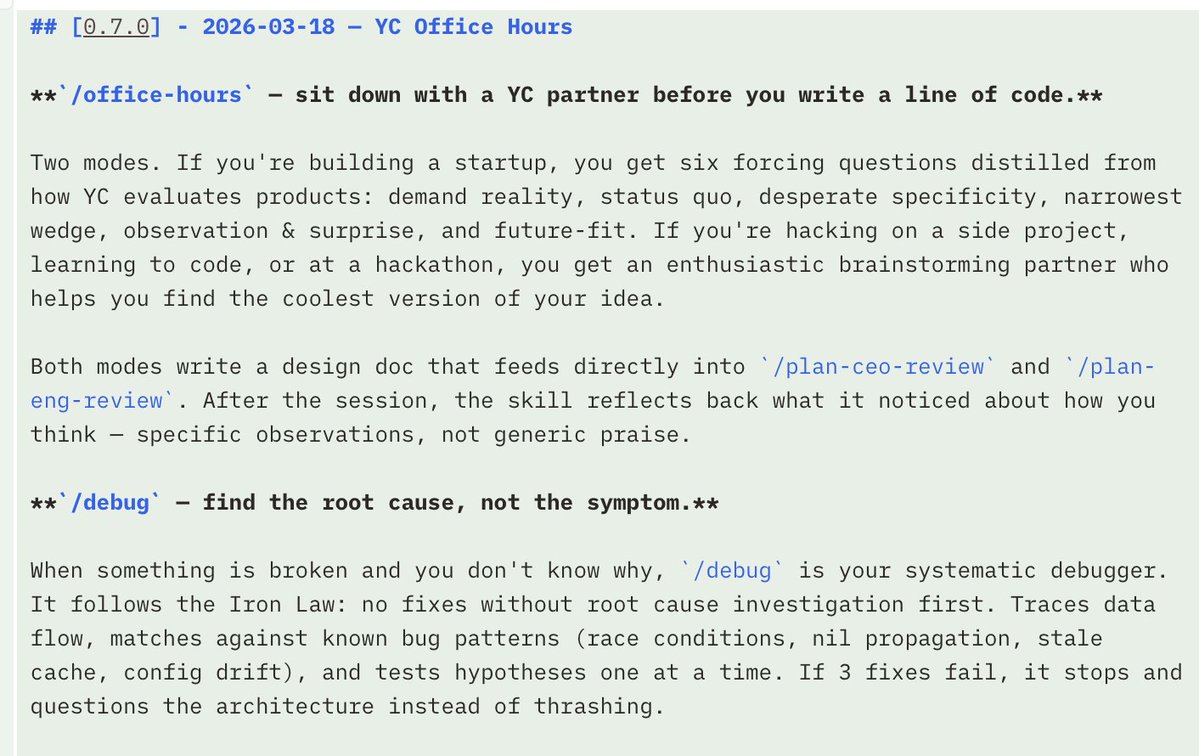

My first interview w @sulaimanghori, Member of Technical Staff @xAI.
0:41 WTF is happening at xAI
1:46 Predicting future bottlenecks
3:05 Shredding conventional timelines
4:23 Experience joining xAI
9:23 Bootstrapping off the Tesla network
11:59 What is Macrohard
13:14 How Elon deals w fires
16:30 What it’s like working at xAI
20:33 Cybertruck bet with Elon
21:12 Using 80 mobile generators + battery packs to balance load at their data centers
22:45 How they built Colossus in 122 days
23:35 Work backwards & figure out the highest leverage thing you can be doing
25:51 How xAI hires
30:27 Challenging requirements
32:46 Experimentation
34:55 How Elon recalibrated his timeline estimates
39:15 AI engineers vs AI researchers
40:36 No one tells me ‘no’
42:09 Everyone’s an engineer
44:06 Why fuzziness between teams is an advantage
48:25 Testing human emulators as employees
50:00 Biggest blunders
53:23 What a meeting w Elon is like
54:22 How Elon gives feedback
56:44 Figuring out ‘what is truth’ for Grokipedia
59:21 What happens when Elon sees wrong Grok outputs on X
1:00:08 What a surge feels like & operating in xAI’s war room
1:02:53 Making fidget spinners & 3D printers in his bedroom
1:08:48 Creating a liquid fuel rocket engine

🚀 DeepSeek-OCR, the new frontier of OCR from @deepseek_ai, exploring optical context compression for LLMs, is running blazingly fast on vLLM ⚡ (~2500 tokens/s on A100-40G), powered by vllm==0.8.5 for day-0 model support.
🧠 Compresses visual contexts up to 20× while keeping 97% OCR accuracy at <10×.
📄 Outperforms GOT-OCR2.0 & MinerU2.0 on OmniDocBench using fewer vision tokens.
🤝 The vLLM team is working with DeepSeek to bring official DeepSeek-OCR support into the next vLLM release, making multimodal inference even faster and easier to scale.
🔗 github.com/deepseek-ai/De…
#vLLM #DeepSeek #OCR #LLM #VisionAI #DeepLearning
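For a rough sense of what the 10× and 20× figures above mean, here is a minimal sketch. It assumes the compression ratio is the number of text tokens divided by the number of vision tokens that represent them; the post does not spell out the definition, and the function name and token counts are made up for illustration.

```python
def compression_ratio(text_tokens: int, vision_tokens: int) -> float:
    """Text tokens divided by the vision tokens standing in for them.

    Assumed definition for illustration; not taken from the post.
    """
    if vision_tokens <= 0:
        raise ValueError("vision_tokens must be positive")
    return text_tokens / vision_tokens


# Hypothetical page: 1000 text tokens rendered as 100 vision tokens.
ratio = compression_ratio(1000, 100)
print(f"{ratio:.1f}x")  # 10.0x
```

Under this assumed definition, the same 1000-token page would need only 50 vision tokens to reach the 20× end of the range.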

Google open sourcing an energy-efficient AI accelerator (NPU) was not on my bingo card.
Bonus points: It has a 32-bit RISC-V ISA