Prince Canuma (@Prince_Canuma)

mlx-vlm v0.5.0 is here 🚀

This is the largest mlx-vlm release ever 🙌🏽

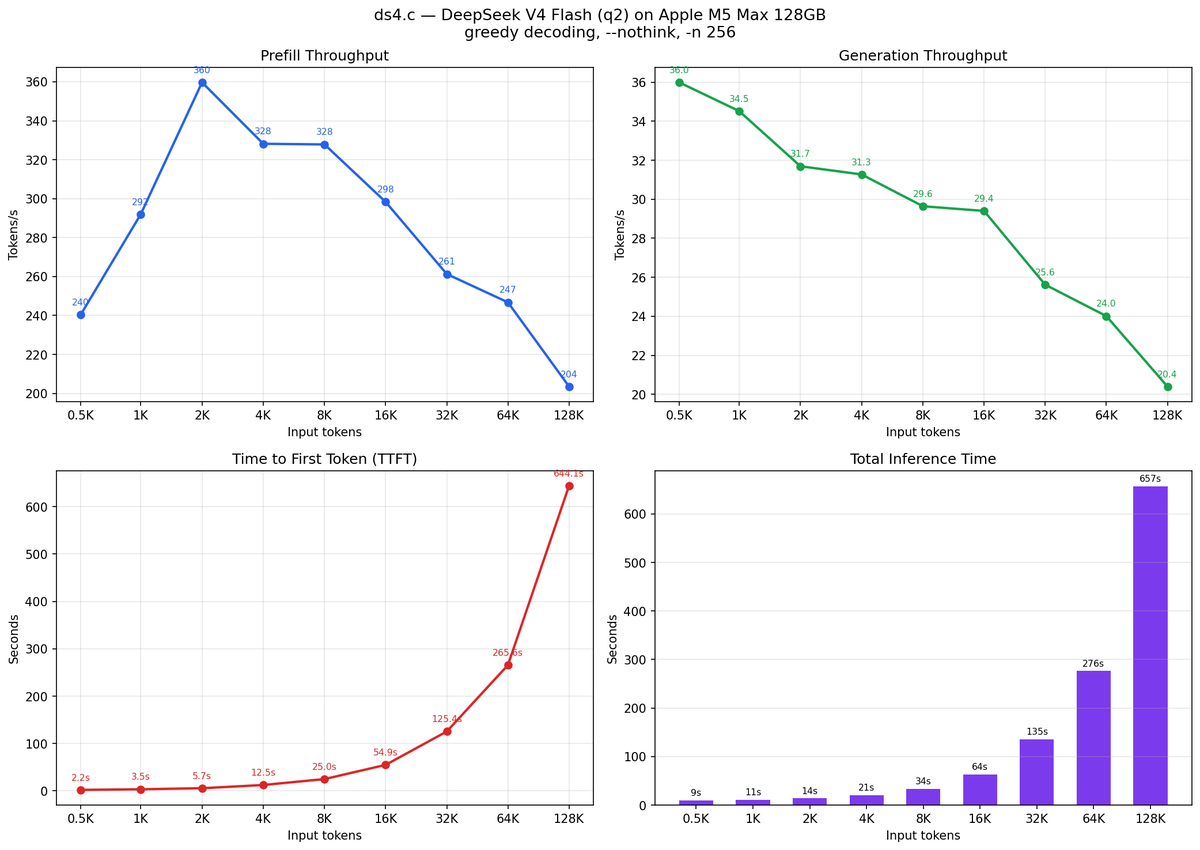

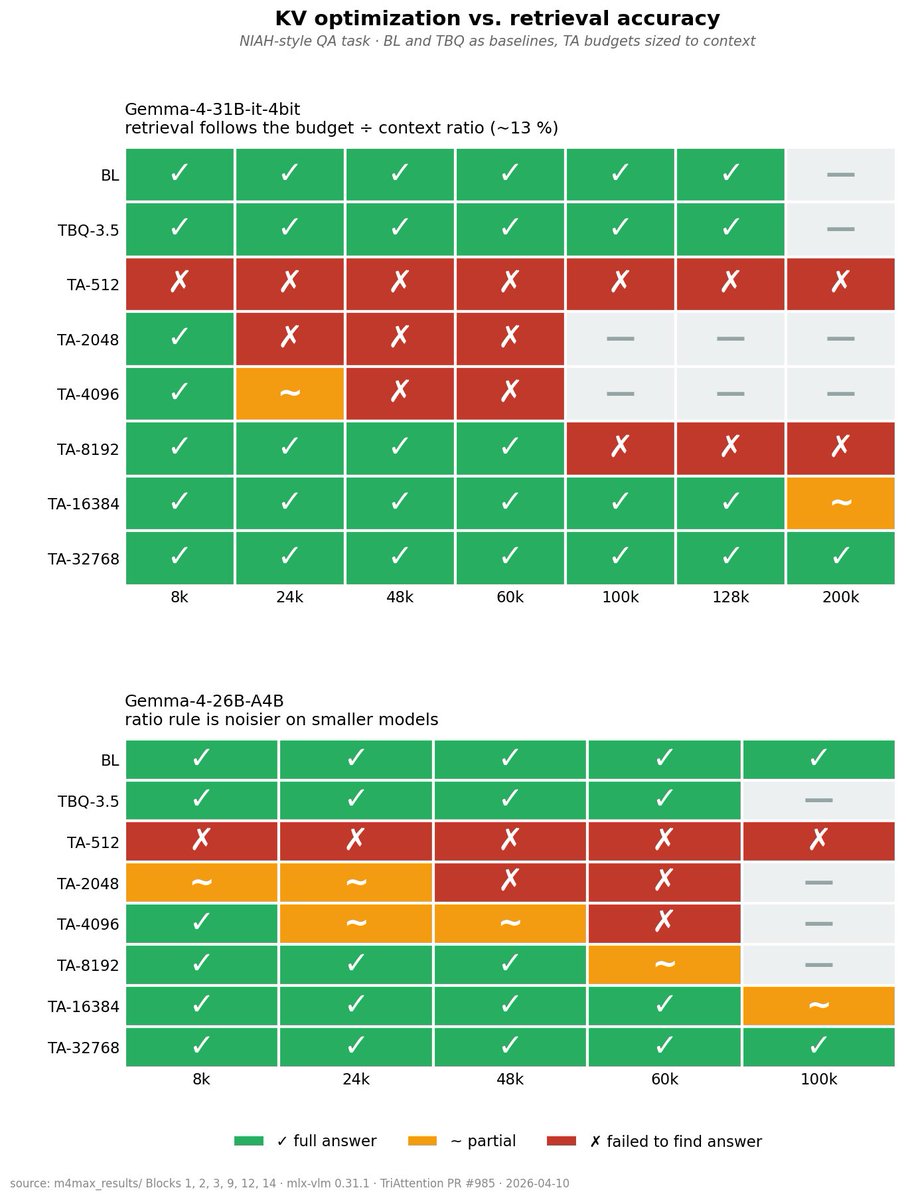

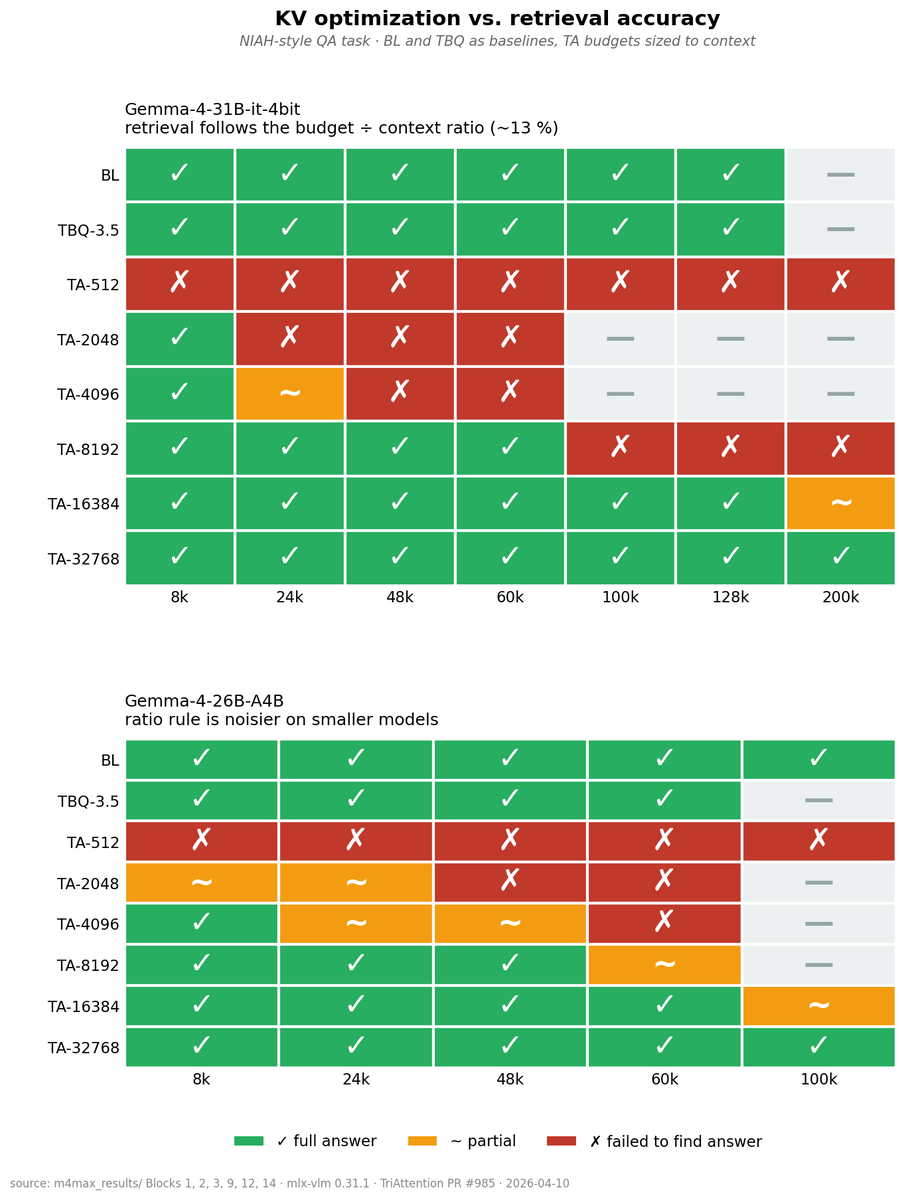

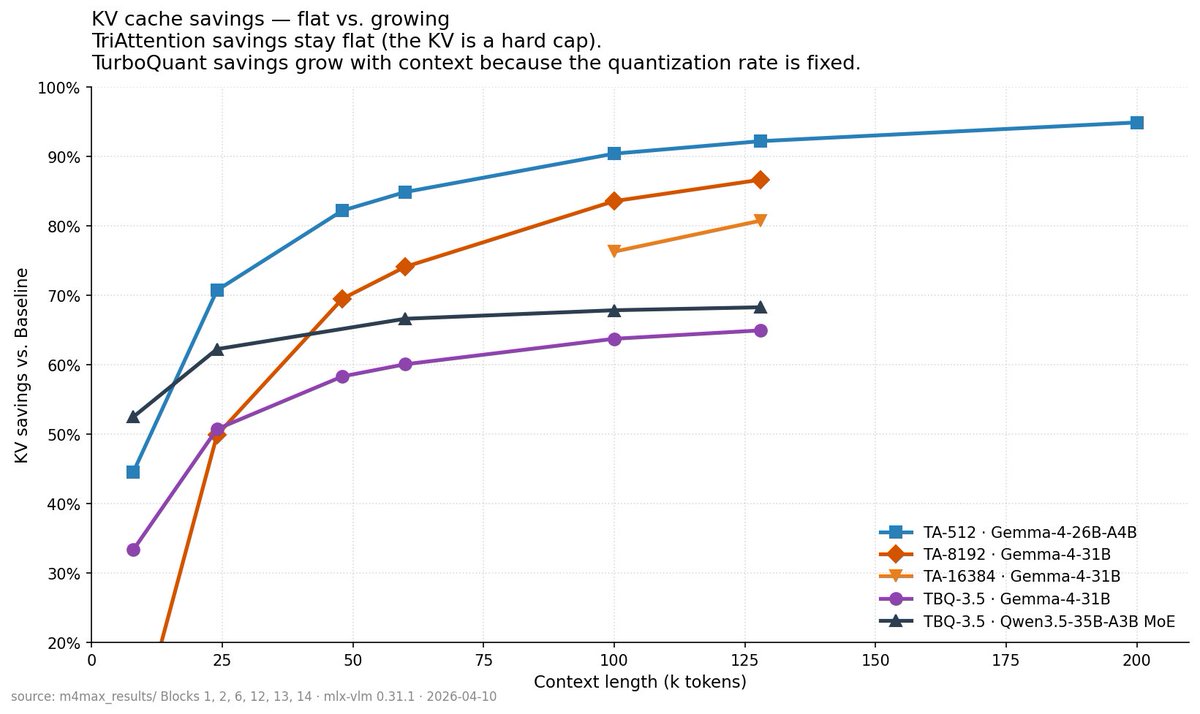

→ Continuous batching server + KV cache quantization

→ MTP and DFlash speculative decoding (single, batch, server)

→ Distributed inference: Qwen3.5, Kimi K2.5 & K2.6

→ Prompt caching w/ warm-disk persistence

→ Gemma 4 video (multi-video) + MTP drafter @googlegemma

→ New models: Youtu-VL, Nemotron 3 Nano Omni, SAM 3D Body

→ Server: json_schema response_format, thinking mode flag

Huge thanks to all 21 contributors, and in particular to the 18 new ones. Welcome aboard 🚢

Get started today:

> uv pip install -U mlx-vlm

Leave us a star ⭐️

github.com/Blaizzy/mlx-vlm